ASP.NET Core Hosting Models in 2026: Kestrel vs IIS In-Process vs IIS Out-of-Process vs Nginx — Enterprise Decision Guide

Choosing the wrong ASP.NET Core hosting model in production is one of those decisions that seems trivial until it isn't. Teams routinely leave performance on the table, misconfigure reverse proxies, or build deployments that work fine in development and silently misbehave under load. Understanding which ASP.NET Core hosting model your team should use — and why — is a foundational architectural decision that affects throughput, security posture, deployment complexity, and operational cost.


ASP.NET Core ships with several hosting options, and the landscape has shifted meaningfully in 2026. Kestrel has matured into a production-grade edge server. IIS in-process hosting has become the default for Windows-based deployments. Nginx and other reverse proxies remain the standard for Linux and containerised workloads. This guide walks through each option, the trade-offs enterprise teams need to understand, and a decision matrix for selecting the right model for your environment.

What Are ASP.NET Core Hosting Models?

ASP.NET Core hosting models define the relationship between the HTTP server that receives requests and the process that runs your application code. There are four practical configurations most teams encounter:

- Kestrel as an edge server: Kestrel runs directly exposed to external traffic, with no reverse proxy in front.

- IIS In-Process hosting: Your app runs inside the IIS worker process (w3wp.exe). IIS handles the TCP connection and passes requests to the in-process IIS HTTP Server (IISHttpServer) rather than to Kestrel.

- IIS Out-of-Process hosting: IIS acts as a reverse proxy in front of Kestrel. Your app runs in a separate process, and IIS forwards requests over the loopback adapter.

- Kestrel behind a reverse proxy (Nginx, Apache, YARP, Azure Front Door): Kestrel runs your app in a dedicated process; a reverse proxy handles TLS termination, load balancing, and edge concerns.

Each configuration makes different promises about performance, security, and operational footprint.

Kestrel as an Edge Server: When It Works and When It Doesn't

Kestrel has come a long way. In .NET 10, it supports HTTP/1.1, HTTP/2, and HTTP/3 (QUIC) natively, with TLS termination built in. For internal microservices, Azure-hosted APIs behind Azure Front Door or Application Gateway, and containerised workloads where the container orchestrator handles ingress, Kestrel alone is often the right answer.
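As a minimal sketch of the protocol support described above, the following Program.cs opens one HTTPS endpoint that negotiates HTTP/1.1, HTTP/2, and HTTP/3. It assumes a project on the Microsoft.NET.Sdk.Web SDK with implicit usings enabled. Port 5001 and the use of the default development certificate are illustrative choices, not something this guide prescribes.

```csharp
using Microsoft.AspNetCore.Server.Kestrel.Core;

var builder = WebApplication.CreateBuilder(args);

builder.WebHost.ConfigureKestrel(options =>
{
    // Port 5001 is an assumption for illustration only.
    options.ListenAnyIP(5001, listenOptions =>
    {
        // Offer all three protocol versions on the same endpoint.
        listenOptions.Protocols = HttpProtocols.Http1AndHttp2AndHttp3;

        // HTTP/3 requires TLS; with no arguments this uses the default certificate.
        listenOptions.UseHttps();
    });
});

var app = builder.Build();

app.MapGet("/", () => "ok");

app.Run();
```

In a real deployment the certificate would normally come from configuration or a certificate store, and the network path must allow UDP for HTTP/3 to be negotiated.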

Where Kestrel-only works well:

- APIs deployed behind a cloud load balancer or API gateway (Azure Front Door, AWS ALB, Cloudflare)

- Internal service-to-service communication in Kubernetes clusters

- Containerised apps where the pod is never directly internet-facing

- Teams that want the simplest possible deployment unit

Where Kestrel-only creates risk:

- Directly internet-facing applications without a hardened reverse proxy — Kestrel is a fast HTTP server, but it is not a battle-tested web server in the sense that IIS or Nginx are. It lacks mature request filtering, IP reputation blocking, and fine-grained rate-limiting controls at the infrastructure level.

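- Multi-app servers: multiple Kestrel processes cannot share the same IP address and port, so hosting several sites on port 443 requires a reverse proxy (or HTTP.sys port sharing) to route requests by host name.

- Applications that require Windows Authentication: Kestrel has no built-in Windows Authentication. It needs the Microsoft.AspNetCore.Authentication.Negotiate package, whereas HTTP.sys and IIS support it natively.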

If your application is internet-facing and you don't have a cloud-native edge layer in front of it, running Kestrel alone is not recommended for production. Put a reverse proxy in front of it.

IIS In-Process Hosting: The Default for Windows Deployments

IIS in-process hosting, introduced in ASP.NET Core 2.2 and made the default in 3.x and beyond, runs your application inside the IIS worker process using the IIS HTTP Server (IISHttpServer) instead of Kestrel. Requests never leave the process boundary, so there is no loopback proxy hop.

The performance difference is meaningful. Microsoft's own benchmarks show that in-process hosting delivers significantly higher request throughput than out-of-process, precisely because requests don't travel over the loopback adapter.

When to use IIS in-process:

- Your team operates in a Windows-first environment with existing IIS infrastructure

- You need the highest performance available on Windows-based hosts

- You rely on IIS features like Windows Authentication, IP filtering, URL rewrite rules, or custom error pages

- You want unified process management, logging, and application pool recycling through IIS Manager

Limitations to understand:

- Only one app per IIS application pool can use in-process hosting — the IIS worker process becomes your app's process

- Application pool recycling restarts your app, affecting warm-up time and any in-memory state (caches, background services)

- Debugging in-process is slightly more complex because your app is inside w3wp.exe

- Not available on Linux: in-process hosting is Windows-only

For teams with existing Windows infrastructure, IIS in-process hosting is typically the right default. The performance gains over out-of-process are real, and the operational model is familiar to .NET developers who have worked with IIS for years.

IIS Out-of-Process Hosting: The Legacy Default You Might Not Need

Out-of-process hosting was the original hosting model for ASP.NET Core on IIS. Your app runs in a dedicated dotnet.exe process. IIS receives requests, hands them to the ASP.NET Core Module, which proxies them to Kestrel over the loopback adapter (HTTP on localhost).

The extra network hop introduces latency and reduces throughput compared to in-process. The difference is measurable under high load, although for many business APIs it will not be the bottleneck.

When out-of-process still makes sense:

- Teams that want process isolation between IIS and the application — a crash in the app doesn't take down the IIS worker process

- Applications that require platform-consistent behavior across Windows and Linux (since your app always runs under Kestrel regardless of OS)

- Debugging scenarios where you need console output from the app process independently of IIS

- Apps that need to start, restart, or recycle independently of the application pool lifecycle

The honest assessment: For the vast majority of new ASP.NET Core projects on Windows, in-process hosting is the better default. Out-of-process is a deliberate choice for specific operational or isolation requirements — not a default you should fall back to without a reason.
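When comparing the two IIS models, it helps to confirm which one a deployed app is actually running under. The model is selected by the AspNetCoreHostingModel project property or the hostingModel attribute in web.config. The sketch below adds a diagnostic endpoint that reports the current process name. The route /diag/host is a hypothetical name chosen for this example, and the project is assumed to use the Microsoft.NET.Sdk.Web SDK with implicit usings.

```csharp
using System.Diagnostics;

var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

// Hypothetical diagnostic route; protect or remove it in production.
app.MapGet("/diag/host", () =>
{
    using var process = Process.GetCurrentProcess();

    // In-process hosting runs inside the IIS worker process, so the name is
    // typically "w3wp" (or "iisexpress" under IIS Express). Out-of-process
    // hosting typically reports "dotnet" or the app's own executable name.
    return Results.Ok(new { process = process.ProcessName, pid = process.Id });
});

app.Run();
```

Checking the process name this way is a quick sanity test after a deployment or a migration between hosting models.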

Kestrel Behind Nginx: The Standard for Linux and Containers

On Linux — whether bare metal, virtual machines, or containers — Kestrel behind Nginx (or another reverse proxy) is the most widely used production pattern. Nginx handles TLS termination, serves static files, performs request buffering, and can enforce connection limits. Kestrel handles the .NET application layer.

This matches Microsoft's guidance for hosting ASP.NET Core on Linux. The separation of concerns is clean: the reverse proxy handles infrastructure-level concerns, and Kestrel handles application concerns.

Why a reverse proxy in front of Kestrel matters for production:

- TLS termination at the proxy reduces the certificate management surface of your app

- The proxy can buffer slow client connections, protecting your app from slowloris-style attacks

- Nginx can serve static assets without hitting the .NET process at all

- Header forwarding rules (X-Forwarded-For, X-Forwarded-Proto) are standardised, ensuring your app sees correct client IP addresses and scheme information

- Zero-downtime deployments become easier when the proxy can drain connections while your app restarts

Common mistakes with this model:

- Forgetting to configure ForwardedHeadersMiddleware in your ASP.NET Core pipeline: without it, your app sees Nginx's IP address as the client IP, which breaks IP-based rate limiting, geolocation, and logging

- Setting ASPNETCORE_URLS to bind on all interfaces instead of localhost: your app should only accept connections from the reverse proxy, not directly from the internet

- Not configuring the AllowedHosts setting in appsettings.json, leaving the app open to host header injection attacks
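The first two mistakes above can be avoided with a few lines in Program.cs. The following is a minimal sketch, assuming Nginx runs on the same host and forwards X-Forwarded-For and X-Forwarded-Proto. The port 5000, the proxy address 10.0.0.10, and the /whoami route are hypothetical values used only for illustration. The project is assumed to use the Microsoft.NET.Sdk.Web SDK with implicit usings.

```csharp
using System.Net;
using Microsoft.AspNetCore.HttpOverrides;

var builder = WebApplication.CreateBuilder(args);

// Listen on loopback only so traffic must arrive through the proxy.
// Port 5000 is an illustrative choice.
builder.WebHost.UseUrls("http://127.0.0.1:5000");

builder.Services.Configure<ForwardedHeadersOptions>(options =>
{
    options.ForwardedHeaders =
        ForwardedHeaders.XForwardedFor | ForwardedHeaders.XForwardedProto;

    // Loopback proxies are trusted by default. A proxy on another machine
    // must be listed explicitly; this address is hypothetical.
    options.KnownProxies.Add(IPAddress.Parse("10.0.0.10"));
});

var app = builder.Build();

// Register early so later middleware sees the restored client IP and scheme.
app.UseForwardedHeaders();

// Hypothetical route that echoes what the app believes about the caller.
app.MapGet("/whoami", (HttpContext context) => new
{
    clientIp = context.Connection.RemoteIpAddress?.ToString(),
    scheme = context.Request.Scheme
});

app.Run();
```

Calling the echo route through the proxy is a simple way to check that the client IP and scheme are being restored. The AllowedHosts setting lives in appsettings.json and is not shown here.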

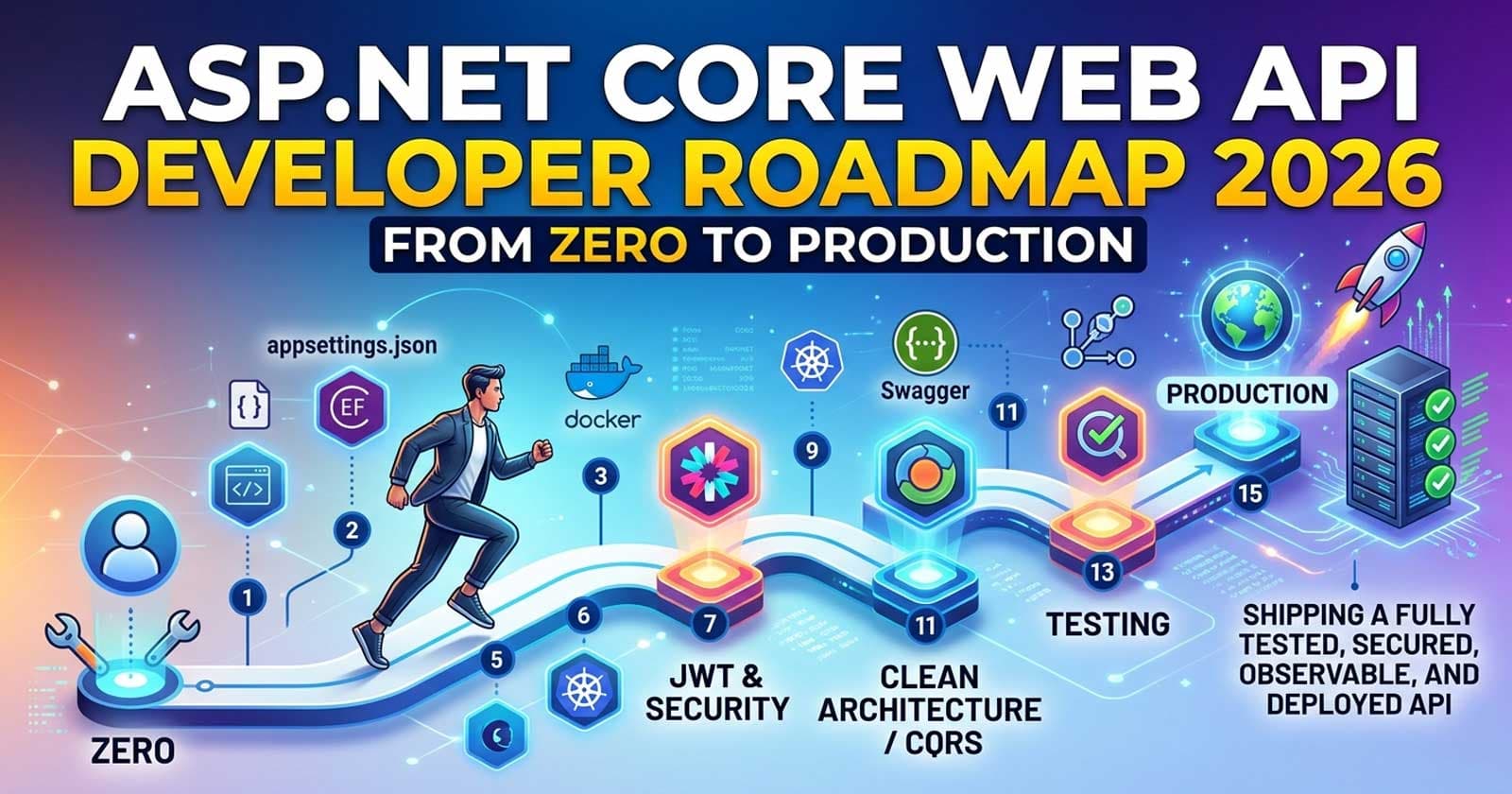


What About HTTP.sys?

HTTP.sys is a Windows-only server that sits at the kernel level, predating Kestrel. It supports Windows Authentication natively and can host multiple apps on the same port using the Windows HTTP Server API.

HTTP.sys is rarely the right choice for modern ASP.NET Core APIs. The main case for it is when you need Windows Authentication without IIS, or when you need kernel-level request filtering on Windows. Outside those scenarios, IIS in-process or Kestrel-behind-Nginx covers the requirements better.
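For the Windows Authentication case, a minimal sketch of swapping Kestrel for HTTP.sys might look like the following. It only runs on Windows, and it assumes a project on the Microsoft.NET.Sdk.Web SDK with implicit usings. The /me route is a hypothetical name for this example.

```csharp
using Microsoft.AspNetCore.Server.HttpSys;

var builder = WebApplication.CreateBuilder(args);

// Replace Kestrel with the HTTP.sys server (Windows only).
builder.WebHost.UseHttpSys(options =>
{
    // Accept Kerberos/Negotiate and NTLM, and reject anonymous requests.
    options.Authentication.Schemes =
        AuthenticationSchemes.Negotiate | AuthenticationSchemes.NTLM;
    options.Authentication.AllowAnonymous = false;
});

builder.Services.AddAuthentication(HttpSysDefaults.AuthenticationScheme);

var app = builder.Build();

// Hypothetical route that returns the authenticated Windows identity.
app.MapGet("/me", (HttpContext context) =>
    context.User.Identity?.Name ?? "unknown");

app.Run();
```

Because HTTP.sys performs the authentication handshake itself, the app receives an already-authenticated user on each request.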

Is There a Right Answer for Kubernetes?

In Kubernetes, your ASP.NET Core app runs in a container. The cluster provides ingress (NGINX Ingress Controller, Traefik, Istio, or Azure Application Gateway Ingress). Your pod typically only needs Kestrel — no IIS, no in-process hosting.

The hosting model for Kubernetes is therefore the simplest one: Kestrel bound to 0.0.0.0:8080 (non-root-friendly, no privileged ports), behind the cluster's ingress layer. TLS terminates at the ingress, not inside your pod.

The one thing teams consistently get wrong in Kubernetes deployments is graceful shutdown. When Kubernetes terminates a pod, the kubelet sends SIGTERM to the container, and the app needs to finish in-flight requests before the container is killed. The ASP.NET Core host handles SIGTERM and signals shutdown through IHostApplicationLifetime, but the host's shutdown timeout and the pod's termination grace period must leave enough time for in-flight requests to complete. Misconfigured shutdown handling leads to dropped requests during rolling updates.
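A minimal sketch of the shutdown configuration described above follows. It assumes the pod keeps the Kubernetes default terminationGracePeriodSeconds of 30, so the 25-second host timeout is an illustrative value chosen to stay below it. The /healthz route is a hypothetical probe endpoint, and the project is assumed to use the Microsoft.NET.Sdk.Web SDK with implicit usings.

```csharp
using Microsoft.Extensions.Hosting;
using Microsoft.Extensions.Logging;

var builder = WebApplication.CreateBuilder(args);

// Non-privileged port on all interfaces, as described for pods above.
builder.WebHost.UseUrls("http://0.0.0.0:8080");

builder.Services.Configure<HostOptions>(options =>
{
    // Time the host waits for in-flight work after shutdown is requested.
    // 25 seconds is an assumption; keep it below the pod's grace period.
    options.ShutdownTimeout = TimeSpan.FromSeconds(25);
});

var app = builder.Build();

// IHostApplicationLifetime is exposed as app.Lifetime.
app.Lifetime.ApplicationStopping.Register(() =>
    app.Logger.LogInformation("Shutdown requested; draining in-flight requests."));

// Hypothetical probe endpoint for the cluster's health checks.
app.MapGet("/healthz", () => Results.Ok());

app.Run();
```

Background services and long-running requests should also observe the cancellation tokens they are given, otherwise the timeout expires with work still in progress.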

Decision Matrix: Which Hosting Model Should Your Team Use?

| Deployment Target | Recommended Hosting Model | Reason |
|---|---|---|
| Windows + IIS (existing infra) | IIS In-Process | Best throughput, operational familiarity |
| Windows, needs process isolation | IIS Out-of-Process | Crash isolation, independent lifecycle |
| Linux / Ubuntu / Debian | Kestrel + Nginx (reverse proxy) | Standard Linux production pattern |
| Docker / Containers | Kestrel directly | Ingress handled by orchestrator |
| Kubernetes | Kestrel directly | Pod-level server, ingress at cluster layer |
| Azure App Service (Windows) | IIS In-Process | Default on App Service Windows stacks |
| Azure App Service (Linux) | Kestrel (managed) | App Service handles the edge layer |
| Internet-facing, no cloud edge | Kestrel + Nginx or IIS | Kestrel alone is insufficient for internet edge |
| Windows Authentication required | IIS In-Process or HTTP.sys | Native NTLM/Kerberos support; Kestrel needs the Negotiate authentication package |

Anti-Patterns Enterprise Teams Should Avoid

Mixing hosting models across environments: Running IIS in-process in production and Kestrel-only in development is generally acceptable because the middleware pipeline and application code behave the same way on both servers. But switching between in-process and out-of-process between environments introduces subtle differences in how the application initializes and how the host lifecycle works.

Not forwarding headers in reverse proxy deployments: Any Kestrel-behind-proxy setup requires explicit ForwardedHeadersMiddleware configuration. Teams that skip this end up logging 127.0.0.1 for every request and producing rate-limiting rules that are entirely ineffective.

Ignoring connection limits: Kestrel's defaults are reasonable for most apps but need review for high-concurrency workloads. Notably, the maximum number of concurrent connections is unlimited unless you set it. The KestrelServerOptions.Limits API lets you configure maximum concurrent connections, maximum request body size, and maximum concurrent upgraded connections (such as WebSockets). Teams that don't review these limits often discover them only when load tests reveal rejected requests or resource exhaustion.

Assuming IIS out-of-process is the same as in-process: They are not the same. Startup behavior, logging pipeline initialization, and application pool recycling interactions differ. Teams that migrate from out-of-process to in-process without reviewing the differences will encounter edge cases in production.

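Binding to 0.0.0.0 when behind a proxy on the same host: Your app should only accept connections from localhost (or the internal proxy network) when running behind a reverse proxy. Binding to all interfaces exposes Kestrel's HTTP port to external traffic, bypassing the proxy entirely. Containers are the exception, since Kestrel must listen on the pod's network interface and isolation comes from the cluster network rather than the bind address.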
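Two of the anti-patterns above, unreviewed Kestrel limits and binding to all interfaces behind a proxy, come down to a few lines of server configuration. The sketch below shows where those settings live. Every number in it (the port and the three limits) is an illustrative assumption, not a recommendation, and should come from your own load testing. The project is assumed to use the Microsoft.NET.Sdk.Web SDK with implicit usings.

```csharp
var builder = WebApplication.CreateBuilder(args);

builder.WebHost.ConfigureKestrel(options =>
{
    // Accept connections on loopback only; the reverse proxy on the same
    // host forwards traffic here. Port 5000 is an illustrative choice.
    options.ListenLocalhost(5000);

    // Cap the number of simultaneously open connections.
    options.Limits.MaxConcurrentConnections = 1_000;

    // Separate cap for upgraded connections such as WebSockets.
    options.Limits.MaxConcurrentUpgradedConnections = 100;

    // Reject request bodies larger than 10 MiB.
    options.Limits.MaxRequestBodySize = 10 * 1024 * 1024;
});

var app = builder.Build();

app.MapGet("/", () => "ok");

app.Run();
```

Keeping these values in one place makes them easy to review alongside the proxy's own limits, so the two layers do not contradict each other.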

How Does This Affect Your Security Posture?

The hosting model directly affects your security surface:

- IIS in-process: IIS's request filtering module acts as a first line of defense before requests reach your .NET code. You benefit from IIS-level IP filtering, URL authorization, and request size limits.

- Kestrel + reverse proxy: Security depends on the proxy configuration. If Nginx or another proxy is correctly configured to enforce TLS, limit request size, and strip dangerous headers, the surface is comparable to IIS. If the proxy is misconfigured, Kestrel receives whatever the client sends.

- Kestrel edge (no proxy): Your .NET code is the first thing that touches the request. Request size limits, connection throttling, and header validation all need to be handled explicitly in your ASP.NET Core middleware pipeline.

For teams that handle sensitive data or operate in regulated environments, IIS in-process on Windows or a hardened Nginx reverse proxy on Linux is the more defensible choice over bare Kestrel.


Frequently Asked Questions

What is the default hosting model in ASP.NET Core in 2026? When hosting on IIS, the default is in-process hosting since ASP.NET Core 3.x. For containerised and Linux deployments, Kestrel is the default server, typically running behind a reverse proxy at the infrastructure layer.

Is Kestrel production-ready for internet-facing APIs in 2026? Yes, when deployed behind a reverse proxy or cloud edge layer (Azure Front Door, AWS ALB, Cloudflare). Kestrel alone — directly internet-facing without a proxy — is not recommended because it lacks the hardened request filtering and security features of a dedicated web server. For APIs behind a cloud gateway, Kestrel-only is perfectly appropriate.

What is the performance difference between IIS in-process and out-of-process hosting? IIS in-process hosting eliminates the loopback proxy hop that out-of-process requires. In Microsoft's benchmarks, in-process delivers meaningfully higher request throughput. For most business APIs, the difference won't be the bottleneck, but for high-throughput workloads, in-process is the correct choice.

Should I use Kestrel with HTTP/3 in production? HTTP/3 (QUIC) support is available in Kestrel and has matured in .NET 9 and 10. It requires UDP support in your network stack and TLS 1.3. For teams on modern infrastructure with clients that support HTTP/3, enabling it can reduce latency for initial connections. For most enterprise APIs, HTTP/2 with Kestrel covers the majority of use cases without the additional configuration complexity.

What is ForwardedHeadersMiddleware and why is it critical? ForwardedHeadersMiddleware tells ASP.NET Core to trust and parse the X-Forwarded-For, X-Forwarded-Proto, and X-Forwarded-Host headers set by an upstream reverse proxy. Without it, your application sees the proxy's IP as the client IP, and when TLS terminates at the proxy, Request.Scheme reports http even though the client connected over HTTPS. This breaks IP-based rate limiting, geo-detection, security audit logs, and HTTPS redirects. Always configure it when running behind a proxy.

Can I use IIS in-process hosting in Docker containers? IIS does not run inside Linux containers. For Docker deployments, Kestrel is the correct server. On Windows containers, IIS in-process is technically possible but adds container size and complexity — most teams use Kestrel in containers on both Windows and Linux for consistency.

What about HTTP.sys — should my team consider it? HTTP.sys is worth evaluating only if you specifically need Windows Authentication without IIS (for scenarios like internal tools on an intranet), or if you need kernel-level request filtering on Windows. For standard public APIs, IIS in-process or Kestrel-behind-Nginx covers requirements better with less operational complexity.