HTTP/3 and QUIC in ASP.NET Core: Enterprise Decision Guide for .NET Teams in 2026

HTTP/3 and QUIC support in ASP.NET Core has been out of preview since .NET 7, yet most enterprise .NET teams haven't enabled it, and many aren't sure they should. The question isn't whether HTTP/3 is technically impressive. It is. The question is whether it belongs in your production stack right now, and what the operational cost of enabling it looks like in a real enterprise environment.

This guide gives you an honest, architecture-level answer.


What HTTP/3 Actually Changes

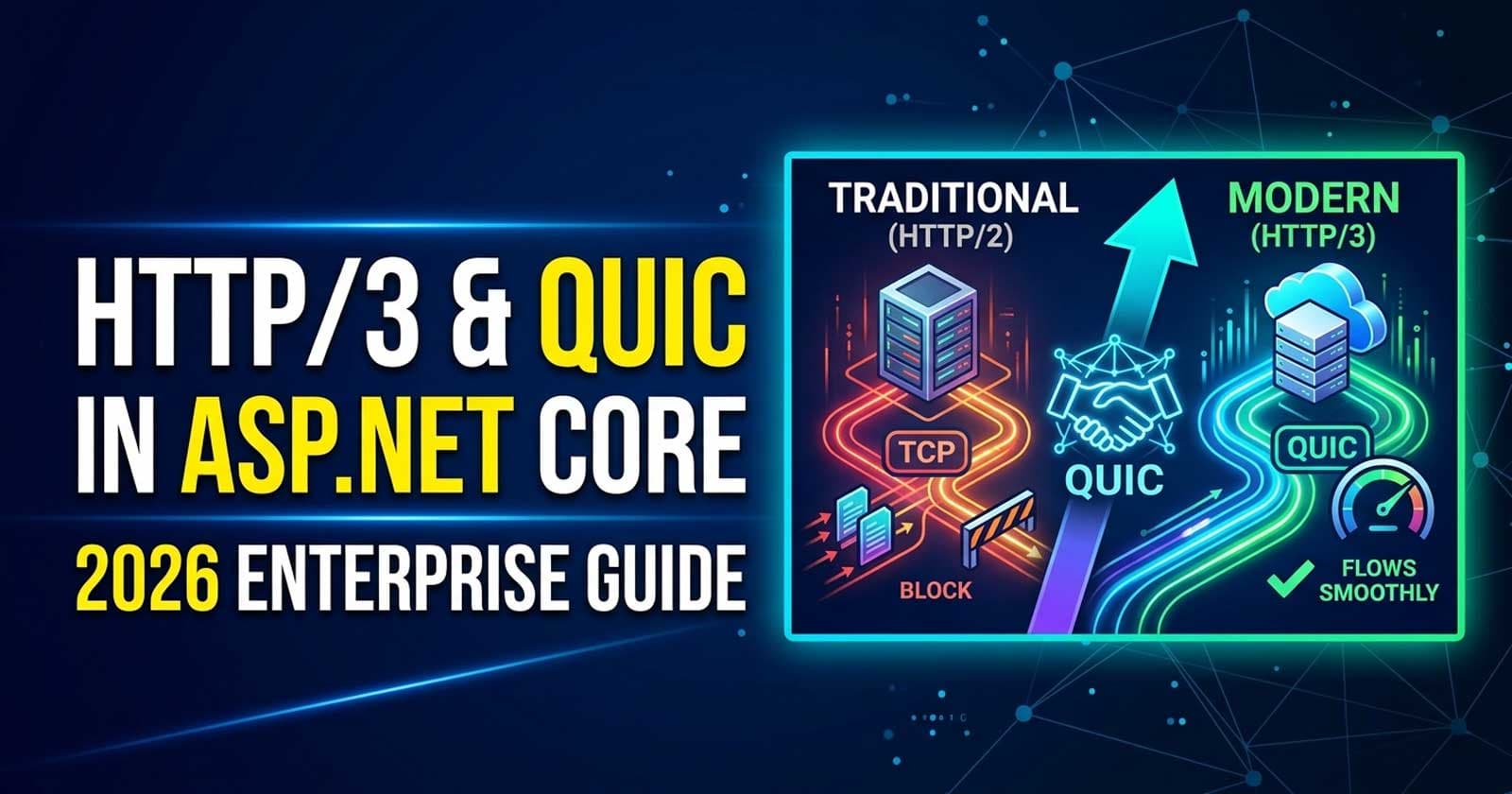

HTTP/3 replaces TCP with QUIC, a UDP-based transport protocol defined in RFC 9000 (originally short for "Quick UDP Internet Connections", though the RFC treats QUIC as a name rather than an acronym). The shift from TCP to UDP-based transport is the architectural difference that matters.

Under HTTP/2, all multiplexed streams run over a single TCP connection. If a single packet is lost, TCP's head-of-line blocking stalls every stream until retransmission completes. QUIC gives each stream independent packet loss recovery—a dropped packet on stream A doesn't freeze stream B.

The other meaningful improvement is connection establishment. HTTP/2 over TLS requires multiple round trips before data flows. QUIC combines the transport and TLS handshake, reducing connection time to a single round trip for new connections and potentially zero round trips for resumed sessions (0-RTT).

For enterprise APIs, these improvements matter most in two scenarios: high-latency or lossy network conditions (mobile clients, international users) and APIs that serve many short-lived connections concurrently. If your API runs on a fast internal network with stable connections, the gains will be minimal.

ASP.NET Core HTTP/3 Support: What's Available in .NET 10

In .NET 10, Kestrel supports HTTP/3 with the following characteristics:

- HTTP/3 is opt-in — you configure it explicitly on Kestrel endpoints

- The implementation depends on MsQuic, the Microsoft QUIC library, which must be present on the host OS

- On Windows 11 / Windows Server 2022 and later, MsQuic ships in-box

- On Linux, MsQuic must be installed as a separate package (libmsquic)

- Kestrel falls back to HTTP/1.1 or HTTP/2 automatically if HTTP/3 is unavailable on the platform

- HTTP/3 requires TLS — you cannot run it over plain HTTP

The ASP.NET Core team has done the hard work of making HTTP/3 a graceful opt-in. Fallback is automatic. The risk isn't in what happens if the platform doesn't support it — it's in what happens when you introduce a new protocol into an infrastructure stack that wasn't designed around UDP.
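To make the opt-in concrete, here is a minimal sketch of a Kestrel endpoint that offers HTTP/3 alongside HTTP/1.1 and HTTP/2. The port number (5001) and the use of the default certificate are assumptions for illustration; a production setup would bind a real certificate and typically listen on 443.

```csharp
using Microsoft.AspNetCore.Server.Kestrel.Core;

var builder = WebApplication.CreateBuilder(args);

builder.WebHost.ConfigureKestrel(options =>
{
    // Port 5001 is an arbitrary choice for this sketch.
    options.ListenAnyIP(5001, listenOptions =>
    {
        // Offer all three protocols so clients without QUIC keep working.
        listenOptions.Protocols = HttpProtocols.Http1AndHttp2AndHttp3;

        // HTTP/3 is TLS-only. UseHttps() with no arguments uses the
        // default (development or configured) certificate.
        listenOptions.UseHttps();
    });
});

var app = builder.Build();

// Echo the protocol the request arrived on, useful when testing fallback.
app.MapGet("/", (HttpContext context) => $"Protocol: {context.Request.Protocol}");

app.Run();
```

Because the endpoint lists all three protocols, a client that cannot use QUIC still connects over HTTP/1.1 or HTTP/2, which matches the fallback behaviour described above.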

The Enterprise Infrastructure Problem With QUIC

This is the single most important consideration for enterprise teams, and it's the one that most tutorials skip.

Most enterprise load balancers and reverse proxies have partial or no QUIC support.

QUIC runs over UDP port 443. Many enterprise environments:

- Block UDP traffic at the firewall by default

- Use load balancers that don't support UDP pass-through for QUIC

- Have network appliances (WAFs, IDS/IPS, DLP) that don't inspect QUIC traffic correctly

- Terminate TLS at the load balancer, which breaks end-to-end QUIC

If your stack terminates TLS at an NGINX, HAProxy, or cloud load balancer, and that layer doesn't forward QUIC traffic, HTTP/3 simply won't reach Kestrel—or worse, it will reach it unreliably. The Alt-Svc header mechanism that advertises HTTP/3 to clients works correctly only when the client can actually reach the server on UDP 443.

Before enabling HTTP/3, audit your full traffic path: client → CDN → WAF → load balancer → Kestrel. Every hop needs to support QUIC.
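One way to audit the path from the outside is a small client probe that insists on HTTP/3 and reports what happens. The sketch below is a console program using HttpClient; the URL is a hypothetical placeholder, and the machine running the probe also needs QUIC support (libmsquic on Linux) for the result to be meaningful.

```csharp
using System.Net;

// Hypothetical URL: replace with an endpoint behind the path you are auditing.
var url = args.Length > 0 ? args[0] : "https://api.example.com/health";

using var client = new HttpClient { Timeout = TimeSpan.FromSeconds(10) };

using var request = new HttpRequestMessage(HttpMethod.Get, url)
{
    Version = HttpVersion.Version30,
    // Exact: do not silently downgrade, so a blocked UDP path shows up as a failure.
    VersionPolicy = HttpVersionPolicy.RequestVersionExact
};

try
{
    using var response = await client.SendAsync(request);
    Console.WriteLine($"Status {(int)response.StatusCode}, negotiated HTTP/{response.Version}");
}
catch (HttpRequestException ex)
{
    // Typically means the QUIC connection could not be established.
    Console.WriteLine($"HTTP/3 request failed: {ex.Message}");
}
catch (TaskCanceledException)
{
    Console.WriteLine("HTTP/3 request timed out");
}
```

Running the probe from several network locations (office, VPN, mobile tether) gives a rough picture of where UDP 443 is actually reachable. A failure here does not say which hop dropped the traffic, only that the end-to-end path is not QUIC-clean.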

When Should Your Team Enable HTTP/3?

The honest answer depends on your client profile and your infrastructure posture.

Enable HTTP/3 when:

- You serve browser-based clients or mobile apps directly (not API-to-API only)

- Your API is publicly accessible over the internet and users experience high latency

- Your hosting infrastructure supports QUIC end-to-end (e.g., Azure, CloudFront, Cloudflare with HTTP/3 proxy enabled)

- Your load balancer supports UDP 443 forwarding or you're running Kestrel edge-facing

- You've profiled your application and confirmed head-of-line blocking is a real bottleneck

Do not enable HTTP/3 when:

- Your API is internal-only (service-to-service, backend APIs behind a private network)

- Your infrastructure team hasn't verified UDP 443 is open end-to-end

- Your load balancer or WAF doesn't support QUIC (check explicitly — "HTTP/3 support" claims vary widely)

- You're running on Linux and haven't validated libmsquic installation in your container image

- Your clients are exclusively HttpClient-based .NET services; the gains are minimal and the complexity isn't worth it

The Decision Matrix

| Scenario | Recommendation |
|---|---|
| Public API, mobile/browser clients, CDN with QUIC support | Enable HTTP/3; expected benefit is real |
| Public API, no CDN, own load balancer that supports QUIC | Test in staging, enable in production if validated |
| Public API, load balancer without verified QUIC support | Hold; audit infrastructure first |
| Internal API, service-to-service over private network | Don't enable; negligible benefit |
| Kubernetes cluster with ingress that doesn't support UDP 443 | Hold; ingress change required first |
| Azure-hosted, Azure Front Door or Application Gateway v2 | Check per-SKU HTTP/3 support; it varies |
| Container-based Linux deployment | Verify libmsquic is in your base image |

HTTP/3 vs HTTP/2: The Performance Trade-Off In Context

The performance story isn't as simple as "HTTP/3 is always faster." Benchmarks run on controlled networks consistently show HTTP/3 wins under packet loss (even 1–2% loss dramatically favors QUIC). On stable, low-latency connections (typical internal enterprise networks), HTTP/2 is competitive and sometimes marginally faster, because QUIC's user-space packet processing and per-packet encryption tend to cost more CPU than TCP with TLS.

For enterprise teams running APIs primarily consumed by internal services, HTTP/2 remains the right default. HTTP/3 becomes the right choice when the client is on the other side of an uncertain network — mobile, international, or over commodity internet.

What the Alt-Svc Mechanism Means for Your Rollout

HTTP/3 is discovered rather than negotiated up front: the server includes an Alt-Svc header (or sends an HTTP/2 ALTSVC frame) in its HTTP/1.1 or HTTP/2 response, advertising that it supports HTTP/3 on a specific port. The client can then attempt a QUIC connection for subsequent requests. The initial request is served over HTTP/1.1 or HTTP/2 as usual.

This means HTTP/3 adoption is incremental by design. Clients that don't support QUIC (or can't reach the UDP port) will continue using HTTP/1.1 or HTTP/2 without any error. The risk surface is small — you won't break existing clients. What you will face is observability complexity: your logs will show a mix of HTTP/1.1, HTTP/2, and HTTP/3 connections, and correlating performance differences requires your APM tooling to be protocol-aware.
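As a starting point for protocol-aware logging, the following sketch adds an inline middleware that records which protocol each request used. The log message template and the single test route are illustrative choices, not a prescribed format; most teams would feed the same value into their existing APM or OpenTelemetry pipeline instead.

```csharp
var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

// Inline middleware: after the request completes, log the protocol it used.
app.Use(async (context, next) =>
{
    await next(context);

    app.Logger.LogInformation(
        "{Method} {Path} -> {StatusCode} over {Protocol}",
        context.Request.Method,
        context.Request.Path,
        context.Response.StatusCode,
        context.Request.Protocol); // "HTTP/1.1", "HTTP/2", or "HTTP/3"
});

app.MapGet("/", () => "ok");

app.Run();
```

Having the protocol as a structured field (rather than buried in a message string) is what lets a dashboard split latency by HTTP version later.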

Platform Readiness Checklist Before Enabling HTTP/3

Before you enable HTTP/3 in production, validate every item:

- OS support confirmed: Windows Server 2022+, or Linux with libmsquic installed

- UDP port 443 open: verified at every firewall and security group layer

- Load balancer / reverse proxy: QUIC support explicitly verified (not just "supports HTTP/3" marketing; verify UDP pass-through behavior)

- CDN configuration: if using Cloudflare, Azure Front Door, or CloudFront, confirm HTTP/3 is enabled on the CDN-to-origin path, not just CDN-to-client

- Container base image: libmsquic included if deploying on Linux containers

- TLS certificate: valid and correctly bound; HTTP/3 is TLS-only

- APM / logging: updated to attribute protocol version to requests

- Kestrel configuration tested in staging: HTTP/3 enabled explicitly with HTTP/1.1 and HTTP/2 as fallback

- Connection migration testing: if mobile clients are a target, test connection migration across IP changes

- Load test with mixed protocol clients: confirm no regressions under realistic traffic

Anti-Patterns to Avoid

Enabling HTTP/3 without validating the UDP path. The most common mistake. Kestrel will advertise HTTP/3 via Alt-Svc, clients will attempt QUIC connections, and they'll silently fall back to HTTP/2 after timeouts. The result is slower first-connection performance for supported clients — the opposite of what you wanted.

Assuming your cloud load balancer supports QUIC. "HTTP/3 support" on cloud services often means CDN-to-client support, not origin-facing support. Azure Application Gateway, AWS ALB, and GCP Cloud Load Balancing have different HTTP/3 support postures per SKU and region. Verify your specific tier.

Enabling HTTP/3 on internal services expecting throughput gains. Service-to-service communication on a stable private network gains nothing meaningful from QUIC. The complexity of validating QUIC infrastructure for internal services outweighs any measurable benefit.

Skipping the libmsquic install in Docker images. If your Dockerfile starts from mcr.microsoft.com/dotnet/aspnet:10.0 on Linux, libmsquic is not included by default. You need to install it explicitly. Missing this leads to HTTP/3 silently disabled — or worse, a startup exception depending on your Kestrel configuration.
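A quick way to catch a missing libmsquic before it reaches production is a tiny console check that can run inside the built image. This is a sketch under assumptions: it relies on the QuicListener.IsSupported property from System.Net.Quic, and on SDK versions where that namespace is still marked as a preview API the project would additionally need preview features enabled.

```csharp
using System.Net.Quic;

// Exit code 0 when QUIC is usable on this host, 1 otherwise,
// so the check can gate a container build or a startup script.
if (QuicListener.IsSupported)
{
    Console.WriteLine("QUIC is supported on this host.");
    return 0;
}

Console.WriteLine("QUIC is not supported: check that libmsquic is installed and TLS 1.3 is available.");
return 1;
```

Running this as a smoke test in CI against the final image turns a silent HTTP/3 downgrade into a visible build failure.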

Using HTTP/3-only without fallback. Always configure Kestrel to support HTTP/1.1 and HTTP/2 alongside HTTP/3. HTTP/3 should be additive, not exclusive.

Is HTTP/3 Ready for Enterprise Production in 2026?

Yes — with caveats. The protocol is stable, RFC-complete, and Kestrel's implementation is production-quality. The ASP.NET Core team's fallback behavior means you won't break anything by enabling it correctly. The risk is entirely in infrastructure readiness and the operational overhead of managing a new protocol layer.

For public-facing APIs with external client traffic: run the infrastructure checklist, validate your load balancer and CDN, and enable it. The latency and connection establishment improvements for mobile and international users are real.

For internal APIs and service meshes: wait. The complexity-to-benefit ratio doesn't favour HTTP/3 on stable internal networks. HTTP/2 continues to be the right default.

The teams that will benefit most are those running edge-facing APIs behind a modern CDN (Cloudflare, Azure Front Door, CloudFront) where HTTP/3 to the CDN PoP is already handled, and where the CDN-to-origin path can also be upgraded. That's a realistic 2026 setup for many enterprise SaaS products.


Frequently Asked Questions

Does enabling HTTP/3 in Kestrel break existing HTTP/1.1 and HTTP/2 clients?

No. HTTP/3 is additive. Kestrel advertises HTTP/3 availability via the Alt-Svc response header. Clients that don't support QUIC or can't reach UDP 443 continue using HTTP/1.1 or HTTP/2 without any interruption. Fallback is automatic and transparent to the client.

Does HTTP/3 work behind NGINX or IIS?

Only if NGINX or IIS is configured to forward QUIC traffic. Standard NGINX reverse proxy configurations do not forward UDP 443 traffic to Kestrel. You need to either run Kestrel edge-facing (no reverse proxy) or use a reverse proxy version that explicitly supports QUIC forwarding. As of 2026, NGINX has experimental HTTP/3 support but configuration for forwarding QUIC to an upstream is not standard practice.

Does HTTP/3 in ASP.NET Core require a paid add-on or separate license?

No. HTTP/3 support via MsQuic is included in .NET 9 and .NET 10 at no additional cost. MsQuic on Linux requires installing the libmsquic package, which is open source and freely available.

What happens if MsQuic is not installed and HTTP/3 is configured?

Kestrel will log a warning and disable HTTP/3, falling back to HTTP/2 and HTTP/1.1. If you've configured Kestrel to require HTTP/3 without fallback (which is not recommended), you'll get a startup error. The recommended configuration always includes HTTP/1.1 and HTTP/2 alongside HTTP/3.

Is 0-RTT session resumption safe to enable for enterprise APIs?

0-RTT (zero round-trip time resumption) allows cached session data to be sent on reconnection before the handshake completes. The security trade-off is that 0-RTT data is vulnerable to replay attacks. For enterprise APIs that handle idempotent GET requests, it's generally safe. For APIs with state-mutating endpoints, disable 0-RTT or ensure your endpoints are replay-safe before enabling it.

How should we monitor HTTP/3 traffic in production?

Your APM tool needs to be updated to attribute protocol version to individual requests. OpenTelemetry with ASP.NET Core instrumentation captures the HTTP protocol version in span attributes. Ensure your dashboards include a protocol-version dimension so you can correlate performance data by HTTP/1.1, HTTP/2, and HTTP/3 connections independently. Without this, performance analysis across a mixed-protocol deployment becomes noise.

Does HTTP/3 affect how Kubernetes ingress is configured?

Yes, significantly. Standard Kubernetes ingress controllers (NGINX Ingress, Traefik) route TCP traffic. HTTP/3 over QUIC is UDP. You'll need to add a UDP port 443 exposure on your service and either configure a NodePort/LoadBalancer for UDP or use an ingress controller with explicit QUIC support. This is a non-trivial infrastructure change. Most teams using Kubernetes will find it simpler to terminate HTTP/3 at the CDN or cloud load balancer layer and continue using standard ingress for origin traffic.