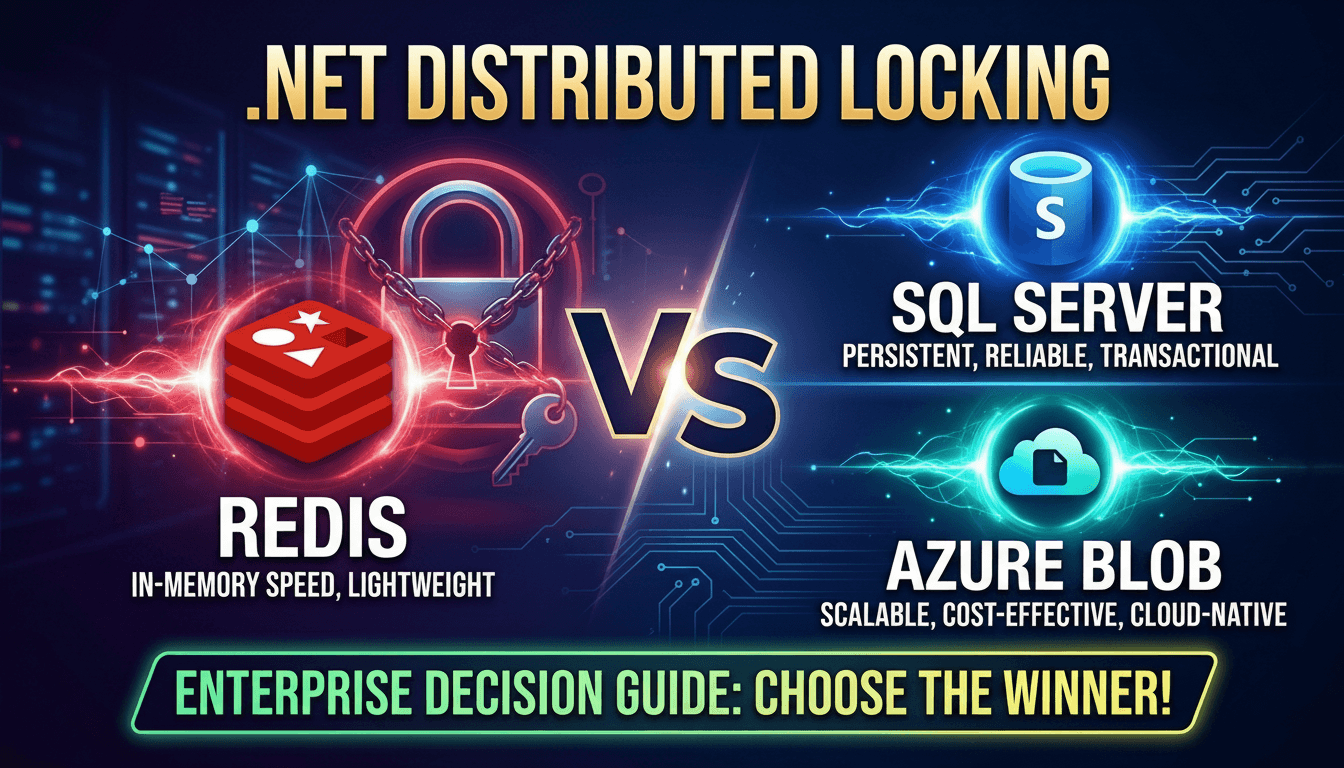

Distributed Locking in .NET: Redis vs SQL Server vs Azure Blob — Enterprise Decision Guide

Redis, SQL Server, and Azure Blob Leases — Which One Belongs in Your Stack?

When your .NET application scales beyond a single process, a whole class of correctness problems emerges that in-process locking cannot solve. Two pods processing the same scheduled job. Two API instances updating the same record simultaneously. A payment processing step firing twice because a retry overlapped with the original call. These are not bugs in your business logic — they are concurrency failures at the infrastructure boundary.

Distributed locking is the mechanism that restores the mutual exclusion guarantee across multiple hosts, containers, or availability zones. But the .NET ecosystem offers several viable backends — Redis, SQL Server, Azure Blob Storage, and PostgreSQL advisory locks among them — and each carries meaningfully different tradeoffs across durability, latency, operational complexity, and failure modes. Choosing the wrong one does not just leave performance on the table; it can introduce subtle correctness risks that surface only under partial-failure conditions.

Want implementation-ready .NET source code you can adapt fast? Join Coding Droplets on Patreon. 👉 https://www.patreon.com/CodingDroplets

Why In-Process Locking Breaks in Scaled-Out Systems

The familiar lock keyword and SemaphoreSlim are scoped to a single process's memory. In a Kubernetes deployment with multiple replicas, or any horizontally scaled deployment behind a load balancer, each instance has its own independent lock state. Two requests routed to two different pods will both successfully acquire what each believes to be the global lock, producing a race condition that in-process coordination cannot prevent.

This is not a hypothetical scenario. Any background job scheduler that runs on more than one replica, any checkout flow that retries under load, or any cache population routine that should run exactly once will encounter this failure. The fix requires externalizing the locking primitive to a shared backing store that all instances can access.

The Three Primary Strategies in .NET

Redis and the Redlock Algorithm

Redis is the most common distributed lock backend in the .NET ecosystem, and for good reason: it is fast, it has native TTL support for lock expiry, and the StackExchange.Redis client is mature and well understood. The widely adopted DistributedLock.Redis library (from the madelson/DistributedLock project) wraps Redis with a clean .NET abstraction.

For single-instance Redis, the basic SETNX-with-TTL approach works correctly in practice. For multi-node Redis deployments where availability is a concern, the Redlock algorithm acquires locks on a majority of independent Redis nodes, providing a higher correctness guarantee under node failure scenarios.

The primary risk with Redis is what happens when the Redis connection is interrupted mid-lock. The lock will expire after the configured TTL, but if the TTL is set too short, the holding process may still be in its critical section when another process acquires. Setting TTL too long delays recovery. Enterprise teams must calibrate TTL to the actual worst-case duration of their critical sections, not to a round number.

Redis is the right choice when your team already runs Redis for caching (avoiding a new infrastructure dependency), when sub-millisecond lock acquisition latency matters, and when the critical sections are short-lived and deterministic in duration.

SQL Server Application Locks

SQL Server has a native locking primitive called an application lock, accessible via sp_getapplock. These are session-scoped locks managed entirely within the database engine, with no external library required beyond a database connection. The DistributedLock.SqlServer library surfaces this capability with the same .NET abstraction layer used for the Redis backend.

SQL Server locks have an important advantage: their lifecycle is tied to the database connection. If a process crashes or its connection drops, the lock is released automatically and immediately — no TTL expiration delay. This eliminates the split-brain window that Redis TTL creates.

The trade-off is latency and connection pool pressure. Acquiring a SQL Server application lock requires a round trip to the database and holds a connection for the lock's duration. Under high lock-acquisition rates, this can exhaust connection pool capacity. For locks held only for milliseconds, this is usually acceptable. For longer-held locks, the connection hold time becomes a resource concern.

SQL Server is the pragmatic choice for enterprise teams that already operate SQL Server and want to avoid introducing Redis as a new infrastructure dependency. It is particularly well-suited for locks protecting low-concurrency operations like singleton background jobs, admin operations, and ETL coordination.

Azure Blob Storage Leases

Azure Blob Storage supports a Blob Lease API that can function as a distributed lock. A lease on a blob prevents concurrent writes to that blob and can be set for 15–60 second durations or as infinite leases with explicit release. The DistributedLock.Azure package wraps this into the standard .NET abstraction.

Blob leases are the right tool for long-lived distributed locks — operations that may take minutes rather than milliseconds, such as batch ETL runs, cross-service data migrations, or scheduled report generation jobs. Unlike Redis, there is no need to configure a TTL that you hope covers your operation's worst-case runtime; infinite leases are released explicitly, and fixed leases renew automatically via the library's lock handle.

The trade-off is latency — blob lease operations are slower than Redis by a factor of 10–100x for acquisition and release. For high-frequency, short-duration locking scenarios, this overhead is prohibitive. For coarse-grained coordination of long-running processes in Azure environments, the latency is acceptable and the operational simplicity is compelling.

The PostgreSQL Advisory Lock Honorable Mention

Teams running PostgreSQL should evaluate advisory locks before introducing Redis or a separate SQL Server instance. PostgreSQL advisory locks are session-scoped, require no additional infrastructure, and integrate cleanly via the DistributedLock.Postgres library. They carry the same connection-lifecycle correctness guarantee as SQL Server application locks and perform well for low-to-moderate lock acquisition rates. If your backend is already PostgreSQL, this is often the lowest-complexity path.

The DistributedLock Library: A Unified Abstraction

The open-source DistributedLock library by Matthew Ness (madelson/DistributedLock on GitHub) is the recommended starting point for most enterprise .NET teams. It provides a consistent IDistributedLock abstraction across Redis, SQL Server, PostgreSQL, Azure Blob, and several other backends. This means you can swap backends without changing the locking logic in your application code — only the configuration changes.

For ASP.NET Core applications, the library supports dependency injection registration, making the lock provider a first-class service in your DI container. Lock handles implement IDisposable and IAsyncDisposable, allowing idiomatic using patterns that ensure release even on exception paths.

Decision Matrix for Enterprise Teams

Three questions drive the backend selection.

First, what infrastructure already exists? If Redis is already in the stack for caching, use it. If only SQL Server exists and adding Redis is a new operational burden, SQL Server application locks are the pragmatic choice. If the workload runs on Azure, blob leases deserve evaluation for long-running coordination.

Second, how short-lived are the critical sections? Millisecond-range critical sections benefit from Redis latency. Minute-range critical sections are better served by Azure Blob or SQL Server. The latency of Redis is a feature only if the operations are fast enough to realize it.

Third, what are the correctness requirements under partial failure? Connection-lifecycle release (SQL Server, PostgreSQL) provides stronger correctness guarantees on crash recovery than TTL-based release (Redis). For financial transactions, inventory control, or any idempotency-critical path, the connection-lifecycle backends warrant serious consideration even if Redis is available.

Common Enterprise Mistakes

The most frequent error is setting Redis TTL too short relative to the critical section's worst-case runtime. Under high load or I/O latency spikes, operations take longer than the median. A TTL calibrated against average performance will cause spurious lock expiry under the conditions where correctness matters most.

The second mistake is acquiring distributed locks for operations that are not actually concurrent. If a background job runs on a single instance by deployment design, adding distributed locking adds latency and operational complexity for no correctness benefit. Audit the actual concurrency model before reaching for a distributed lock.

The third mistake is not monitoring lock wait times. Distributed locks are a congestion point. Unexpectedly long lock acquisition times are an early warning signal of capacity problems or runaway processes holding locks, and they should appear in your observability dashboards alongside the rest of your latency metrics.

Operational Considerations

Whichever backend you choose, instrument the lock acquisition path. Track acquisition latency, lock contention rates, and how frequently lock expiry (for TTL-based systems) occurs. High expiry rates indicate your TTL configuration is misaligned with real-world runtime.

Define a lock naming convention as a team standard. Lock names that encode the resource type and resource identifier — rather than generic names like global-lock — make debugging lock contention dramatically easier in production.

Document the expected critical section duration for each lock in your codebase. This is not gold-plating; it is the information needed to set correct TTL values and to understand the blast radius of a lock holder that crashes or hangs.

FAQ

What is a distributed lock and why do I need one in .NET? A distributed lock is a mutual exclusion mechanism backed by a shared external store — Redis, SQL Server, a database — that coordinates access across multiple processes or hosts. You need one whenever multiple instances of your application can concurrently reach the same critical section and in-process locking cannot provide coordination across those instances.

Is Redis always the best distributed lock backend for .NET? No. Redis has the lowest latency and is the right choice when you already run Redis and your critical sections are short-lived. SQL Server application locks are often the better pragmatic choice for teams that already operate SQL Server and want to avoid a new infrastructure dependency. Azure Blob leases are the right tool for long-running coarse-grained coordination in Azure environments.

What happens if the process holding a Redis lock crashes before releasing it? The lock will be released automatically when its TTL expires. The gap between crash and TTL expiry is the window during which no other process can acquire the lock. Setting the TTL to the expected worst-case critical section duration minimizes this window while preventing a hung process from holding the lock forever.

Does SQL Server distributed locking use actual database row locks?

No. SQL Server application locks (sp_getapplock) are a separate mechanism from row-level or table-level locks used in transaction isolation. They are advisory locks managed by the database engine at the application level. They do not block database queries against the underlying tables; they only block other acquisition attempts on the same named lock.

What is the Redlock algorithm and when does it matter? Redlock is an algorithm by Redis's creator that acquires a lock on a quorum (majority) of independent Redis nodes. It provides a stronger correctness guarantee than single-node Redis under node failure scenarios. It matters in production environments where Redis high availability is achieved via multiple independent nodes rather than a single Redis cluster. For most teams, single-node Redis with proper TTL configuration is sufficient.

Can I use distributed locking to replace idempotency keys? No. Distributed locking prevents concurrent execution of a critical section. Idempotency keys prevent a completed operation from being applied twice. These solve different problems. A payment that completed successfully still needs idempotency key protection against retries that arrive after completion. A distributed lock helps only while the operation is in progress.

How does the DistributedLock library compare to IDistributedCache for locking?

IDistributedCache is a caching abstraction and is not designed for distributed locking. It lacks the atomic acquire-or-fail semantics and automatic release-on-crash guarantees that locking requires. Do not use distributed cache as a locking primitive. Use a purpose-built library like DistributedLock that implements the correct algorithm for each backend.