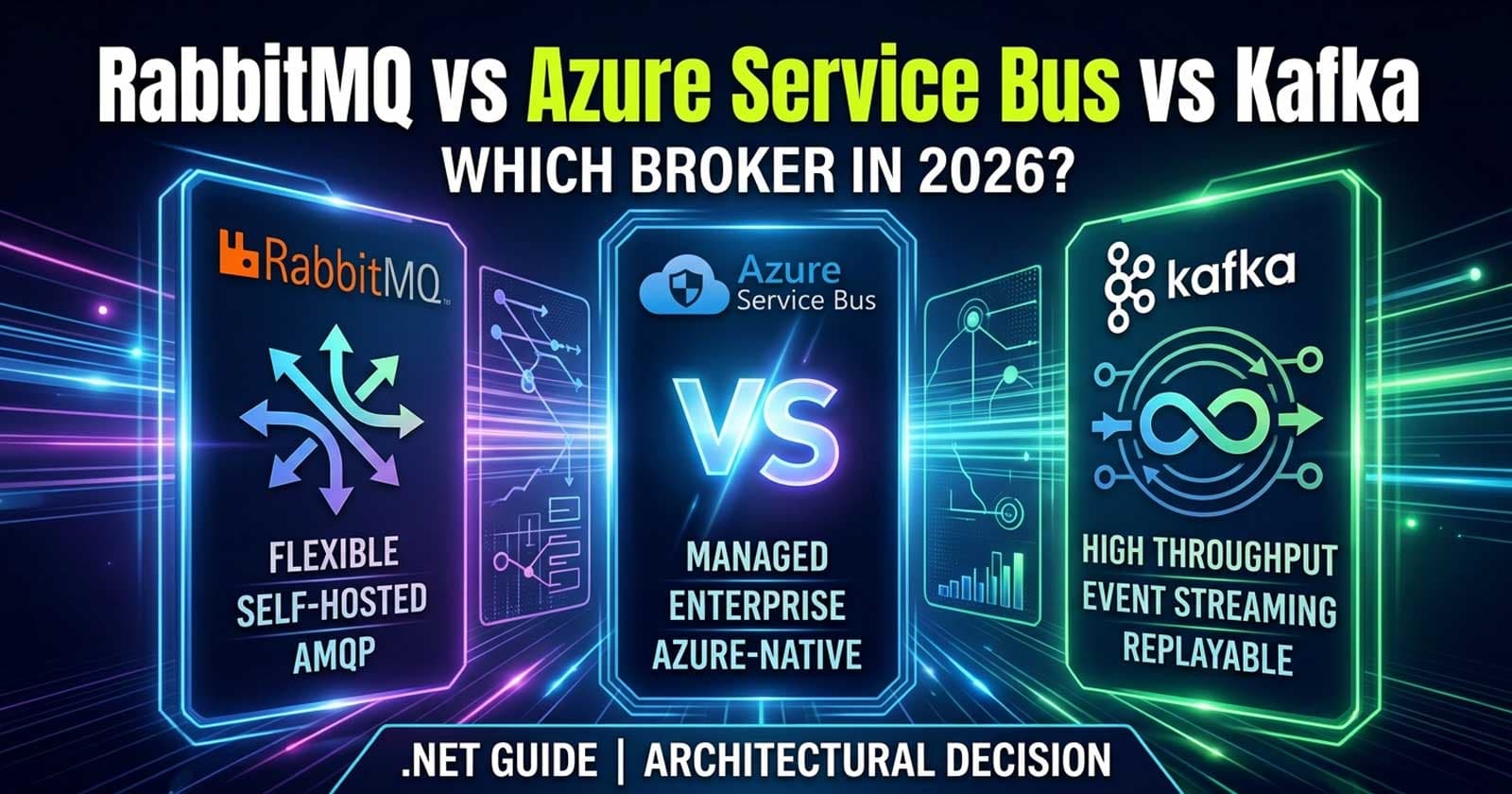

What's New in .NET 10 Runtime Performance: JIT, GC, and NativeAOT Changes Enterprise Teams Should Know

Overview of .NET 10 Runtime Performance Improvements

The .NET 10 runtime delivers its most significant set of low-level performance improvements in years. For enterprise ASP.NET Core teams running high-throughput APIs, the upgrades to the JIT compiler, garbage collector, and NativeAOT pipeline are not just incremental tweaks — they shift what you can expect from the platform in production. Understanding what changed, what matters for your workloads, and which improvements require action on your part is the right lens through which to evaluate the upgrade.


This article walks through the most production-relevant runtime improvements in .NET 10, explains the real-world impact on ASP.NET Core applications, and tells you what your team should adopt now versus monitor for later.

JIT Compiler Improvements in .NET 10

The JIT compiler in .NET 10 received several substantial upgrades that affect how the runtime generates and optimises native machine code from your managed C# code.

Improved Struct Argument Code Generation

.NET 10 improves how the JIT handles struct arguments passed between methods. In previous versions, when struct members needed to be packed into a single CPU register, the JIT first wrote values to memory and then loaded them back into a register — an unnecessary round-trip. With .NET 10, the JIT can now place promoted struct members directly into shared registers without the intermediate memory write.

For enterprise teams making heavy use of value types, record structs, or performance-sensitive domain models passed across method boundaries, this means fewer memory operations in hot paths. According to the .NET team, the change removes redundant memory access when physical promotion is active, that is, when the JIT has already split the struct into its individual fields. The effect shows up at call sites that are not inlined, because an inlined call passes no arguments at all.
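To make the scenario concrete, here is a minimal sketch of the kind of call this optimisation targets. The `Point` type and `Consume` method are hypothetical names chosen for illustration, and whether the fields actually travel in registers depends on the platform ABI (for example System V x64 or Arm64 pass small structs in registers).

```csharp
using System;
using System.Runtime.CompilerServices;

// Hypothetical two-field value type used only for illustration.
public readonly record struct Point(long X, long Y);

public static class StructArgumentDemo
{
    // NoInlining keeps a real call in place, so the struct has to be passed
    // as an argument rather than disappearing through inlining.
    [MethodImpl(MethodImplOptions.NoInlining)]
    public static long Consume(Point p) => p.X + p.Y;

    public static void Main()
    {
        var p = new Point(10, 20);
        Console.WriteLine(Consume(p)); // prints 30
    }
}
```

To see what the JIT actually emits on your machine, one option is to set the `DOTNET_JitDisasm` environment variable to the method name and compare the output between runtime versions.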

Loop Inversion via Graph-Based Loop Recognition

The JIT compiler has shifted from a lexical analysis approach to a graph-based loop recognition implementation for loop inversion. Loop inversion is the transformation of a while loop into a conditional do-while, removing the need to branch to the top of the loop on each iteration to re-evaluate the condition.

The graph-based approach is more precise: it correctly identifies all natural loops (those with a single entry point) and avoids false positives that previously blocked optimisation. The practical impact is higher optimisation potential for .NET programs with for and while constructs, especially in data processing pipelines, collection manipulation, and query materialisation — all common in enterprise ASP.NET Core backends.
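The transformation itself can be sketched by hand. The two methods below are illustrative only: `SumWhile` is the loop as you would write it, and `SumInverted` approximates the shape the JIT aims for after inversion. You would not normally write the second form yourself.

```csharp
using System;

public static class LoopInversionDemo
{
    // As written: the condition is tested at the top of every iteration.
    public static int SumWhile(int[] values)
    {
        int sum = 0, i = 0;
        while (i < values.Length)
        {
            sum += values[i];
            i++;
        }
        return sum;
    }

    // Roughly the inverted shape: one up-front guard, then a do-while whose
    // condition sits at the bottom of the loop body.
    public static int SumInverted(int[] values)
    {
        int sum = 0, i = 0;
        if (i < values.Length)
        {
            do
            {
                sum += values[i];
                i++;
            } while (i < values.Length);
        }
        return sum;
    }

    public static void Main()
    {
        int[] data = { 1, 2, 3, 4 };
        Console.WriteLine(SumWhile(data));    // prints 10
        Console.WriteLine(SumInverted(data)); // prints 10
    }
}
```

Both methods return the same result for any input, including an empty array. The difference is only in how many branches execute per iteration.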

Array Interface Method Devirtualisation

One of the key .NET 10 de-abstraction goals is reducing the overhead of common language features. Array interface method devirtualisation is a direct result of this effort.

Previously, iterating an array via IEnumerable<T> left enumerator calls as virtual dispatch — blocking inlining and stack allocation. Starting in .NET 10, the JIT can devirtualise and inline these array interface methods, eliminating the abstraction cost. For applications that pass arrays through generic or interface-typed pipelines (a common pattern in service layers and middleware), this can meaningfully reduce GC allocation pressure by enabling the enumerator to be stack-allocated rather than heap-allocated.
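The pattern in question looks like the sketch below. The `Sum` helper is a hypothetical example, and whether the JIT devirtualises a given call depends on what it can prove about the concrete type at that call site (for instance after inlining or with profile data), so treat this as the shape to look for rather than a guaranteed win.

```csharp
using System;
using System.Collections.Generic;

public static class ArrayEnumerationDemo
{
    // The parameter is interface-typed, so foreach goes through
    // IEnumerable<int>.GetEnumerator() and the enumerator's MoveNext()/Current.
    public static int Sum(IEnumerable<int> values)
    {
        int total = 0;
        foreach (int v in values)
        {
            total += v;
        }
        return total;
    }

    public static void Main()
    {
        int[] data = { 1, 2, 3, 4, 5 };
        Console.WriteLine(Sum(data)); // prints 15
    }
}
```

A benchmark with a memory diagnoser on both runtime versions is the most reliable way to check whether the enumerator allocation disappears for your own call sites.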

What This Means for Your Team

These JIT improvements are passive — your application benefits by simply upgrading to .NET 10. No code changes are required. However, applications already using value types, avoiding unnecessary heap allocations, and keeping hot paths simple will see the most pronounced gains.

Garbage Collector Improvements: DATAS and Beyond

What Is DATAS?

DATAS (Dynamic Adaptation to Application Sizes) is a runtime feature that automatically tunes the GC heap thresholds to fit real application memory requirements. It was introduced as an opt-in feature in .NET 8 and enabled by default for Server GC in .NET 9; .NET 10 continues to refine its behaviour for server workloads.

Why Enterprise Teams Should Care

Traditional GC tuning in .NET required careful profiling and manual configuration of settings such as GCHeapHardLimit and GCHighMemPercent, usually supplied through runtimeconfig.json or DOTNET_-prefixed environment variables. DATAS shifts much of this burden to the runtime by observing actual application behaviour and adjusting the heap size accordingly.

For Kubernetes-deployed ASP.NET Core APIs, this is particularly relevant. Container workloads with strict memory limits benefit from a GC that adapts to the container's actual memory ceiling rather than defaulting to host-level estimates. Teams that previously set DOTNET_GCConserveMemory or DOTNET_GCHeapHardLimit as blunt instruments should re-evaluate those settings under .NET 10 — in many cases, DATAS handles this automatically.
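Before removing any explicit GC settings, it helps to confirm what the runtime actually sees inside the container. The following is a small diagnostic sketch (the `GcConfigReport` class name is an assumption for illustration) that prints the GC mode, the memory the GC believes is available, and the GC settings in effect, using APIs available since .NET 7.

```csharp
using System;
using System.Collections.Generic;
using System.Runtime;

public static class GcConfigReport
{
    // Returns the GC configuration values the runtime reports as in effect.
    public static IReadOnlyDictionary<string, object> Settings() =>
        GC.GetConfigurationVariables();

    public static void Main()
    {
        Console.WriteLine($"Server GC: {GCSettings.IsServerGC}");
        Console.WriteLine($"Latency mode: {GCSettings.LatencyMode}");

        // In a container this should reflect the memory limit, not the host total.
        GCMemoryInfo info = GC.GetGCMemoryInfo();
        const long MiB = 1024 * 1024;
        Console.WriteLine($"Memory visible to the GC: {info.TotalAvailableMemoryBytes / MiB} MiB");
        Console.WriteLine($"High memory load threshold: {info.HighMemoryLoadThresholdBytes / MiB} MiB");

        foreach (KeyValuePair<string, object> setting in Settings())
        {
            Console.WriteLine($"{setting.Key} = {setting.Value}");
        }
    }
}
```

Running this once with your existing environment variables and once without them gives a quick before-and-after view of what each setting changes.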

Background GC Optimisations

The background GC in .NET 10 has been further optimised for throughput. The improvements target reduced pause time during Gen2 collections, which are the collections most disruptive to request latency in long-running ASP.NET Core services. Enterprise teams operating high-throughput APIs where P99 latency matters will benefit from these changes without any configuration effort.
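Pause-time claims are best validated against your own workload. As a rough sketch, the helper below (a hypothetical `GcPauseProbe` class, not part of any library) wraps a workload and reports how many Gen2 collections ran and how much total GC pause time accumulated, using `GC.CollectionCount` and `GC.GetTotalPauseDuration`. The allocation loop in `Main` is a synthetic stand-in for real request traffic.

```csharp
using System;
using System.Collections.Generic;

public static class GcPauseProbe
{
    // Runs the workload and returns the Gen2 collection count and total GC
    // pause time that accumulated while it ran.
    public static (int Gen2Collections, TimeSpan Pause) Measure(Action workload)
    {
        int gen2Before = GC.CollectionCount(2);
        TimeSpan pauseBefore = GC.GetTotalPauseDuration();

        workload();

        return (GC.CollectionCount(2) - gen2Before,
                GC.GetTotalPauseDuration() - pauseBefore);
    }

    public static void Main()
    {
        var result = Measure(() =>
        {
            // Synthetic allocation churn with some retained objects.
            var retained = new List<byte[]>();
            for (int i = 0; i < 20_000; i++)
            {
                retained.Add(new byte[16 * 1024]);
                if (retained.Count > 2_000)
                {
                    retained.RemoveRange(0, 1_000);
                }
            }
        });

        Console.WriteLine(
            $"Gen2 collections: {result.Gen2Collections}, total pause: {result.Pause.TotalMilliseconds:F2} ms");
    }
}
```

Comparing these numbers for the same workload on .NET 9 and .NET 10 is more informative than any single figure, since absolute values vary with hardware and heap size.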

NativeAOT Improvements in .NET 10

Expanded Type Preinitialiser Support

NativeAOT in .NET 10 expands its type preinitialiser to support all variants of conv.* and neg opcodes. This allows preinitialization of methods that include casting or negation operations, further reducing startup-time overhead. The practical effect is that a broader range of your application's static initialisation logic can be precomputed at build time rather than at application startup.
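As an illustration of the kind of initialisation logic involved, consider the sketch below. The `Limits` class and its values are hypothetical. The narrowing cast typically compiles to a `conv.*` IL instruction and the unary minus to `neg`, which is the category of code the expanded preinitialiser can now evaluate at build time. Whether a particular static constructor is actually preinitialised depends on everything else it does.

```csharp
using System;

public static class Limits
{
    // Hypothetical values computed in a static constructor.
    public static readonly int MaxItems;
    public static readonly int MinOffset;

    static Limits()
    {
        long configured = 4_096L * 4;
        MaxItems = (int)configured; // narrowing cast, typically conv.i4 in IL
        MinOffset = -MaxItems;      // unary minus, typically neg in IL
    }
}

public static class PreinitDemo
{
    public static void Main()
    {
        Console.WriteLine(Limits.MaxItems);  // prints 16384
        Console.WriteLine(Limits.MinOffset); // prints -16384
    }
}
```

The observable behaviour is identical under the JIT and NativeAOT. The only difference is when the values are computed.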

Reduced Binary Size and Startup Time

.NET 10 NativeAOT builds produce smaller binaries and faster startup times compared to .NET 9. Benchmark data from the .NET team and community shows startup time improvements in the range of 20–40% for typical ASP.NET Core minimal API services published as NativeAOT, depending on the application's dependency graph and reflection usage.

Is NativeAOT Right for Your Team in 2026?

NativeAOT remains best suited to ASP.NET Core Minimal API services, Azure Functions, and standalone microservices with well-contained dependency graphs. Applications that rely heavily on runtime reflection, dynamic code generation (System.Reflection.Emit), or third-party libraries not yet trimming-compatible will still face challenges with NativeAOT.
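JSON serialisation is a common place where reflection creeps in. One way to stay trimming-friendly is the System.Text.Json source generator, sketched below. The `Order` record and `AppJsonContext` class are assumed names for illustration; the pattern is that serialisation metadata is generated at build time instead of being discovered through reflection at run time.

```csharp
using System;
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical DTO used only for illustration.
public record Order(int Id, string Customer);

// The source generator fills in this partial class at build time.
[JsonSerializable(typeof(Order))]
public partial class AppJsonContext : JsonSerializerContext
{
}

public static class AotFriendlyJsonDemo
{
    // Passing the generated type info avoids the reflection-based code path.
    public static string Serialize(Order order) =>
        JsonSerializer.Serialize(order, AppJsonContext.Default.Order);

    public static Order? Deserialize(string json) =>
        JsonSerializer.Deserialize(json, AppJsonContext.Default.Order);

    public static void Main()
    {
        string json = Serialize(new Order(42, "Contoso"));
        Console.WriteLine(json); // prints {"Id":42,"Customer":"Contoso"}
        Console.WriteLine(Deserialize(json)?.Customer); // prints Contoso
    }
}
```

Libraries that only offer reflection-based entry points are the ones most likely to produce trim or AOT warnings at publish time.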

The key question for enterprise teams is: does your service's startup time, binary size, or container density justify the NativeAOT adoption cost? For greenfield microservices built with Minimal APIs, the answer is increasingly yes. For large monolithic ASP.NET Core applications with rich reflection-heavy ORMs, middleware stacks, and plugin architectures, the conventional JIT runtime remains the pragmatic choice through at least 2026.

You can find detailed guidance on evaluating NativeAOT deployment in the Microsoft NativeAOT documentation.

What to Adopt Now vs. Monitor

Adopt Now

Upgrade to .NET 10 to passively receive JIT gains. The struct argument code generation, loop inversion, and array devirtualisation improvements require no application-level changes. The ROI is immediate for any team currently on .NET 9 or .NET 8 LTS.

Re-evaluate GC configuration for containerised workloads. If your team manually set GC-related environment variables to constrain memory usage in Kubernetes, test your application under .NET 10 with DATAS active and default settings. You may find that explicit tuning is no longer necessary.

Consider NativeAOT for new Minimal API services. New microservices being designed today should include NativeAOT feasibility as a first-class consideration during the architecture phase, not as an afterthought.

Monitor for Later

Advanced NativeAOT for EF Core workloads. The EF Core team continues to make progress on trimming compatibility, but EF Core-heavy applications are not yet fully NativeAOT-compatible without workarounds. Monitor the EF Core GitHub milestones for complete NativeAOT support announcements.

Hardware acceleration paths (AVX10.2, Arm64 SVE). .NET 10 adds support for AVX10.2 and Arm64 SVE hardware intrinsics. For teams running compute-intensive workloads on modern server hardware, these paths can unlock significant throughput gains, but they require explicit use of System.Runtime.Intrinsics APIs. This is specialist territory — valuable for data processing teams, not general-purpose web APIs.
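For a sense of what "explicit use" means, here is a minimal sketch using the portable `Vector128` API from System.Runtime.Intrinsics. It does not target AVX10.2 or SVE specifically; it only shows the general style of code (a vectorised main loop plus a scalar tail) that hand-written SIMD involves. The `VectorSumDemo` class is a hypothetical example.

```csharp
using System;
using System.Runtime.Intrinsics;

public static class VectorSumDemo
{
    public static int Sum(ReadOnlySpan<int> values)
    {
        int i = 0;
        int total = 0;

        if (Vector128.IsHardwareAccelerated && values.Length >= Vector128<int>.Count)
        {
            // Accumulate several lanes at a time.
            Vector128<int> acc = Vector128<int>.Zero;
            for (; i <= values.Length - Vector128<int>.Count; i += Vector128<int>.Count)
            {
                acc += Vector128.Create(values.Slice(i, Vector128<int>.Count));
            }
            total = Vector128.Sum(acc);
        }

        // Scalar tail for the remaining elements (or the whole input when
        // hardware acceleration is unavailable).
        for (; i < values.Length; i++)
        {
            total += values[i];
        }
        return total;
    }

    public static void Main()
    {
        int[] data = { 1, 2, 3, 4, 5, 6, 7, 8, 9, 10 };
        Console.WriteLine(Sum(data)); // prints 55
    }
}
```

For simple reductions like this, built-in helpers are often already vectorised, so hand-written intrinsics tend to pay off only for more specialised kernels.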

How Do These Improvements Compare to Previous Versions?

Is .NET 10 Runtime Faster Than .NET 9?

Yes, measurably so — but the improvements are surgical rather than sweeping. The JIT changes benefit hot paths that use value types, loops, and interface-typed array iteration. The GC improvements reduce pause time. NativeAOT reduces startup and binary size. Applications that already profile well on .NET 9 will see incremental gains, not a step-function change.

For teams evaluating whether to upgrade from .NET 8 LTS to .NET 10, the runtime performance improvements compound with everything that shipped in .NET 9, making the total improvement gap significant enough to justify upgrade planning for most production workloads.

Do You Need to Change Your Code to Benefit?

No, for the majority of these improvements. The JIT, GC, and NativeAOT gains are delivered by the runtime itself. Applications running on .NET 10 receive them automatically. The exception is NativeAOT: adopting NativeAOT requires publishing explicitly with PublishAot=true and validating trimming compatibility, which does require deliberate engineering work.



Frequently Asked Questions

What are the most impactful .NET 10 runtime performance improvements for ASP.NET Core applications?

The most immediately impactful improvements are the JIT compiler upgrades — specifically improved struct argument code generation, enhanced loop inversion via graph-based loop recognition, and array interface method devirtualisation. These take effect automatically when you run your application on .NET 10 without requiring any code changes. For containerised deployments, the GC DATAS improvements that automatically tune heap thresholds to container memory limits are also highly relevant.

Do I need to rewrite any code to benefit from .NET 10 JIT improvements?

No. The JIT improvements in .NET 10 are transparent to application code. Your existing ASP.NET Core application will benefit from better struct argument handling, improved loop code generation, and array interface devirtualisation simply by targeting the .NET 10 runtime. No source code changes are needed.

Is NativeAOT production-ready for ASP.NET Core APIs in .NET 10?

NativeAOT is production-ready for ASP.NET Core Minimal APIs with well-contained dependency graphs. It is well-suited for microservices, serverless functions, and container-optimised workloads where startup time and binary size matter. It is not yet fully compatible with EF Core, some reflection-heavy libraries, or applications that rely on dynamic code generation. Evaluate NativeAOT readiness by enabling the trim and AOT analysers (for example by setting PublishAot or IsAotCompatible to true in the project file) and reviewing the warnings that dotnet publish reports before committing to an AOT deployment.

How does DATAS GC mode help with Kubernetes deployments?

DATAS (Dynamic Adaptation to Application Sizes) allows the .NET GC to automatically tune its heap size to match your application's actual memory consumption patterns, including container memory limits. For Kubernetes workloads with strict memory ceilings, DATAS reduces the need for manual GC tuning via environment variables like DOTNET_GCHeapHardLimit. Test your application under load with default .NET 10 settings before adding manual GC configuration — you may find it performs well without intervention.

What is the practical difference between .NET 10 NativeAOT and the JIT runtime for enterprise APIs?

The JIT runtime compiles your application's methods to native code at runtime (Just-In-Time), which allows for full reflection, dynamic code generation, and broad library compatibility. NativeAOT compiles everything ahead of time, producing a self-contained native binary with faster startup, smaller footprint, and no JIT overhead — but at the cost of not supporting certain reflection patterns or libraries that aren't trimming-compatible. For enterprise APIs with complex middleware stacks, the JIT runtime remains the pragmatic default. NativeAOT is best introduced incrementally, starting with new lightweight microservices.

Should enterprise teams skip .NET 9 and go directly to .NET 10?

If you are currently on .NET 8 LTS and planning your next upgrade, .NET 10 is the next LTS release (released in November 2025). Moving from .NET 8 directly to .NET 10 is a supported and common migration path. You will receive the cumulative runtime performance improvements from both .NET 9 and .NET 10 in a single upgrade cycle, which makes this a reasonable strategy for teams that cannot upgrade with every release.

How do the .NET 10 hardware intrinsics improvements affect typical web APIs?

For the vast majority of ASP.NET Core APIs, the new AVX10.2 and Arm64 SVE hardware intrinsics in .NET 10 are not directly applicable unless you are writing explicit vector or SIMD code using System.Runtime.Intrinsics. These improvements benefit teams building high-performance numerical computing, image processing, or data transformation pipelines in .NET. Standard CRUD APIs, middleware pipelines, and database-backed services will not see meaningful gains from the hardware intrinsics additions directly.