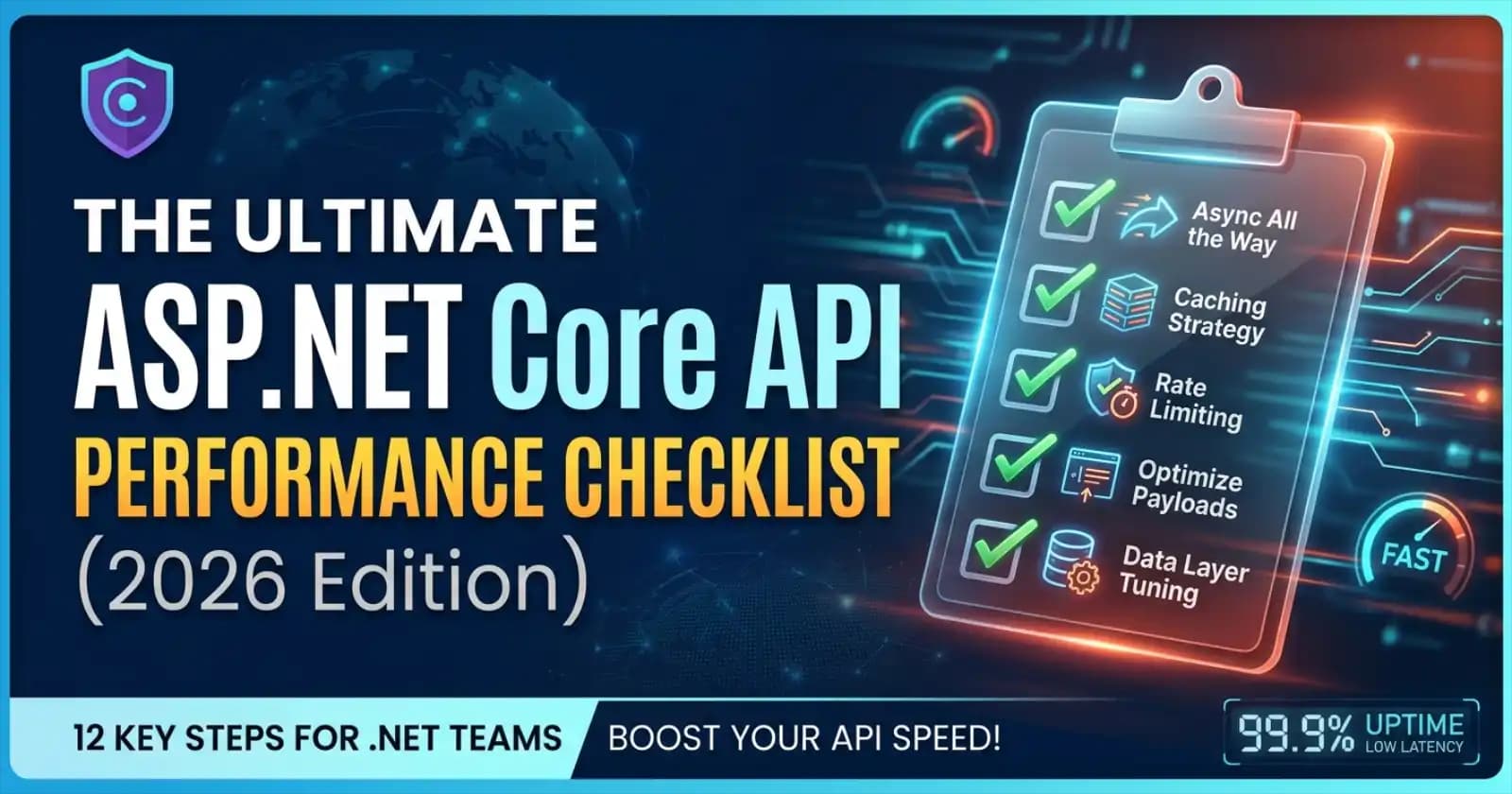

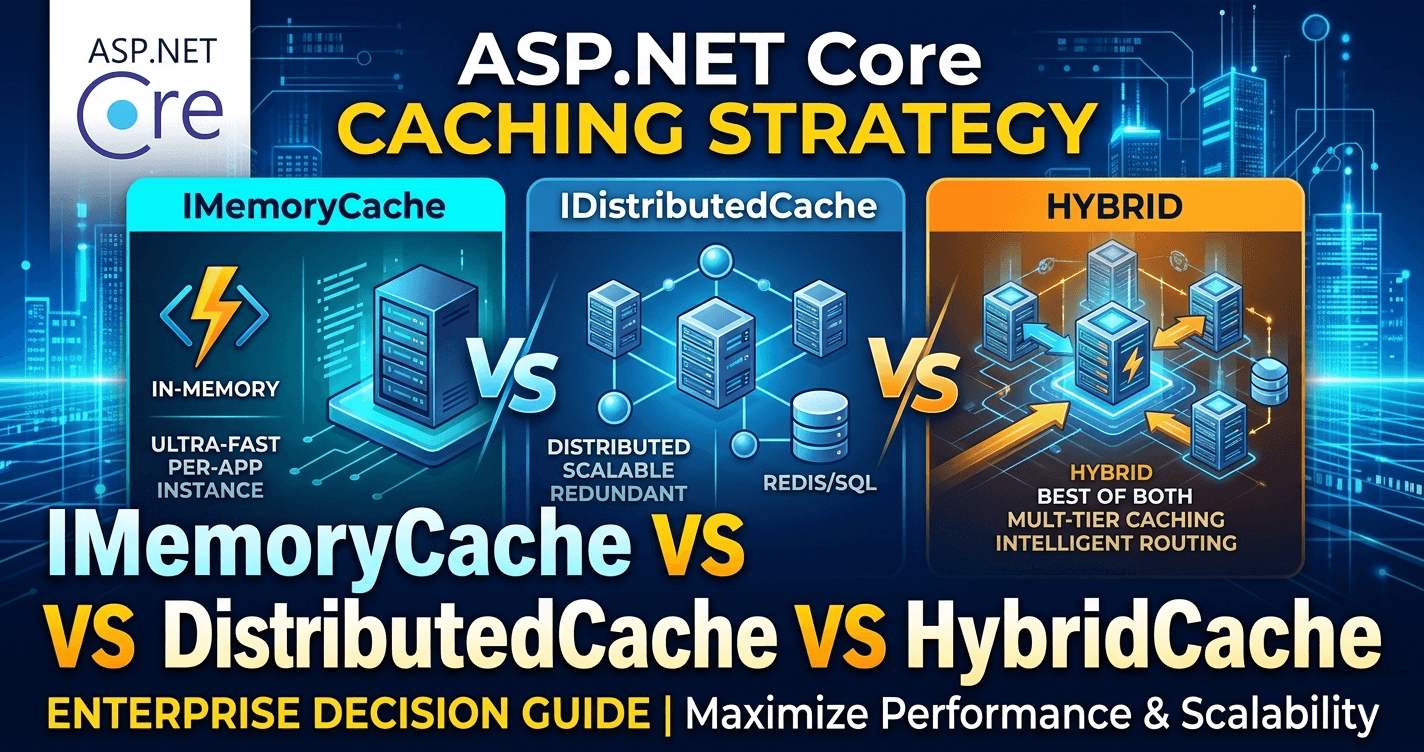

ASP.NET Core Caching Strategy: IMemoryCache vs IDistributedCache vs HybridCache — Enterprise Decision Guide

Caching is one of the highest-leverage decisions in any ASP.NET Core system — and one of the most misapplied. Most teams reach for IMemoryCache out of habit, bolt on IDistributedCache when they scale out, and end up with inconsistent cache management scattered across the codebase. With .NET 9 and .NET 10, that pattern has a proper answer: HybridCache. But HybridCache is not a universal replacement — choosing the right abstraction still depends on your deployment topology, data consistency requirements, and team maturity. This guide gives enterprise teams the decision framework to pick correctly from day one.

Want implementation-ready .NET source code you can adapt fast? Join Coding Droplets on Patreon. 👉 https://www.patreon.com/CodingDroplets

Why Caching Decisions Age Poorly

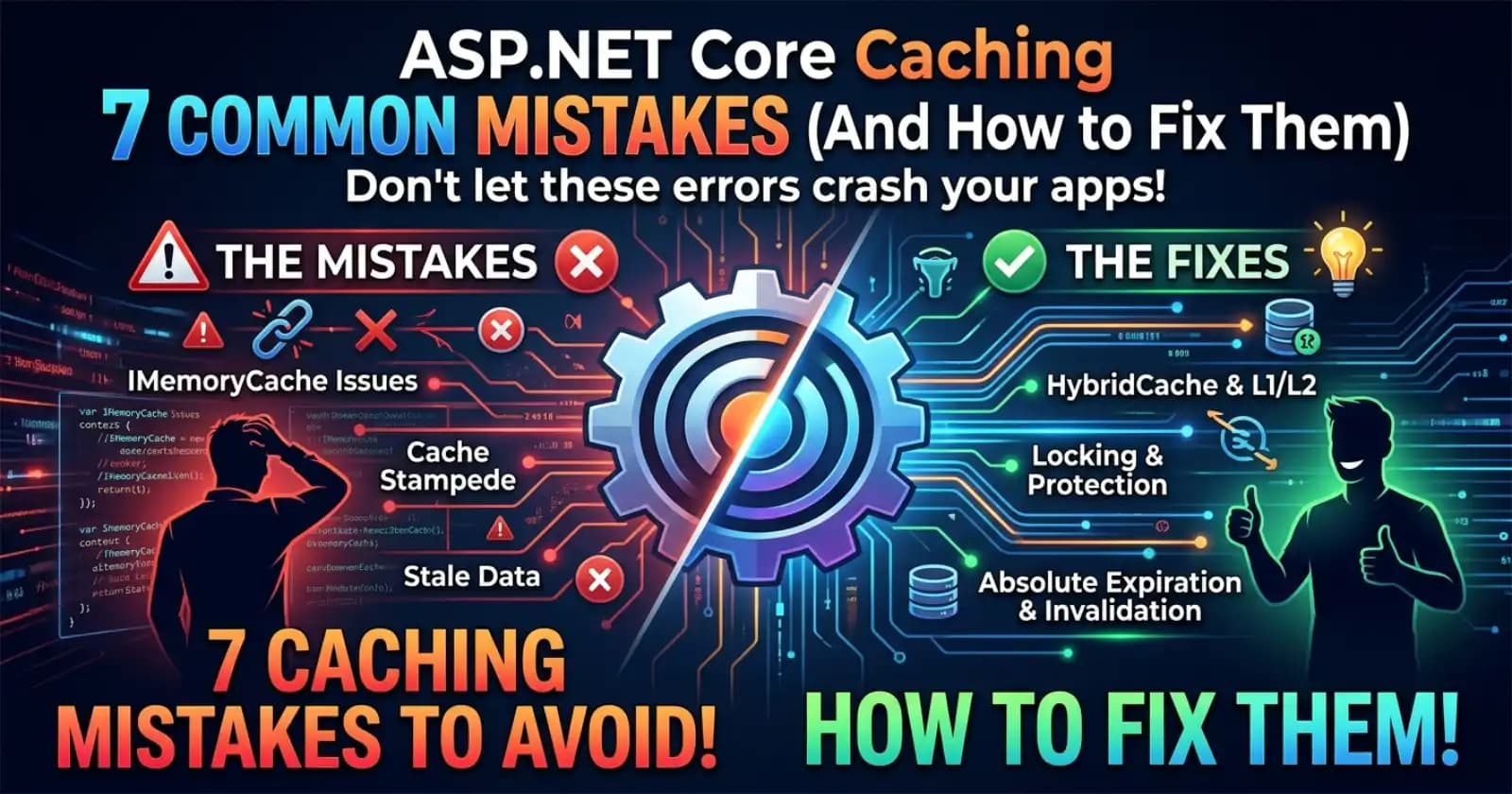

Caching strategy tends to be decided early, when the architecture is simple, and revisited only after production pain. A single-server API that works perfectly with IMemoryCache becomes a source of cache inconsistency bugs the moment a second instance is added. A distributed cache that gets bolted on late introduces serialization overhead and new failure modes that were not planned for. The cost of retrofitting is high. The cost of choosing correctly upfront is low — if you have the right framework.

Understanding the Three Abstractions

Before comparing, it helps to understand what each abstraction actually represents.

IMemoryCache: Process-Local Speed

IMemoryCache is ASP.NET Core's built-in abstraction for in-process, in-memory caching. It stores references to .NET objects directly, so no serialization is involved. A lookup is essentially a concurrent dictionary read within the same process: typically nanoseconds, with no network hop. The cache's lifetime is tied to the host process, so a restart wipes it, and there is no built-in coordination between multiple processes or servers.
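The following minimal sketch shows the reference semantics described above. It assumes a console app that references the Microsoft.Extensions.Caching.Memory package, and the key and country list are illustrative. In ASP.NET Core you would inject IMemoryCache (registered by AddMemoryCache) rather than constructing MemoryCache directly.

```csharp
using System;
using System.Collections.Generic;
using Microsoft.Extensions.Caching.Memory;

// Constructed directly so the sketch runs standalone; ASP.NET Core apps inject IMemoryCache.
using var cache = new MemoryCache(new MemoryCacheOptions());
var factoryCalls = 0;

List<string> GetCountries() =>
    cache.GetOrCreate("ref:countries", entry =>
    {
        entry.AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(30);
        factoryCalls++;                               // counts real computations
        return new List<string> { "DE", "FR", "IN" }; // stand-in for a database query
    })!;

var first = GetCountries();
var second = GetCountries();

// Same object instance both times: nothing was serialized or copied.
Console.WriteLine(ReferenceEquals(first, second)); // True
Console.WriteLine(factoryCalls);                   // 1
```

Because callers share the cached instance, a caller that mutates the returned list changes it for everyone. Where that matters, cache immutable values.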

This is an excellent fit for: reference data that changes rarely and whose staleness across instances is acceptable, computed values that are expensive to re-derive but process-local, and single-server or development environments.

It is a poor fit for: horizontally scaled deployments where cache inconsistency across instances causes correctness issues, session data that must be shared across instances, and any data where consistency matters more than raw speed.

IDistributedCache: Deployment-Safe Consistency

IDistributedCache is the abstraction for out-of-process, shared caching, typically backed by Redis, SQL Server, or a similar external store. Every instance in the deployment reads from and writes to the same cache store. This solves the multi-instance inconsistency problem, but at a cost. Every read and write involves a network round-trip. Because the interface stores and returns byte arrays, every value must also be serialized and deserialized (typically as JSON or MessagePack). At high request rates, that overhead accumulates.

IDistributedCache is a low-level API: you manage serialization, expiry policy, and cache-miss handling yourself. This creates boilerplate that is often duplicated inconsistently across a codebase.
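To make that boilerplate concrete, here is a sketch of hand-written cache-aside logic over IDistributedCache. MemoryDistributedCache, which ships in Microsoft.Extensions.Caching.Memory, stands in for Redis or SQL Server so the sketch runs locally. The key, expiry, and product IDs are illustrative.

```csharp
using System;
using System.Text.Json;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.Caching.Memory;
using Microsoft.Extensions.Options;

// In-memory stand-in for Redis/SQL Server so the sketch runs without infrastructure.
IDistributedCache cache = new MemoryDistributedCache(
    Options.Create(new MemoryDistributedCacheOptions()));

var factoryCalls = 0;

// Hand-written cache-aside: get, deserialize, compute on miss, serialize, set.
async Task<int[]> GetTopProductIdsAsync()
{
    const string key = "products:top";

    var cached = await cache.GetStringAsync(key);
    if (cached is not null)
        return JsonSerializer.Deserialize<int[]>(cached)!;

    factoryCalls++;
    var ids = new[] { 42, 7, 19 }; // stand-in for a database query

    await cache.SetStringAsync(key, JsonSerializer.Serialize(ids),
        new DistributedCacheEntryOptions
        {
            AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(10)
        });

    return ids;
}

var first = await GetTopProductIdsAsync();  // miss: runs the "query"
var second = await GetTopProductIdsAsync(); // hit: deserialized from the store
Console.WriteLine($"{string.Join(",", second)} (factory calls: {factoryCalls})");
```

Every call site that follows this pattern repeats the get, deserialize, compute, serialize, and set steps and picks its own expiry. Nothing stops two concurrent misses from both running the query.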

This is an excellent fit for: shared session state across instances, distributed rate-limiting counters, and any data that must be consistent across all running instances.

It is a poor fit for: hot-path data read at extremely high frequency (where network round-trip cost becomes material), scenarios where teams lack the discipline to manage serialization consistently, and single-server deployments where the overhead adds no benefit.

HybridCache: The Unified Abstraction

HybridCache (introduced with .NET 9 and shipped in the Microsoft.Extensions.Caching.Hybrid package) unifies both layers. It operates as a two-tier cache: an in-process L1 layer (semantically similar to IMemoryCache) backed by an optional out-of-process L2 layer (semantically similar to IDistributedCache). On an L1 hit, it returns without a network round-trip. On an L1 miss, it checks L2, if one is configured, before calling the factory function. On an L2 miss, it executes the factory, writes the result to both layers, and returns it.
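The following minimal sketch shows registration and the read path. It assumes a console app that references Microsoft.Extensions.Caching.Hybrid and Microsoft.Extensions.DependencyInjection. No IDistributedCache is registered, so only L1 is active. LoadCategoryNameAsync and the durations are hypothetical. In ASP.NET Core you would call AddHybridCache on builder.Services and inject HybridCache.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.DependencyInjection;

var services = new ServiceCollection();

// No IDistributedCache is registered, so HybridCache runs with the in-process L1 tier only.
services.AddHybridCache(options =>
{
    options.DefaultEntryOptions = new HybridCacheEntryOptions
    {
        Expiration = TimeSpan.FromMinutes(30),         // overall lifetime, also used for L2
        LocalCacheExpiration = TimeSpan.FromMinutes(5) // L1 lifetime
    };
});

await using var provider = services.BuildServiceProvider();
var cache = provider.GetRequiredService<HybridCache>();

var factoryCalls = 0;

// Hypothetical loader standing in for a database or HTTP call.
async ValueTask<string> LoadCategoryNameAsync(int id, CancellationToken ct)
{
    factoryCalls++;
    await Task.Delay(50, ct);
    return $"Category {id}";
}

// First call misses and runs the factory; the second is served from L1.
var first = await cache.GetOrCreateAsync("category:7", ct => LoadCategoryNameAsync(7, ct));
var second = await cache.GetOrCreateAsync("category:7", ct => LoadCategoryNameAsync(7, ct));

Console.WriteLine($"{first} / {second} / factory calls: {factoryCalls}");
```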

Beyond two-tier retrieval, HybridCache adds several enterprise-relevant capabilities that neither predecessor offers cleanly:

Stampede protection: When many concurrent requests miss the cache for the same key, HybridCache coalesces them into a single factory execution. Only one request computes the value; the rest wait for it and receive the same result. This prevents thundering-herd failures on a cold start, after an entry expires, or after an invalidation.
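You can observe the coalescing with a small experiment: issue many concurrent requests for one missing key and count how often the factory runs. This sketch assumes references to Microsoft.Extensions.Caching.Hybrid and Microsoft.Extensions.DependencyInjection. The key, request count, and 200 ms delay are arbitrary. Because all 20 calls start while the first factory is still running, the factory is expected to run once.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.DependencyInjection;

var services = new ServiceCollection();
services.AddHybridCache();
await using var provider = services.BuildServiceProvider();
var cache = provider.GetRequiredService<HybridCache>();

var factoryCalls = 0;

// 20 concurrent requests for the same, currently missing key.
var tasks = Enumerable.Range(0, 20).Select(_ =>
    cache.GetOrCreateAsync("report:daily", async ct =>
    {
        Interlocked.Increment(ref factoryCalls); // thread-safe count of real executions
        await Task.Delay(200, ct);               // simulate a slow query
        return "report-body";
    }).AsTask());

var results = await Task.WhenAll(tasks);
Console.WriteLine($"results: {results.Length}, factory calls: {factoryCalls}");
```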

Tag-based invalidation: Cache entries can be associated with semantic tags. Calling RemoveByTagAsync("product-catalog") invalidates all entries carrying that tag. This is far more ergonomic than tracking and invalidating individual keys across a large codebase.
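Here is a sketch of tag-based invalidation. It assumes references to Microsoft.Extensions.Caching.Hybrid and Microsoft.Extensions.DependencyInjection and an L1-only setup. The key, tag, and LoadPage loader are illustrative. After RemoveByTagAsync, the next read for a tagged key is treated as a miss, so the loader is expected to run twice in total.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.DependencyInjection;

var services = new ServiceCollection();
services.AddHybridCache();
await using var provider = services.BuildServiceProvider();
var cache = provider.GetRequiredService<HybridCache>();

var loads = 0;
var catalogTags = new[] { "product-catalog" };

// Hypothetical loader for one catalog page.
ValueTask<string> LoadPage(CancellationToken ct)
{
    loads++;
    return ValueTask.FromResult("catalog page 1");
}

await cache.GetOrCreateAsync("catalog:page:1", ct => LoadPage(ct), tags: catalogTags); // miss
await cache.GetOrCreateAsync("catalog:page:1", ct => LoadPage(ct), tags: catalogTags); // hit

// Invalidate every entry carrying the tag without knowing the individual keys.
await cache.RemoveByTagAsync("product-catalog");

await cache.GetOrCreateAsync("catalog:page:1", ct => LoadPage(ct), tags: catalogTags); // recomputed
Console.WriteLine(loads);
```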

Incremental migration path: HybridCache is designed to replace most IMemoryCache and IDistributedCache usage. Call sites must move to its API, but that can happen incrementally. Once they have, adding L2 distribution later requires no further call-site changes.

Serialization governance: Serialization configuration is centralized at registration, not scattered across individual call sites.

The Enterprise Decision Matrix

The right choice depends on several variables that enterprise teams should evaluate explicitly.

Deployment Topology

If your API runs as a single instance (dev environments, small internal tools, certain Lambda/App Service scenarios where state is never shared), IMemoryCache is appropriate and introduces no unnecessary complexity. Once you deploy two or more instances behind a load balancer — which is the norm for any production SaaS or enterprise API — IMemoryCache alone creates cache inconsistency that is often silent and hard to diagnose.

For multi-instance deployments, the decision narrows to IDistributedCache (for full consistency with network overhead) or HybridCache (for consistency with in-process read performance). In most cases, HybridCache is the better choice unless your L1 TTL would create unacceptable consistency windows.

Consistency Tolerance

The L1 tier in HybridCache introduces a consistency window of up to its TTL. During that window, an instance may keep serving its local copy even after another instance has updated or removed the value in L2. For catalog data, pricing data, and configuration data where a few minutes of staleness is acceptable, this rarely matters. For financial ledger data, user permissions, or anything where real-time accuracy is a hard requirement, the L1 TTL must be zero or near-zero. That effectively reduces HybridCache to IDistributedCache behavior.
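Per entry, HybridCacheEntryOptions controls the L1 window. A short LocalCacheExpiration keeps it near zero, and HybridCacheEntryFlags.DisableLocalCache bypasses L1 for that entry altogether. This sketch groups the two as named policies. The class name, policy names, and durations are hypothetical.

```csharp
using System;
using Microsoft.Extensions.Caching.Hybrid;

// Hypothetical policies for data categories that cannot tolerate L1 staleness.
public static class ConsistencyPolicies
{
    // Near-zero L1 window: each instance re-reads L2 after a couple of seconds at most.
    public static readonly HybridCacheEntryOptions NearRealTime = new()
    {
        Expiration = TimeSpan.FromMinutes(1),
        LocalCacheExpiration = TimeSpan.FromSeconds(2)
    };

    // No L1 at all: reads and writes skip the local tier (IDistributedCache-like behavior).
    // If no IDistributedCache is registered, entries using this policy are not cached anywhere.
    public static readonly HybridCacheEntryOptions NoLocalCopy = new()
    {
        Expiration = TimeSpan.FromMinutes(1),
        Flags = HybridCacheEntryFlags.DisableLocalCache
    };
}

// Usage (hypothetical names):
// var perms = await cache.GetOrCreateAsync($"perm:{userId}",
//     ct => LoadPermissionsAsync(userId, ct), ConsistencyPolicies.NoLocalCopy,
//     cancellationToken: token);
```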

Understanding your consistency tolerance per data category is the most important governance decision in a caching strategy.

Throughput and Latency Profile

At extremely high request rates (hundreds of thousands of requests per second against a small set of cache keys), the serialization and network round-trip cost of IDistributedCache becomes a measurable contributor to latency. HybridCache with a generous L1 TTL absorbs the vast majority of reads in-process, making the distributed L2 store a fallback rather than the hot path. If your profiling shows cache reads contributing materially to p99 latency, HybridCache is the natural architectural response.

For APIs at more modest scale — a few thousand requests per minute — the performance difference between IDistributedCache and HybridCache is unlikely to be observable. In those cases, HybridCache is still preferable for its stampede protection and invalidation ergonomics, but the performance argument is secondary.

Team and Codebase Maturity

IDistributedCache is deceptively low-level for general codebase use. Teams that adopt it without a shared abstraction layer tend to accumulate inconsistent serialization patterns, missed expiry configurations, and duplicated cache-miss handling. If your team is standardizing a new caching approach, HybridCache provides a higher-level API that reduces boilerplate and enforces consistent serialization at registration time.

Migration Path for Existing Codebases

Teams with IMemoryCache usage still need to update call sites, since HybridCache is a different API. The change is mostly mechanical: IMemoryCache GetOrCreate/GetOrCreateAsync calls map onto HybridCache.GetOrCreateAsync. Register HybridCache via AddHybridCache and, with no distributed cache configured, the L1 behavior is equivalent. Later, add a Redis or SQL Server IDistributedCache implementation, and HybridCache automatically begins using it as L2 without further code changes at call sites.
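Here is a sketch of the two-step registration in an ASP.NET Core Program.cs. It assumes the Web SDK with implicit usings, plus the Microsoft.Extensions.Caching.StackExchangeRedis package for step 2. The "redis" connection-string name is illustrative. HybridCache uses any registered IDistributedCache as its L2, so adding the Redis registration is the only change needed to enable the second tier.

```csharp
// Program.cs of an ASP.NET Core app (Web SDK, implicit usings).
var builder = WebApplication.CreateBuilder(args);

// Step 1: L1-only HybridCache. Call sites inject HybridCache and use GetOrCreateAsync.
builder.Services.AddHybridCache();

// Step 2, added later: the registered IDistributedCache becomes HybridCache's L2.
// Requires Microsoft.Extensions.Caching.StackExchangeRedis; "redis" is an illustrative name.
builder.Services.AddStackExchangeRedisCache(options =>
{
    options.Configuration = builder.Configuration.GetConnectionString("redis");
});

var app = builder.Build();
app.Run();
```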

Teams with IDistributedCache usage face a slightly higher migration cost because IDistributedCache and HybridCache have different calling conventions. A single HybridCache GetOrCreateAsync call replaces the GetAsync/SetAsync call pair of IDistributedCache, along with the serialization code around it. Migration can be done incrementally, subsystem by subsystem, without a big-bang rewrite.
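Here is a before/after sketch of that change for one hypothetical price lookup. It assumes a console app with the Microsoft.Extensions.Caching.Hybrid and Microsoft.Extensions.DependencyInjection packages. MemoryDistributedCache stands in for the existing distributed store and is deliberately kept out of DI, so the HybridCache path runs L1-only. LoadPriceAsync, the key format, and the expiry are illustrative.

```csharp
using System;
using System.Globalization;
using System.Text;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.Caching.Memory;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Options;

// Existing store (stand-in for Redis), not registered in DI.
IDistributedCache distributed = new MemoryDistributedCache(
    Options.Create(new MemoryDistributedCacheOptions()));

var services = new ServiceCollection();
services.AddHybridCache();
await using var provider = services.BuildServiceProvider();
var hybrid = provider.GetRequiredService<HybridCache>();

// Hypothetical data source.
ValueTask<decimal> LoadPriceAsync(int productId, CancellationToken ct) =>
    ValueTask.FromResult(productId * 1.5m);

// BEFORE: GetAsync/SetAsync pair with hand-rolled serialization and expiry.
async Task<decimal> GetPriceBeforeAsync(int productId, CancellationToken ct = default)
{
    var key = $"price:{productId}";
    var bytes = await distributed.GetAsync(key, ct);
    if (bytes is not null)
        return decimal.Parse(Encoding.UTF8.GetString(bytes), CultureInfo.InvariantCulture);

    var price = await LoadPriceAsync(productId, ct);
    await distributed.SetAsync(key,
        Encoding.UTF8.GetBytes(price.ToString(CultureInfo.InvariantCulture)),
        new DistributedCacheEntryOptions { AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(10) },
        ct);
    return price;
}

// AFTER: one call; serialization, L1/L2 handling and stampede protection are HybridCache's job.
ValueTask<decimal> GetPriceAfterAsync(int productId, CancellationToken ct = default) =>
    hybrid.GetOrCreateAsync(
        $"price:{productId}",
        cancel => LoadPriceAsync(productId, cancel),
        new HybridCacheEntryOptions { Expiration = TimeSpan.FromMinutes(10) },
        cancellationToken: ct);

var before = await GetPriceBeforeAsync(4);
var after = await GetPriceAfterAsync(4);
Console.WriteLine($"{before} {after}");
```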

The stampede protection benefit is realized immediately upon migration — no additional configuration required.

Governance Decisions Enterprise Teams Should Standardize

A caching strategy is only as good as the team policy that enforces it. Several decisions benefit from explicit team-level standardization rather than being left to individual developers.

Default abstraction: Decide which caching abstraction is the default for new code. In multi-instance deployments on .NET 9 or later, HybridCache should be the default unless there is a specific reason to deviate.

L1 and L2 TTL standards: Define TTL tiers by data category. Reference data (product categories, configuration flags) might have L1 TTLs of 5 minutes and L2 TTLs of 30 minutes. User-session-adjacent data might have L1 TTLs of 30 seconds. Standardizing these prevents the common failure mode of every developer setting TTLs arbitrarily.
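One way to standardize tiers is to define them once as shared HybridCacheEntryOptions instances that call sites reference by name. In this sketch the names are hypothetical. The reference-data values mirror the text, and the L2 TTL for the session-adjacent tier is an assumption.

```csharp
using System;
using Microsoft.Extensions.Caching.Hybrid;

// Hypothetical team-wide TTL tiers: call sites pick a tier by name instead of inventing durations.
public static class CacheTiers
{
    // Reference data (product categories, configuration flags).
    public static readonly HybridCacheEntryOptions ReferenceData = new()
    {
        LocalCacheExpiration = TimeSpan.FromMinutes(5), // L1
        Expiration = TimeSpan.FromMinutes(30)           // overall lifetime / L2
    };

    // User-session-adjacent data: short L1 window.
    public static readonly HybridCacheEntryOptions SessionAdjacent = new()
    {
        LocalCacheExpiration = TimeSpan.FromSeconds(30),
        Expiration = TimeSpan.FromMinutes(5) // assumption: L2 TTL not specified in the text
    };
}

// Usage (hypothetical names):
// var categories = await cache.GetOrCreateAsync("category:all",
//     ct => LoadCategoriesAsync(ct), CacheTiers.ReferenceData);
```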

Tag naming conventions: If your team uses HybridCache tag-based invalidation, define a naming convention for tags at the domain-aggregate level. This prevents fragmentation where tags are invented ad hoc and invalidation becomes unreliable.

Stampede protection awareness: Teams should understand that HybridCache stampede protection works within a single instance. Across instances, the L2 layer provides consistency but not stampede protection. For extremely hot keys in cold-start scenarios, a warm-up strategy remains advisable.

Cache key governance: Define a cache key structure convention (e.g., {aggregate}:{id}:{version}) and enforce it through a shared key-builder utility. Unstructured cache keys are a common source of stale-data bugs and naming collisions.
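Here is a minimal sketch of such a key builder for the {aggregate}:{id}:{version} convention. The class name, the lowercase normalization, and the validation rules are assumptions, not a prescribed standard. Rejecting ':' inside segments is what keeps keys unambiguous.

```csharp
using System;

Console.WriteLine(CacheKeys.For("Product", "42", 3)); // product:42:3

// Hypothetical shared key builder enforcing {aggregate}:{id}:{version}.
public static class CacheKeys
{
    private const char Separator = ':';

    public static string For(string aggregate, string id, int version)
    {
        Validate(aggregate, nameof(aggregate));
        Validate(id, nameof(id));
        if (version < 0)
            throw new ArgumentOutOfRangeException(nameof(version), "Version must be non-negative.");

        // Normalizing case keeps "Product:42:3" and "product:42:3" from becoming different keys.
        return $"{aggregate.ToLowerInvariant()}{Separator}{id}{Separator}{version}";
    }

    private static void Validate(string part, string paramName)
    {
        // A separator inside a segment would make keys ambiguous ("a:b" + "c" vs "a" + "b:c").
        if (string.IsNullOrWhiteSpace(part) || part.Contains(Separator))
            throw new ArgumentException(
                $"Key segment must be non-empty and must not contain '{Separator}'.", paramName);
    }
}
```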

When to Retain IMemoryCache or IDistributedCache Directly

HybridCache does not eliminate every use case for the older abstractions.

IMemoryCache remains appropriate for lightweight, single-server scenarios where the added dependency of HybridCache is not justified — small internal tools, CLI utilities, or development-only services.

IDistributedCache remains the correct choice when you need fine-grained control over serialization per entry, when your consistency requirements preclude any L1 TTL, or when you are building a library that needs to honor the DI-registered distributed cache implementation without taking a HybridCache dependency.

In a well-structured enterprise codebase, all three abstractions may coexist: HybridCache for high-throughput domain data, IDistributedCache for session state, and IMemoryCache for process-local computed values. The key is that each choice is intentional and documented, not incidental.

FAQ

Is HybridCache a drop-in replacement for IMemoryCache? Functionally, largely yes. At the call site, no. When no L2 (distributed) implementation is registered, HybridCache provides comparable in-process caching. The call-site API differs: HybridCache.GetOrCreateAsync replaces the IMemoryCache GetOrCreate/GetOrCreateAsync pattern. Even so, behavior in a single-instance deployment is closely equivalent, with the added benefit of stampede protection.

Does HybridCache require Redis? No. HybridCache works without any IDistributedCache registration — it operates purely as an in-process cache in that configuration. Adding a Redis IDistributedCache implementation enables the L2 layer automatically. The choice to add a distributed backing store is decoupled from the choice to use the HybridCache API.

What happens to the L1 cache during a rolling deployment? Each new instance starts with an empty L1 cache and fills it from L2 on demand. Because the L2 store (Redis, for example) persists across instance restarts, a new instance warms up from L2 rather than from the underlying data source: any entry still live in L2 is copied into L1 on first access. This is a significant operational improvement over IMemoryCache-only deployments, where every new instance starts completely cold.

How does stampede protection work across multiple instances? Stampede protection in HybridCache is per-instance. Within a single instance, multiple concurrent requests for the same missing cache key are coalesced into one factory execution. Across instances, each instance independently handles its own cold-start misses. For extremely hot keys on a multi-instance cold start, consider pre-warming via a background service or a dedicated warm-up endpoint, particularly for keys with expensive factory computations.
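Here is a sketch of the background-service approach. It assumes an ASP.NET Core app with HybridCache registered. CacheWarmupService, the hot keys, and LoadCategoryAsync are hypothetical. For warm-up to help, it must use the same keys and an equivalent factory as the real call sites. On an instance that starts after L2 is already populated, each call simply copies the existing value into that instance's L1.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.Hosting;

// Hypothetical warm-up service. Register with:
// builder.Services.AddHostedService<CacheWarmupService>();
public sealed class CacheWarmupService : BackgroundService
{
    // Assumption: the hot keys are known ahead of time.
    private static readonly string[] HotCategoryIds = { "electronics", "books" };

    private readonly HybridCache _cache;

    public CacheWarmupService(HybridCache cache) => _cache = cache;

    protected override async Task ExecuteAsync(CancellationToken stoppingToken)
    {
        foreach (var id in HotCategoryIds)
        {
            // L2 hit: value is copied into this instance's L1.
            // L2 miss: the factory runs once for this instance and fills both tiers.
            await _cache.GetOrCreateAsync(
                $"category:{id}",
                ct => LoadCategoryAsync(id, ct),
                cancellationToken: stoppingToken);
        }
    }

    // Stand-in for the real, expensive factory (database query, HTTP call).
    private static async ValueTask<string> LoadCategoryAsync(string id, CancellationToken ct)
    {
        await Task.Delay(100, ct);
        return $"category-data:{id}";
    }
}
```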

Should we migrate IDistributedCache usage to HybridCache in a running production system? Yes, but incrementally. Start by adopting HybridCache for new cache call sites. Then migrate existing IDistributedCache call sites one subsystem at a time, beginning with those that have the highest read volume or the most complex stampede risk. Avoid a big-bang migration that introduces a large diff with no functional safety net. Validate that L1 TTLs are set appropriately for each migrated category before moving on.

Is there a performance cost to using HybridCache over IMemoryCache for single-server apps? Usually a small one. HybridCache adds indirection and coalescing logic on the hot path. Unless a cached type is sealed and marked [ImmutableObject(true)], HybridCache also treats the value as mutable and may give each caller its own deserialized copy rather than a shared reference. For most workloads this is negligible compared to the cost of typical cache factory functions (database queries, HTTP calls, complex calculations). For single-server apps, the stampede protection and API ergonomics of HybridCache usually outweigh it.

How is HybridCache serialization configured? Serialization is configured at registration time on the builder returned by AddHybridCache (for example, AddSerializer or AddSerializerFactory), not at individual call sites. By default, HybridCache handles string and byte[] values directly and uses System.Text.Json for other types. Teams can substitute a custom serializer at registration (e.g., MessagePack for lower serialization overhead on high-throughput paths), and that configuration applies uniformly across all call sites. This is a significant governance improvement over IDistributedCache, where serialization is typically handled ad hoc.
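Here is a sketch of a per-type serializer and its registration, assuming the Microsoft.Extensions.Caching.Hybrid package. The Product record and ProductSerializer are hypothetical. System.Text.Json is used only to keep the sketch dependency-free; a MessagePack-based serializer would implement the same IHybridCacheSerializer<T> interface.

```csharp
using System;
using System.Buffers;
using System.Text.Json;
using Microsoft.Extensions.Caching.Hybrid;

// Round-trip check that calls the serializer directly, bypassing DI.
var serializer = new ProductSerializer();
var buffer = new ArrayBufferWriter<byte>();
serializer.Serialize(new Product(1, "Widget"), buffer);
var roundTripped = serializer.Deserialize(new ReadOnlySequence<byte>(buffer.WrittenMemory));
Console.WriteLine(roundTripped); // Product { Id = 1, Name = Widget }

// Registration in an app (one line, applies to every call site caching Product):
// builder.Services.AddHybridCache().AddSerializer<Product, ProductSerializer>();

public sealed record Product(int Id, string Name);

// Hypothetical per-type serializer plugged into HybridCache at registration.
public sealed class ProductSerializer : IHybridCacheSerializer<Product>
{
    public Product Deserialize(ReadOnlySequence<byte> source)
    {
        var reader = new Utf8JsonReader(source);
        return JsonSerializer.Deserialize<Product>(ref reader)!;
    }

    public void Serialize(Product value, IBufferWriter<byte> target)
    {
        using var writer = new Utf8JsonWriter(target); // flushed to target on dispose
        JsonSerializer.Serialize(writer, value);
    }
}
```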