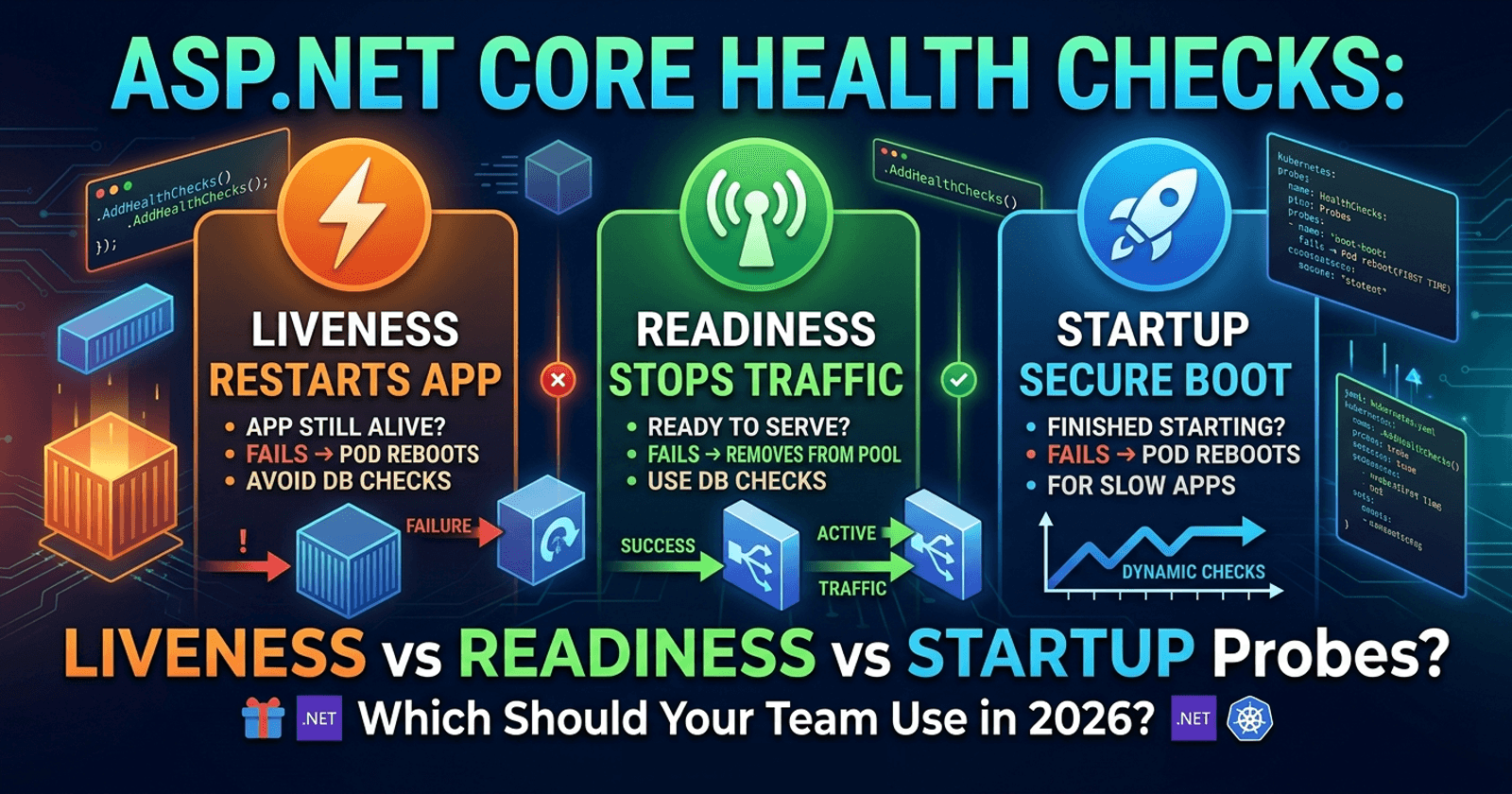

ASP.NET Core Health Checks: Liveness vs Readiness vs Startup Probes in .NET — Which Should Your Team Use in 2026?

ASP.NET Core health checks are one of the most critical yet under-configured aspects of production .NET APIs. When you deploy to Kubernetes, Azure Container Apps, or any orchestrated environment, getting your liveness, readiness, and startup probes right is the difference between zero-downtime deployments and cascading restarts that take down your service at 2 AM. Most teams wire up a single /health endpoint and call it done — but that one-size-fits-all approach quietly causes real incidents. This article breaks down each probe type, when to use each, how they interact, and what your team should standardise on for production workloads.


Understanding the Three Health Check Probe Types

Before comparing them side-by-side, it helps to understand what each probe is asking the orchestrator to do when it fails:

- Liveness probe — "Is the app still alive?" A failure triggers a container restart.

- Readiness probe — "Is the app ready to serve traffic?" A failure removes the instance from the load balancer pool without restarting it.

- Startup probe — "Has the app finished starting up?" A failure triggers a container restart only during the initial boot window. Once it passes, the startup probe is never called again.

These three probes serve fundamentally different purposes. Conflating them into a single endpoint causes the most common deployment failures that .NET teams encounter.

What Is the Difference Between Liveness, Readiness, and Startup Probes?

Liveness checks whether the process is still functioning at a basic level — it hasn't deadlocked, crashed into an unrecoverable state, or gone completely silent. In ASP.NET Core terms, this means the HTTP pipeline can respond and the application host is alive. Liveness checks should be lightweight and should almost never include dependency checks (database connectivity, downstream APIs). If your liveness check queries the database and the database is down, Kubernetes will restart your pods — not your database. That amplifies the problem rather than solving it.

Readiness checks whether the app can handle incoming requests right now. It is allowed to include dependency checks because a readiness failure only removes the instance from the load balancer rotation. If your database is temporarily unavailable, a readiness failure gracefully stops new traffic from reaching that instance without restarting the application. This is especially valuable during rolling deployments where some instances may have already migrated to a new schema while others haven't.

Startup checks are a guard against slow-starting applications being killed prematurely by liveness probes. Without a startup probe, you configure liveness with a large initialDelaySeconds — which is a rough estimate that varies across environments and hardware. With a startup probe, you configure a generous boot window (say, 90 seconds across 30 attempts every 3 seconds), and once the startup probe succeeds, the normal liveness probe takes over. This is the right model for ASP.NET Core apps that perform background data loading, warm up caches, or connect to external services during IHostedService.StartAsync.

Side-by-Side Comparison

| Dimension | Liveness | Readiness | Startup |
|---|---|---|---|
| Primary purpose | Detect unrecoverable app state | Detect temporary unreadiness | Allow slow-start apps to boot safely |
| On failure | Container restarts | Instance removed from load balancer | Container restarts |
| Dependency checks? | ❌ No, avoid | ✅ Yes, appropriate | ⚠️ Minimal, just enough to confirm boot |
| Polling lifecycle | Continuous after startup | Continuous after startup | Only during boot window; stops once passed |
| Response time requirement | < 1 second | < 5 seconds typical | Flexible; failure window is wide |
| Kubernetes probe type | livenessProbe | readinessProbe | startupProbe |
| ASP.NET Core tag | Custom tag or named check | Named/tagged check | Named check |
| Main risk if misconfigured | Restart storms on dependency failure | Traffic routed to unhealthy instances | App killed before it finishes starting |

When to Use Liveness Checks

Use liveness for self-contained state checks only:

- Is the HTTP server responding?

- Is the thread pool saturated (deadlock detection)?

- Are memory allocations within acceptable bounds?

- Are critical background services (IHostedService) still running?

Do not include external service checks in liveness. If your Redis cache goes down and your liveness check hits Redis, every pod restarts — creating a thundering-herd effect when Redis comes back up. For external dependencies, use readiness instead.

A common ASP.NET Core pattern is to register a liveness check with the "live" tag and map it to /health/live. The built-in HealthCheckService supports filtering by tag so you can isolate probe endpoints cleanly.
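As one way to cover the "are critical background services still running?" case without touching any external dependency, a worker can record a heartbeat in memory and a liveness check can report how stale it is. This is a minimal sketch: WorkerHeartbeat and WorkerLivenessCheck are hypothetical names, and the staleness threshold is whatever your team decides, not a value the framework prescribes.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Diagnostics.HealthChecks;

// Hypothetical shared state: a background worker calls Beat() on each loop iteration.
public sealed class WorkerHeartbeat
{
    private long _lastBeatTicks = DateTimeOffset.UtcNow.UtcTicks;

    public void Beat() =>
        Interlocked.Exchange(ref _lastBeatTicks, DateTimeOffset.UtcNow.UtcTicks);

    // Time elapsed between the last recorded beat and the supplied instant.
    public TimeSpan Age(DateTimeOffset now) =>
        now - new DateTimeOffset(Interlocked.Read(ref _lastBeatTicks), TimeSpan.Zero);
}

// Liveness check with no external dependencies: it only reads in-process state.
public sealed class WorkerLivenessCheck : IHealthCheck
{
    private readonly WorkerHeartbeat _heartbeat;
    private readonly TimeSpan _maxAge;

    public WorkerLivenessCheck(WorkerHeartbeat heartbeat, TimeSpan maxAge)
    {
        _heartbeat = heartbeat;
        _maxAge = maxAge;
    }

    public Task<HealthCheckResult> CheckHealthAsync(
        HealthCheckContext context, CancellationToken cancellationToken = default)
    {
        var age = _heartbeat.Age(DateTimeOffset.UtcNow);
        var result = age <= _maxAge
            ? HealthCheckResult.Healthy()
            : HealthCheckResult.Unhealthy(
                $"Worker has not reported for {age.TotalSeconds:F0}s.");
        return Task.FromResult(result);
    }
}
```

Registered with something like `builder.Services.AddHealthChecks().AddCheck("worker", new WorkerLivenessCheck(heartbeat, TimeSpan.FromSeconds(30)), tags: new[] { "live" })`, where the 30 seconds is an assumed value, the check runs only on the endpoint that filters by the "live" tag.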

When to Use Readiness Checks

Use readiness for anything that makes the instance unable to serve requests:

- Database connectivity — can the app reach its primary data store?

- Message broker connectivity — is the Kafka/RabbitMQ connection healthy?

- Downstream dependency availability: are critical downstream APIs reachable?

- Warming state — has an in-memory cache been populated enough to serve requests?

- Circuit breaker state — if you use Polly circuit breakers, a half-open or open state could signal temporary unreadiness

The key mental model: readiness failure should be self-healing. When the dependency recovers, the pod becomes ready again automatically without a restart.

For teams using AspNetCore.Diagnostics.HealthChecks (the popular Xabaril package), this is where the library shines — it provides pre-built health checks for SQL Server, PostgreSQL, Redis, RabbitMQ, and dozens of other dependencies that map naturally to readiness checks.
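If you'd rather not take a package dependency, or need to probe something the package doesn't cover, a hand-rolled readiness check is short. The sketch below is illustrative only: DependencyReadinessCheck and its probe delegate are hypothetical, and the timeout only takes effect if the probe honours the cancellation token it is given.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Diagnostics.HealthChecks;

// Readiness check that wraps any "can I reach my dependency?" probe.
public sealed class DependencyReadinessCheck : IHealthCheck
{
    private readonly Func<CancellationToken, Task<bool>> _probe;
    private readonly TimeSpan _timeout;

    public DependencyReadinessCheck(
        Func<CancellationToken, Task<bool>> probe, TimeSpan timeout)
    {
        _probe = probe;
        _timeout = timeout;
    }

    public async Task<HealthCheckResult> CheckHealthAsync(
        HealthCheckContext context, CancellationToken cancellationToken = default)
    {
        // Cancel the probe's token after the timeout so a slow dependency
        // cannot hold the readiness endpoint open indefinitely.
        using var cts = CancellationTokenSource.CreateLinkedTokenSource(cancellationToken);
        cts.CancelAfter(_timeout);

        try
        {
            return await _probe(cts.Token)
                ? HealthCheckResult.Healthy()
                : HealthCheckResult.Unhealthy("Dependency probe reported failure.");
        }
        catch (Exception ex)
        {
            // Any exception, including cancellation on timeout, means "not ready".
            return HealthCheckResult.Unhealthy("Dependency probe threw or timed out.", ex);
        }
    }
}
```

Because the check returns Unhealthy rather than throwing, the instance drops out of rotation while the dependency is down and rejoins on the first successful probe, with no restart involved.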

When to Use Startup Checks

Startup probes solve a very specific problem: your app takes longer to fully initialise than your liveness probe's tolerance. This happens when:

- IHostedService.StartAsync implementations load data from external sources

- The application warms up ML models or large in-memory caches

- Background jobs pre-populate lookup tables before the API can serve accurate responses

- Your container image is large and takes time to initialise its runtime

Without a startup probe, the typical workaround is initialDelaySeconds: 30 on the liveness probe — a hardcoded guess that will be too short on slow hardware and unnecessarily long everywhere else. The startup probe replaces this with a dynamic window: keep checking every 3 seconds for up to 30 attempts (90 seconds total), and only hand off to liveness once the app confirms it's done starting.

For ASP.NET Core specifically, startup checks should verify that the IHostApplicationLifetime.ApplicationStarted token has fired and that any critical hosted services have completed their boot sequence.
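A startup check along those lines might look like the following sketch. It assumes a hypothetical WarmupState singleton that your own warm-up code marks as completed; that extra flag matters because work started from a BackgroundService can still be running after ApplicationStarted has fired.

```csharp
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Diagnostics.HealthChecks;
using Microsoft.Extensions.Hosting;

// Hypothetical singleton flag, set by whichever service performs the warm-up.
public sealed class WarmupState
{
    private volatile bool _completed;
    public bool Completed => _completed;
    public void MarkCompleted() => _completed = true;
}

public sealed class StartupHealthCheck : IHealthCheck
{
    private readonly IHostApplicationLifetime _lifetime;
    private readonly WarmupState _warmup;

    public StartupHealthCheck(IHostApplicationLifetime lifetime, WarmupState warmup)
    {
        _lifetime = lifetime;
        _warmup = warmup;
    }

    public Task<HealthCheckResult> CheckHealthAsync(
        HealthCheckContext context, CancellationToken cancellationToken = default)
    {
        // ApplicationStarted is a CancellationToken that is signalled once the
        // host has finished starting.
        var hostStarted = _lifetime.ApplicationStarted.IsCancellationRequested;

        var result = hostStarted && _warmup.Completed
            ? HealthCheckResult.Healthy("Startup complete.")
            : HealthCheckResult.Unhealthy("Startup still in progress.");
        return Task.FromResult(result);
    }
}
```

WarmupState would need to be registered as a singleton so the warm-up service and the health check see the same instance.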

The Dangerous Anti-Pattern: One Endpoint for Everything

The single /health endpoint pattern is the most common health check mistake in .NET teams:

GET /health → Checks DB + cache + downstream APIs

When this endpoint is mapped to the liveness probe:

- Database has a transient timeout

- Health check returns Unhealthy

- Kubernetes interprets this as a dead container

- All pods restart simultaneously

- Your database receives a connection storm from all pods reconnecting at once

- This triggers another timeout

- All pods restart again

- Repeat until the database falls over entirely

This is a production outage caused by a health check — not by the original transient fault.

The correct architecture separates probe concerns into dedicated endpoints with appropriate tag filtering:

- /health/live → liveness checks only (no external dependencies)

- /health/ready → readiness checks (dependencies that affect request handling)

- /health/startup → startup completion checks (only polled during boot)
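The three endpoints can be wired up with tag filtering roughly as follows. This is a sketch under assumptions: the tag names "live", "ready" and "startup" are a convention chosen here, and ProbeEndpoints is a hypothetical helper class, not part of the framework.

```csharp
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Diagnostics.HealthChecks;
using Microsoft.AspNetCore.Routing;

public static class ProbeEndpoints
{
    // Maps one endpoint per probe; each endpoint runs only the checks
    // registered with the matching tag.
    public static void MapProbeEndpoints(this IEndpointRouteBuilder endpoints)
    {
        endpoints.MapHealthChecks("/health/live", ForTag("live"));
        endpoints.MapHealthChecks("/health/ready", ForTag("ready"));
        endpoints.MapHealthChecks("/health/startup", ForTag("startup"));
    }

    // Builds options whose predicate selects checks carrying the given tag.
    public static HealthCheckOptions ForTag(string tag) => new()
    {
        Predicate = registration => registration.Tags.Contains(tag)
    };
}
```

Calling `app.MapProbeEndpoints()` once `builder.Services.AddHealthChecks()` has registered the tagged checks gives each Kubernetes probe its own URL, so a failing dependency check can no longer be mistaken for a dead process.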

Recommendation for Enterprise .NET Teams

For teams running ASP.NET Core in containerised environments, the recommended standard is:

Always implement all three probes with separated endpoints. The additional setup is minimal and the operational benefit is substantial.

Prioritise readiness probe accuracy over simplicity. A thorough readiness check that accurately reflects whether the instance can serve requests is more valuable than a simple 200 OK. Use the AspNetCore.Diagnostics.HealthChecks package for production-ready checks against your actual dependencies.

Keep liveness checks dependency-free as a hard rule. Make it a team standard, document it in your ADRs, and enforce it in code reviews. A liveness check that queries an external service is a latent production incident.

Use startup probes instead of initialDelaySeconds for any service with a non-trivial boot sequence. This makes deployments more reliable across environments without environment-specific configuration.

Surface health check dashboards internally. Health check UI (available via the AspNetCore.HealthChecks.UI package) gives your ops team a real-time view of dependency health across all services — a significant upgrade over log-diving when an incident occurs.

Integrate with OpenTelemetry. Health check results can be published as metrics, for example from an IHealthCheckPublisher that records each report, and exported through the OpenTelemetry SDK on ASP.NET Core 10, enabling alerting and dashboarding through your existing observability stack.


FAQ

What happens if I only configure a liveness probe and skip readiness?

Traffic continues to be routed to your instance even during dependency failures, database connection drops, or startup warm-up periods. Users receive errors until the instance either recovers or gets restarted. For high-availability workloads, skipping readiness is equivalent to removing graceful degradation from your deployment strategy.

Can I use a single health check registered with multiple tags?

Yes. ASP.NET Core allows a health check to be registered with multiple tags, and you can filter endpoints by tag. However, in most cases it's cleaner to register separate checks for separate concerns (a lightweight self-check for liveness and a dependency-aware check for readiness) rather than reusing the same check with multiple tags.

Should startup probes check external dependencies?

Minimally. The startup probe's job is to confirm the application has finished its internal boot sequence — not to certify that all dependencies are healthy. If your app successfully completes StartAsync but the database is temporarily unavailable, the readiness probe should handle that, not the startup probe. Conflating the two delays startup unnecessarily when dependencies are slow but not broken.

How do I configure health check probes in Azure Container Apps?

Azure Container Apps supports liveness, readiness, and startup probes, modelled on the Kubernetes probe semantics. You configure them under the probes section in your Container Apps configuration, pointing to your dedicated /health/live, /health/ready, and /health/startup endpoints. The Microsoft documentation for Azure Container Apps health probes provides the full configuration schema.

What is the right polling interval for each probe type?

A reasonable starting point: liveness every 15–30 seconds with a failure threshold of 3, readiness every 10 seconds with a failure threshold of 3, and startup every 3 seconds with a failure threshold of 30 (giving 90 seconds to complete boot). Adjust based on your app's observed startup time and the cost of unnecessary restarts in your environment.
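To see what those numbers imply, multiply the interval by the failure threshold: that is roughly how long a probe must keep failing before the orchestrator acts, ignoring per-attempt timeouts and any initial delay. A small sketch of the arithmetic, where ProbeTiming and ProbeDefaults are hypothetical helper types whose properties are named after the Kubernetes periodSeconds and failureThreshold fields:

```csharp
// Hypothetical helper for reasoning about probe settings; not a Kubernetes API.
public sealed record ProbeTiming(int PeriodSeconds, int FailureThreshold)
{
    // Approximate seconds of continuous failure before the orchestrator reacts.
    public int FailureWindowSeconds => PeriodSeconds * FailureThreshold;
}

public static class ProbeDefaults
{
    // Starting-point values taken from the answer above.
    public static readonly ProbeTiming Startup = new(3, 30);       // 90 s boot budget
    public static readonly ProbeTiming Readiness = new(10, 3);     // ~30 s to leave rotation
    public static readonly ProbeTiming LivenessFast = new(15, 3);  // ~45 s to restart
    public static readonly ProbeTiming LivenessSlow = new(30, 3);  // ~90 s to restart
}
```

Read this way, the suggested liveness settings mean a hung process keeps running for roughly 45 to 90 seconds before it is restarted, which is worth weighing against how disruptive a false-positive restart would be.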

Does the AspNetCore.Diagnostics.HealthChecks package work with ASP.NET Core 10?

Yes. The Xabaril AspNetCore.Diagnostics.HealthChecks package (available on GitHub and NuGet) maintains compatibility with current ASP.NET Core versions. It provides pre-built, production-tested health checks for over 100 external services and is the standard choice for enterprise teams that need database, message broker, and storage health checks without writing them from scratch.

What is the difference between HealthStatus.Degraded and HealthStatus.Unhealthy in ASP.NET Core?

Unhealthy means the check has failed: the component is not functioning. Degraded means the component is functioning but at reduced capacity or with elevated risk. In practice, Degraded is a useful signal for human alerting and dashboards but does not necessarily warrant taking an instance out of rotation. By default, the ASP.NET Core health check middleware returns 200 for Degraded and 503 for Unhealthy, so Degraded does not fail a probe unless you override the status code mapping. A common configuration treats both Degraded and Unhealthy as failures for readiness, but only Unhealthy as a failure for liveness.
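One way to express that split is through HealthCheckOptions.ResultStatusCodes, which maps each HealthStatus to an HTTP status code. The middleware's default mapping returns 200 for Degraded, so the readiness endpoint needs an explicit override. A sketch, assuming the "live" and "ready" tag convention; ProbeStatusMapping is a hypothetical helper:

```csharp
using Microsoft.AspNetCore.Diagnostics.HealthChecks;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.Diagnostics.HealthChecks;

public static class ProbeStatusMapping
{
    // Readiness: Degraded also returns 503, so the instance leaves rotation.
    public static HealthCheckOptions StrictReadiness() => new()
    {
        Predicate = registration => registration.Tags.Contains("ready"),
        ResultStatusCodes =
        {
            [HealthStatus.Healthy] = StatusCodes.Status200OK,
            [HealthStatus.Degraded] = StatusCodes.Status503ServiceUnavailable,
            [HealthStatus.Unhealthy] = StatusCodes.Status503ServiceUnavailable
        }
    };

    // Liveness: keeps the default mapping, where Healthy and Degraded
    // return 200 and only Unhealthy returns 503.
    public static HealthCheckOptions LenientLiveness() => new()
    {
        Predicate = registration => registration.Tags.Contains("live")
    };
}
```

These options would be passed to MapHealthChecks for the /health/ready and /health/live endpoints respectively.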