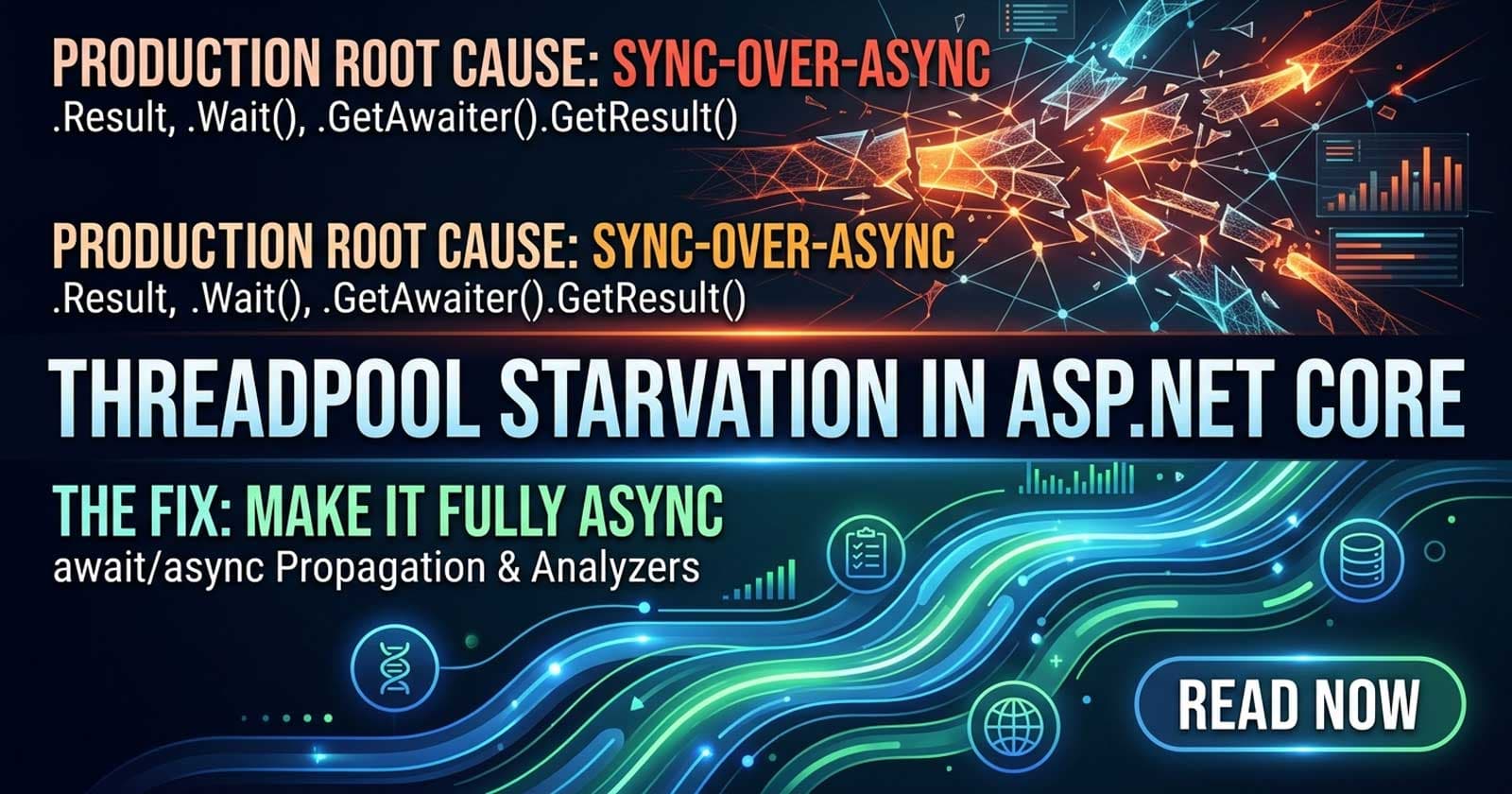

ThreadPool Starvation in ASP.NET Core: Production Root Cause and Fix

ThreadPool starvation is one of the most deceptive failure modes in ASP.NET Core — the application appears healthy under low load but becomes unresponsive or extremely slow under moderate traffic, often with no obvious exception in the logs. The root cause, in the vast majority of cases, is sync-over-async code: calling .Result, .Wait(), or .GetAwaiter().GetResult() on Task-returning methods inside code paths that run on ThreadPool threads. If you want to go deeper with working implementations and real-world diagnosis scenarios, the full production codebase is available on Patreon — including annotated examples of the patterns covered here, wired into a complete ASP.NET Core API.

Understanding how sync-over-async causes starvation is particularly valuable in the context of background services and long-running tasks. Chapter 12 of the Zero to Production course walks through IHostedService, BackgroundService, and System.Threading.Channels inside a full production codebase — showing exactly how improper blocking patterns ripple through the request pipeline and what the async-safe alternatives look like in practice.

This article covers the problem description, why it happens mechanically, how to diagnose it in production, the fix, and how to prevent it from recurring.

The Problem: API Stops Responding Under Load

The classic symptom looks like this: under low traffic, everything works fine. As concurrent requests increase — say, past 20-30 simultaneous users — the API starts taking 30, 60, even 120+ seconds to respond to requests that normally complete in under 100ms. CPU usage is low. Memory looks normal. No exceptions appear. Healthcheck endpoints time out.

This pattern almost always points to ThreadPool exhaustion, not resource contention at the database or network layer.

Why ThreadPool Starvation Happens

ASP.NET Core uses the .NET ThreadPool to process requests. The ThreadPool maintains a pool of worker threads and grows it when work items are queued faster than they can be completed. Up to the configured minimum (by default, the number of logical processors), threads are created on demand; beyond that, growth is throttled (typically one new thread per 500ms) to avoid the overhead of spawning too many threads rapidly.

When code calls .Result or .Wait() on an incomplete Task, the calling thread blocks: it holds its ThreadPool thread but does no useful work. When the awaited work completes, its continuation needs a free ThreadPool thread to run on. If all threads are blocked, none is available, so the blocked callers keep waiting. The ThreadPool tries to inject new threads, but the injection rate is too slow to keep up. Requests queue up waiting for a thread, and response times spike.

This is the sync-over-async anti-pattern: synchronous code blocking on an async operation, consuming a thread while waiting and preventing that thread from serving other requests.
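The mechanism can be reproduced outside ASP.NET Core. The following is a minimal console-style sketch, not code from the article's codebase: StarvationDemo, FakeIoAsync, and the parameter names are hypothetical, and Task.Delay stands in for real I/O. Both methods queue the same number of work items; the first blocks a ThreadPool thread per item, the second releases it while waiting.

```csharp
using System;
using System.Diagnostics;
using System.Threading.Tasks;

public static class StarvationDemo
{
    // Stand-in for real I/O (assumption): completes after delayMs without holding a thread.
    private static async Task<int> FakeIoAsync(int delayMs)
    {
        await Task.Delay(delayMs);
        return 42;
    }

    // Sync-over-async: every work item parks a ThreadPool thread on .Result.
    public static TimeSpan RunBlocking(int requests, int delayMs)
    {
        var stopwatch = Stopwatch.StartNew();
        var tasks = new Task[requests];
        for (int i = 0; i < requests; i++)
        {
            tasks[i] = Task.Run(() => FakeIoAsync(delayMs).Result);
        }
        Task.WaitAll(tasks);
        return stopwatch.Elapsed;
    }

    // Fully async: the thread goes back to the pool while the delay is pending.
    public static async Task<TimeSpan> RunAsync(int requests, int delayMs)
    {
        var stopwatch = Stopwatch.StartNew();
        var tasks = new Task[requests];
        for (int i = 0; i < requests; i++)
        {
            tasks[i] = Task.Run(() => FakeIoAsync(delayMs));
        }
        await Task.WhenAll(tasks);
        return stopwatch.Elapsed;
    }
}
```

Comparing StarvationDemo.RunBlocking(200, 1000) with await StarvationDemo.RunAsync(200, 1000) should show the blocking variant taking noticeably longer once the request count exceeds the pool's minimum thread count. The exact gap depends on core count and runtime version, so treat any numbers as machine-specific.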

Where It Hides in Production Code

The most common places sync-over-async appears in ASP.NET Core applications:

Legacy code integration. A library originally written for synchronous execution gets called from async middleware or background services via .Result or .GetAwaiter().GetResult(). The original code "worked in development" because load was too low to saturate the ThreadPool.

Constructor injection of async services. Constructors cannot be async, so developers sometimes call .Result in a constructor to resolve something asynchronously — a database value, configuration from an external source, or an initial cache load.

Background services with blocking calls. A BackgroundService implementation calls external services synchronously inside its ExecuteAsync override, blocking the ThreadPool threads it holds.

Third-party middleware. Older middleware components not written for ASP.NET Core's async pipeline may internally block on async calls.

Unit test helpers leaking into production. Code paths originally written for test convenience — where blocking was acceptable — end up reused in production code without review.

Why It Doesn't Show Up in Development

Development environments have low concurrency. A developer machine rarely saturates 8–16 available ThreadPool threads. The problem only manifests under real load — staging environments with realistic user counts, or production under peak traffic.

This is why ThreadPool starvation is so dangerous: it passes all unit tests, all integration tests, and all smoke tests, then surfaces the first time the application sees meaningful concurrent traffic.

How to Diagnose ThreadPool Starvation

Step 1: Confirm Thread Starvation with dotnet-counters

The fastest confirmation tool is dotnet-counters, available as a global tool:

dotnet-counters monitor --process-id <pid> --counters System.Runtime

Look for these signals:

- ThreadPool Queue Length: growing without bound

- ThreadPool Thread Count: increasing steadily (the runtime is injecting new threads trying to keep up)

- ThreadPool Completed Work Item Count: low relative to the queue length

- Monitor Lock Contention Count: elevated (secondary symptom of blocking)

A healthy application under load has a stable thread count and a near-zero queue length. Starvation shows thread count climbing and queue length growing.
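The same signals can also be read in-process, which is handy for logging them next to request metrics. This is a small sketch under stated assumptions: ThreadPoolSnapshot and LooksStarved are hypothetical names, and the starvation heuristic is only illustrative. The ThreadPool properties it reads are available from .NET Core 3.0 onward.

```csharp
using System;
using System.Threading;

// Hypothetical helper: a point-in-time view of the ThreadPool counters discussed above.
public readonly record struct ThreadPoolSnapshot(
    int ThreadCount,
    long PendingWorkItems,
    long CompletedWorkItems,
    int MinWorkerThreads,
    int AvailableWorkerThreads)
{
    public static ThreadPoolSnapshot Capture()
    {
        ThreadPool.GetMinThreads(out int minWorkers, out _);
        ThreadPool.GetAvailableThreads(out int availableWorkers, out _);
        return new ThreadPoolSnapshot(
            ThreadPool.ThreadCount,            // threads currently in the pool
            ThreadPool.PendingWorkItemCount,   // queued, not yet started
            ThreadPool.CompletedWorkItemCount, // processed so far
            minWorkers,
            availableWorkers);
    }

    // Illustrative heuristic only (assumption): a long queue while the pool has
    // already grown past its minimum suggests threads are being held.
    public bool LooksStarved(long queueThreshold) =>
        PendingWorkItems > queueThreshold && ThreadCount > MinWorkerThreads;
}
```

Capturing a snapshot every few seconds and logging it gives a trend line: a single reading says little, while a queue that keeps growing across readings matches the starvation pattern described above.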

Step 2: Capture a Thread Dump

Use dotnet-stack or dotnet-dump to capture what every thread is doing:

dotnet-stack report --process-id <pid>

In the output, look for threads with call stacks containing:

- Task.Wait()

- Task.Result

- GetAwaiter().GetResult()

- SynchronizationContext.Wait

Multiple threads blocked at these points while a large queue of ThreadPoolWorkQueue items waits to be processed is the definitive confirmation.

Step 3: Identify the Culprit Call Site

Once you've confirmed starvation and found blocking calls in the stack trace, trace them back to the source:

- Which controller action, middleware component, or background service is the entry point?

- Is it in application code, a library, or a framework component?

- How many simultaneous requests are needed to trigger saturation?

The Microsoft Diagnostics documentation on debugging ThreadPool starvation provides a detailed walkthrough with sample apps and ProcDump-based analysis for both Windows and Linux.

The Fix

Primary Fix: Make the Call Stack Fully Async

The correct resolution is to propagate async/await through the entire call chain that contains the blocking call. This means:

- Replace .Result with await

- Replace .Wait() with await

- Replace .GetAwaiter().GetResult() with await

- Mark the containing method as async Task (or async Task<T>)

- Propagate async up through all callers until every blocking path is eliminated

This is straightforward in new code. In legacy codebases with deep call hierarchies, it requires a systematic refactor — changing one method forces the next caller to become async, which forces its caller, and so on ("the async virus"). This is by design: async propagation is how the runtime ensures no thread blocks.
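A before-and-after sketch of one such method may make the refactor concrete. All type names here (IOrderStore, OrderReport, InMemoryOrderStore) are hypothetical and stand in for whatever service wraps the real I/O.

```csharp
using System.Threading;
using System.Threading.Tasks;

// Hypothetical abstraction over some async I/O source.
public interface IOrderStore
{
    Task<int> CountOpenOrdersAsync(CancellationToken cancellationToken);
}

public sealed class OrderReport
{
    private readonly IOrderStore _store;

    public OrderReport(IOrderStore store) => _store = store;

    // Before: the calling thread is parked until the count arrives.
    public string BuildBlocking()
    {
        int count = _store.CountOpenOrdersAsync(CancellationToken.None).Result;
        return $"Open orders: {count}";
    }

    // After: the method is async, so the thread is released during the wait.
    public async Task<string> BuildAsync(CancellationToken cancellationToken = default)
    {
        int count = await _store.CountOpenOrdersAsync(cancellationToken);
        return $"Open orders: {count}";
    }
}

// In-memory stand-in so the sketch runs without a database (assumption).
public sealed class InMemoryOrderStore : IOrderStore
{
    public async Task<int> CountOpenOrdersAsync(CancellationToken cancellationToken)
    {
        await Task.Delay(10, cancellationToken);
        return 3;
    }
}
```

Every caller of BuildBlocking then has to switch to await BuildAsync(...), which is the propagation described above.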

Handling the Constructor Problem

Since constructors cannot be async, code that previously blocked in a constructor needs an alternative approach:

- Factory pattern: Replace direct constructor injection with an async factory method that is awaited during IHostedService startup, or expose the value through a lazy-initialized wrapper.

- Lazy async initialization: Use Lazy<Task<T>> with LazyThreadSafetyMode.ExecutionAndPublication so that initialization starts once and every caller awaits the same Task instead of blocking.

- Move initialization to IHostedService.StartAsync: If the blocking logic is startup-time initialization, an IHostedService that runs before the application starts accepting requests is the right place for it.
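The lazy async option can be sketched as follows. ExchangeRateCache and the loadRateAsync delegate are hypothetical; the point is only that the constructor stores the factory and nothing blocks until a caller awaits the value.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical service that needs an asynchronously loaded value.
public sealed class ExchangeRateCache
{
    private readonly Lazy<Task<decimal>> _rate;

    public ExchangeRateCache(Func<Task<decimal>> loadRateAsync)
    {
        // The constructor only stores the factory; no I/O and no blocking happen here.
        _rate = new Lazy<Task<decimal>>(
            loadRateAsync,
            LazyThreadSafetyMode.ExecutionAndPublication);
    }

    // The first caller starts the load; later callers await the same Task.
    public Task<decimal> GetRateAsync() => _rate.Value;
}
```

One design caveat with this shape: if the first load fails, the faulted Task stays cached and every later caller sees the same exception, so a retry policy would need a different wrapper.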

Handling Third-Party Synchronous Libraries

When a third-party library only exposes synchronous APIs:

- Offload with Task.Run: Use Task.Run(() => library.SynchronousCall()) to run the blocking call as a separate ThreadPool work item. The awaiting request thread is released, but another ThreadPool thread is blocked for the duration of the call, and the extra hop adds overhead.

- Evaluate alternatives: Most modern .NET libraries provide async APIs. If the library does not, evaluate whether a replacement exists.

- Wrap in a dedicated thread: For high-throughput paths, consider a dedicated thread (not ThreadPool) using Thread or a custom TaskScheduler to avoid the ThreadPool entirely.

Important: Task.Run() does not eliminate the blocking. It moves it off the request thread to a different ThreadPool thread. This is only acceptable if the blocking duration is short and the concurrency is bounded. For long-running or high-concurrency workloads, fully async code is the only correct solution.
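One way to keep the concurrency bounded when a synchronous library cannot be avoided is to gate the offload behind a SemaphoreSlim. This is a sketch, not a recommendation from the original codebase: BoundedOffloader and its parameter names are hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical wrapper: caps how many blocking calls may occupy pool threads at once.
public sealed class BoundedOffloader
{
    private readonly SemaphoreSlim _gate;

    public BoundedOffloader(int maxConcurrency)
    {
        _gate = new SemaphoreSlim(maxConcurrency, maxConcurrency);
    }

    public async Task<T> RunAsync<T>(Func<T> blockingCall, CancellationToken cancellationToken = default)
    {
        // Waiting for a slot is asynchronous, so queued callers hold no thread.
        await _gate.WaitAsync(cancellationToken);
        try
        {
            // Only the callers that hold a slot block a ThreadPool thread.
            return await Task.Run(blockingCall, cancellationToken);
        }
        finally
        {
            _gate.Release();
        }
    }
}
```

With a limit of, say, 4, at most four pool threads are ever blocked inside the library, and excess callers wait without consuming threads. The limit itself is workload-specific and needs to be found by measurement.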

How to Handle Background Services

If the starvation originates in a BackgroundService or IHostedService, the fix is to ensure ExecuteAsync is fully async and that all I/O calls inside the service use their async counterparts. The IServiceScopeFactory pattern (injecting a scope factory rather than a scoped service directly) ensures DbContext and other scoped services are resolved with the correct lifetime inside the long-lived service; it does not remove blocking by itself, so the calls made on those services still need to be awaited. See Chapter 12 of the Zero to Production course for the complete implementation.
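The body of a fully async ExecuteAsync often reduces to a loop like the one below. To keep the sketch runnable without the hosting packages, it is written as a plain static helper: PollingLoop and doWorkAsync are hypothetical names, and in a real BackgroundService the stoppingToken would be the one passed to ExecuteAsync. PeriodicTimer requires .NET 6 or later.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper showing an async-only polling loop.
public static class PollingLoop
{
    public static async Task RunAsync(
        Func<CancellationToken, Task> doWorkAsync,
        TimeSpan period,
        CancellationToken stoppingToken)
    {
        using var timer = new PeriodicTimer(period);
        try
        {
            // Awaiting the tick releases the thread between iterations,
            // unlike Thread.Sleep or Task.Delay(...).Wait().
            while (await timer.WaitForNextTickAsync(stoppingToken))
            {
                await doWorkAsync(stoppingToken);
            }
        }
        catch (OperationCanceledException) when (stoppingToken.IsCancellationRequested)
        {
            // Cancellation is the normal shutdown path, not an error.
        }
    }
}
```

Inside a BackgroundService, ExecuteAsync could simply return PollingLoop.RunAsync(...), with the work delegate creating a scope from IServiceScopeFactory on each iteration.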

Prevention: Structural Guards Against Regression

Enable ValidateScopes and ValidateOnBuild in Development

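    // ServiceProviderOptions are applied when the host builds the container,
    // so they are set on the host rather than registered via Services.Configure<T>.
    builder.Host.UseDefaultServiceProvider(options =>
    {
        options.ValidateScopes = true;
        options.ValidateOnBuild = true;
    });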

This doesn't catch sync-over-async directly, but validates DI lifetime mismatches that often accompany it.

Use Roslyn Analyzers

The Microsoft.VisualStudio.Threading.Analyzers NuGet package adds Roslyn analyzers that flag sync-over-async patterns at compile time. Rules like VSTHRD002 (avoid problematic synchronous waits), VSTHRD103 (call async methods when in an async method), and VSTHRD110 (observe the result of async calls) catch most of the common mistakes before they reach production.

Code Review Checklist

Add a specific item to your team's code review checklist:

- No .Result or .Wait() on Task-returning methods in request pipeline or background service code

- No GetAwaiter().GetResult() outside of genuinely synchronous entry points (e.g., a Main method that cannot be declared async)

- All async Task methods awaited at every call site

Load Testing in CI

ThreadPool starvation only manifests under load. A CI pipeline that runs load tests (even with modest concurrency, such as 20-50 virtual users) will expose starvation before it reaches production. Load-testing tools like k6 or NBomber can serve this role; BenchmarkDotNet measures micro-benchmarks rather than concurrent HTTP load, so it is not a substitute.

What a Good Async Stack Looks Like Under Load

A fully async request pipeline means every I/O operation — database queries, HTTP calls, file reads — releases its ThreadPool thread while waiting for the I/O response. The thread returns to the pool, handles other requests, and resumes the original request when the I/O completes.

Under this model, a single application server can handle thousands of concurrent I/O-bound requests with a thread count that remains stable and proportional to CPU count — not to concurrent user count. This is the scalability model ASP.NET Core was designed around, and sync-over-async completely breaks it.

The Microsoft documentation on Debug ThreadPool Starvation includes a live sample app that demonstrates the starvation scenario end-to-end — useful for verifying your diagnosis tooling works before you need it in production.

Frequently Asked Questions

What is the difference between a deadlock and ThreadPool starvation in ASP.NET Core?

A deadlock is a circular dependency where two or more operations each wait for the other to complete, so neither can proceed. ThreadPool starvation is a resource exhaustion problem: the pool's threads exist but are all blocked, so queued work cannot be scheduled until a thread frees up or a new one is injected. Deadlocks produce immediate hangs on specific operations; starvation produces progressive slowdown under load. Both can be caused by sync-over-async, but starvation is more common in ASP.NET Core because the framework uses a SynchronizationContext-free execution model that eliminates the classic deadlock scenario described in older .NET Framework guides.

Does ASP.NET Core have a SynchronizationContext that causes deadlocks like ASP.NET classic?

No. ASP.NET Core does not use a SynchronizationContext by default, which means the classic .Result/.Wait() deadlock described for ASP.NET classic (where the continuation needed to resume on the request thread, but the request thread was blocked) does not occur in ASP.NET Core. However, sync-over-async in ASP.NET Core still causes ThreadPool starvation, which is just as damaging to throughput, even without a deadlock.

Is Task.Run() an acceptable workaround for blocking calls?

Task.Run() moves a blocking call onto a separate ThreadPool work item, so the awaiting request thread is released, but the call still blocks a ThreadPool thread for its full duration. Because ASP.NET Core request handlers run on the same pool, the number of blocked threads does not go down. For short, infrequent operations, this is tolerable. For high-concurrency or long-duration blocking, it simply moves the starvation problem rather than solving it. The correct solution is always to use genuinely async APIs end-to-end.

How do I find blocking calls in a large codebase?

Use the Microsoft.VisualStudio.Threading.Analyzers Roslyn package: it statically analyzes code and flags .Result, .Wait(), and GetAwaiter().GetResult() on Task instances. For runtime discovery in production, dotnet-stack, or dotnet-dump with SOS commands such as clrstack -all and parallelstacks, will show threads blocked at these call sites. A tracing tool (PerfView on Windows, or dotnet-trace cross-platform) can add timing context around those stacks.

Will adding more threads to the ThreadPool fix starvation?

Temporarily, yes. Calling ThreadPool.SetMinThreads() to raise the minimum thread count lets the pool create threads on demand, without the throttled injection delay, up to that minimum, which may reduce the latency spike under sudden load. However, this is a patch, not a fix. More threads mean more memory, more context-switching overhead, and a higher steady-state resource cost. The correct fix is to eliminate blocking calls so threads are not held.
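As a stop-gap while the blocking calls are being removed, the minimum can be raised once at startup. The helper below is a hypothetical sketch (ThreadPoolStopgap is not part of any library); it only ever raises the worker minimum and leaves the I/O completion minimum unchanged. The right value is workload-specific and should come from measurement.

```csharp
using System;
using System.Threading;

// Hypothetical stop-gap helper; not a substitute for removing blocking calls.
public static class ThreadPoolStopgap
{
    // Raises the worker-thread minimum to at least desiredWorkers; never lowers it.
    // Returns false if the runtime rejected the value.
    public static bool RaiseMinWorkerThreads(int desiredWorkers)
    {
        ThreadPool.GetMinThreads(out int currentWorkers, out int currentIo);
        if (currentWorkers >= desiredWorkers)
        {
            return true; // already at or above the requested minimum
        }
        return ThreadPool.SetMinThreads(desiredWorkers, currentIo);
    }
}
```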

Can ConfigureAwait(false) prevent ThreadPool starvation?

ConfigureAwait(false) tells the awaited task not to resume on the captured SynchronizationContext — it does not eliminate blocking. It prevents a specific class of deadlock in SynchronizationContext-using frameworks (like ASP.NET classic, WinForms, or WPF). In ASP.NET Core, where there is no ambient SynchronizationContext, ConfigureAwait(false) has no practical effect on deadlock prevention or starvation. Library code should still use it as a best practice to remain safe across all calling environments.

How does starvation differ between IHostedService and controller actions?

The mechanism is identical: both use ThreadPool threads. The difference is visibility: controller actions have request-scoped timing that shows up directly as response latency. Background services implemented as IHostedService can starve the pool silently, causing request latency to spike with no obvious correlation to the background service in logs. This makes background-service-originated starvation harder to diagnose, which is why monitoring ThreadPool queue length and thread count is important even when symptoms appear in the request pipeline.