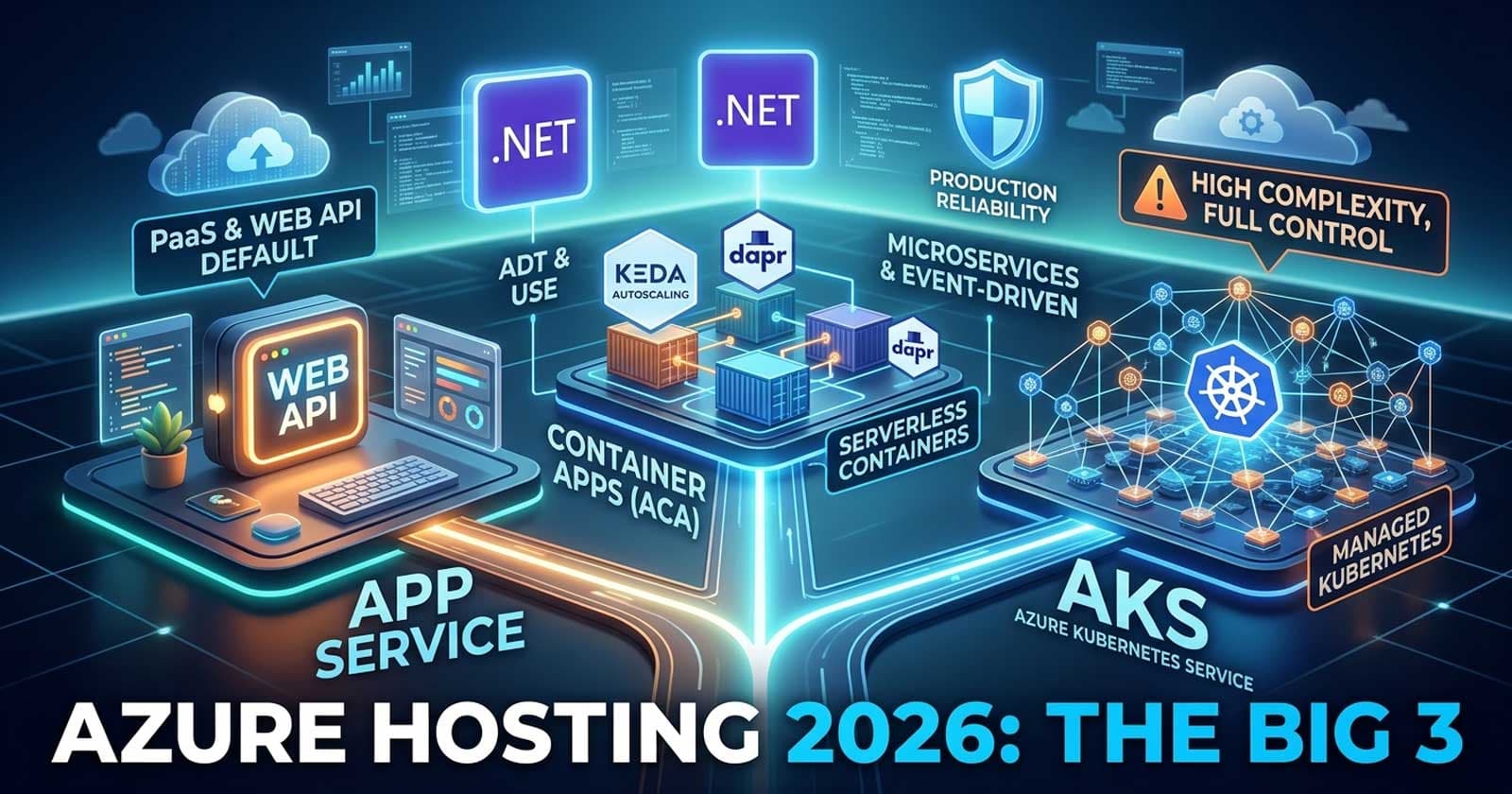

Azure App Service vs Azure Container Apps vs AKS in .NET: Which Azure Hosting Platform Should Your Team Use in 2026?

Choosing the right Azure hosting platform for an ASP.NET Core application is one of the most consequential infrastructure decisions a .NET team makes — and in 2026, the landscape is more nuanced than it used to be. Azure App Service, Azure Container Apps, and Azure Kubernetes Service (AKS) all run .NET workloads reliably in production, but they represent fundamentally different trade-offs around control, operational complexity, cost, and scalability. The wrong choice does not break an application, but it can quietly drain engineering time, inflate cloud bills, or create ceilings that you hit at the worst possible moment.

The full decision framework — including real-world team profiles, workload patterns, and annotated deployment configurations — is available on Patreon, where production-ready examples map directly to what enterprise teams actually ship.

Understanding how each platform fits into a real Azure deployment also becomes much clearer when you see it wired into a complete API. Chapter 15 of the ASP.NET Core Web API: Zero to Production course covers containerisation, multi-stage Dockerfiles, and CI/CD deployment pipelines end to end — so the hosting context is always visible alongside the code.

What Each Platform Actually Is

Before comparing them, it helps to understand what problem each service was designed to solve — because each started from a different philosophical premise.

Azure App Service is the oldest of the three. It is a fully managed PaaS offering optimised for web applications and HTTP APIs. You give it code or a container image, configure a few settings, and the platform handles the runtime, OS patching, TLS, and horizontal scaling. It is the closest thing Azure has to a "just make it run" service.

Azure Container Apps (ACA) is a newer, serverless-first container platform built on top of Kubernetes and KEDA (Kubernetes Event-Driven Autoscaling). Microsoft manages the underlying Kubernetes cluster entirely. You interact only with containers, revisions, and scaling rules — never with nodes, pods, or cluster upgrades. ACA is designed for teams that want container-native flexibility without the Kubernetes management overhead.

Azure Kubernetes Service (AKS) is a managed Kubernetes service. "Managed" here means Microsoft manages the control plane; you still provision and maintain worker nodes, apply OS patches, plan upgrades, and operate the cluster day-to-day. AKS gives you full Kubernetes primitives — custom resource definitions, service meshes, advanced networking policies — at the cost of significant operational responsibility.

Side-by-Side Comparison

| Dimension | App Service | Container Apps | AKS |
|---|---|---|---|
| Abstraction level | PaaS, fully managed | Serverless containers | Managed Kubernetes |
| Container support | Yes (code-based deploy also supported) | Native, containers only | Native, containers only |
| Operational complexity | Low | Medium-low | High |
| Scale-to-zero | No (minimum 1 instance on standard plans) | Yes, native | Yes, with KEDA |
| Autoscaling triggers | CPU, memory, HTTP | HTTP, CPU, KEDA event sources | Any (custom HPA/KEDA) |
| Networking control | Limited (VNet integration available) | VNet integration, internal/external ingress | Full: custom CNI, NSGs, ingress controllers |
| Multi-container workloads | Limited (sidecar containers) | Yes, native multi-container environments | Yes, full pod spec |
| Blue/green + canary | Deployment slots | Traffic splitting by revision | Full ingress controller support |
| Cost model | Per plan (compute always-on) | Per vCPU-second + memory-second | Node pool VMs + control-plane fee on paid tiers |
| CI/CD integration | GitHub Actions, Azure DevOps, zip deploy | GitHub Actions, Azure DevOps | GitHub Actions, Flux, Argo CD |
| Kubernetes expertise required | None | Low | High |
| Best fit | Web APIs, simple workloads | Microservices, event-driven, scale-to-zero | Complex enterprise, custom networking |

Azure App Service: The Pragmatic Default

App Service earns its place as the default choice for most ASP.NET Core APIs because the operational surface is small. A team that deploys to App Service spends almost no time on infrastructure — no node maintenance, no cluster upgrades, no container runtime decisions. The platform absorbs that complexity.

When App Service Is the Right Call

The workload is a web API, web app, or background worker with predictable traffic patterns.

The team is small or does not have dedicated DevOps/platform engineering capacity.

Deployment simplicity matters more than container-native features.

The application does not need to scale to zero (App Service keeps at least one instance warm on standard and premium plans).

You want native integration with Microsoft Entra ID (formerly Azure AD), Application Insights, and Azure Key Vault without additional configuration layers.

Where App Service Falls Short

App Service shows its limits when teams move toward container-native architectures. Sidecar container support is still limited compared to what Kubernetes offers natively. Scale-to-zero is not available on the dedicated plans that production APIs typically run on, so you pay for at least one instance even when traffic is idle. Fine-grained networking controls depend on VNet integration and private endpoints, which are tied to specific pricing tiers and add cost and configuration overhead.

For teams building their first production ASP.NET Core API, App Service paired with GitHub Actions CI/CD (see the ASP.NET Core Deployment & Docker Interview Questions guide for common pitfalls) is often the fastest path to a running, maintainable production system.

Azure Container Apps: The Modern Middle Ground

Azure Container Apps occupies an interesting position: it is more flexible than App Service but dramatically less complex than AKS. It is the answer to a real question that many teams face — "we want container-native deployments, Dapr support, and event-driven scaling, but we don't want to operate Kubernetes ourselves."

When Container Apps Is the Right Call

The workload consists of multiple containers with different scaling requirements (e.g., an API container that scales on HTTP load and a background processor that scales on queue depth).

Scale-to-zero is genuinely useful — for workloads with irregular traffic or for non-production environments where keeping instances warm is wasteful.

The team wants KEDA-based event-driven autoscaling without managing KEDA infrastructure directly.

Dapr integration would simplify service-to-service communication or state management.

Revision-based traffic splitting (e.g., send 10% of traffic to a new revision before a full rollout) is a requirement.
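Revision-based traffic splitting boils down to weighted routing between revisions. The sketch below illustrates that idea in plain Python. It is not how Container Apps implements ingress, and the revision names and the `pick_revision` helper are hypothetical.

```python
import random


def pick_revision(weights: dict[str, int], rng: random.Random) -> str:
    """Pick a revision name with probability proportional to its traffic weight."""
    if sum(weights.values()) != 100:
        raise ValueError("traffic weights must add up to 100")
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


# Hypothetical revision names: 90% stays on the stable revision, 10% goes to the new one.
split = {"api--stable": 90, "api--canary": 10}

rng = random.Random(42)  # seeded so repeated runs route the same way
sample = [pick_revision(split, rng) for _ in range(10_000)]
canary_share = sample.count("api--canary") / len(sample)
print(f"canary share over {len(sample)} simulated requests: {canary_share:.1%}")
```

Raising the canary weight in steps while watching error rates and latency is the usual way to turn a split like this into a gradual rollout.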

Where Container Apps Falls Short

Container Apps sits on Kubernetes underneath, but you cannot access Kubernetes APIs directly. If you need custom resource definitions, a service mesh like Istio or Linkerd, advanced network policies, or access to node-level metrics, Container Apps will not accommodate that. The platform makes opinionated choices about ingress, scaling, and networking that work well for the majority of workloads but can become constraints for complex distributed systems.

Cost predictability can also be a concern. The per-vCPU-second billing model is economical for bursty or low-traffic workloads but can cost more than a reserved App Service plan for consistently high-throughput APIs.
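The trade-off between fixed and consumption billing can be estimated with a break-even calculation. The sketch below is a rough model only: every price in it is a placeholder rather than an Azure list price, and it ignores free grants, idle-rate pricing, and per-request charges. The function names are illustrative.

```python
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # 30-day month, good enough for a rough comparison


def per_second_rate(vcpu, memory_gib, vcpu_second_price, gib_second_price):
    """Consumption cost of one replica for one second of active time."""
    return vcpu * vcpu_second_price + memory_gib * gib_second_price


def consumption_cost(active_seconds, rate):
    """Monthly consumption cost for the given number of active replica-seconds."""
    return active_seconds * rate


def break_even_seconds(fixed_monthly_cost, rate):
    """Active replica-seconds per month at which consumption billing matches the fixed plan."""
    return fixed_monthly_cost / rate


# Placeholder numbers, NOT real Azure prices. Substitute values from the pricing calculator.
rate = per_second_rate(vcpu=1.0, memory_gib=2.0,
                       vcpu_second_price=0.00002, gib_second_price=0.000002)
fixed_plan = 30.0  # hypothetical monthly price of an always-on plan

threshold = break_even_seconds(fixed_plan, rate)
print(f"break-even at {threshold:,.0f} active seconds "
      f"({threshold / SECONDS_PER_MONTH:.0%} of a month for one replica)")
```

Below the threshold the consumption model is cheaper under these assumptions. Above it, the fixed plan wins. Multiply the active seconds by the average replica count when the app scales out.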

AKS: Full Control, Full Responsibility

AKS is the choice when a team needs capabilities that no managed platform abstraction can provide. Custom networking with specific CNI plugins, multi-tenant cluster sharing, GPU workloads, advanced pod security controls, and custom admission controllers all belong in AKS.

When AKS Is the Right Call

The organisation already has Kubernetes expertise and platform engineering capacity to operate clusters.

Multiple services share a cluster, and cost allocation or namespace isolation is a business requirement.

The workload has networking requirements — specific ingress controllers, service mesh policies, egress filtering, custom DNS — that no PaaS platform exposes.

Regulatory compliance requires specific node configurations, OS hardening, or audit capabilities at the cluster level.

The deployment strategy involves GitOps tooling like Flux or Argo CD with custom controller workflows.

Where AKS Falls Short

AKS is not free infrastructure — it is a managed control plane sitting on top of a cluster you still operate. Worker node patching, cluster version upgrades (ideally tested in staging before production), node pool sizing, and cluster networking decisions are all your responsibility. The operational burden is real, and teams that underestimate it often end up with unmaintained clusters running outdated Kubernetes versions.

For teams earlier in their cloud-native journey, adopting AKS before the organisation has the engineering capacity to support it consistently creates more problems than it solves. The .NET Aspire vs Docker Compose guide covers the local development side of this transition — worth reading before committing to a Kubernetes-based production strategy.

How to Think About This Decision

There is a useful mental model for navigating this choice: the "ops budget" question.

How much engineering time per sprint is your team willing to spend on infrastructure operations?

Near zero → App Service

One to two days per sprint → Container Apps

Dedicated platform engineering team → AKS

This is not about capability — any competent team can operate AKS. It is about opportunity cost. Every hour spent on cluster upgrades and node debugging is an hour not spent on product features.

The Hybrid Reality

Many enterprise .NET teams end up with a hybrid approach: App Service or Container Apps for stable internal APIs and web applications, AKS for complex microservice meshes or workloads requiring granular control. This is not a compromise — it is a pragmatic acknowledgement that different workload types have different infrastructure needs.

What's New to Watch in 2026

Azure Container Apps has been closing the feature gap with AKS quickly. Native job support, improved VNet integration, and expanded KEDA scaler support have made it compelling for workloads that would have required AKS two years ago. Microsoft's guidance increasingly reads as "Container Apps first, AKS only when you genuinely need it", a shift worth taking seriously.

The Recommendation

For the majority of .NET teams deploying ASP.NET Core APIs in 2026:

Start with App Service if the team is small, the workload is a straightforward HTTP API, and operational simplicity is a priority. You can always migrate to containers later.

Choose Container Apps if the workload is multi-container, event-driven, or needs scale-to-zero — and the team does not have the capacity to operate Kubernetes directly.

Choose AKS if the organisation already runs Kubernetes, has dedicated platform engineering, and needs capabilities that neither App Service nor Container Apps can provide.

The deciding factor is rarely technology. It is the team's real capacity to operate what they choose. A well-run App Service deployment consistently outperforms a poorly operated AKS cluster.
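The three rules above can be written down as a small decision function. This is one possible encoding, a sketch rather than a definitive framework. The `TeamProfile` fields and the `recommend` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    runs_kubernetes_already: bool
    has_platform_team: bool
    needs_kubernetes_api: bool  # CRDs, service mesh, custom CNI, admission controllers
    multi_container: bool
    event_driven: bool
    needs_scale_to_zero: bool


def recommend(profile: TeamProfile) -> str:
    """Map a team profile to one of the three platforms, following the rules in this section."""
    # AKS only when the need AND the capacity to operate a cluster are both present.
    if (profile.needs_kubernetes_api
            and profile.runs_kubernetes_already
            and profile.has_platform_team):
        return "AKS"
    # Container-native needs without Kubernetes operations.
    if profile.multi_container or profile.event_driven or profile.needs_scale_to_zero:
        return "Container Apps"
    # Needing Kubernetes features without the capacity to run a cluster is not
    # resolved here; it falls through to the managed options.
    return "App Service"


small_api_team = TeamProfile(
    runs_kubernetes_already=False, has_platform_team=False, needs_kubernetes_api=False,
    multi_container=False, event_driven=False, needs_scale_to_zero=False,
)
print(recommend(small_api_team))
```

The ordering matters: the AKS check requires operating capacity as well as need, which reflects the point that capacity, not technology, is the deciding factor.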


FAQ

Is Azure App Service suitable for containerised ASP.NET Core APIs in 2026?

Yes. App Service supports container deployments on Linux with Docker images from Azure Container Registry or other registries. It is a solid choice for containerised workloads that do not require multi-container environments or Kubernetes-native features.

When should a .NET team choose Azure Container Apps over AKS?

Choose Azure Container Apps when you need container-native flexibility (multi-container workloads, KEDA event-driven scaling, or scale-to-zero) but do not have the engineering capacity to operate and maintain a Kubernetes cluster. AKS is appropriate only when you need direct Kubernetes API access, custom cluster-level configuration, or have a dedicated platform engineering team.

Does Azure Container Apps support .NET Aspire?

Yes. .NET Aspire has native publishing support for Azure Container Apps via the Aspire Azure hosting integrations. You can publish an Aspire AppHost project directly to Container Apps without writing manual Bicep or Kubernetes manifests, which significantly reduces the deployment complexity for multi-service .NET applications.

What is the cost difference between Azure App Service and Azure Container Apps for a typical ASP.NET Core API?

It depends heavily on traffic patterns. App Service uses a fixed-cost compute plan (predictable billing regardless of traffic). Container Apps bills per vCPU-second and memory-second, making it cheaper for low or bursty traffic but potentially more expensive for consistently high-throughput workloads. For production APIs with steady traffic above a few hundred requests per minute, compare actual costs using the Azure Pricing Calculator with your expected vCPU and memory allocation before committing.

Can I run a .NET background service (hosted service / BackgroundService) on Azure Container Apps?

Yes. Container Apps supports container jobs for task-oriented workloads and long-running containers for hosted services. For event-driven background processing, such as processing messages from Azure Service Bus or a Storage Queue, KEDA-based scaling rules in Container Apps trigger and scale container instances based on queue depth, which is a natural fit for BackgroundService consumers.
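Queue-depth scaling can be pictured as a target number of messages per replica, clamped between a minimum and a maximum replica count. The sketch below shows only that arithmetic. It is not KEDA's code, it ignores polling intervals and cool-down periods, and the `desired_replicas` function and its parameter names are made up for illustration.

```python
import math


def desired_replicas(queue_length: int, messages_per_replica: int,
                     min_replicas: int = 0, max_replicas: int = 10) -> int:
    """Replica count needed so each replica handles at most `messages_per_replica` messages."""
    if messages_per_replica <= 0:
        raise ValueError("messages_per_replica must be positive")
    wanted = math.ceil(queue_length / messages_per_replica)
    # Clamp to the configured bounds; min_replicas=0 is what allows scale-to-zero.
    return max(min_replicas, min(max_replicas, wanted))


for depth in (0, 12, 1000):
    print(depth, "messages ->", desired_replicas(depth, messages_per_replica=5), "replicas")
```

Setting `min_replicas` to 1 in this model keeps one consumer warm at all times, which trades idle cost for lower latency on the first message after a quiet period.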

What happens when an AKS cluster version goes end-of-life for a .NET production workload?

AKS requires active version management. When a Kubernetes version reaches end-of-life, the cluster must be upgraded to a supported version. Microsoft provides a maintenance window feature and documentation for in-place upgrades, but the upgrade must be planned and tested. For teams without dedicated platform engineering, the ongoing cluster version lifecycle is often the most underestimated operational cost of choosing AKS over a fully managed platform.

Is Azure App Service appropriate for microservices architectures?

It can work for small numbers of services (two to five) where each service gets its own App Service plan. However, as the number of services grows, cost and management overhead scale linearly with each new plan. Container Apps is a better fit for microservices because it was designed for multi-container environments with independent scaling per service within a shared managed environment.