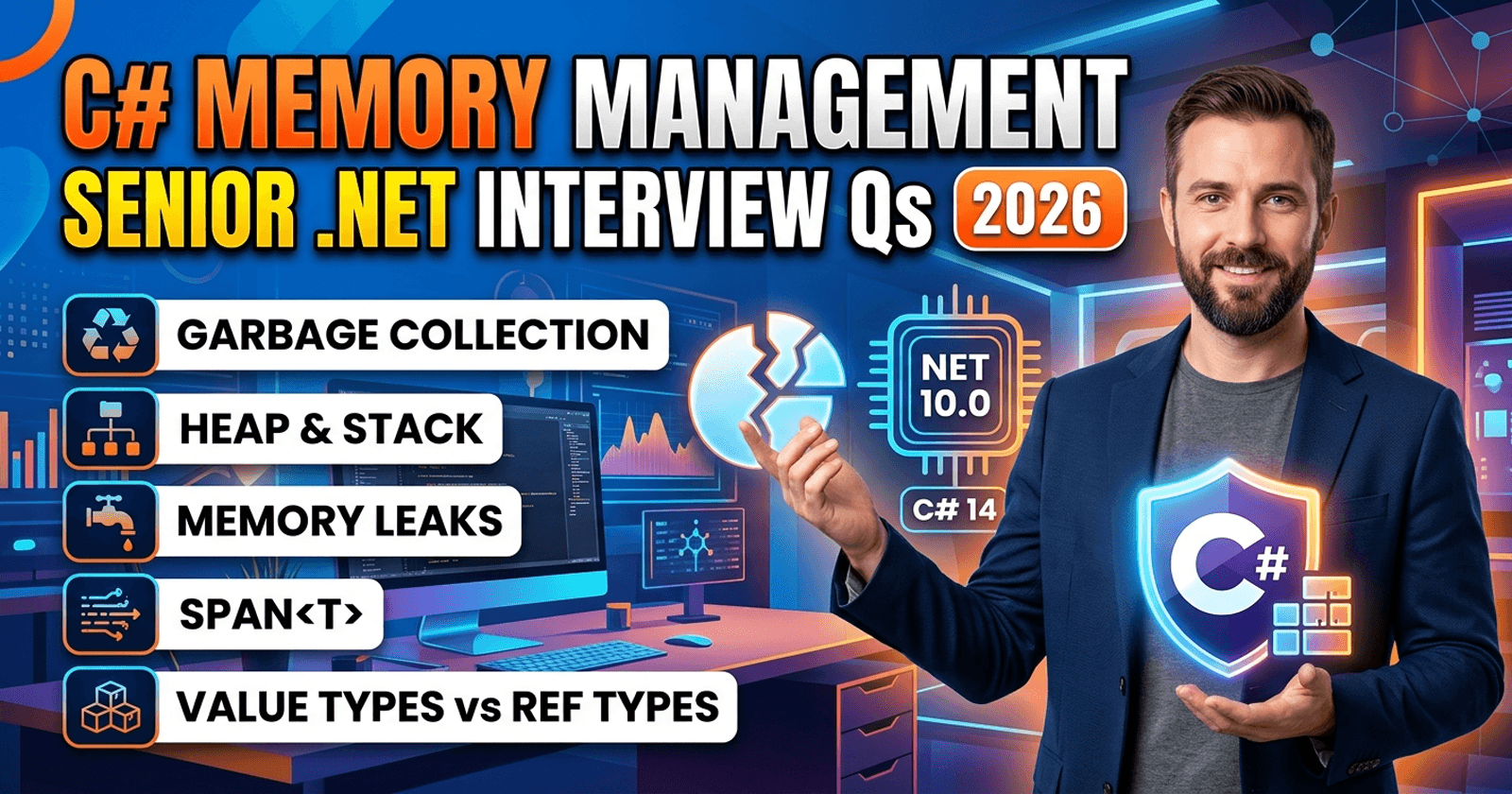

C# Memory Management Interview Questions for Senior .NET Developers (2026)

Memory management is one of those topics that separates senior .NET developers from the rest. You can write functional C# for years without deeply understanding how the garbage collector works, why IDisposable matters, or when Span<T> and ArrayPool<T> are the right tools. But in interviews and in production systems, that depth shows.

This guide covers the memory management interview questions most commonly asked of senior .NET developers in 2026, grouped by difficulty, with clear answers that reflect how .NET actually works — not just the theory.

Basic Questions

Q1: What is the difference between stack and heap memory in C#?

The stack is a per-thread, fixed-size LIFO region that holds method call frames: arguments, return addresses, and local variables, including local value types such as structs and primitives. It is managed automatically: when a method returns, its stack frame is popped. The heap is a dynamically sized memory region used for reference type instances. Objects on the heap persist until no live references to them exist and the garbage collector reclaims them.

A common misconception is that all value types live on the stack. That holds only for plain local variables and parameters. A value type stored as a field of a class, as an array element, boxed to object, or captured by a lambda or async method lives on the heap, inside the containing object's allocation.
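The difference you can actually observe from C# is copy semantics rather than storage location, which is a runtime implementation detail. The sketch below illustrates that difference; the type and method names (`PointValue`, `PointRef`, `StackHeapDemo`) are hypothetical and exist only for this example.

```csharp
using System;

public struct PointValue { public int X; }   // value type
public class PointRef { public int X; }      // reference type

public static class StackHeapDemo
{
    public static (int Original, int Copy) CopyStruct()
    {
        var a = new PointValue { X = 1 };
        var b = a;   // copies the whole value; a and b are independent
        b.X = 2;
        return (a.X, b.X);
    }

    public static (int Original, int Alias) CopyReference()
    {
        var a = new PointRef { X = 1 };
        var b = a;   // copies only the reference; both point at one heap object
        b.X = 2;
        return (a.X, b.X);
    }

    public static void Main()
    {
        Console.WriteLine(CopyStruct());     // (1, 2)
        Console.WriteLine(CopyReference());  // (2, 2)
    }
}
```

If `PointValue` were a field of `PointRef`, its bytes would sit inside the `PointRef` object on the heap, which is the case the misconception above overlooks.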

Q2: What is the .NET garbage collector and how does it work at a high level?

The .NET garbage collector (GC) is an automatic memory manager. It tracks all object references in your application and periodically identifies objects that are no longer reachable from any root (local variables, static fields, CPU registers, GC handles). Unreachable objects are collected and their memory is reclaimed.

The GC does not run on every allocation — it runs when memory pressure triggers it, typically when generation 0 fills up. Collections are generational, meaning short-lived objects are collected cheaply and frequently, while long-lived objects are promoted through generations and collected rarely.

Q3: What are GC generations and why do they matter?

The GC divides the heap into three generations:

- Generation 0 — New, short-lived objects. This is collected most frequently and cheaply. Most objects die here.

- Generation 1 — Objects that survived a Gen 0 collection. A buffer zone between Gen 0 and Gen 2.

- Generation 2 — Long-lived objects (caches, singletons, static data). Collections here are expensive and should be infrequent.

The generational hypothesis is the key insight: most objects die young. The GC exploits this by collecting Gen 0 (a small region) far more often than Gen 2 (the full heap). If your application promotes too many objects to Gen 2 — for example, by holding references longer than necessary — you will see longer GC pauses and higher memory pressure.
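As a rough illustration of promotion, the following sketch asks the runtime which generation an object is in before and after forced collections. `GenerationDemo` is a hypothetical name, and the forced `GC.Collect()` calls are for demonstration only. The exact numbers depend on the runtime and GC configuration, so treat any output as typical rather than guaranteed.

```csharp
using System;

public static class GenerationDemo
{
    // Returns the generation of one object: when new, after one GC, after two GCs.
    public static int[] ObserveGenerations()
    {
        var obj = new object();
        var seen = new int[3];

        seen[0] = GC.GetGeneration(obj);   // a fresh small object normally starts in Gen 0
        GC.Collect();                      // obj is still referenced, so it survives
        seen[1] = GC.GetGeneration(obj);
        GC.Collect();
        seen[2] = GC.GetGeneration(obj);

        GC.KeepAlive(obj);                 // make sure obj stays reachable until here
        return seen;
    }

    public static void Main()
    {
        // Commonly shows the object climbing toward GC.MaxGeneration.
        Console.WriteLine(string.Join(" -> ", ObserveGenerations()));
    }
}
```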

Q4: What is the Large Object Heap (LOH)?

Objects of 85,000 bytes or more (approximately 83 KB) are allocated on the Large Object Heap, a separate region of the managed heap. The LOH is collected only as part of Gen 2 collections (not Gen 0 or Gen 1). Historically, the LOH was never compacted, which caused fragmentation over time: large allocations might fail despite sufficient total free space. Since .NET Framework 4.5.1, and in every version of .NET Core and .NET 5+, you can request LOH compaction manually, and modern .NET can compact it automatically in some scenarios.

The practical implication: avoid frequent large allocations (large arrays, large strings, large buffers) inside hot paths. Pool them instead.
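One way to see the threshold in action, sketched under the assumption of default GC settings: the runtime reports large-object-heap allocations as belonging to the oldest generation. `LohDemo` is a hypothetical name used only here.

```csharp
using System;

public static class LohDemo
{
    // Allocates a byte array and reports the generation the GC assigns to it.
    public static int GenerationOfNewArray(int byteCount)
    {
        var buffer = new byte[byteCount];
        return GC.GetGeneration(buffer);
    }

    public static void Main()
    {
        // A small array normally starts in Gen 0.
        Console.WriteLine(GenerationOfNewArray(1_000));

        // An array above the 85,000-byte threshold goes to the LOH,
        // which GC.GetGeneration reports as GC.MaxGeneration.
        Console.WriteLine(GenerationOfNewArray(100_000));
    }
}
```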

Q5: What does IDisposable do and why is it important?

IDisposable provides a deterministic cleanup mechanism for unmanaged resources — file handles, database connections, network sockets, native memory. The GC cannot collect these automatically because it only understands managed heap memory.

Implementing IDisposable correctly means releasing unmanaged resources in the Dispose() method and, optionally, implementing a finalizer as a safety net. The standard pattern also includes a bool disposed flag to prevent double-disposal and a GC.SuppressFinalize(this) call in Dispose() to avoid the overhead of the finalizer queue when Dispose has already run.

Always use using statements (or await using for async disposables) so that Dispose is called even when exceptions occur.
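A minimal sketch of why `using` matters: the compiler expands it into try/finally, so `Dispose()` runs even when the body throws. `TrackedResource` and `UsingDemo` are hypothetical types written for this illustration.

```csharp
using System;

public sealed class TrackedResource : IDisposable
{
    public bool IsDisposed { get; private set; }
    public void Dispose() => IsDisposed = true;
}

public static class UsingDemo
{
    // Returns true if the resource was disposed even though the body threw.
    public static bool DisposedDespiteException()
    {
        var resource = new TrackedResource();
        try
        {
            using (resource)
            {
                throw new InvalidOperationException("simulated failure");
            }
        }
        catch (InvalidOperationException)
        {
            // Dispose() has already run by the time the exception reaches here.
        }
        return resource.IsDisposed;
    }

    public static void Main()
    {
        Console.WriteLine(DisposedDespiteException()); // True
    }
}
```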

Intermediate Questions

Q6: Explain the dispose pattern. When should you implement a finalizer?

The full dispose pattern is:

- A public Dispose() method that releases both managed and unmanaged resources (by calling Dispose(true)), calls GC.SuppressFinalize(this), and leaves a disposed flag set.

- A protected virtual void Dispose(bool disposing) overload. disposing = true means it was called from Dispose() (safe to release managed objects); disposing = false means it was called from the finalizer (release only unmanaged resources, because the managed objects it references may already have been finalized).

- A finalizer (~MyClass()) that calls Dispose(false) as a safety net.

Implement a finalizer only when your class directly holds an unmanaged resource handle (a raw pointer, a Win32 handle, an OS resource). If your class only wraps other IDisposable objects, you do not need a finalizer — just delegate to their Dispose(). Finalizers have real overhead: objects with finalizers are placed on the finalization queue, which delays their collection by one GC cycle.
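The pattern described above could look roughly like this for a class that directly owns native memory. `NativeBuffer` is a hypothetical class for illustration; in real code a `SafeHandle`-derived type is often a better owner for a raw handle, which is outside the scope of this sketch.

```csharp
using System;
using System.Runtime.InteropServices;

public class NativeBuffer : IDisposable
{
    private IntPtr _handle;   // unmanaged memory owned directly by this class
    private bool _disposed;

    public NativeBuffer(int size) => _handle = Marshal.AllocHGlobal(size);

    public bool IsDisposed => _disposed;

    public void Dispose()
    {
        Dispose(disposing: true);
        GC.SuppressFinalize(this);   // cleanup is done; the finalizer no longer needs to run
    }

    protected virtual void Dispose(bool disposing)
    {
        if (_disposed) return;       // guard against double disposal

        if (disposing)
        {
            // Called from Dispose(): safe to dispose managed IDisposable fields here.
        }

        // Always release the unmanaged resource, from Dispose() or the finalizer.
        if (_handle != IntPtr.Zero)
        {
            Marshal.FreeHGlobal(_handle);
            _handle = IntPtr.Zero;
        }
        _disposed = true;
    }

    // Safety net for callers that forget to call Dispose().
    ~NativeBuffer() => Dispose(disposing: false);
}

public static class DisposePatternDemo
{
    public static void Main()
    {
        var buffer = new NativeBuffer(64);
        buffer.Dispose();
        buffer.Dispose();                        // second call is a harmless no-op
        Console.WriteLine(buffer.IsDisposed);    // True
    }
}
```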

Q7: What is GC.SuppressFinalize and why must you call it?

When you allocate an object that has a finalizer, the GC registers it on the finalization queue. When the object becomes unreachable, the GC cannot reclaim it yet: it keeps the object alive so the finalizer thread can run the finalizer, and surviving that collection promotes the object to the next generation. This delays collection by at least one cycle and adds overhead.

If Dispose() has already cleaned up the unmanaged resource, there is nothing for the finalizer to do. Calling GC.SuppressFinalize(this) tells the GC to skip the finalizer for that object, so it can be reclaimed by the first collection that finds it unreachable, without the extra promotion. This is a performance-critical call in Dispose().

Q8: What is GC pressure and how do you identify it?

GC pressure is when your application allocates objects faster than the GC can collect them, or allocates objects that promote to Gen 2 unnecessarily. Symptoms include: high CPU time in GC (visible in performance monitors), frequent Gen 2 collections, increased pause times, and LOH fragmentation.

You identify GC pressure using:

- dotnet-counters: gc-heap-size, gen-0-gc-count, gen-2-gc-count

- dotnet-trace + PerfView: shows GC event timings and allocation stacks

- Visual Studio Diagnostic Tools: memory usage timeline and GC events

- Application Insights / OpenTelemetry: custom GC metrics via System.Diagnostics.Metrics

Common causes: boxing value types in hot paths, LINQ in tight loops creating intermediate collections, large string concatenations, holding references in long-lived collections.
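To make one of these causes concrete, here is a small sketch that compares the bytes allocated when integers are boxed into an `object[]` with the bytes allocated when they are stored in an `int[]`. `AllocationDemo` and its methods are hypothetical names; `GC.GetAllocatedBytesForCurrentThread()` is used only as a quick in-process measurement, not as a replacement for the profiling tools listed above.

```csharp
using System;

public static class AllocationDemo
{
    // Runs the action and reports how many managed bytes this thread allocated.
    public static long MeasureAllocatedBytes(Action action)
    {
        long before = GC.GetAllocatedBytesForCurrentThread();
        action();
        return GC.GetAllocatedBytesForCurrentThread() - before;
    }

    public static void FillBoxed(int count)
    {
        var sink = new object[count];
        for (int i = 0; i < count; i++)
        {
            sink[i] = i;   // each assignment boxes the int into a new heap object
        }
        GC.KeepAlive(sink);
    }

    public static void FillUnboxed(int count)
    {
        var sink = new int[count];
        for (int i = 0; i < count; i++)
        {
            sink[i] = i;   // stored inline in the array, no per-element allocation
        }
        GC.KeepAlive(sink);
    }

    public static void Main()
    {
        long boxed = MeasureAllocatedBytes(() => FillBoxed(1_000));
        long unboxed = MeasureAllocatedBytes(() => FillUnboxed(1_000));
        Console.WriteLine($"boxed: {boxed} bytes, unboxed: {unboxed} bytes");
    }
}
```

The exact byte counts vary by runtime and platform, but the boxed version should allocate several times more.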

Q9: What is Span<T> and when should you use it?

Span<T> is a ref struct that represents a contiguous region of memory: it can point into a managed array, a stack-allocated buffer, or unmanaged memory. Because a Span<T> value can itself only live on the stack, creating or slicing one allocates nothing on the heap. The same restriction means it cannot be stored in a field of a class or ordinary struct, boxed, captured in a closure, or kept alive across an await (Memory<T> covers those cases).

Use Span<T> when you need to slice, parse, or transform data without copying it. Classic examples: parsing a CSV line from a string without string.Split(), processing a byte buffer from a socket without copying sub-ranges, or slicing an array for processing without creating a new array.

The performance benefit is significant in allocation-heavy scenarios: slices that would otherwise be new heap objects become views over existing memory, so that work adds no GC pressure at all.
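As an illustration of the CSV case, the sketch below sums comma-separated integers by slicing a `ReadOnlySpan<char>` instead of calling `string.Split()`. `SpanCsvDemo.SumCsv` is a hypothetical helper, and it assumes well-formed input with no empty fields.

```csharp
using System;
using System.Globalization;

public static class SpanCsvDemo
{
    // Sums comma-separated integers without allocating substrings or arrays.
    public static int SumCsv(ReadOnlySpan<char> line)
    {
        int sum = 0;
        while (!line.IsEmpty)
        {
            int comma = line.IndexOf(',');
            ReadOnlySpan<char> field = comma >= 0 ? line.Slice(0, comma) : line;

            // int.Parse has an overload that reads directly from a span.
            sum += int.Parse(field, NumberStyles.Integer, CultureInfo.InvariantCulture);

            // Advance past the comma, or finish when no comma remains.
            line = comma >= 0 ? line.Slice(comma + 1) : ReadOnlySpan<char>.Empty;
        }
        return sum;
    }

    public static void Main()
    {
        Console.WriteLine(SumCsv("10,20,30")); // 60
    }
}
```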

Q10: What is ArrayPool<T> and when is it appropriate?

ArrayPool<T> is a shared pool of reusable arrays. Instead of allocating a new array for each operation (which increases GC pressure and can end up on the LOH for large buffers), you rent one from the pool and return it when done.

Use ArrayPool<T> when you need temporary buffers of variable or large size in hot paths — network I/O, serialization, compression, encoding. The key discipline: always return rented arrays, even on exceptions (use try/finally). Rented arrays are not zeroed — they may contain data from previous uses, so never assume they are clean unless you explicitly clear them.

ArrayPool<T> is most impactful for buffers that would otherwise land on the LOH (85,000 bytes or more), because LOH memory is reclaimed only by Gen 2 collections and heavy LOH allocation can trigger them.
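A minimal rent/return sketch, assuming the shared pool is acceptable for your workload. `PoolDemo.SumBytes` is a hypothetical method, and the copy-then-sum body is only a stand-in for real buffer work such as encoding or I/O.

```csharp
using System;
using System.Buffers;

public static class PoolDemo
{
    public static int SumBytes(ReadOnlySpan<byte> source)
    {
        // Rent may return a larger array than requested, and it is not zeroed.
        byte[] rented = ArrayPool<byte>.Shared.Rent(source.Length);
        try
        {
            // Work only on the part we asked for, never on rented.Length.
            Span<byte> buffer = rented.AsSpan(0, source.Length);
            source.CopyTo(buffer);

            int sum = 0;
            foreach (byte b in buffer)
            {
                sum += b;
            }
            return sum;
        }
        finally
        {
            // Always return the array; clearArray wipes our data before reuse.
            ArrayPool<byte>.Shared.Return(rented, clearArray: true);
        }
    }

    public static void Main()
    {
        Console.WriteLine(SumBytes(new byte[] { 1, 2, 3 })); // 6
    }
}
```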

Advanced Questions

Q11: What is the difference between WeakReference<T> and a strong reference?

A strong reference is the normal kind — it keeps the object alive as long as the reference exists. A WeakReference<T> allows the object to be collected even while the weak reference exists. You can check whether the object is still alive and retrieve it with TryGetTarget().

Use cases: caches that should not prevent objects from being collected under memory pressure, event handlers in the observer pattern (weak event pattern to avoid memory leaks), and tracking objects without extending their lifetimes.

The pattern: store a WeakReference<T> in your cache; when you need the object, call TryGetTarget(). If it returns false, the object has been collected and you must recreate it.
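That pattern might be sketched as follows. `WeakCache<TValue>` is a hypothetical class for illustration; it is not thread-safe and never prunes dead entries, both of which a production cache would need to address.

```csharp
using System;
using System.Collections.Generic;

public sealed class WeakCache<TValue> where TValue : class
{
    private readonly Dictionary<string, WeakReference<TValue>> _entries =
        new Dictionary<string, WeakReference<TValue>>();

    public TValue GetOrCreate(string key, Func<TValue> factory)
    {
        // Reuse the cached object only if the GC has not collected it.
        if (_entries.TryGetValue(key, out var weak) && weak.TryGetTarget(out var existing))
        {
            return existing;
        }

        // Missing or collected: recreate and store a new weak reference.
        TValue created = factory();
        _entries[key] = new WeakReference<TValue>(created);
        return created;
    }
}

public static class WeakCacheDemo
{
    public static void Main()
    {
        var cache = new WeakCache<object>();
        object first = cache.GetOrCreate("report", () => new object());
        object second = cache.GetOrCreate("report", () => new object());

        // While 'first' is strongly referenced, the cache returns the same instance.
        Console.WriteLine(ReferenceEquals(first, second)); // True
        GC.KeepAlive(first);
    }
}
```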

Q12: How does the GC handle finalizable objects differently?

When the GC determines that a finalizable object (one whose class declares a finalizer, written ~ClassName()) is unreachable, it does not immediately reclaim its memory. Instead, it moves the object's entry from the finalization queue to the f-reachable queue, which keeps the object alive and lets it be promoted to the next generation. A dedicated finalizer thread then runs the finalizer. Only after that completes is the object truly unreachable and eligible for collection by a later GC of the generation it now lives in.

This means finalizable objects survive at least two GC cycles: once when they become unreachable, and once after their finalizer runs. For objects in Gen 0, this means promotion to at least Gen 1, and often eventual Gen 2 residence. This is why GC.SuppressFinalize is so important: it eliminates this entire extra cycle.

Q13: What is memory fragmentation in the context of .NET and how do you mitigate it?

Fragmentation occurs when free memory is scattered in small non-contiguous blocks, preventing large allocations even though total free memory is sufficient. In .NET, the most common source is the Large Object Heap — because the LOH was historically never compacted, frequent allocation and deallocation of different-sized large objects left gaps that subsequent allocations could not fill.

Mitigation strategies:

- Use ArrayPool<T> to reuse large buffers instead of allocating and discarding them.

- Keep large object sizes consistent so freed slots can be reused by subsequent allocations of the same size.

- In .NET Framework 4.5.1+ and all versions of .NET Core and .NET 5+, you can force LOH compaction by setting GCSettings.LargeObjectHeapCompactionMode = GCLargeObjectHeapCompactionMode.CompactOnce before a GC.Collect() call. Use this sparingly, because it pauses the application.

- If pause time rather than fragmentation is the concern, GCSettings.LatencyMode = GCLatencyMode.SustainedLowLatency asks the GC to avoid blocking Gen 2 collections where it can, at the cost of a larger heap. It does not reduce fragmentation by itself.
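The one-off LOH compaction mentioned in the list can be sketched like this. `LohCompactionDemo` is a hypothetical wrapper; the two `GCSettings` and `GC` calls are the real APIs. This forces a full blocking collection, so it belongs at a known quiet point, not on a hot path.

```csharp
using System;
using System.Runtime;

public static class LohCompactionDemo
{
    // Requests a single LOH compaction and returns the mode observed afterwards.
    public static GCLargeObjectHeapCompactionMode CompactLohOnce()
    {
        // Ask the next full blocking GC to also compact the large object heap.
        GCSettings.LargeObjectHeapCompactionMode =
            GCLargeObjectHeapCompactionMode.CompactOnce;

        // Trigger that full blocking collection now.
        GC.Collect();

        // The setting is documented to reset to Default once compaction has happened.
        return GCSettings.LargeObjectHeapCompactionMode;
    }

    public static void Main()
    {
        Console.WriteLine(CompactLohOnce());
    }
}
```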

Q14: How would you detect and fix a memory leak in a .NET application?

Memory leaks in .NET are almost always caused by long-lived references that prevent the GC from collecting objects. Common sources: static collections that grow unbounded, event handlers that are never unsubscribed, captured closures in long-lived delegates, and caches without eviction policies.

Detection workflow:

- Take memory snapshots using dotnet-dump, Visual Studio's memory profiler, or JetBrains dotMemory.

- Compare snapshots taken at different points to identify which object types are growing.

- Analyze object retention paths — follow who is holding a reference to the leaking objects.

- Fix the root cause: unsubscribe event handlers when objects are disposed, use WeakReference<T> for caches, implement eviction policies (MemoryCache with size limits), and clear static collections when they are no longer needed.

A practical first step: monitor gc-heap-size over time with dotnet-counters. A heap that grows steadily without dropping indicates a leak.
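The event-handler leak is the easiest of these to show in code. In the sketch below, the publisher's delegate list holds a strong reference to every subscriber until it unsubscribes. `Publisher` and `Subscriber` are hypothetical types for illustration; the fix shown is simply unsubscribing in `Dispose()`.

```csharp
#nullable enable
using System;

public sealed class Publisher
{
    public event EventHandler? Changed;

    // Number of handlers (and therefore subscriber objects) currently kept alive.
    public int SubscriberCount => Changed?.GetInvocationList().Length ?? 0;

    public void Raise() => Changed?.Invoke(this, EventArgs.Empty);
}

public sealed class Subscriber : IDisposable
{
    private readonly Publisher _publisher;

    public int Notifications { get; private set; }

    public Subscriber(Publisher publisher)
    {
        _publisher = publisher;
        _publisher.Changed += OnChanged;   // publisher now references this object
    }

    private void OnChanged(object? sender, EventArgs e) => Notifications++;

    // Without this, a long-lived publisher keeps the subscriber reachable forever.
    public void Dispose() => _publisher.Changed -= OnChanged;
}

public static class EventLeakDemo
{
    public static void Main()
    {
        var publisher = new Publisher();
        var subscriber = new Subscriber(publisher);
        Console.WriteLine(publisher.SubscriberCount); // 1

        subscriber.Dispose();
        Console.WriteLine(publisher.SubscriberCount); // 0
    }
}
```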

Q15: What is stackalloc and when is it safe to use?

stackalloc allocates a block of memory on the stack rather than the heap. Combined with Span<T>, you can create stack-allocated buffers for small, short-lived data processing without any heap allocation or GC involvement.

Use it for small, fixed-size buffers in methods that do not recurse deeply: parsing small tokens, temporary transformation buffers, small cryptographic inputs. The risk is stack overflow: a thread's stack is small and fixed (commonly 1 MB by default on Windows; the size varies by platform and can be smaller for threads created with a custom stack size). Allocating large buffers with stackalloc, or using it inside loops or recursive methods, can exhaust the stack and raise a StackOverflowException, which cannot be caught and terminates the process.

A common pattern: use stackalloc for small sizes and fall back to ArrayPool<T> for larger ones, with a size threshold constant like 256 or 512 bytes.
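A sketch of that fallback pattern, assuming a threshold of 256 chars (512 bytes). `StackallocDemo.ToUpper` and the `StackThreshold` constant are hypothetical names chosen for this example.

```csharp
#nullable enable
using System;
using System.Buffers;

public static class StackallocDemo
{
    private const int StackThreshold = 256; // chars, so 512 bytes of stack at most

    public static string ToUpper(ReadOnlySpan<char> input)
    {
        char[]? rented = null;

        // Small inputs use the stack; larger ones borrow an array from the pool.
        Span<char> buffer = input.Length <= StackThreshold
            ? stackalloc char[StackThreshold]
            : (rented = ArrayPool<char>.Shared.Rent(input.Length));
        try
        {
            Span<char> slice = buffer.Slice(0, input.Length);
            input.ToUpperInvariant(slice);   // writes the upper-cased chars into slice
            return slice.ToString();         // the only heap allocation: the result string
        }
        finally
        {
            if (rented != null)
            {
                ArrayPool<char>.Shared.Return(rented);
            }
        }
    }

    public static void Main()
    {
        Console.WriteLine(ToUpper("span")); // SPAN
    }
}
```

Using a constant size for the stackalloc keeps the stack cost predictable regardless of input length.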

FAQ

Q: Does C# have manual memory management?

Managed code does not, but unsafe code with fixed blocks and raw pointers does. stackalloc is also a form of manual stack allocation. For the vast majority of .NET code, the GC handles all memory management automatically.

Q: Does wrapping an object in using guarantee it is immediately collected?

No. using calls Dispose(), which releases unmanaged resources deterministically. It does not trigger a garbage collection. The object's managed memory is reclaimed later, by whichever GC cycle next collects its generation once no live references remain.

Q: What is the difference between Dispose and Close?

Semantically they often do the same thing, but IDisposable.Dispose() is the standard contract and supports the using pattern. Close() is a convention on some types (like Stream) that may or may not call Dispose(). Always prefer Dispose() / using over Close() for reliability.

Q: When should you call GC.Collect() manually?

Almost never in production code. The GC has far better information about memory pressure than you do. The main legitimate use is testing and benchmarking, where you want a clean state before measuring; a rare production exception is a deliberate one-off collection at a known quiet point, such as the LOH compaction described in Q13. Otherwise, calling GC.Collect() in production typically hurts performance by forcing a full collection at an inopportune time.

Q: What is unmanaged memory and how do you allocate it in .NET?

Unmanaged memory is memory allocated outside the GC heap, typically using Marshal.AllocHGlobal, NativeMemory.Alloc (.NET 6+), or P/Invoke to native allocators. It is not tracked by the GC and must be freed manually. It is used primarily for interop with native libraries and for high-performance scenarios where avoiding GC overhead is critical.
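A minimal sketch of the allocate, use, free cycle with `Marshal.AllocHGlobal`, which works without an unsafe context. `UnmanagedDemo.RoundTrip` is a hypothetical method for illustration.

```csharp
using System;
using System.Runtime.InteropServices;

public static class UnmanagedDemo
{
    // Writes an int into unmanaged memory and reads it back.
    public static int RoundTrip(int value)
    {
        IntPtr block = Marshal.AllocHGlobal(sizeof(int)); // not tracked by the GC
        try
        {
            Marshal.WriteInt32(block, value);
            return Marshal.ReadInt32(block);
        }
        finally
        {
            Marshal.FreeHGlobal(block); // must be freed manually, even on exceptions
        }
    }

    public static void Main()
    {
        Console.WriteLine(RoundTrip(42)); // 42
    }
}
```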

Q: What is MemoryMarshal and when is it used?

MemoryMarshal is a utility class in System.Runtime.InteropServices for low-level memory manipulation — casting Span<T> to Span<byte>, creating spans over unmanaged memory, and reinterpreting memory layouts. It is used in high-performance serialization, protocol parsing, and cryptographic code where you need fine-grained control over memory layout without copying data.
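As a small illustration of reinterpreting memory without copying, the sketch below views a `Span<int>` as bytes with `MemoryMarshal.AsBytes` and shows that both views share the same storage. `MemoryMarshalDemo` and its methods are hypothetical names.

```csharp
using System;
using System.Runtime.InteropServices;

public static class MemoryMarshalDemo
{
    // The byte view covers the same memory: 4 bytes per int.
    public static int ByteLengthOf(Span<int> values) =>
        MemoryMarshal.AsBytes(values).Length;

    // Clearing through the byte view zeroes the original ints, since nothing was copied.
    public static void ZeroThroughByteView(Span<int> values)
    {
        Span<byte> bytes = MemoryMarshal.AsBytes(values);
        bytes.Clear();
    }

    public static void Main()
    {
        Span<int> values = stackalloc int[] { 7, 8, 9 };
        Console.WriteLine(ByteLengthOf(values));              // 12

        ZeroThroughByteView(values);
        Console.WriteLine(values[0] + values[1] + values[2]); // 0
    }
}
```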
