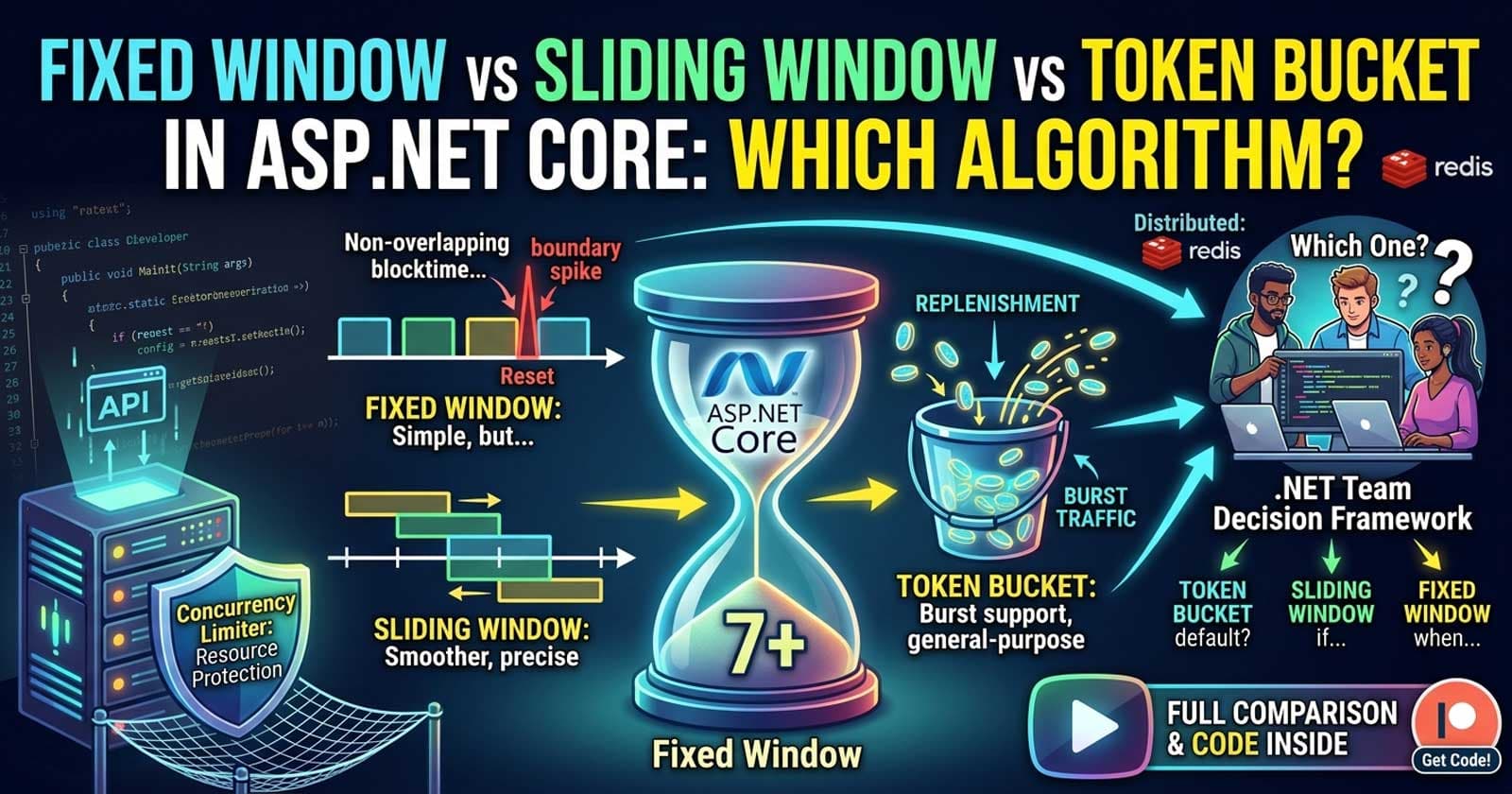

Fixed Window vs Sliding Window vs Token Bucket in ASP.NET Core: Which Rate Limiting Algorithm Should Your .NET Team Use?

Choosing the right rate limiting algorithm for your ASP.NET Core API is one of those decisions that looks straightforward on the surface but carries real consequences in production. Since .NET 7, ASP.NET Core ships with four built-in rate limiting algorithms out of the box — Fixed Window, Sliding Window, Token Bucket, and Concurrency Limiter — and teams that pick the wrong one end up with either burst traffic spikes that overwhelm downstream services or restrictive policies that degrade legitimate user experience.

This article compares all four ASP.NET Core rate limiting algorithms side by side, walks through their mechanics, trade-offs, and real-world failure modes, and gives you a clear recommendation framework for choosing the right one for your .NET team.

🎁 Want implementation-ready .NET source code you can drop straight into your project? Join Coding Droplets on Patreon for exclusive tutorials, premium code samples, and early access to new content. 👉 https://www.patreon.com/CodingDroplets

How ASP.NET Core Rate Limiting Works

ASP.NET Core's built-in rate limiting middleware, introduced in .NET 7 via the Microsoft.AspNetCore.RateLimiting namespace, operates on the concept of limiters and policies. Each limiter is backed by a System.Threading.RateLimiting primitive. These are in-process rate limiters: they work per application instance. For distributed deployments you need a shared backing store (Redis is a common choice), but the algorithm decision still applies.

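The middleware integrates at the pipeline level, evaluates each request against a named policy, and rejects requests that exceed the limit. The default rejection status code is HTTP 503 (Service Unavailable), not 429. If you want clients to back off correctly, set RejectionStatusCode to 429 (Too Many Requests) and add a Retry-After header in the OnRejected callback. You can apply policies globally, per endpoint, or per controller.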

The important thing before comparing algorithms is understanding what you are trying to protect:

- Server resources (CPU, memory, DB connections) → Concurrency Limiter

- Request throughput over time (API quotas) → Window-based or Token Bucket

- Burst absorption (allow a burst then throttle) → Token Bucket

The Four Algorithms: Mechanics and Trade-Offs

Fixed Window Limiter

The Fixed Window algorithm divides time into discrete, non-overlapping windows of a fixed duration. A counter tracks requests in the current window. When the counter hits the limit, subsequent requests are rejected until the window resets.

How it works: Suppose the window is 60 seconds and the limit is 100 requests. A client can send 100 requests in the last second of one window and another 100 in the first second of the next, right after the counter resets. This is the infamous boundary spike problem: two back-to-back windows can let through 2× the intended limit within a couple of seconds.
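The boundary spike is easy to reproduce. Below is a minimal Python simulation of a fixed window counter. It serves only as a language-neutral model of the mechanics and is not the System.Threading.RateLimiting implementation. The class name and the explicit `now` timestamps (in seconds, with windows counted from t = 0) are illustrative assumptions.

```python
class FixedWindowCounter:
    """Simplified fixed window limiter: one counter, reset whenever a new window starts."""

    def __init__(self, permit_limit: int, window_seconds: float):
        self.permit_limit = permit_limit
        self.window_seconds = window_seconds
        self.window_index = 0  # windows are numbered from t = 0
        self.count = 0

    def try_acquire(self, now: float) -> bool:
        index = int(now // self.window_seconds)
        if index != self.window_index:
            # A new window has started: the counter resets entirely.
            self.window_index = index
            self.count = 0
        if self.count < self.permit_limit:
            self.count += 1
            return True
        return False


limiter = FixedWindowCounter(permit_limit=100, window_seconds=60)
# 100 requests in the last second of the first window (t = 59.00 .. 59.99)...
late = sum(limiter.try_acquire(59.0 + i / 100) for i in range(100))
# ...and 100 more in the first second of the next window (t = 60.00 .. 60.99).
early = sum(limiter.try_acquire(60.0 + i / 100) for i in range(100))
print(late + early)  # 200 accepted in under two seconds
```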

Strengths:

- Simplest algorithm to understand and reason about

- Minimal memory footprint — only one counter per partition

- Predictable reset times, which makes it easy to communicate to API consumers via Retry-After headers

Weaknesses:

- Does not smooth traffic — allows burst at window boundaries

- A client can game the boundary by sending requests at the end of one window and the start of the next

- Doesn't reflect actual load over rolling time periods

When to use Fixed Window:

- Coarse-grained daily or hourly quotas (e.g., "1000 API calls per day")

- Internal tool APIs where traffic patterns are predictable

- Scenarios where simplicity and debuggability matter more than precision

- When communicating limits to consumers matters (reset time is easy to reason about)

Sliding Window Limiter

The Sliding Window algorithm addresses the boundary spike problem by breaking each window into segments and tracking request counts per segment. As time advances, the oldest segment drops off and the window slides forward. The result is a rolling count instead of a hard reset.

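How it works: Take a 60-second window divided into 6 segments of 10 seconds each. The number of requests still allowed is the limit minus the sum of requests across all active segments. Every 10 seconds a new segment becomes active and the oldest one expires, returning its permits. The limit is therefore enforced over a window that advances one segment at a time. This approximates a rolling 60-second period; more segments give a closer approximation at the cost of more bookkeeping.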
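Sending the same burst against a segmented sliding window shows the difference. This is again a simplified Python model under stated assumptions. The class name and the `(segment, count)` bookkeeping are hypothetical and do not reflect the .NET internals. Accepted requests are recorded in the segment where they arrive, and a segment's permits come back only when it slides out of the window.

```python
from collections import deque


class SegmentedSlidingWindow:
    """Simplified sliding window limiter: accepted requests are counted per segment,
    and a segment's count is released once it slides out of the window."""

    def __init__(self, permit_limit: int, window_seconds: float, segments_per_window: int):
        self.permit_limit = permit_limit
        self.segments_per_window = segments_per_window
        self.segment_seconds = window_seconds / segments_per_window
        self.segments = deque()  # (segment index, accepted count), oldest first

    def try_acquire(self, now: float) -> bool:
        current = int(now // self.segment_seconds)
        # Drop segments that are no longer among the last `segments_per_window`.
        while self.segments and self.segments[0][0] <= current - self.segments_per_window:
            self.segments.popleft()
        if sum(count for _, count in self.segments) >= self.permit_limit:
            return False
        if self.segments and self.segments[-1][0] == current:
            index, count = self.segments[-1]
            self.segments[-1] = (index, count + 1)
        else:
            self.segments.append((current, 1))
        return True


limiter = SegmentedSlidingWindow(permit_limit=100, window_seconds=60, segments_per_window=6)
late = sum(limiter.try_acquire(59.0 + i / 100) for i in range(100))   # all land in segment 5
early = sum(limiter.try_acquire(60.0 + i / 100) for i in range(100))  # segment 5 is still in the window
print(late, early)  # 100 0
```

If you continue this example, the next request is accepted only at t = 110, when segment 5 (t = 50 to 60) slides out. That is about 50 seconds after the burst rather than a full 60. This is why more segments per window give a closer approximation of a true rolling window.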

Strengths:

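- Greatly reduces the boundary spike problem of Fixed Window: permits spent in a burst only return when their segment slides out of the window, roughly one window later, so a second full burst cannot follow right after the first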

- Smoother traffic shaping — more accurately reflects "requests per N seconds"

- Still uses window-based semantics familiar to developers

Weaknesses:

- Higher memory footprint than Fixed Window (segments × partitions)

- Slightly more complex to debug and explain to API consumers

- No separate burst allowance: the burst ceiling and the sustained rate are the same number (the permit limit per window), whereas Token Bucket lets you tune them independently

When to use Sliding Window:

- Public-facing API endpoints where boundary spikes are a real concern

- Per-user rate limiting in multi-tenant systems

- When you want more accurate enforcement of "X requests per minute" semantics

- REST API quotas for external developers

Token Bucket Limiter

The Token Bucket algorithm works differently from window-based approaches. A bucket holds tokens up to a configured capacity. Tokens are added at a fixed replenishment rate. Each request consumes one (or more) tokens. If the bucket is empty, the request is rejected.

How it works: Configure a bucket with capacity 20 and replenishment of 10 tokens every 10 seconds. A client that has been idle accumulates up to 20 tokens and can burst 20 requests immediately. After the burst, the client must wait for replenishment: 10 tokens per 10 seconds, or an average of 1 token per second. This explicit support for bursting is a key distinction from window-based limiters.
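A minimal Python model of the example above follows. It assumes that tokens are added as a batch of `tokens_per_period` whenever a replenishment period elapses, with periods counted from t = 0. The class and method names are hypothetical, and the timing details of the real TokenBucketRateLimiter may differ.

```python
class TokenBucket:
    """Simplified token bucket: starts full, adds `tokens_per_period` tokens each time a
    replenishment period elapses (periods counted from t = 0), and caps at `token_limit`."""

    def __init__(self, token_limit: int, tokens_per_period: int, period_seconds: float):
        self.token_limit = token_limit
        self.tokens_per_period = tokens_per_period
        self.period_seconds = period_seconds
        self.tokens = token_limit  # a new bucket starts full
        self.last_period = 0       # index of the last period whose tokens were added

    def _replenish(self, now: float) -> None:
        period = int(now // self.period_seconds)
        if period > self.last_period:
            added = (period - self.last_period) * self.tokens_per_period
            self.tokens = min(self.token_limit, self.tokens + added)
            self.last_period = period

    def try_acquire(self, now: float, permits: int = 1) -> bool:
        self._replenish(now)
        if self.tokens >= permits:
            self.tokens -= permits
            return True
        return False


bucket = TokenBucket(token_limit=20, tokens_per_period=10, period_seconds=10)
burst = sum(bucket.try_acquire(0.0) for _ in range(25))
print(burst)                    # 20: full capacity spent at once, 5 rejected
print(bucket.try_acquire(5.0))  # False: nothing added until t = 10
refill = sum(bucket.try_acquire(10.0) for _ in range(15))
print(refill)                   # 10: one period's worth of tokens
```

A freshly created bucket starts full, so every new client, or every client after a restart, gets the full 20-request burst.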

Strengths:

- Explicitly supports controlled bursting — clients can accumulate capacity and use it in a short burst

- Replenishment rate provides a steady throughput ceiling

- Natural fit for scenarios where occasional spikes are expected and acceptable

- A sensible general-purpose default for most API rate limiting scenarios

Weaknesses:

- Harder to communicate "how many requests do I have left?" to consumers — depends on token accumulation state

- A client can burst the full bucket capacity immediately, which may surprise teams expecting smooth traffic

- Requires reasoning about both capacity (burst ceiling) and replenishment rate (steady-state throughput)

When to use Token Bucket:

- REST APIs that serve clients with variable usage patterns (mobile apps, browser clients)

- Webhook receivers or callback endpoints where occasional bursts are legitimate

- General-purpose API rate limiting as a default choice

- When you want to allow short bursts without rejecting legitimate traffic

Concurrency Limiter

The Concurrency Limiter is fundamentally different from the other three. Instead of counting requests over a time window, it limits the number of simultaneous in-flight requests. It does not care about time — only about how many requests are being processed concurrently at any moment.

How it works: Set a permit count of 10. If 10 requests are being processed simultaneously, the 11th waits in a queue (up to a configured queue limit) or is rejected immediately. When one of the 10 completes, a queued request is processed.
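The queueing behavior can be modeled in a few lines of Python. This is a simplified sketch that assumes a FIFO queue, with a queue limit of 2 chosen for illustration. The class and method names are hypothetical. No clock appears anywhere in the model: only the number of in-flight requests matters.

```python
from collections import deque
from typing import Optional


class ConcurrencyLimiter:
    """Simplified concurrency limiter: at most `permit_limit` requests in flight,
    up to `queue_limit` more waiting in FIFO order, and the rest rejected."""

    def __init__(self, permit_limit: int, queue_limit: int):
        self.permit_limit = permit_limit
        self.queue_limit = queue_limit
        self.in_flight = set()
        self.queue = deque()

    def arrive(self, request_id: str) -> str:
        if len(self.in_flight) < self.permit_limit:
            self.in_flight.add(request_id)
            return "running"
        if len(self.queue) < self.queue_limit:
            self.queue.append(request_id)
            return "queued"
        return "rejected"

    def complete(self, request_id: str) -> Optional[str]:
        """Finish a request and hand its permit to the oldest queued request, if any."""
        self.in_flight.remove(request_id)
        if self.queue:
            next_id = self.queue.popleft()
            self.in_flight.add(next_id)
            return next_id
        return None


limiter = ConcurrencyLimiter(permit_limit=10, queue_limit=2)
states = [limiter.arrive(f"req-{i}") for i in range(1, 14)]
print(states.count("running"), states.count("queued"), states.count("rejected"))  # 10 2 1
print(limiter.complete("req-1"))  # req-11 takes the freed permit
```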

Strengths:

- Directly protects server resources (thread pool, DB connections, memory) regardless of request duration

- Effective against slow requests that tie up workers — window-based limiters would not catch this

- Simple to tune based on observed concurrency metrics

Weaknesses:

- Does not limit total throughput over time — a burst of very fast requests will all pass through

- Setting the permit count requires knowledge of actual concurrency capacity

- Not suitable as a quota enforcement mechanism

When to use Concurrency Limiter:

- Long-running endpoints (file uploads, report generation, heavy DB queries)

- Protecting downstream dependencies with fixed connection pools

- APIs that interact with rate-limited third-party services

- As a secondary limiter alongside a throughput limiter, not instead of one

Side-by-Side Comparison

| Dimension | Fixed Window | Sliding Window | Token Bucket | Concurrency Limiter |
|---|---|---|---|---|
| Measures | Requests per window | Requests per rolling window | Token consumption rate | Concurrent requests |
| Burst behavior | Up to 2× the limit at a window boundary | Reduced at boundaries | Up to bucket capacity | N/A (not time-based) |
| Memory per partition | Very low | Low–Medium | Low | Very low |
| Complexity | Low | Medium | Medium | Low |
| Best for | Daily/hourly quotas | Per-user API quotas | General API limiting | Resource protection |
| Communicating limits | Easy (window reset) | Moderate | Complex | N/A |
| Handles slow requests | No | No | No | Yes |

Combining Algorithms: The Real-World Pattern

Most production APIs need two limiters in combination:

- A throughput limiter (Token Bucket or Sliding Window) to enforce API quotas per user or API key

- A Concurrency Limiter to protect server resources and slow downstream dependencies

A typical pattern for an enterprise ASP.NET Core API:

- Global concurrency limiter: 50 concurrent requests per instance, which caps in-flight work and helps prevent thread pool saturation

- Per-user Token Bucket: capacity 20, replenishment 10/minute — enforces per-user quotas while allowing short bursts

- Per-endpoint Fixed Window: 10,000 requests per hour on bulk/export endpoints — coarse-grained protection for expensive operations

This layered approach means each limiter handles what it is best suited for, rather than trying to use one algorithm for all scenarios.
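Conceptually, layering means a request is admitted only if every limiter accepts it. A permit taken by one limiter must also be given back if a later limiter rejects the request. The Python sketch below models that logic for the first two layers above: a concurrency cap of 50 and a per-user bucket of 20, with replenishment omitted. The names are hypothetical. In ASP.NET Core, the middleware combines the global limiter and the endpoint policy for you; the sketch only shows the reasoning.

```python
class ConcurrencyCap:
    """Instance-wide cap on in-flight requests (simplified: rejects instead of queueing)."""

    def __init__(self, permit_limit: int):
        self.permit_limit = permit_limit
        self.in_flight = 0

    def try_acquire(self) -> bool:
        if self.in_flight < self.permit_limit:
            self.in_flight += 1
            return True
        return False

    def release(self) -> None:
        self.in_flight -= 1


class PerUserBuckets:
    """One token bucket per user key; replenishment is omitted to keep the sketch short."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.tokens = {}

    def try_acquire(self, user: str) -> bool:
        remaining = self.tokens.get(user, self.capacity)  # new partitions start full
        if remaining == 0:
            return False
        self.tokens[user] = remaining - 1
        return True


def admit(user: str, concurrency: ConcurrencyCap, buckets: PerUserBuckets) -> bool:
    """Admit a request only if every limiter in the chain accepts it."""
    if not concurrency.try_acquire():
        return False
    if not buckets.try_acquire(user):
        concurrency.release()  # hand back the permit the earlier limiter granted
        return False
    return True  # the caller releases the concurrency permit when the request finishes


concurrency = ConcurrencyCap(permit_limit=50)
buckets = PerUserBuckets(capacity=20)

alice = [admit("alice", concurrency, buckets) for _ in range(25)]
print(alice.count(True), concurrency.in_flight)  # 20 20: alice's last 5 exceed her bucket
bob = [admit("bob", concurrency, buckets) for _ in range(25)]
print(bob.count(True), concurrency.in_flight)    # 20 40: bob has a separate bucket
```

Because the concurrency cap is checked first, a request rejected for lack of capacity does not burn one of the user's tokens. Whichever order you choose, releasing earlier permits on rejection is what keeps the layers from leaking capacity.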

Decision Framework: Which Algorithm Should Your .NET Team Use?

Use this framework to make the call for your specific scenario:

Start with Token Bucket as your default. It handles the widest variety of real-world API traffic patterns: it allows legitimate burst behavior and provides a steady throughput ceiling. The existing GitHub repo https://github.com/codingdroplets/dotnet-rate-limiting-api demonstrates Token Bucket applied in a real API project.

Switch to Sliding Window when: your API serves external developers who game window boundaries, you need accurate "X requests per rolling minute" semantics, or you are seeing unexpected bursts at window resets in a Fixed Window implementation.

Use Fixed Window when: you are implementing daily or hourly quotas where the boundary spike is acceptable (or even desirable — it lets clients "reset" on a predictable schedule), or when simplicity and debuggability are more important than precision.

Add a Concurrency Limiter when: your endpoints have variable execution time, you have limited downstream connection pools, or you are seeing thread pool exhaustion under load. This should almost always be added as a second limiter alongside your throughput limiter, not as a replacement.

What Microsoft Docs Don't Cover

The official Microsoft documentation (learn.microsoft.com/en-us/aspnet/core/performance/rate-limit) explains each algorithm competently but avoids giving teams a clear default recommendation. In practice, most teams would be best served by starting with Token Bucket for general endpoints and layering in a Concurrency Limiter for resource-heavy paths.

There are also important operational gaps the docs don't cover:

- Distributed deployment: All four built-in limiters are in-process only. In a load-balanced deployment with multiple instances, each instance maintains its own counters. For distributed rate limiting, you need Redis-backed implementations (the RedisRateLimiting package or a custom IRateLimiterPolicy).

- Warm-up behavior: Token Bucket starts with a full bucket on application start, meaning every client gets a full burst allowance after deployment. If this matters, consider a startup delay or pre-depleted bucket.

- Queue depth tuning: All algorithms support a QueueLimit that queues excess requests rather than immediately rejecting them. A deep queue can delay responses significantly; a shallow queue rejects quickly. Tune this based on your latency SLA, not just throughput.
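The following small Python simulation shows why in-process counters break down behind a load balancer. It uses three instances, each enforcing a limit of 100 on its own. The simulation assumes even round-robin routing and omits window resets. Real load balancers may be sticky or uneven, so the actual multiplier varies.

```python
from itertools import cycle


class InProcessFixedWindow:
    """Per-instance counter: each application instance only sees its own traffic."""

    def __init__(self, permit_limit: int):
        self.permit_limit = permit_limit
        self.count = 0  # a single window; resets are omitted for brevity

    def try_acquire(self) -> bool:
        if self.count < self.permit_limit:
            self.count += 1
            return True
        return False


instances = [InProcessFixedWindow(permit_limit=100) for _ in range(3)]
load_balancer = cycle(instances)  # round-robin routing across instances

accepted = sum(next(load_balancer).try_acquire() for _ in range(1000))
print(accepted)  # 300: three instances x 100, not the intended 100
```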

To communicate remaining quota and reset times to API consumers, look at the IETF HTTPAPI working group's RateLimit header fields specification (draft-ietf-httpapi-ratelimit-headers). It proposes a standardized set of headers for this purpose.

Recommendation

For most .NET teams building production APIs in 2026:

- Default choice: Token Bucket per-user/per-API-key + Concurrency Limiter as a safety net

- External developer APIs: Sliding Window per user, Fixed Window for daily quotas

- Internal microservices: Fixed Window (simple, debuggable) + Concurrency Limiter if calling slow downstream services

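- File upload / export endpoints: Concurrency Limiter as the primary control, since these requests are long-running and resource-bound rather than high-volume, paired with a coarse throughput limit such as an hourly Fixed Window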


Frequently Asked Questions

What is the difference between Fixed Window and Sliding Window rate limiting in ASP.NET Core?

Fixed Window divides time into discrete, non-overlapping windows. When a window expires, the counter resets entirely. This means a client can send double the limit by timing requests across a window boundary. Sliding Window divides each window into segments and tracks a rolling count, so a second full burst cannot land right after a window boundary. Sliding Window is more accurate but uses more memory per partition.

When should I use Token Bucket over Sliding Window in .NET?

Use Token Bucket when your clients have legitimate burst patterns, such as mobile apps checking for updates, users exporting data, or any scenario where occasional spikes are expected. Token Bucket accumulates capacity during idle periods (up to the bucket size) and allows it to be spent in a burst, and you tune the burst ceiling and the sustained rate separately. With Sliding Window, the burst ceiling and the sustained rate are the same number: the permit limit per window.

Does ASP.NET Core rate limiting work across multiple instances in a distributed deployment?

No. The built-in ASP.NET Core rate limiting algorithms are all in-process. Each instance maintains its own counters, so in a load-balanced deployment with N instances, a client can effectively get up to N times the configured limit. For distributed rate limiting, you need a shared backing store such as Redis with a compatible IRateLimiterPolicy implementation.

What HTTP status code does ASP.NET Core return when rate limiting rejects a request?

By default, ASP.NET Core returns HTTP 503 (Service Unavailable) when a rate limiter rejects a request. To return HTTP 429 (Too Many Requests) instead, set RateLimiterOptions.RejectionStatusCode to StatusCodes.Status429TooManyRequests. You can further customize the rejection response, including adding a Retry-After header, using the OnRejected callback. For Fixed Window and Sliding Window, the reset time can be calculated from the window start; for Token Bucket, it depends on token replenishment timing.
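The reset-time arithmetic for a Retry-After value can be sketched as two small Python functions. They assume that windows and replenishment periods are aligned to t = 0 and that tokens arrive in batches at each period boundary. The function names are hypothetical. The built-in limiters can also attach a retry hint to a rejected lease (readable via `MetadataName.RetryAfter`), so prefer that value when it is present.

```python
import math


def fixed_window_retry_after(now: float, window_seconds: float) -> int:
    """Whole seconds until the current fixed window ends and the counter resets."""
    window_end = (math.floor(now / window_seconds) + 1) * window_seconds
    return math.ceil(window_end - now)


def token_bucket_retry_after(now: float, tokens: int, permits: int,
                             tokens_per_period: int, period_seconds: float) -> int:
    """Whole seconds until enough tokens have been replenished for `permits`,
    assuming a batch of `tokens_per_period` arrives at every period boundary."""
    missing = permits - tokens
    if missing <= 0:
        return 0
    periods_needed = math.ceil(missing / tokens_per_period)
    next_tick = (math.floor(now / period_seconds) + 1) * period_seconds
    ready_at = next_tick + (periods_needed - 1) * period_seconds
    return math.ceil(ready_at - now)


print(fixed_window_retry_after(now=75.0, window_seconds=60))  # 45
print(token_bucket_retry_after(now=12.0, tokens=0, permits=1,
                               tokens_per_period=10, period_seconds=10))  # 8
```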

Can I apply multiple rate limiting algorithms to the same endpoint in ASP.NET Core?

Yes. The global limiter (RateLimiterOptions.GlobalLimiter) runs for every request in addition to any endpoint policy, and PartitionedRateLimiter.CreateChained can combine several partitioned limiters into one. A common pattern is to apply a global Concurrency Limiter via GlobalLimiter and a per-user Token Bucket limiter via the [EnableRateLimiting] attribute on specific endpoints or controllers.

Is the Concurrency Limiter a replacement for throughput-based rate limiting?

No. The Concurrency Limiter protects against having too many simultaneous requests in flight, not too many requests over time. A burst of very fast requests will all pass through a Concurrency Limiter if concurrency permits it, even if the total volume is high. Use a Concurrency Limiter alongside a throughput limiter (Fixed Window, Sliding Window, or Token Bucket), not as a replacement for one.

What happens to queued requests if the application restarts?

Queued requests are held in memory and are lost if the application restarts or if the request times out before a permit becomes available. ASP.NET Core's built-in rate limiters do not persist queue state. In high-availability scenarios, design your clients to handle 429 responses and implement retry logic with exponential backoff rather than relying on server-side queuing.
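On the client side, you can sketch a retry policy in Python that honors Retry-After and otherwise backs off exponentially. The "full jitter" variant used here picks a random delay between 0 and an exponentially growing ceiling. It is one common choice, not something ASP.NET Core prescribes. The function name, the base delay of 1 second, and the 30-second cap are illustrative assumptions.

```python
import random
from typing import Optional


def next_delay(attempt: int, retry_after: Optional[float] = None,
               base_seconds: float = 1.0, cap_seconds: float = 30.0,
               rng: Optional[random.Random] = None) -> float:
    """Delay in seconds before retry number `attempt` (0-based) after a 429.

    Uses the server's Retry-After hint when present; otherwise returns a random
    delay between 0 and min(cap_seconds, base_seconds * 2**attempt)."""
    if retry_after is not None:
        return retry_after
    if rng is None:
        rng = random.Random()
    ceiling = min(cap_seconds, base_seconds * (2 ** attempt))
    return rng.uniform(0, ceiling)


rng = random.Random(42)
for attempt in range(5):
    print(f"attempt {attempt}: wait {next_delay(attempt, rng=rng):.2f}s")
print(next_delay(0, retry_after=12.0))  # 12.0: the server's hint takes precedence
```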