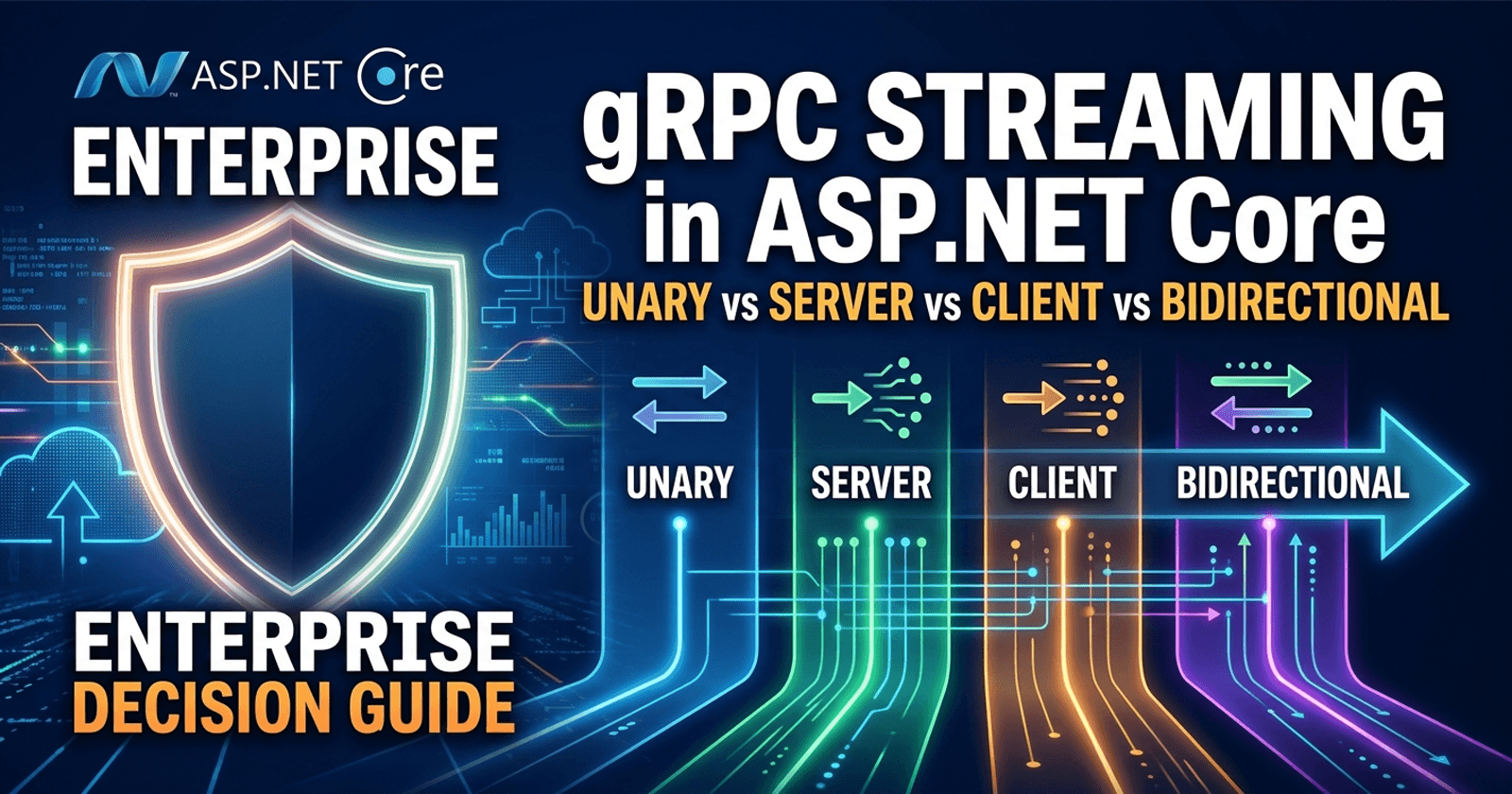

gRPC Streaming in ASP.NET Core: Unary vs Server vs Client vs Bidirectional — Enterprise Decision Guide

Picking the wrong gRPC call type is one of those decisions that looks fine in a demo and causes trouble in production. A bidirectional streaming endpoint standing in for a unary call burns connection resources for no gain. A unary endpoint doing the job of server streaming forces clients to poll, adds latency, and quietly degrades reliability under load. In ASP.NET Core, all four gRPC call types are about equally easy to implement, which makes it tempting to reach for whichever one is familiar rather than the one that fits. This guide cuts through that confusion.

What Are the Four gRPC Call Types?

gRPC defines four method types based on whether the client sends one message or a stream, and whether the server responds with one message or a stream. In ASP.NET Core, each maps directly to a proto definition and a corresponding service method signature.

Unary: One request, one response. The client sends a single message and waits for a single reply. Equivalent to a standard HTTP request/response.

Server streaming: One request, many responses. The client sends one message; the server streams back a sequence of messages and closes the stream when done.

Client streaming: Many requests, one response. The client sends a stream of messages; the server processes them and replies once when the stream is complete.

Bidirectional streaming: Many requests, many responses. Both sides send streams of messages independently and concurrently. Either side may close its stream at any point.

All four are defined in your .proto file and all four are supported natively in ASP.NET Core. The decision is architectural, not syntactic.
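As a rough sketch of how the four shapes look on the server side, the class below mirrors the method signatures that ASP.NET Core gRPC handlers use. `OrderRequest`, `OrderReply`, and `OrderServiceSketch` are hypothetical stand-ins for code that would normally be generated from a .proto file, and the `rpc` lines in the comments are illustrative only. In a real project these methods would be overrides on the generated base class. The sketch assumes the `Grpc.AspNetCore` package for the `Grpc.Core` types.

```csharp
using System.Threading.Tasks;
using Grpc.Core;

// Hypothetical message types; real ones are generated from the .proto file.
public sealed class OrderRequest { public int Id { get; set; } }
public sealed class OrderReply { public int Id { get; set; } public int Count { get; set; } }

// Plain class for illustration; a real service derives from the generated base class.
public class OrderServiceSketch
{
    // proto: rpc GetOrder (OrderRequest) returns (OrderReply);
    public Task<OrderReply> GetOrder(OrderRequest request, ServerCallContext context)
        => Task.FromResult(new OrderReply { Id = request.Id, Count = 1 });

    // proto: rpc ListOrders (OrderRequest) returns (stream OrderReply);
    public async Task ListOrders(OrderRequest request,
        IServerStreamWriter<OrderReply> responseStream, ServerCallContext context)
    {
        // Stop early if the client disconnects or the deadline passes.
        for (var i = 0; i < 3 && !context.CancellationToken.IsCancellationRequested; i++)
            await responseStream.WriteAsync(new OrderReply { Id = request.Id + i, Count = 1 });
    }

    // proto: rpc UploadOrders (stream OrderRequest) returns (OrderReply);
    public async Task<OrderReply> UploadOrders(
        IAsyncStreamReader<OrderRequest> requestStream, ServerCallContext context)
    {
        var count = 0;
        await foreach (var item in requestStream.ReadAllAsync(context.CancellationToken))
            count++;
        return new OrderReply { Count = count }; // single reply once the client stream ends
    }

    // proto: rpc SyncOrders (stream OrderRequest) returns (stream OrderReply);
    public async Task SyncOrders(IAsyncStreamReader<OrderRequest> requestStream,
        IServerStreamWriter<OrderReply> responseStream, ServerCallContext context)
    {
        // Simple echo; real duplex handlers often read and write independently.
        await foreach (var request in requestStream.ReadAllAsync(context.CancellationToken))
            await responseStream.WriteAsync(new OrderReply { Id = request.Id, Count = 1 });
    }
}
```

The point of the sketch is the parameter lists: a stream on the request side shows up as an `IAsyncStreamReader<T>`, a stream on the response side as an `IServerStreamWriter<T>`, and only the non-streaming response sides return a `Task<TReply>`.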

What Is the Right gRPC Streaming Pattern for Your Use Case?

This is the question most teams skip. They pick server streaming because it sounds more advanced, or stick with unary because it maps to what they already know from REST. Neither instinct is reliable.

Match the call type to the communication shape of the operation:

| Scenario | Best Fit |
|---|---|
| Query or command with a single result | Unary |
| Pushing a large result set to a client | Server streaming |
| Ingesting a batch or event feed from a client | Client streaming |
| Real-time collaborative or duplex communication | Bidirectional streaming |
| File upload | Client streaming |
| Notifications / live dashboard feed | Server streaming |
| Two-way chat or collaborative editing | Bidirectional streaming |

Unary Calls: The Baseline

Unary is the default pattern for most service-to-service communication. A client calls a method, the server processes the request, and the client receives a response. Deadline propagation, cancellation, and error handling all work cleanly.

Use unary when:

- The result fits in a single message

- You need simple request/response semantics

- The operation is latency-sensitive and per-call overhead matters

- The operation must be idempotent and easy to retry

Avoid unary when:

- The response is large enough to benefit from streaming (typically hundreds of items or more)

- The operation produces results progressively and the client can act on them as they arrive

- You need to push updates to a client that are triggered by server-side events rather than client requests

Unary calls are also the easiest to test, observe, and reason about in production. If a unary call meets the requirements, there is no architectural benefit to replacing it with a streaming variant.

Server Streaming: Push Results as They Are Ready

Server streaming is the right pattern when the server can produce results incrementally and the client benefits from receiving them before the full response is ready.

A search index returning thousands of results, a report generator that processes rows as it queries, a notification endpoint that pushes events to a connected client — all of these are better served by server streaming than by a unary call that blocks until the full response is assembled.

Use server streaming when:

- The response volume is large and the client can process it incrementally

- Results become available progressively on the server side

- You are pushing live events or data feeds to a subscribed client

- The client does not have data to send after the initial request

Avoid server streaming when:

- The result set is small and fits comfortably in a single message

- The client needs to interleave its own messages with the server's stream

- The consumer infrastructure (API gateways, load balancers) does not support long-lived streaming connections — note that Azure App Service and IIS have known limitations with bidirectional streaming, but server streaming is generally better supported

An important production consideration: server streaming calls are long-lived. Under high concurrency, every open stream holds memory, call state, and an HTTP/2 stream slot on the server, and handlers that block instead of awaiting also tie up thread pool threads. Design your eviction and timeout strategy before you ship to production.
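One possible eviction strategy is a hard cap on stream lifetime, linked to the per-call cancellation token. The sketch below uses only the base class library. `StreamLifetime`, `WithMaxLifetime`, and `PumpAsync` are hypothetical names, the `write` delegate stands in for `responseStream.WriteAsync(...)`, and the call token stands in for `ServerCallContext.CancellationToken`.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class StreamLifetime
{
    // Links the per-call token (ServerCallContext.CancellationToken in a handler)
    // with a server-side maximum lifetime, so forgotten streams are evicted.
    public static CancellationTokenSource WithMaxLifetime(
        CancellationToken callToken, TimeSpan maxLifetime)
    {
        var cts = CancellationTokenSource.CreateLinkedTokenSource(callToken);
        cts.CancelAfter(maxLifetime);
        return cts;
    }

    // Stand-in for a streaming loop: writes until the token fires, then exits quietly.
    public static async Task<int> PumpAsync(Func<int, Task> write, CancellationToken token)
    {
        var sent = 0;
        try
        {
            while (true)
            {
                token.ThrowIfCancellationRequested();
                await write(sent);
                sent++;
                await Task.Delay(TimeSpan.FromMilliseconds(10), token);
            }
        }
        catch (OperationCanceledException)
        {
            // Expected on client disconnect, deadline expiry, or the lifetime cap.
        }
        return sent;
    }
}
```

In a handler this might be wired up as `using var cts = StreamLifetime.WithMaxLifetime(context.CancellationToken, TimeSpan.FromMinutes(30));` followed by a loop that observes `cts.Token`. The 30-minute figure is an arbitrary placeholder, not a recommendation.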

Client Streaming: Efficient Batch Ingestion

Client streaming suits scenarios where the client has a sequence of messages to send and the server's reply only makes sense after processing all of them — or a significant portion of them.

Bulk telemetry ingestion, IoT sensor data pipelines, file upload, and transaction batch processing are canonical examples. The alternative — batching multiple items into a single large unary message — works at small scale but creates message size and timeout problems that client streaming avoids.

Use client streaming when:

- The client has a variable-length sequence of messages to send

- The server needs to process the full stream (or a window of it) before responding

- You need to send data incrementally rather than all at once

- Batching into a single large request would hit message size limits or timeout ceilings

Avoid client streaming when:

- The server needs to send responses before the client finishes sending — use bidirectional streaming instead

- The sequence is small and predictable — a unary call with a repeated field in the proto message may be simpler and just as effective

Anti-pattern to watch for: treating client streaming as a fire-and-forget channel without proper backpressure handling. gRPC does not enforce flow control beyond what HTTP/2 provides. If the server cannot keep up with client messages, the producer must implement its own rate limiting or the stream will stall or fail under load.

Bidirectional Streaming: Full Duplex Communication

Bidirectional streaming gives both sides independent, concurrent streams. Neither side has to wait for the other to finish before sending. This is the most flexible pattern — and the most operationally complex.

It is the right choice for real-time collaborative applications, live chat, duplex telemetry with command feedback, and any scenario where client-to-server and server-to-client messages are driven by independent events.

Use bidirectional streaming when:

- Both sides send messages independently, not in a strict request-reply pattern

- The interaction is long-lived and conversational in nature

- You need to push server-initiated messages to a client that is also sending messages to the server concurrently

- You are building real-time gaming, collaborative editing, or IoT command-and-control systems

Avoid bidirectional streaming when:

- Only one side is generating a stream — that is server or client streaming

- The interaction is fundamentally request-reply — unary handles it more cleanly

- Your deployment environment has hosting constraints: Azure App Service and IIS have known limitations with bidirectional streaming, so confirm support for your platform version first and prefer server streaming or gRPC-Web where it is missing

Bidirectional streaming connections require careful lifecycle management. Define clear rules for who initiates stream closure, how errors propagate across both streams, and how you handle half-open connections when one side disconnects unexpectedly.
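One way to make those lifecycle rules explicit is to run the read side and the write side as separate loops joined by a channel, with a defined shutdown order. The sketch below models this with base class library types only: `inbound` stands in for `requestStream.ReadAllAsync(context.CancellationToken)` and `write` for `responseStream.WriteAsync(...)`. The `DuplexSketch` name and the `"ack:"` reply format are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Channels;
using System.Threading.Tasks;

public static class DuplexSketch
{
    public static async Task RunAsync(
        IAsyncEnumerable<string> inbound, Func<string, Task> write, CancellationToken ct)
    {
        using var linked = CancellationTokenSource.CreateLinkedTokenSource(ct);

        // All outbound messages go through one channel with a single reader, because
        // a gRPC response stream must not be written to concurrently.
        var outbound = Channel.CreateBounded<string>(
            new BoundedChannelOptions(16) { SingleReader = true });

        var writer = Task.Run(async () =>
        {
            try
            {
                await foreach (var message in outbound.Reader.ReadAllAsync(linked.Token))
                    await write(message);
            }
            catch
            {
                linked.Cancel(); // a failed write also stops the read side
                throw;
            }
        });

        try
        {
            // Read side: runs until the client completes its stream or the call is cancelled.
            await foreach (var message in inbound.WithCancellation(linked.Token))
                await outbound.Writer.WriteAsync("ack:" + message, linked.Token);
        }
        finally
        {
            // Shutdown order: stop accepting output, then wait for queued messages to drain.
            outbound.Writer.Complete();
            await writer;
        }
    }
}
```

Other server-side producers could also write to `outbound.Writer`, which is how server-initiated messages would join the same ordered output without concurrent writes to the response stream.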

Enterprise Decision Matrix

| Factor | Unary | Server Streaming | Client Streaming | Bidirectional |
|---|---|---|---|---|
| Simplicity | ✅ Highest | ✅ Moderate | ✅ Moderate | ⚠️ Lowest |
| Server can respond before client finishes sending | N/A (single request) | N/A (single request) | ❌ No | ✅ Yes |
| Server push capability | ❌ No | ✅ Yes | ❌ No | ✅ Yes |
| Works on IIS / Azure App Service | ✅ Yes | ✅ Yes | ✅ Yes | ⚠️ Known limitations |
| Retry / idempotency | ✅ Easy | ⚠️ Manual | ⚠️ Manual | ⚠️ Complex |
| Observability complexity | Low | Medium | Medium | High |
| Resource consumption | Low | Medium | Medium | High |
| gRPC-Web compatible | ✅ Yes | ✅ Yes | ⚠️ Limited | ❌ No |

Use this matrix to match your operation's communication shape to the right call type. Always validate against your hosting environment before committing to a streaming pattern.

Anti-Patterns to Avoid

Streaming as a performance optimisation for small payloads: A streaming call has setup and lifecycle overhead of its own. For small, bounded responses, unary is simpler and usually faster.

Bidirectional streaming for sequential send-then-receive phases: If the client and server are not actually interleaving messages (for example, the client sends its whole stream first and only then the server responds with a stream), this is client-then-server streaming, not true bidirectional. A client streaming call followed by a server streaming call is cleaner.

Ignoring deadline propagation: Streaming connections can stay open indefinitely if you do not set deadlines. Always propagate deadlines and honour cancellation tokens in your streaming handlers.
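A minimal sketch of both halves of that advice, assuming the `Grpc.Core` types from the `Grpc.AspNetCore` or `Grpc.Net.Client` packages. `DeadlineSketch` and its method names are hypothetical helpers, not part of any library.

```csharp
using System;
using System.Threading;
using Grpc.Core;

public static class DeadlineSketch
{
    // Client side: give every call, streaming included, an explicit deadline.
    public static CallOptions WithTimeout(TimeSpan timeout, CancellationToken ct = default)
        => new CallOptions(deadline: DateTime.UtcNow.Add(timeout), cancellationToken: ct);

    // Server side: forward the incoming deadline and cancellation to a downstream call,
    // so the whole chain gives up at the same moment.
    public static CallOptions Propagate(ServerCallContext context)
        => new CallOptions(deadline: context.Deadline, cancellationToken: context.CancellationToken);
}
```

Generated gRPC clients expose overloads that accept a `CallOptions` value, so either result can be passed straight into a call. For a long-lived stream the timeout is effectively a maximum stream duration, so pick it with reconnect behaviour in mind.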

Using gRPC streaming behind an API gateway that does not support HTTP/2: Many reverse proxies and API gateways default to HTTP/1.1 for backend connections, which breaks gRPC streaming. Verify end-to-end HTTP/2 support before exposing a streaming gRPC service publicly.

Skipping backpressure design: High-volume streaming without flow control leads to producer/consumer imbalance. Plan your backpressure strategy, whether that is manual rate limiting, a bounded System.Threading.Channels buffer, or deadline enforcement, before you see it fail in production.

Frequently Asked Questions

What is the difference between unary and server streaming in gRPC?

Unary sends one request and receives one response, a standard request/reply pattern. Server streaming sends one request and receives a sequence of responses; the server pushes results as they become available. Use server streaming when the result set is large, incremental, or event-driven.

When should I use bidirectional streaming instead of server streaming in ASP.NET Core?

Use bidirectional streaming only when both the client and the server need to send messages independently and concurrently. If only the server is generating a stream, server streaming is simpler and has fewer hosting constraints. Bidirectional streaming has known limitations on Azure App Service and IIS, so confirm support for your platform version before relying on it.

Does gRPC streaming work behind an API gateway?

It depends on the gateway. gRPC requires HTTP/2 end-to-end. Many API gateways default to HTTP/1.1 for backend connections, which breaks gRPC streaming. YARP supports HTTP/2 backend connections. gRPC support in managed gateways such as AWS API Gateway and Azure API Management varies by product and tier, so check their current documentation and enable it explicitly where it is available.

Can I use gRPC streaming with gRPC-Web?

Server streaming is supported with gRPC-Web with some limitations. Client streaming support in gRPC-Web is limited and implementation-dependent. Bidirectional streaming is not supported in gRPC-Web. For browser-facing services, use server streaming or unary calls.

What happens if a client disconnects mid-stream?

In ASP.NET Core, the CancellationToken on the ServerCallContext is cancelled when the client disconnects. Your streaming handler must observe this token and exit the streaming loop cleanly to release server resources. Failure to handle disconnects is a common source of resource leaks in gRPC streaming services.

Is gRPC client streaming the right approach for file uploads?

Yes. Client streaming maps naturally to file upload, particularly for large files or streaming uploads where the total size is not known upfront. You avoid message size limits and can stream chunks as they are ready. Pair it with a deadline to prevent stalled uploads from holding open connections indefinitely.
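The client-side half of such an upload is mostly a chunking loop. The sketch below shows only that part, using base class library types; `UploadChunks` is a hypothetical helper and the chunk size is left to the caller.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Runtime.CompilerServices;
using System.Threading;

public static class UploadChunks
{
    // Reads the source in fixed-size pieces without loading the whole file into memory.
    public static async IAsyncEnumerable<byte[]> ReadAsync(Stream source, int chunkSize,
        [EnumeratorCancellation] CancellationToken ct = default)
    {
        var buffer = new byte[chunkSize];
        int read;
        while ((read = await source.ReadAsync(buffer.AsMemory(0, chunkSize), ct)) > 0)
        {
            // Copy out exactly the bytes read so the shared buffer can be reused.
            var chunk = new byte[read];
            Array.Copy(buffer, chunk, read);
            yield return chunk;
        }
    }
}
```

Each chunk would then be wrapped in a request message (for example a hypothetical `FileChunk` with a `bytes` field) and written with `call.RequestStream.WriteAsync(...)`. Calling `call.RequestStream.CompleteAsync()` afterwards signals the end of the upload, and awaiting the call yields the server's single reply.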

How do I implement backpressure in a high-volume gRPC server streaming endpoint?

gRPC relies on HTTP/2 flow control for backpressure, but this does not give you application-level control over the producer rate. For high-throughput scenarios, use System.Threading.Channels as an intermediate buffer between your data source and the streaming write loop, and implement producer-side rate limiting. This keeps the stream healthy without overwhelming the consumer.
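A minimal sketch of that buffer, assuming a single producer and a single write loop. `BackpressureSketch` is a hypothetical name, the integer items stand in for real messages, and the `write` delegate stands in for `responseStream.WriteAsync(...)`.

```csharp
using System;
using System.Threading;
using System.Threading.Channels;
using System.Threading.Tasks;

public static class BackpressureSketch
{
    public static async Task<int> RunAsync(int items, int capacity,
        Func<int, Task> write, CancellationToken ct = default)
    {
        // Bounded + Wait: the producer's WriteAsync suspends when the buffer is full,
        // so a slow consumer throttles the producer instead of growing memory.
        var channel = Channel.CreateBounded<int>(new BoundedChannelOptions(capacity)
        {
            FullMode = BoundedChannelFullMode.Wait,
            SingleReader = true,
            SingleWriter = true
        });

        var producer = Task.Run(async () =>
        {
            try
            {
                for (var i = 0; i < items; i++)
                    await channel.Writer.WriteAsync(i, ct);
            }
            finally
            {
                channel.Writer.Complete(); // lets the read loop finish
            }
        });

        var written = 0;
        await foreach (var item in channel.Reader.ReadAllAsync(ct))
        {
            await write(item); // in a handler: responseStream.WriteAsync(...)
            written++;
        }

        await producer; // surface any producer failure
        return written;
    }
}
```

The capacity is the tuning knob: a small value keeps memory flat and slows the producer quickly, while a larger one absorbs bursts at the cost of more buffered messages per open stream.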