.NET Microservices Architecture Interview Questions for Senior Developers (2026)

If you are preparing for a senior .NET developer role in 2026, microservices architecture is no longer optional knowledge — it is table stakes. Interviewers at product companies and enterprise shops alike expect senior candidates to go far beyond definitions. They want to hear how you reason about service decomposition, resilience, observability, and the real trade-offs teams face when running distributed systems in production on .NET.

🎁 Want implementation-ready .NET source code you can drop straight into your project? Join Coding Droplets on Patreon for exclusive tutorials, premium code samples, and early access to new content. 👉 https://www.patreon.com/CodingDroplets

This guide is built specifically for .NET microservices interviews in 2026 — covering patterns, tools, and decision trade-offs that appear in senior-level technical screens. Questions are grouped by difficulty: Basic, Intermediate, and Advanced. Each answer is direct and concise, focused on what interviewers actually want to hear.

Basic Questions

What Is a Microservices Architecture and How Does It Differ from a Monolith?

A microservices architecture decomposes an application into small, independently deployable services, each owning its own data and communicating over a network. A monolith bundles all concerns into a single deployable unit.

The trade-off is real: microservices give you independent deployability and team autonomy, but they introduce distributed system complexity — network latency, eventual consistency, and operational overhead that a monolith simply does not have.

For .NET teams, this often means moving from a single ASP.NET Core application to multiple ASP.NET Core Minimal API or Web API projects, each running in its own container.

What Communication Patterns Are Used Between .NET Microservices?

Two primary patterns:

- Synchronous (request/response): REST over HTTP using HttpClient with IHttpClientFactory, or gRPC using Grpc.AspNetCore. Use when the caller needs an immediate response.

- Asynchronous (event/message-driven): Message brokers such as RabbitMQ via MassTransit, or Azure Service Bus. Use when operations can be decoupled and eventual consistency is acceptable.

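Senior candidates are expected to explain when to use each, not just what they are. Choosing synchronous communication for operations that could be asynchronous ties the caller's availability to the callee's: if the downstream service is slow or down, so is the caller, and failures cascade up the call chain.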

What Is the Role of an API Gateway in a Microservices System?

An API gateway sits between external clients and internal services. It handles cross-cutting concerns: routing, authentication, rate limiting, SSL termination, and request aggregation.

In the .NET ecosystem, YARP (Yet Another Reverse Proxy) is the preferred open-source option for teams that want a code-first, ASP.NET Core-native gateway. Ocelot is an older, configuration-driven alternative. Azure API Management is the managed option for Azure-hosted workloads.

How Does Service Discovery Work in .NET Microservices?

Service discovery allows services to find each other dynamically rather than relying on hardcoded addresses. In Kubernetes (the dominant deployment target), service discovery is built in via DNS — each service has a stable DNS name within the cluster.

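For .NET teams not on Kubernetes, Consul is a common choice. .NET Aspire (first released for .NET 8) provides service discovery through Microsoft.Extensions.ServiceDiscovery, which resolves endpoints from configuration or from DNS (the DNS provider covers Kubernetes service names). Other registries such as Consul are not built in and are plugged in through the library's provider model.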

What Is the Difference Between Horizontal and Vertical Scaling in Microservices?

Horizontal scaling (scale out) adds more instances of a service to handle increased load. It is the default scaling strategy in containerised microservices — Kubernetes handles this via HPA (Horizontal Pod Autoscaler).

Vertical scaling (scale up) increases the resources (CPU/memory) of an existing instance. It has a ceiling and requires a restart in most environments.

For .NET services, horizontal scaling is preferred because ASP.NET Core's request-handling model (thread pool + async I/O) suits stateless scale-out. Any in-process state (session data, in-memory caches, locks) must be externalised to a shared store such as Redis or a database before scale-out is safe; otherwise requests routed to different instances see different state.

Intermediate Questions

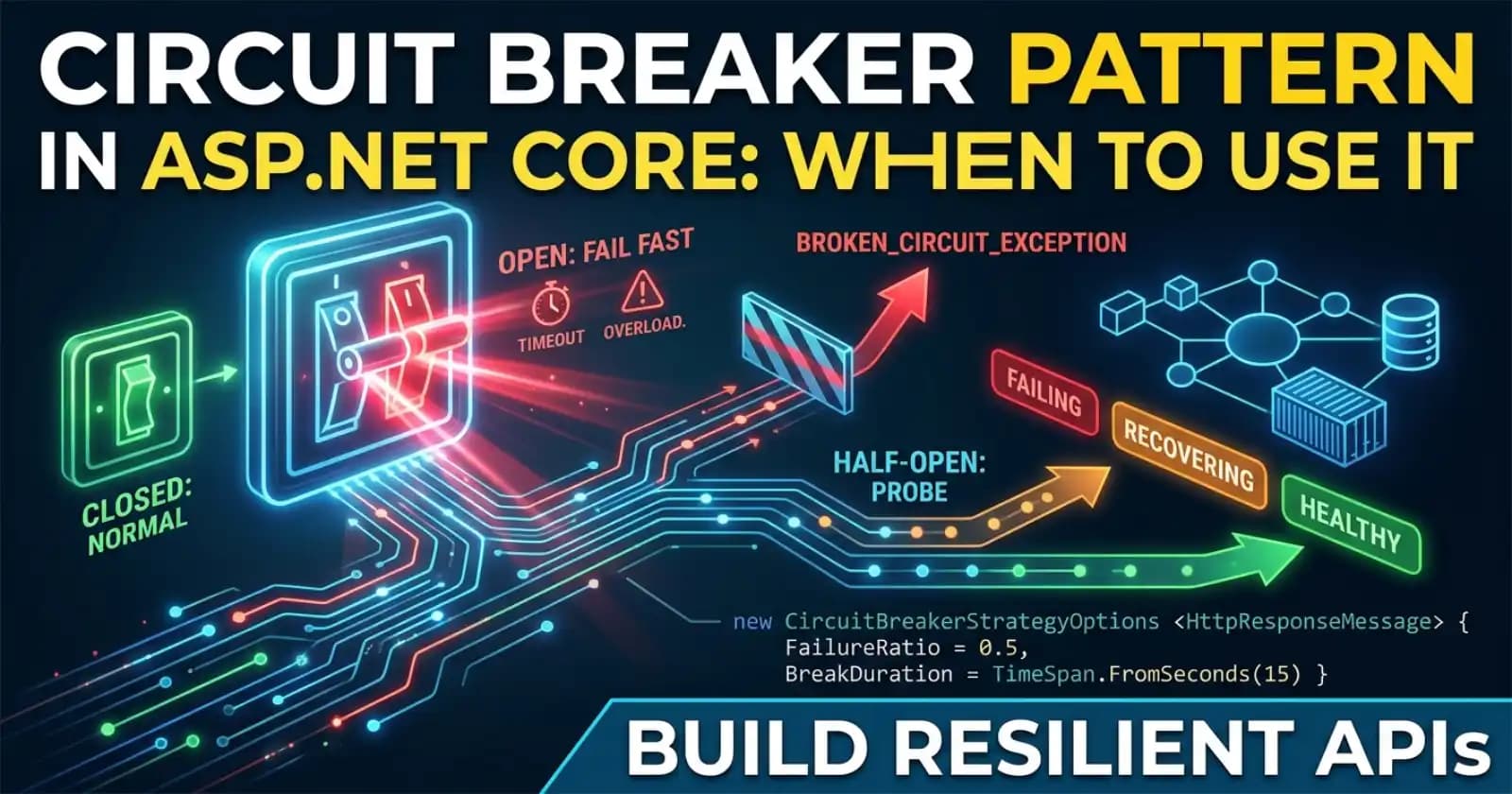

How Do You Implement Resilience in .NET Microservices?

Resilience in .NET 8+ is typically handled with Polly v8 (Polly.Core) through Microsoft's integration packages: Microsoft.Extensions.Resilience for general code and Microsoft.Extensions.Http.Resilience for HttpClient. The core patterns:

- Retry: Retries transient failures with exponential backoff and jitter

- Circuit Breaker: Stops sending requests to a failing service after a threshold, giving it time to recover

- Timeout: Cancels slow requests so callers do not wait indefinitely while holding connections, memory, and request capacity

- Bulkhead: Limits concurrent calls to a downstream service to prevent resource exhaustion

- Fallback: Returns a default or cached response when all retries fail

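In .NET 8+, the Microsoft.Extensions.Http.Resilience package adds AddStandardResilienceHandler() and AddResilienceHandler(...) to the IHttpClientBuilder chain, so pipelines integrate directly with IHttpClientFactory; AddResiliencePipeline registers standalone pipelines on IServiceCollection for non-HTTP code. The key interview point: combine retry with a circuit breaker. Retries alone keep hammering a dependency that is already down; once the circuit opens, calls fail fast and the dependency gets time to recover.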
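To make the retry mechanics concrete, here is a hand-rolled sketch of retry with exponential backoff and full jitter using only the base class library. It is for illustration; in a real service, Polly v8 through Microsoft.Extensions.Http.Resilience does this for you. The names RetryWithBackoff, ExecuteAsync, and ComputeDelay are hypothetical, and the caller decides which exceptions count as transient.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hand-rolled illustration only; Polly v8 provides a production-grade version.
public static class RetryWithBackoff
{
    // Exponential backoff with "full jitter": pick a random delay in
    // [0, min(maxDelay, baseDelay * 2^attempt)).
    public static TimeSpan ComputeDelay(int attempt, TimeSpan baseDelay, TimeSpan maxDelay, Random random)
    {
        double capMs = Math.Min(
            maxDelay.TotalMilliseconds,
            baseDelay.TotalMilliseconds * Math.Pow(2, attempt));
        return TimeSpan.FromMilliseconds(random.NextDouble() * capMs);
    }

    // Makes up to maxRetries + 1 attempts in total.
    public static async Task<T> ExecuteAsync<T>(
        Func<CancellationToken, Task<T>> operation,
        Func<Exception, bool> isTransient,
        int maxRetries,
        TimeSpan baseDelay,
        TimeSpan maxDelay,
        CancellationToken cancellationToken = default)
    {
        var random = new Random();
        for (int attempt = 0; ; attempt++)
        {
            try
            {
                return await operation(cancellationToken);
            }
            catch (Exception ex) when (attempt < maxRetries && isTransient(ex))
            {
                // Transient failure with retries left: back off, then try again.
                // Non-transient errors, and the last failure, propagate to the caller.
                await Task.Delay(ComputeDelay(attempt, baseDelay, maxDelay, random), cancellationToken);
            }
        }
    }
}
```

Full jitter spreads retries from many clients across the whole backoff window, so instances that failed at the same moment do not retry in lockstep and re-overload the dependency.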

What Is the Saga Pattern and When Would You Use It in .NET?

The Saga pattern manages distributed transactions across multiple services without using a two-phase commit (2PC). A saga is a sequence of local transactions — each service completes its part and publishes an event or message, triggering the next step. If a step fails, compensating transactions undo the prior steps.

Two coordination styles:

- Choreography: Services react to each other's events. Simpler to start but harder to trace as the saga grows.

- Orchestration: A central orchestrator (a saga coordinator service) directs the steps. Easier to reason about for complex flows.

In .NET, MassTransit has first-class saga support via its State Machine API. NServiceBus offers an alternative with a more opinionated saga framework. The key interview point: sagas achieve eventual consistency, not atomicity. Interviewers want to hear you acknowledge that compensating transactions must be idempotent.
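An orchestrated saga can be sketched in a few lines: run each local step in order and, if one fails, run the compensations of the completed steps in reverse. This is a minimal in-memory sketch, not MassTransit's State Machine API; SagaStep and SagaOrchestrator are hypothetical names, and a real orchestrator would persist saga state so it can resume after a crash.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// One saga step: a local transaction plus the compensation that undoes it.
public sealed record SagaStep(string Name, Func<Task> Execute, Func<Task> Compensate);

public static class SagaOrchestrator
{
    // Runs steps in order. On failure, compensates the completed steps
    // in reverse order, then rethrows the original exception.
    public static async Task RunAsync(IReadOnlyList<SagaStep> steps)
    {
        var completed = new Stack<SagaStep>();
        try
        {
            foreach (var step in steps)
            {
                await step.Execute();
                completed.Push(step);
            }
        }
        catch
        {
            while (completed.Count > 0)
            {
                // Compensations must be idempotent: a real orchestrator may
                // re-run them after a crash or a redelivered message.
                await completed.Pop().Compensate();
            }
            throw;
        }
    }
}
```

For example, if "reserve stock" and "charge payment" succeed but "ship" fails, the orchestrator refunds the payment and then releases the stock, leaving the system eventually consistent rather than atomically rolled back.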

How Do You Handle Distributed Transactions Without a Saga?

When a saga is overkill (simple two-service operations), the Outbox Pattern is the practical alternative. The service writes its business data and an outbox record to its own database in a single local transaction. A background worker reads the outbox and publishes the message to the broker. This guarantees at-least-once delivery without a distributed transaction, which means consumers must tolerate duplicates.

Coding Droplets has a dedicated article on the ASP.NET Core Outbox Pattern if you want the full decision breakdown.
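A minimal in-memory sketch of the outbox flow follows. It assumes a lock stands in for the database's local transaction and an Action&lt;OutboxMessage&gt; stands in for the broker client; OrderDatabase, OutboxRelay, and the message shape are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;

public sealed record Order(Guid Id, decimal Total);
public sealed record OutboxMessage(Guid Id, string Type, string Payload, DateTime? ProcessedAtUtc);

// In-memory stand-in for the service's own database. The lock plays the role
// of a local transaction: the order and its outbox row are committed together.
public sealed class OrderDatabase
{
    private readonly object _tx = new();
    private readonly List<Order> _orders = new();
    private readonly List<OutboxMessage> _outbox = new();

    public void PlaceOrder(Order order)
    {
        lock (_tx)
        {
            _orders.Add(order);
            _outbox.Add(new OutboxMessage(
                Guid.NewGuid(), "OrderPlaced", JsonSerializer.Serialize(order), ProcessedAtUtc: null));
        }
    }

    public List<OutboxMessage> GetPending()
    {
        lock (_tx) return _outbox.Where(m => m.ProcessedAtUtc is null).ToList();
    }

    public void MarkProcessed(Guid messageId)
    {
        lock (_tx)
        {
            int i = _outbox.FindIndex(m => m.Id == messageId);
            if (i >= 0) _outbox[i] = _outbox[i] with { ProcessedAtUtc = DateTime.UtcNow };
        }
    }
}

// Background relay: publishes pending outbox rows, then marks them processed.
public sealed class OutboxRelay
{
    private readonly OrderDatabase _db;
    private readonly Action<OutboxMessage> _publish; // stand-in for the broker client

    public OutboxRelay(OrderDatabase db, Action<OutboxMessage> publish) =>
        (_db, _publish) = (db, publish);

    public int DispatchPending()
    {
        var pending = _db.GetPending();
        foreach (var message in pending)
        {
            _publish(message);             // if this throws, the row stays pending for the next run
            _db.MarkProcessed(message.Id); // a crash before this line means a duplicate publish later
        }
        return pending.Count;
    }
}
```

If the process crashes after publishing but before marking the row processed, the message is published again on the next run, which is exactly the at-least-once behaviour the pattern promises.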

What Is Idempotency and Why Is It Critical in Microservices?

Idempotency means that executing the same operation multiple times produces the same result as executing it once. In a distributed system, at-least-once message delivery (the default guarantee of most brokers) means consumers must expect duplicate messages.

If your payment service processes the same charge event twice, you have a serious bug. The fix: every consumer must be idempotent. Common techniques in .NET include storing a processed event ID in the database and checking before processing, or using idempotency keys on HTTP endpoints stored in a distributed cache (e.g., Redis).
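The processed-ID technique looks roughly like this. In this sketch an in-memory set stands in for the processed-messages table; in a real service, the ID insert and the business write would share one transaction (for example, with a unique key on the message ID). ChargeRequested and IdempotentChargeConsumer are hypothetical names.

```csharp
using System;
using System.Collections.Generic;

public sealed record ChargeRequested(Guid MessageId, Guid OrderId, decimal Amount);

public sealed class IdempotentChargeConsumer
{
    // Stand-in for a processed-messages table keyed by message ID.
    private readonly HashSet<Guid> _processed = new();
    private readonly object _gate = new();

    public decimal TotalCharged { get; private set; }

    // Returns true if the message was applied, false if it was a duplicate.
    public bool Handle(ChargeRequested message)
    {
        lock (_gate)
        {
            if (!_processed.Add(message.MessageId))
                return false; // already processed: acknowledge and skip

            TotalCharged += message.Amount; // side effect happens at most once per message ID
            return true;
        }
    }
}
```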

How Do You Implement Distributed Tracing in a .NET Microservices System?

Distributed tracing correlates requests across service boundaries so you can see the full path of a request, where latency occurred, and where it failed.

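In .NET, this is done via OpenTelemetry: the OpenTelemetry.Extensions.Hosting package provides the AddOpenTelemetry() extension, and the OpenTelemetry.Instrumentation.AspNetCore and OpenTelemetry.Instrumentation.Http packages add incoming-request and HttpClient instrumentation via AddAspNetCoreInstrumentation() and AddHttpClientInstrumentation(). Traces are exported to Jaeger, Zipkin, or a vendor backend (Datadog, Azure Monitor).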

The critical concept interviewers probe: trace context propagation. The W3C traceparent header must be carried across every hop. For HTTP calls, HttpClient injects it automatically when a trace is active; for messaging, MassTransit's built-in OpenTelemetry support carries it in message headers.
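To show what propagation does to the header itself, this sketch uses System.Diagnostics.ActivityContext to parse an incoming traceparent and build the one for an outgoing call: the trace ID is kept, a new span ID is generated for the outgoing hop, and the sampled flag is carried over. In practice HttpClient and the OpenTelemetry instrumentation do this for you; TraceContext and CreateOutgoingTraceparent are hypothetical names.

```csharp
using System.Diagnostics;

#nullable enable

public static class TraceContext
{
    // W3C Trace Context, version 00: "00-<32-hex trace-id>-<16-hex parent-id>-<2-hex flags>".
    // Keeps the trace-id, creates a new span-id for this hop, carries the sampled flag.
    public static string? CreateOutgoingTraceparent(string incomingTraceparent)
    {
        if (!ActivityContext.TryParse(incomingTraceparent, null, out var incoming))
            return null; // malformed header: a real service would start a new trace instead

        var childSpanId = ActivitySpanId.CreateRandom();
        var flags = (incoming.TraceFlags & ActivityTraceFlags.Recorded) != 0 ? "01" : "00";
        return $"00-{incoming.TraceId.ToHexString()}-{childSpanId.ToHexString()}-{flags}";
    }
}
```

Because every hop keeps the same trace ID, the tracing backend can stitch spans from different services into one end-to-end trace.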

What Is .NET Aspire and How Does It Change Microservices Development?

.NET Aspire (generally available since May 2024 for .NET 8, and evolving alongside .NET 9 and .NET 10) is an opinionated stack for building observable, cloud-ready distributed applications. It provides:

- An AppHost project that orchestrates all services, databases, and infrastructure (Redis, RabbitMQ, PostgreSQL) locally

- Service defaults that apply OpenTelemetry, health checks, and service discovery automatically to every service

- A developer dashboard showing traces, logs, and metrics during local development

The interviewer-relevant point: Aspire does not replace Kubernetes in production. It eliminates the friction of local multi-service development — docker-compose setup, manual telemetry wiring, and service discovery configuration that teams used to spend days on.

Advanced Questions

How Do You Design for Failure in .NET Microservices at Scale?

Designing for failure means assuming components will fail and making failures recoverable and observable. The principles:

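Bulkhead isolation: Cap concurrent calls per downstream dependency (Polly v8's concurrency limiter, the successor to its Bulkhead policy, or per-dependency named clients with their own connection limits) so a slow downstream service cannot tie up all connections, memory, and request capacity and take down the entire process. A .NET process has a single shared thread pool, so isolation comes from limiting concurrency per dependency, not from separate pools.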
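At its core, a bulkhead is a concurrency cap per dependency. This sketch uses SemaphoreSlim and rejects calls immediately when all slots are busy instead of queueing them; Bulkhead and BulkheadRejectedException are hypothetical names, and Polly's concurrency limiter is the production equivalent.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public sealed class BulkheadRejectedException : Exception
{
    public BulkheadRejectedException(string dependency)
        : base($"Bulkhead for '{dependency}' is full; call rejected.") { }
}

// One instance per downstream dependency, so a slow dependency can only
// occupy its own slots, never the capacity reserved for others.
public sealed class Bulkhead
{
    private readonly SemaphoreSlim _slots;
    private readonly string _dependency;

    public Bulkhead(string dependency, int maxConcurrentCalls)
    {
        _dependency = dependency;
        _slots = new SemaphoreSlim(maxConcurrentCalls, maxConcurrentCalls);
    }

    public async Task<T> ExecuteAsync<T>(Func<Task<T>> call)
    {
        // Fail fast: WaitAsync(0) returns false immediately when no slot is free.
        if (!await _slots.WaitAsync(0))
            throw new BulkheadRejectedException(_dependency);

        try { return await call(); }
        finally { _slots.Release(); } // free the slot whether the call succeeded or not
    }
}
```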

Graceful degradation: When a non-critical service is unavailable, return a degraded but functional response (cached data, empty list, feature flag off) rather than propagating the failure.
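Graceful degradation can be expressed as a wrapper that returns a fallback value when a non-critical dependency fails, while flagging the result as degraded so it can be logged or surfaced. The Degradation helper below is a hypothetical sketch; it deliberately lets cancellations propagate.

```csharp
using System;
using System.Threading.Tasks;

public static class Degradation
{
    // Calls a non-critical dependency; on failure returns the fallback value and
    // flags the result as degraded so callers can log it or adjust the response.
    public static async Task<(T Value, bool Degraded)> WithFallbackAsync<T>(
        Func<Task<T>> primary, Func<T> fallback)
    {
        try
        {
            return (await primary(), false);
        }
        catch (Exception ex) when (ex is not OperationCanceledException)
        {
            // Cancellation means the caller gave up, so it is not masked by a fallback.
            return (fallback(), true);
        }
    }
}
```

For example, a product page could call a recommendations service through this wrapper with an empty list as the fallback, so the page still renders when recommendations are down.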

Health checks and probes: Kubernetes readiness probes keep a pod out of the Service's endpoints until it reports ready, and liveness probes restart pods that have hung. In ASP.NET Core, both probes target endpoints built on Microsoft.Extensions.Diagnostics.HealthChecks and exposed with MapHealthChecks.

Chaos engineering: Run controlled failure experiments (kill a service, introduce latency) in a staging environment to validate that your resilience pipeline actually holds. Tools such as Chaos Monkey or Azure Chaos Studio inject faults at the infrastructure level, so they work against .NET services without code changes.

The senior-level answer always includes a concrete example of a production failure and what was learned from it, not just the theory.

How Do You Approach Service Decomposition — What Makes a "Good" Service Boundary?

Poor service decomposition is the most common reason microservices fail. The boundaries that work are aligned with business capabilities (Domain-Driven Design bounded contexts), not technical layers.

A service is sized correctly when:

- A small team (2-3 developers) can own it end-to-end

- Its data model does not require cross-service JOINs for normal operations

- It can be deployed independently without coordinating with other teams

- Its failure is isolated and does not cascade

Warning signs of bad decomposition in .NET systems: services that share a database, services that make synchronous calls to 5+ downstream services per request, or services where every feature requires deploying 3 services simultaneously.

How Would You Secure Service-to-Service Communication in .NET?

Three layers:

Transport security: TLS everywhere. In Kubernetes, a service mesh (Istio, Linkerd) provides mutual TLS (mTLS) automatically between pods, eliminating the need to manage certificates in each service.

Authentication: For service-to-service HTTP calls, use the OAuth 2.0 Client Credentials flow. Each service has its own client ID and secret, and exchanges them for a short-lived JWT from the identity server (Duende IdentityServer, Microsoft Entra ID, formerly Azure AD, or Keycloak). The downstream service validates the JWT using Microsoft.AspNetCore.Authentication.JwtBearer.

Authorisation: Use scopes and audiences in the JWT to control which services can call which endpoints. An order service should not be able to call the payment service's admin endpoints.

The point interviewers probe at the senior level: never rely on network-level trust alone. An attacker who gets inside the cluster perimeter (or a compromised internal service) should still be stopped by proper auth at each service boundary.
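At each service boundary, the authorisation check reduces to one question: does this caller's token carry the scope this endpoint needs? A sketch of that check over a ClaimsPrincipal follows. It assumes scopes arrive either as several scope claims or as one space-delimited scope or scp claim, and that JwtBearer's inbound claim-type mapping is turned off so claims keep their JWT names; ScopeAuthorization is a hypothetical helper, and in ASP.NET Core you would normally wrap this logic in an authorization policy.

```csharp
using System;
using System.Linq;
using System.Security.Claims;

public static class ScopeAuthorization
{
    // Assumption: identity servers emit either several "scope" claims or a
    // single space-delimited "scope"/"scp" claim.
    private static readonly string[] ScopeClaimTypes = { "scope", "scp" };

    public static bool HasScope(ClaimsPrincipal caller, string requiredScope) =>
        caller.Claims
            .Where(c => ScopeClaimTypes.Contains(c.Type))
            .SelectMany(c => c.Value.Split(' ', StringSplitOptions.RemoveEmptyEntries))
            .Contains(requiredScope, StringComparer.Ordinal); // scopes are case-sensitive
}
```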

How Do You Manage Configuration and Secrets Across Multiple .NET Services?

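A common failure mode: environment variables scattered across Kubernetes YAML files, with database passwords stored in Kubernetes Secrets that are only base64-encoded. Base64 is an encoding, not encryption, so anyone who can read the Secret manifest can read the password.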

The correct approach:

- Non-sensitive config: Kubernetes ConfigMaps mounted as environment variables or files, read via IConfiguration (ASP.NET Core's built-in configuration system)

- Sensitive secrets: Azure Key Vault, AWS Secrets Manager, or HashiCorp Vault, accessed via the respective .NET configuration provider (for Key Vault, Azure.Extensions.AspNetCore.Configuration.Secrets). Secrets are loaded at startup; with a reload interval configured, rotated values are picked up without a redeployment.

- Feature flags: Externalised to Azure App Configuration or a feature flag service, so you can change behaviour without a deployment

The .NET team has a detailed guide on secrets management on Microsoft Learn. Understanding the full provider chain (IConfiguration layering, where later-registered providers override earlier ones) is expected at the senior level.

What Is Dapr and When Would You Choose It Over Native .NET Patterns?

Dapr (Distributed Application Runtime) is a sidecar-based runtime that provides building blocks for microservices: state management, pub/sub messaging, service invocation, secret management, and observability — all via a local HTTP/gRPC API regardless of the underlying infrastructure.

The .NET SDK (Dapr.AspNetCore, Dapr.Client) integrates Dapr with ASP.NET Core's DI container, making it feel native.

Choose Dapr when:

- You want portability across cloud providers without rewriting infrastructure code

- Your team is building polyglot microservices (some in .NET, some in Python or Go) and wants a consistent abstraction layer

- You want Dapr's Actor model for stateful, virtual-actor-based workloads

Choose native .NET patterns (MassTransit + Polly + IHttpClientFactory) when:

- Your team is .NET-only and the additional sidecar operational complexity is not worth it

- You are already deep in the Azure ecosystem and Azure Service Bus / Event Grid provide what you need natively

FAQ

What .NET tools should I know for a microservices interview in 2026?

Focus on: MassTransit (messaging), Polly / Microsoft.Extensions.Resilience (resilience), YARP (API gateway), OpenTelemetry (observability), Dapr (distributed runtime), .NET Aspire (local orchestration), and EF Core per-service with database-per-service isolation. Kubernetes fundamentals are also expected for senior roles.

Is gRPC better than REST for .NET microservices communication?

For internal service-to-service communication, gRPC offers lower latency, strongly typed contracts via Protobuf, and streaming support. For external-facing APIs consumed by browsers or third parties, REST is still the standard. Senior candidates should know both and explain when to use each rather than treating either as universally superior.

How do you prevent cascading failures in .NET microservices?

Combine bulkhead isolation, circuit breakers (Polly's circuit breaker strategy), timeouts, and retry with exponential backoff. Each service should have its own resilience pipeline for each downstream dependency, not a global catch-all. In Kubernetes, readiness probes ensure traffic only reaches pods that are ready to serve it.
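For intuition about what a circuit breaker does, here is a minimal consecutive-failure breaker with Closed, Open, and Half-Open states. It is a hypothetical sketch: Polly v8's breaker uses a failure ratio over a sampling window instead, and this version does not limit the half-open state to a single trial call. The injectable clock exists only to make the break duration testable.

```csharp
using System;

#nullable enable

public enum CircuitState { Closed, Open, HalfOpen }

public sealed class CircuitOpenException : Exception
{
    public CircuitOpenException() : base("Circuit is open; failing fast.") { }
}

// Minimal consecutive-failure breaker, for illustration only.
public sealed class CircuitBreaker
{
    private readonly int _failureThreshold;
    private readonly TimeSpan _breakDuration;
    private readonly Func<DateTime> _utcNow;
    private readonly object _gate = new();
    private int _consecutiveFailures;
    private DateTime _openedAtUtc;

    public CircuitBreaker(int failureThreshold, TimeSpan breakDuration, Func<DateTime>? utcNow = null)
    {
        _failureThreshold = failureThreshold;
        _breakDuration = breakDuration;
        _utcNow = utcNow ?? (() => DateTime.UtcNow);
    }

    public CircuitState State { get; private set; } = CircuitState.Closed;

    public T Execute<T>(Func<T> call)
    {
        lock (_gate)
        {
            if (State == CircuitState.Open)
            {
                // While open, reject immediately instead of loading the failing dependency.
                if (_utcNow() - _openedAtUtc < _breakDuration)
                    throw new CircuitOpenException();
                State = CircuitState.HalfOpen; // break elapsed: let a trial call through
            }
        }

        try
        {
            T result = call();
            lock (_gate)
            {
                _consecutiveFailures = 0;
                State = CircuitState.Closed; // success closes the circuit
            }
            return result;
        }
        catch
        {
            lock (_gate)
            {
                _consecutiveFailures++;
                // A failed trial call, or too many failures in a row, opens the circuit.
                if (State == CircuitState.HalfOpen || _consecutiveFailures >= _failureThreshold)
                {
                    State = CircuitState.Open;
                    _openedAtUtc = _utcNow();
                }
            }
            throw;
        }
    }
}
```

Placed inside a retry loop, the breaker's fast CircuitOpenException is what stops retries from piling load onto a dependency that is already down.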

What is the difference between eventual consistency and strong consistency, and which should I use?

Strong consistency means every read reflects the latest write. It requires distributed coordination (e.g., distributed transactions), which is expensive and brittle across service boundaries. Eventual consistency means the system will converge to the correct state given enough time and no new updates — it is what most microservices architectures accept.

Use eventual consistency for operations that can tolerate a brief lag (inventory updates, analytics, notifications). Use strong consistency for operations where correctness is non-negotiable (financial debits, auth token revocation). Design your system to minimise the surface area that requires strong consistency.

How do you handle versioning in .NET microservices APIs?

Use URI versioning (/v1/orders) or header versioning for external-facing APIs. For internal service contracts (especially gRPC Protobuf), use backward-compatible field additions and never remove or renumber fields. Consumer-Driven Contract Testing (CDCT) via tools like Pact ensures that producer changes do not silently break consumers. Senior candidates should also mention the importance of running multiple API versions in parallel during a migration window rather than forcing a big-bang cutover.

What is the Strangler Fig pattern and when is it useful?

The Strangler Fig pattern is the safest way to migrate a monolith to microservices. You incrementally extract capabilities from the monolith into new services, routing traffic via an API gateway or facade. The monolith remains live and handles anything not yet extracted. Over time, the extracted services "strangle" the monolith until it can be retired.

For .NET teams, this typically means putting YARP or Azure API Management in front of the existing IIS/Kestrel monolith and gradually routing specific routes to new ASP.NET Core services as they are ready.
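The routing decision at the heart of the Strangler Fig pattern is a prefix table: extracted paths go to the new services, and everything else falls through to the monolith. In YARP this lives in route configuration; the C# below only models the decision, and the prefixes and host names are hypothetical.

```csharp
using System;

public static class StranglerRouter
{
    // Path prefixes already extracted into new services (hypothetical values).
    private static readonly (string Prefix, string Destination)[] ExtractedRoutes =
    {
        ("/orders", "http://orders-service"),
        ("/catalog", "http://catalog-service"),
    };

    private const string Monolith = "http://legacy-monolith";

    public static string ResolveDestination(string path)
    {
        foreach (var (prefix, destination) in ExtractedRoutes)
        {
            // Match whole path segments, so "/orders-archive" does not hit "/orders".
            bool matches = path.Equals(prefix, StringComparison.OrdinalIgnoreCase)
                || path.StartsWith(prefix + "/", StringComparison.OrdinalIgnoreCase);
            if (matches) return destination;
        }
        return Monolith; // anything not yet extracted still goes to the monolith
    }
}
```

Each extraction then becomes a one-line change to the route table, and rolling back means deleting that line.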


Whether you are interviewing at a fintech, an enterprise SaaS company, or a cloud-native startup, the bar for senior .NET roles has moved decisively toward distributed systems fluency. Knowing the patterns is the entry ticket — knowing the trade-offs, failure modes, and real operational considerations is what separates a strong candidate from the rest. Good luck.