ASP.NET Core Rate Limiting Interview Questions for Senior .NET Developers (2026)

Rate limiting is no longer a "nice-to-have" in production APIs — it is a foundational reliability and security control that every senior .NET developer is expected to understand deeply. Whether you are preventing abuse, protecting downstream services, or enforcing SLA tiers for different customers, knowing how to design and reason about rate limiting in ASP.NET Core is a recurring theme in senior-level technical interviews.

If you want to go further than theory, the full implementation (including all four algorithms wired into a production ASP.NET Core API with per-user, per-endpoint, and per-IP policies) is available on Patreon, where you get annotated, production-ready source code to adapt for your own team.

Rate limiting sits squarely within Chapter 10 of the ASP.NET Core Web API: Zero to Production course, which covers all four algorithms, GlobalLimiter setup, policy partitioning, and Polly resilience in a single complete production codebase — so the context and the code are always together.

The questions below are grouped by difficulty from foundational concepts through to senior-level architecture decisions. Each answer is concise and exam-ready. The full working codebase with all rate limiting policies already configured is available on GitHub.

Basic Questions

What Is Rate Limiting and Why Does It Matter in ASP.NET Core?

Rate limiting is the practice of controlling how many requests a client — or category of client — can make to an API within a defined time window. In ASP.NET Core, the native Microsoft.AspNetCore.RateLimiting middleware (introduced in .NET 7 and refined in .NET 8, 9, and 10) provides built-in support without requiring third-party libraries.

It matters because without rate limiting, a single misbehaving client can exhaust server resources, degrade performance for everyone else, cause runaway downstream database load, and open the API to denial-of-service attacks. In enterprise APIs — especially public-facing SaaS platforms — rate limiting is also a commercial boundary: it enforces tier-based quotas that correspond to subscription plans.

What Are the Four Rate Limiting Algorithms Supported Natively in ASP.NET Core?

ASP.NET Core's rate limiting middleware exposes four built-in algorithm implementations:

Fixed Window — Counts requests within a fixed time window (e.g., 100 requests per 60-second window). When the window resets, the counter resets. Simple and cheap, but prone to burst abuse at window boundaries.

Sliding Window — Divides the window into segments and tracks requests across a rolling window. Smooths out boundary bursts. Higher memory cost than Fixed Window but more accurate.

Token Bucket — Issues tokens at a fixed replenishment rate. Each request consumes one token. Allows short bursts up to the bucket capacity, then enforces the refill rate. Ideal when clients should be able to burst briefly without penalty.

Concurrency — Limits the number of simultaneous requests in flight rather than the rate over time. Useful for protecting slow endpoints (e.g., report generation, file processing) where total throughput is less important than preventing resource saturation.
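The following is a minimal sketch of the four algorithms registered as named policies in Program.cs. The policy names ("fixed", "sliding", "token", "concurrency") and all numeric limits are illustrative assumptions, not recommendations. Policies registered with these convenience methods are not partitioned: every caller of an endpoint shares that policy's single limiter.

```csharp
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddRateLimiter(options =>
{
    // Fixed Window: 100 requests per 60-second window, no queueing.
    options.AddFixedWindowLimiter("fixed", o =>
    {
        o.PermitLimit = 100;
        o.Window = TimeSpan.FromSeconds(60);
        o.QueueLimit = 0;
    });

    // Sliding Window: same budget, tracked across 6 rolling 10-second segments.
    options.AddSlidingWindowLimiter("sliding", o =>
    {
        o.PermitLimit = 100;
        o.Window = TimeSpan.FromSeconds(60);
        o.SegmentsPerWindow = 6;
        o.QueueLimit = 0;
    });

    // Token Bucket: bursts of up to 20 requests, refilled with 10 tokens every 10 seconds.
    options.AddTokenBucketLimiter("token", o =>
    {
        o.TokenLimit = 20;
        o.TokensPerPeriod = 10;
        o.ReplenishmentPeriod = TimeSpan.FromSeconds(10);
        o.QueueLimit = 0;
    });

    // Concurrency: at most 4 requests in flight; 2 more may wait in a queue, oldest first.
    options.AddConcurrencyLimiter("concurrency", o =>
    {
        o.PermitLimit = 4;
        o.QueueLimit = 2;
        o.QueueProcessingOrder = QueueProcessingOrder.OldestFirst;
    });
});

var app = builder.Build();
app.UseRateLimiter();

app.MapGet("/fixed", () => "fixed").RequireRateLimiting("fixed");
app.MapGet("/sliding", () => "sliding").RequireRateLimiting("sliding");
app.MapGet("/token", () => "token").RequireRateLimiting("token");
app.MapGet("/concurrency", () => "concurrency").RequireRateLimiting("concurrency");

app.Run();
```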

How Do You Register the Rate Limiting Middleware in ASP.NET Core?

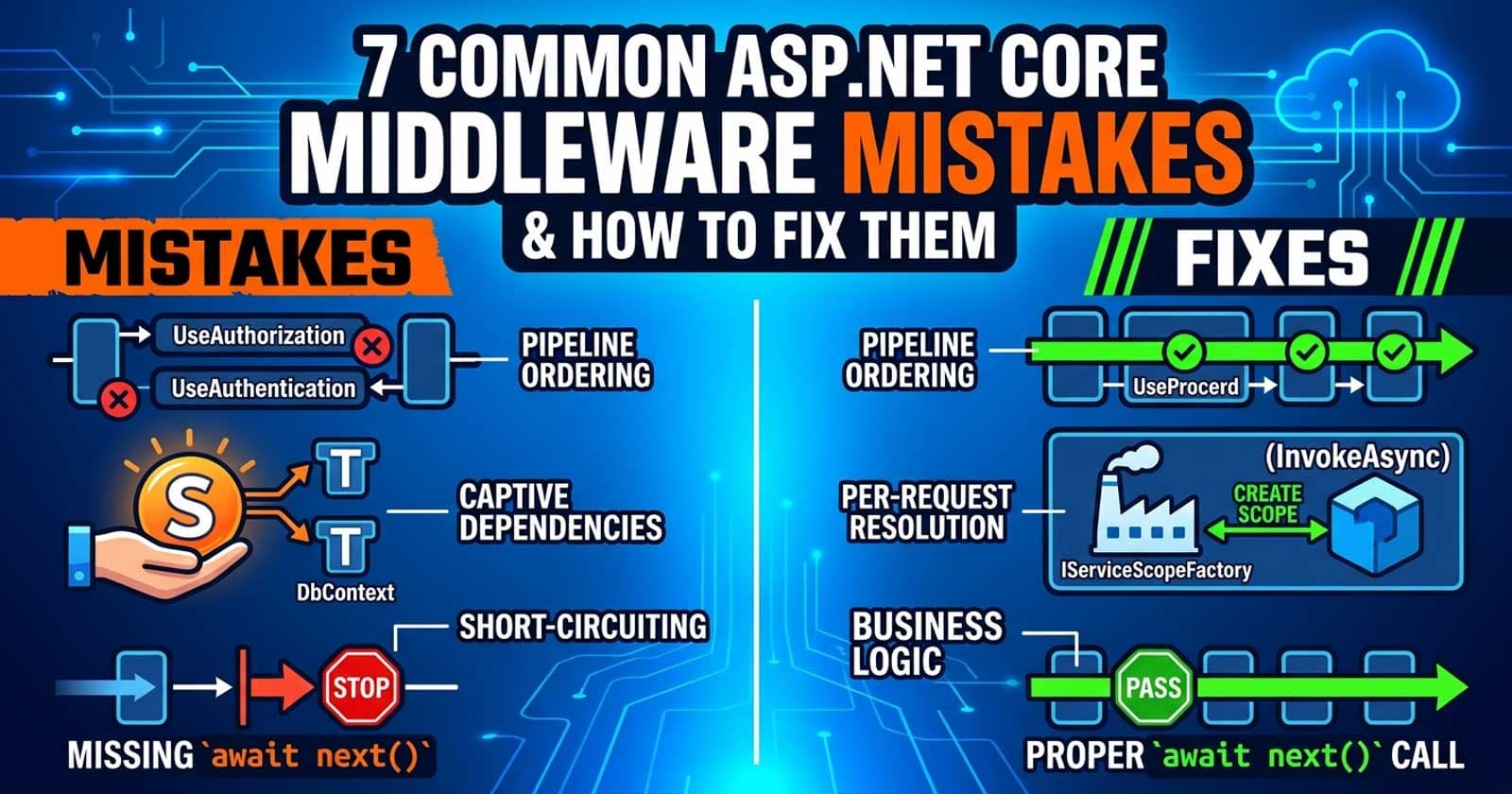

Rate limiting requires two steps: registering the services in Program.cs with builder.Services.AddRateLimiter(...), where you define one or more policies, and adding the middleware to the request pipeline with app.UseRateLimiter(). When endpoint-specific policies are used, UseRateLimiter() must come after UseRouting(). It must also come after UseAuthentication() whenever a partition key depends on the authenticated user, and it is usually placed after UseAuthorization() as well. Endpoint mappings such as MapControllers() or MapGet() follow it.
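Here is a sketch of that ordering. It assumes a project that uses controllers and registers a real authentication scheme elsewhere; AddAuthentication() is called without a scheme here only so the sample starts. The "fixed" policy and the /ping endpoint are hypothetical.

```csharp
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddControllers();
builder.Services.AddAuthentication();   // register your real scheme here (e.g. JWT bearer)
builder.Services.AddAuthorization();
builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;
    options.AddFixedWindowLimiter("fixed", o =>
    {
        o.PermitLimit = 100;
        o.Window = TimeSpan.FromMinutes(1);
    });
});

var app = builder.Build();

app.UseRouting();        // endpoint metadata ([EnableRateLimiting], RequireRateLimiting) is resolved here
app.UseAuthentication(); // context.User is populated, so user-based partition keys work
app.UseAuthorization();
app.UseRateLimiter();    // after routing and authentication

app.MapControllers();
app.MapGet("/ping", () => "pong").RequireRateLimiting("fixed");

app.Run();
```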

What Is the Difference Between a Global Limiter and a Named Policy?

A Global Limiter applies to every request that passes through the rate limiting middleware, regardless of the endpoint. It acts as a blanket per-instance limit and is useful as a backstop against extreme traffic spikes.

A Named Policy is applied selectively. You attach it per endpoint with [EnableRateLimiting("policyName")] on a controller or action, or with .RequireRateLimiting("policyName") on a Minimal API endpoint. Individual endpoints can opt out with [DisableRateLimiting] (or .DisableRateLimiting() for Minimal APIs). Named policies allow different endpoints to have different limits: a public search endpoint might allow 100 requests/minute while an authenticated write endpoint allows 20.

The two work together: a request must pass both the global limiter and any endpoint-level named policy.
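The following sketch combines a global limiter, two named policies, and an opted-out health endpoint in a Minimal API. The policy names, routes, and limits are illustrative assumptions. The global limiter here is partitioned by client IP. The named policies are not, so each one is a single counter shared by all callers.

```csharp
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    // Global limiter: a per-instance backstop of 1,000 requests per minute per client IP.
    options.GlobalLimiter = PartitionedRateLimiter.Create<HttpContext, string>(context =>
        RateLimitPartition.GetFixedWindowLimiter(
            partitionKey: context.Connection.RemoteIpAddress?.ToString() ?? "unknown",
            factory: _ => new FixedWindowRateLimiterOptions
            {
                PermitLimit = 1000,
                Window = TimeSpan.FromMinutes(1)
            }));

    // Named policies: tighter, endpoint-specific limits (one shared counter each).
    options.AddFixedWindowLimiter("public-search", o => { o.PermitLimit = 100; o.Window = TimeSpan.FromMinutes(1); });
    options.AddFixedWindowLimiter("writes", o => { o.PermitLimit = 20; o.Window = TimeSpan.FromMinutes(1); });
});

var app = builder.Build();
app.UseRateLimiter();

app.MapGet("/search", (string q) => $"results for {q}").RequireRateLimiting("public-search"); // global + named
app.MapPost("/orders", () => Results.Accepted()).RequireRateLimiting("writes");              // global + named
app.MapGet("/health", () => "healthy").DisableRateLimiting();                                 // no limiter at all
app.MapGet("/about", () => "about");                                                          // global limiter only

app.Run();
```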

What HTTP Status Code Should a Rate-Limited Response Return, and What Header Should Be Included?

A rate-limited response must return HTTP 429 Too Many Requests. The response should also include a Retry-After header indicating when the client may retry — either as a number of seconds or an HTTP date. Including a Retry-After header is not strictly required by the HTTP spec but is strongly recommended for API usability: clients that parse it can implement intelligent back-off, while clients that ignore it will continue to receive 429 responses.

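In ASP.NET Core, set RateLimiterOptions.RejectionStatusCode to StatusCodes.Status429TooManyRequests, because the middleware's default rejection status is 503 Service Unavailable. The OnRejected callback in the rate limiter configuration is where you add headers such as Retry-After and write the response body before it is sent.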
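Here is a minimal sketch of these rejection settings, assuming a fixed-window policy named "fixed" (hypothetical). The Retry-After value comes from the RetryAfter metadata that the limiter attaches to a rejected lease, rounded up to whole seconds.

```csharp
using System.Globalization;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddRateLimiter(options =>
{
    // The default rejection status is 503; switch it to 429.
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    options.OnRejected = (context, cancellationToken) =>
    {
        // Not every limiter can estimate a reset time (a concurrency limit has none),
        // so the header is only written when the metadata is present.
        if (context.Lease.TryGetMetadata(MetadataName.RetryAfter, out TimeSpan retryAfter))
        {
            context.HttpContext.Response.Headers.RetryAfter =
                ((int)Math.Ceiling(retryAfter.TotalSeconds)).ToString(CultureInfo.InvariantCulture);
        }
        return ValueTask.CompletedTask;
    };

    options.AddFixedWindowLimiter("fixed", o =>
    {
        o.PermitLimit = 100;
        o.Window = TimeSpan.FromMinutes(1);
    });
});

var app = builder.Build();
app.UseRateLimiter();
app.MapGet("/", () => "ok").RequireRateLimiting("fixed");
app.Run();
```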

Intermediate Questions

How Do You Partition a Rate Limiting Policy by User, IP Address, or API Key?

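Partitioning determines the "bucket" a request is counted against. Without partitioning, all requests share one bucket, which is rarely useful in production. In ASP.NET Core, the RateLimitPartition factories (GetFixedWindowLimiter, GetSlidingWindowLimiter, GetTokenBucketLimiter, GetConcurrencyLimiter) are used inside AddPolicy or PartitionedRateLimiter.Create. They take a partition key that you derive from the HttpContext.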

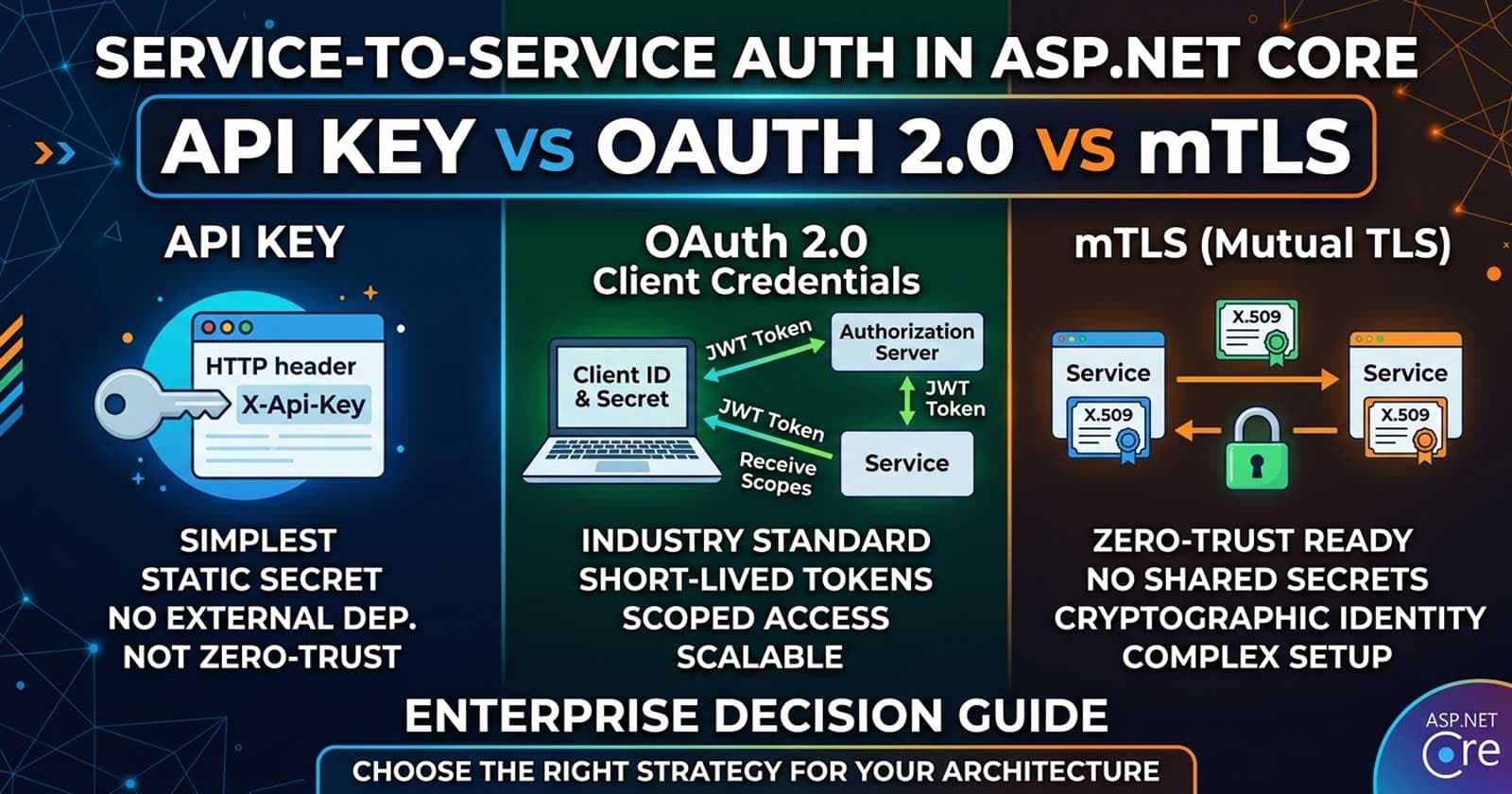

For IP-based partitioning, use context.Connection.RemoteIpAddress. For user-based partitioning, use context.User.FindFirstValue(ClaimTypes.NameIdentifier) or a custom user claim — this requires the authentication middleware to have already run, which is why UseRateLimiter() placement after UseAuthentication() matters. For API key partitioning, extract the key from a request header (e.g., X-Api-Key) and use it as the partition key.

A common enterprise pattern is tiered partitioning: anonymous requests get a strict IP-based limit, authenticated users get a more generous user-based limit, and premium API key holders get the highest limit — all within the same named policy using conditional partition logic.
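Below is a sketch of the tiered pattern using AddPolicy, the registration method that gives the partitioner access to the HttpContext. The X-Api-Key header comes from the text above. The premiumApiKeys set, the policy name "tiered", the route, and the limits are hypothetical stand-ins. In a real system the key would be validated before it is trusted as a partition key.

```csharp
using System.Security.Claims;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

// Hypothetical stand-in for premium keys validated and cached ahead of time.
// It stays in memory because the partitioner runs synchronously on every request.
var premiumApiKeys = new HashSet<string>(StringComparer.Ordinal) { "demo-premium-key" };

builder.Services.AddAuthentication();
builder.Services.AddAuthorization();
builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    options.AddPolicy("tiered", context =>
    {
        // Tier 1: premium API key holders get the highest limit, one bucket per key.
        string apiKey = context.Request.Headers["X-Api-Key"].ToString();
        if (premiumApiKeys.Contains(apiKey))
        {
            return RateLimitPartition.GetFixedWindowLimiter($"key:{apiKey}",
                _ => new FixedWindowRateLimiterOptions { PermitLimit = 1000, Window = TimeSpan.FromMinutes(1) });
        }

        // Tier 2: authenticated users, one bucket per user ID claim.
        string? userId = context.User.FindFirst(ClaimTypes.NameIdentifier)?.Value;
        if (userId is not null)
        {
            return RateLimitPartition.GetFixedWindowLimiter($"user:{userId}",
                _ => new FixedWindowRateLimiterOptions { PermitLimit = 200, Window = TimeSpan.FromMinutes(1) });
        }

        // Tier 3: anonymous callers, one bucket per client IP.
        string ip = context.Connection.RemoteIpAddress?.ToString() ?? "unknown";
        return RateLimitPartition.GetFixedWindowLimiter($"ip:{ip}",
            _ => new FixedWindowRateLimiterOptions { PermitLimit = 30, Window = TimeSpan.FromMinutes(1) });
    });
});

var app = builder.Build();
app.UseAuthentication();  // populate context.User before the partitioner reads it
app.UseAuthorization();
app.UseRateLimiter();
app.MapGet("/api/data", () => "data").RequireRateLimiting("tiered");
app.Run();
```

The key:, user:, and ip: prefixes keep the three tiers from colliding. Only known keys get their own bucket. If the policy trusted any header value, a caller could rotate arbitrary values to create unlimited fresh partitions.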

What Is the OnRejected Callback and What Should You Do Inside It?

OnRejected is an async delegate that fires when a request is rejected by a rate limit policy. Inside it, you should:

- Set the HTTP response status code to 429.
- Write a Retry-After header with the limiter's estimated reset time.
- Return a structured error body, ideally following Problem Details (RFC 7807), so the response is consistent with other API error responses.
- Log the rejection at Warning level, including the partition key (user ID or IP), so you can identify abusive clients.

You should not throw exceptions in OnRejected. This callback exists precisely to give you control over the rejection response without exception-based flow.
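The checklist above can be sketched as a single OnRejected callback. OnRejectedContext exposes the HttpContext and the lease but not the partition key. This sketch therefore recomputes the key with a hypothetical GetPartitionKey helper that the policy also uses. The policy name "per-client" and the limits are likewise assumptions.

```csharp
using System.Globalization;
using System.Security.Claims;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.Mvc;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddRateLimiter(options =>
{
    // 1. Status code: 429 instead of the default 503.
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    options.OnRejected = async (context, cancellationToken) =>
    {
        HttpContext http = context.HttpContext;

        // 2. Retry-After, when the limiter supplies an estimate.
        if (context.Lease.TryGetMetadata(MetadataName.RetryAfter, out TimeSpan retryAfter))
        {
            http.Response.Headers.RetryAfter =
                ((int)Math.Ceiling(retryAfter.TotalSeconds)).ToString(CultureInfo.InvariantCulture);
        }

        // 3. Structured body in Problem Details shape (no exceptions thrown).
        await http.Response.WriteAsJsonAsync(
            new ProblemDetails { Status = 429, Title = "Too Many Requests", Instance = http.Request.Path },
            options: null,
            contentType: "application/problem+json",
            cancellationToken: cancellationToken);

        // 4. Warning-level log with the partition key, recomputed with the policy's own rule.
        ILogger logger = http.RequestServices.GetRequiredService<ILoggerFactory>().CreateLogger("RateLimiting");
        logger.LogWarning("Rate limit exceeded for {PartitionKey} on {Path}", GetPartitionKey(http), http.Request.Path);
    };

    options.AddPolicy("per-client", context =>
        RateLimitPartition.GetFixedWindowLimiter(GetPartitionKey(context),
            key => new FixedWindowRateLimiterOptions { PermitLimit = 100, Window = TimeSpan.FromMinutes(1) }));
});

var app = builder.Build();
app.UseRateLimiter();
app.MapGet("/api/data", () => "data").RequireRateLimiting("per-client");
app.Run();

// Hypothetical helper shared by the policy and the logger: user ID if signed in, else client IP.
static string GetPartitionKey(HttpContext http) =>
    http.User.FindFirst(ClaimTypes.NameIdentifier)?.Value is { } userId
        ? $"user:{userId}"
        : $"ip:{http.Connection.RemoteIpAddress?.ToString() ?? "unknown"}";
```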

How Does the Sliding Window Algorithm Differ from the Fixed Window Algorithm in Practice?

The key difference is how they handle the boundary condition. With Fixed Window, a client can send 100 requests at 11:59:59 and another 100 at 12:00:01 — effectively 200 requests in two seconds — because the window reset between the two bursts. Sliding Window prevents this by tracking requests across a rolling time range divided into segments. Each segment has its own count, and the total across all segments is the enforced limit.

In .NET's implementation, the Sliding Window limiter is configured with a SegmentsPerWindow option. More segments means smoother enforcement but higher memory use. In practice, Sliding Window is the better default for public-facing APIs where clients should not be able to exploit window resets.
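To make the boundary condition concrete, here is a simplified simulation in plain C#. It is not the .NET limiter source. It feeds the same two bursts (100 requests at t = 59 s and 100 at t = 61 s) to a fixed-window counter and to a six-segment sliding-window counter. Both allow 100 requests per 60 seconds.

```csharp
using System;
using System.Collections.Generic;

// Simplified model (not the .NET source): 100 requests per 60 seconds.
const int PermitLimit = 100;
const int WindowSeconds = 60;
const int SegmentsPerWindow = 6;
const int SegmentSeconds = WindowSeconds / SegmentsPerWindow; // 10 s per segment

// Hypothetical traffic: a burst of 100 at t = 59 s and another 100 at t = 61 s.
var arrivals = new List<int>();
for (int i = 0; i < 100; i++) arrivals.Add(59);
for (int i = 0; i < 100; i++) arrivals.Add(61);

int fixedAccepted = CountFixedWindow(arrivals);
int slidingAccepted = CountSlidingWindow(arrivals);
Console.WriteLine($"Fixed window accepted:   {fixedAccepted}");   // 200
Console.WriteLine($"Sliding window accepted: {slidingAccepted}"); // 100

// Fixed window: one counter per [k*60, (k+1)*60) interval, reset at each boundary.
int CountFixedWindow(List<int> times)
{
    var windowCounts = new Dictionary<int, int>();
    int accepted = 0;
    foreach (int t in times)
    {
        int window = t / WindowSeconds;
        windowCounts.TryGetValue(window, out int used);
        if (used < PermitLimit)
        {
            windowCounts[window] = used + 1;
            accepted++;
        }
    }
    return accepted;
}

// Sliding window: one counter per 10 s segment; the limit applies to the sum over the
// current segment and the previous SegmentsPerWindow - 1 segments.
int CountSlidingWindow(List<int> times)
{
    var segmentCounts = new Dictionary<int, int>();
    int accepted = 0;
    foreach (int t in times)
    {
        int segment = t / SegmentSeconds;
        int inWindow = 0;
        for (int s = segment - SegmentsPerWindow + 1; s <= segment; s++)
        {
            segmentCounts.TryGetValue(s, out int count);
            inWindow += count;
        }
        if (inWindow < PermitLimit)
        {
            segmentCounts.TryGetValue(segment, out int current);
            segmentCounts[segment] = current + 1;
            accepted++;
        }
    }
    return accepted;
}
```

The fixed window accepts all 200 requests because the second burst lands in a fresh window. The sliding window still counts the first burst in the [50 s, 60 s) segment, so it rejects the second burst.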

How Do You Apply Different Rate Limits to Authenticated vs. Unauthenticated Users?

The standard pattern is to use a single named policy with a partitioning factory that branches on authentication state. When the request has an authenticated user (context.User.Identity.IsAuthenticated), use the user's ID as the partition key with a higher limit. When unauthenticated, fall back to the IP address as the partition key with a stricter limit.

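This is implemented with options.AddPolicy(...) (or a GlobalLimiter built with PartitionedRateLimiter.Create). Its partitioner branches on the request and returns RateLimitPartition.GetTokenBucketLimiter(...), or the equivalent factory for whichever algorithm you choose. Convenience methods such as AddTokenBucketLimiter register a single, unpartitioned limiter and never see the HttpContext. The partitioner runs synchronously on every request, so it needs to be fast: no database calls.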
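Here is a sketch of the authenticated/anonymous split using token buckets, assuming authentication is configured elsewhere. The policy name "auth-aware", the route, and the bucket sizes are illustrative assumptions.

```csharp
using System.Security.Claims;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddAuthentication();
builder.Services.AddAuthorization();

builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    // AddPolicy (not AddTokenBucketLimiter) is what exposes the HttpContext for partitioning.
    options.AddPolicy("auth-aware", context =>
    {
        // Synchronous, no I/O: only reads data already attached to the request.
        if (context.User.Identity?.IsAuthenticated == true)
        {
            string userId = context.User.FindFirst(ClaimTypes.NameIdentifier)?.Value ?? "unknown-user";
            return RateLimitPartition.GetTokenBucketLimiter($"user:{userId}", _ => new TokenBucketRateLimiterOptions
            {
                TokenLimit = 50,          // generous burst for signed-in users
                TokensPerPeriod = 25,
                ReplenishmentPeriod = TimeSpan.FromSeconds(10)
            });
        }

        string ip = context.Connection.RemoteIpAddress?.ToString() ?? "unknown-ip";
        return RateLimitPartition.GetTokenBucketLimiter($"ip:{ip}", _ => new TokenBucketRateLimiterOptions
        {
            TokenLimit = 10,              // strict burst for anonymous callers
            TokensPerPeriod = 5,
            ReplenishmentPeriod = TimeSpan.FromSeconds(10)
        });
    });
});

var app = builder.Build();
app.UseAuthentication();
app.UseAuthorization();
app.UseRateLimiter();
app.MapGet("/api/items", () => "items").RequireRateLimiting("auth-aware");
app.Run();
```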

What Is the Concurrency Limiter and When Should You Use It Instead of a Rate Limiter?

The Concurrency Limiter caps the number of requests being processed simultaneously rather than counting requests over time. It is the right choice for protecting CPU- or I/O-intensive endpoints where the risk is not high request frequency but rather too many slow operations running in parallel — report exports, file generation, email-sending, or any endpoint that calls a slow external API.

A rate limiter cannot protect against this: an endpoint that takes 30 seconds to process could have 10 concurrent requests all within the rate limit window, but still saturate the server. The Concurrency Limiter queues or rejects excess requests at the entry point, keeping concurrent load bounded.

Use Concurrency Limiter for slow, heavyweight endpoints. Use rate limiters (Fixed/Sliding/Token Bucket) for high-frequency endpoint protection.
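The following sketch contrasts the two limiter families, assuming a hypothetical 30-second report export and a cheap lookup endpoint. The names and numbers are illustrative. A concurrency permit is held for the whole request, so a slow handler consumes capacity for as long as it runs.

```csharp
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    // At most 4 exports run at once on this instance; up to 8 more wait, oldest first.
    // Anything beyond that is rejected immediately instead of piling onto the server.
    options.AddConcurrencyLimiter("report-exports", o =>
    {
        o.PermitLimit = 4;
        o.QueueLimit = 8;
        o.QueueProcessingOrder = QueueProcessingOrder.OldestFirst;
    });

    // High-frequency, cheap endpoints use a time-based limiter instead.
    options.AddSlidingWindowLimiter("lookups", o =>
    {
        o.PermitLimit = 300;
        o.Window = TimeSpan.FromMinutes(1);
        o.SegmentsPerWindow = 6;
    });
});

var app = builder.Build();
app.UseRateLimiter();

// Stand-in for a slow export; the permit is released only when the handler completes.
app.MapPost("/reports/export", async (CancellationToken ct) =>
{
    await Task.Delay(TimeSpan.FromSeconds(30), ct);
    return Results.Ok("report ready");
}).RequireRateLimiting("report-exports");

app.MapGet("/lookups/{id:int}", (int id) => Results.Ok(id)).RequireRateLimiting("lookups");

app.Run();
```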

Advanced Questions

How Does Rate Limiting Interact with Polly Resilience Pipelines in ASP.NET Core?

Rate limiting and Polly resilience solve different sides of the same problem. Rate limiting in ASP.NET Core is server-side: it protects the API from being overwhelmed. Polly resilience is client-side: it protects the service's own outbound calls from failing when downstream APIs are overwhelmed. For HttpClient it is configured with AddStandardResilienceHandler or a custom AddResilienceHandler pipeline, and for non-HTTP calls with AddResiliencePipeline.

When a downstream API returns 429 Too Many Requests, the Polly pipeline on the calling service should recognize it and — critically — not retry immediately. This is why Polly's retry policy should check for HttpStatusCode.TooManyRequests and apply exponential backoff with jitter rather than immediate retry. The Retry-After header from the upstream 429 response should ideally drive the backoff delay.

The interaction trap: aggressive Polly retry on 429 without back-off makes rate limiting worse, not better. The retry loop hammers the API exactly when it is under pressure. Always configure Polly to respect Retry-After semantics when 429 responses are in scope.
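Here is a sketch of an outbound retry that honors Retry-After, assuming the Microsoft.Extensions.Http.Resilience package (built on Polly v8). The client name, base address, pipeline name, and /proxy endpoint are hypothetical. The DelayGenerator reads the Retry-After header from the 429 response. When it returns null, Polly falls back to exponential backoff with jitter.

```csharp
using System.Net;
using System.Net.Http.Headers;
using Polly;
using Polly.Retry;

var builder = WebApplication.CreateBuilder(args);

// Assumes the Microsoft.Extensions.Http.Resilience package; names and URLs are placeholders.
builder.Services.AddHttpClient("downstream", client =>
        client.BaseAddress = new Uri("https://downstream.example.com/"))
    .AddResilienceHandler("respect-429", pipeline =>
    {
        pipeline.AddRetry(new RetryStrategyOptions<HttpResponseMessage>
        {
            MaxRetryAttempts = 3,
            BackoffType = DelayBackoffType.Exponential,
            UseJitter = true,
            Delay = TimeSpan.FromSeconds(1),

            // Retry transport failures, 429, and 5xx responses.
            ShouldHandle = predicateArgs => ValueTask.FromResult(
                predicateArgs.Outcome.Exception is HttpRequestException ||
                predicateArgs.Outcome.Result?.StatusCode == HttpStatusCode.TooManyRequests ||
                (int?)predicateArgs.Outcome.Result?.StatusCode >= 500),

            // Prefer the server's Retry-After; null falls back to exponential backoff with jitter.
            DelayGenerator = delayArgs =>
            {
                RetryConditionHeaderValue? retryAfter = delayArgs.Outcome.Result?.Headers.RetryAfter;
                TimeSpan? delay = retryAfter?.Delta;
                if (delay is null && retryAfter?.Date is DateTimeOffset date)
                {
                    TimeSpan untilDate = date - DateTimeOffset.UtcNow;
                    delay = untilDate > TimeSpan.Zero ? untilDate : TimeSpan.Zero;
                }
                return ValueTask.FromResult(delay);
            }
        });
    });

var app = builder.Build();

app.MapGet("/proxy", async (IHttpClientFactory factory) =>
{
    HttpClient client = factory.CreateClient("downstream");
    using HttpResponseMessage response = await client.GetAsync("api/resource");
    return Results.StatusCode((int)response.StatusCode);
});

app.Run();
```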

What Are the Trade-offs of In-Process Rate Limiting vs. API Gateway Rate Limiting?

In-process rate limiting (ASP.NET Core middleware) is the default for small teams and single-instance deployments. It requires no external infrastructure, is trivially configurable, and can use rich application context (user claims, custom headers, business logic).

The limitation is that it is per-instance. In a horizontally scaled deployment running three replicas, each instance has its own counters. A client that hits the limit on one instance can still send requests to the other two, so the total rate across the cluster can reach three times the per-instance limit.

API Gateway rate limiting (Azure API Management, AWS API Gateway, Kong, NGINX) enforces limits centrally before traffic reaches any instance. It is the correct approach for multi-instance deployments. However, it cannot access application-level context (user roles, subscription tiers) without custom plugin logic or a side-channel lookup.

The recommended enterprise pattern: use an API gateway for coarse-grained IP and API-key limits, and supplement with ASP.NET Core in-process limits for per-user or per-subscription enforcement that requires application context.

How Would You Implement a Distributed Rate Limiter for a Multi-Instance ASP.NET Core Deployment?

The native ASP.NET Core rate limiting middleware does not provide distributed coordination out of the box. For a multi-instance deployment, the two common approaches both keep the counters in Redis:

Redis-backed distributed rate limiter — The most common approach. Use a library such as AspNetCoreRateLimit or a custom IRateLimiterPolicy backed by Redis atomic operations (typically Lua scripts that increment a key with a TTL). This ensures all instances share a single counter per partition key.

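Fixed Window with Redis — Simpler: for each request, execute INCR key. When the returned count is 1 (the first request of the window), execute EXPIRE key windowSeconds. If the returned count exceeds the limit, reject the request. Sent as two separate commands, this is fragile. If the caller fails between INCR and EXPIRE, the key never expires and that partition stays blocked. Calling EXPIRE on every request instead would keep pushing the window forward, so the counter would never reset. A Lua script runs both steps atomically on the Redis server.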

In both cases, the Redis round-trip adds latency to every request. This is the cost of distributed accuracy. For very low-latency requirements, consider accepting per-instance limits with a global backstop at the gateway layer rather than introducing Redis into the hot path.
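Below is a minimal sketch of the Lua-script approach using StackExchange.Redis. It runs as plain middleware outside the native rate limiting middleware. The RedisFixedWindowCounter class, the ratelimit: key prefix, the connection string, and the limits are all assumptions for illustration.

```csharp
using StackExchange.Redis;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddSingleton<IConnectionMultiplexer>(_ =>
    ConnectionMultiplexer.Connect("localhost:6379")); // placeholder connection string
builder.Services.AddSingleton(sp => new RedisFixedWindowCounter(
    sp.GetRequiredService<IConnectionMultiplexer>(), permitLimit: 100, window: TimeSpan.FromMinutes(1)));

var app = builder.Build();

// Every instance runs this, but all of them increment the same Redis key per client.
app.Use(async (context, next) =>
{
    var counter = context.RequestServices.GetRequiredService<RedisFixedWindowCounter>();
    string partitionKey = context.Connection.RemoteIpAddress?.ToString() ?? "unknown";
    if (!await counter.TryAcquireAsync(partitionKey))
    {
        context.Response.StatusCode = StatusCodes.Status429TooManyRequests;
        return;
    }
    await next(context);
});

app.MapGet("/", () => "ok");
app.Run();

public sealed class RedisFixedWindowCounter
{
    // INCR and the first-request EXPIRE execute atomically on the Redis server, so a key is
    // never left without a TTL and later requests do not push the window forward.
    private const string Script = @"
local current = redis.call('INCR', KEYS[1])
if current == 1 then
  redis.call('EXPIRE', KEYS[1], ARGV[1])
end
return current";

    private readonly IDatabase _db;
    private readonly int _permitLimit;
    private readonly long _windowSeconds;

    public RedisFixedWindowCounter(IConnectionMultiplexer redis, int permitLimit, TimeSpan window)
    {
        _db = redis.GetDatabase();
        _permitLimit = permitLimit;
        _windowSeconds = (long)window.TotalSeconds;
    }

    // True if this request is within the limit for the current window.
    public async Task<bool> TryAcquireAsync(string partitionKey)
    {
        RedisKey key = $"ratelimit:{partitionKey}";
        RedisResult count = await _db.ScriptEvaluateAsync(Script, new[] { key }, new RedisValue[] { _windowSeconds });
        return (long)count <= _permitLimit;
    }
}
```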

How Should You Handle Rate Limit Rejections with Problem Details in ASP.NET Core?

Problem Details (RFC 7807, since superseded by RFC 9457) is the standard structured error format for HTTP APIs. When rate limiting rejects a request, the OnRejected callback should write a Problem Details JSON body with:

- type: a URI identifying the error type (e.g., https://tools.ietf.org/html/rfc6585#section-4)
- title: "Too Many Requests"
- status: 429
- detail: a human-readable message explaining the limit and when to retry
- instance: the request path

In ASP.NET Core 8+, Problem Details support is built-in via IProblemDetailsService. You can inject and call it within the OnRejected handler to produce consistent responses. The advantage of using Problem Details here is that API clients that already handle your standard error schema will handle rate limiting errors without special-casing the status code.
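Here is a sketch of an OnRejected callback that writes Problem Details through IProblemDetailsService, assuming ASP.NET Core 8 or later. There, TryWriteAsync returns false when no registered writer accepts the request's Accept header. The policy name "fixed" and the limits are hypothetical.

```csharp
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.Mvc;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddProblemDetails(); // registers IProblemDetailsService
builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    options.OnRejected = async (context, cancellationToken) =>
    {
        HttpContext http = context.HttpContext;
        string detail = context.Lease.TryGetMetadata(MetadataName.RetryAfter, out TimeSpan retryAfter)
            ? $"Request limit exceeded. Retry after {Math.Ceiling(retryAfter.TotalSeconds)} seconds."
            : "Request limit exceeded. Retry later.";

        var problemDetailsService = http.RequestServices.GetRequiredService<IProblemDetailsService>();
        bool written = await problemDetailsService.TryWriteAsync(new ProblemDetailsContext
        {
            HttpContext = http,
            ProblemDetails = new ProblemDetails
            {
                Type = "https://tools.ietf.org/html/rfc6585#section-4",
                Title = "Too Many Requests",
                Status = StatusCodes.Status429TooManyRequests,
                Detail = detail,
                Instance = http.Request.Path
            }
        });

        // No registered writer accepted the request's Accept header: fall back to plain text.
        if (!written)
        {
            await http.Response.WriteAsync(detail, cancellationToken);
        }
    };

    options.AddFixedWindowLimiter("fixed", o =>
    {
        o.PermitLimit = 100;
        o.Window = TimeSpan.FromMinutes(1);
    });
});

var app = builder.Build();
app.UseRateLimiter();
app.MapGet("/", () => "ok").RequireRateLimiting("fixed");
app.Run();
```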

What Are the Most Common Rate Limiting Mistakes Senior Developers Make in Production?

Not setting Retry-After — Clients have no backoff signal and hammer the API until the window resets, making the rejection storm worse.

Rejecting with 500 or 503 instead of 429 — The middleware's default RejectionStatusCode is 503 Service Unavailable, and some misconfigured OnRejected callbacks set 500. Both mislead clients into treating throttling as a server fault.

Not logging partition keys — Without logging which user or IP triggered the limit, rate limiting becomes an invisible black box. You cannot distinguish a misbehaving client from a legitimate burst without the partition key in the log.

Using Fixed Window without considering burst abuse — Fixed Window is the simplest algorithm but the easiest to exploit. Senior developers should default to Sliding Window or Token Bucket for public-facing endpoints.

Testing only the happy path — Rate limiting is rarely exercised in local development. Without integration tests that actually exhaust the limit and verify the 429 response and headers, misconfigurations ship to production silently.
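Here is a sketch of such an integration test. It assumes xUnit, the Microsoft.AspNetCore.Mvc.Testing package, and a public partial class Program { } declaration in the API project. It also assumes a hypothetical GET /api/search endpoint limited to 100 requests per fixed 60-second window, with an OnRejected callback that sets Retry-After.

```csharp
using System.Net;
using Microsoft.AspNetCore.Mvc.Testing;
using Xunit;

public class SearchRateLimitTests : IClassFixture<WebApplicationFactory<Program>>
{
    private const int PermitLimit = 100; // must match the (hypothetical) policy on /api/search
    private readonly WebApplicationFactory<Program> _factory;

    public SearchRateLimitTests(WebApplicationFactory<Program> factory) => _factory = factory;

    [Fact]
    public async Task Exceeding_the_limit_returns_429_with_Retry_After()
    {
        using HttpClient client = _factory.CreateClient();

        // Consume the whole budget; none of these requests should be throttled.
        for (int i = 0; i < PermitLimit; i++)
        {
            using HttpResponseMessage allowed = await client.GetAsync("/api/search?q=test");
            Assert.NotEqual(HttpStatusCode.TooManyRequests, allowed.StatusCode);
        }

        // The next request exceeds the budget.
        using HttpResponseMessage rejected = await client.GetAsync("/api/search?q=test");
        Assert.Equal(HttpStatusCode.TooManyRequests, rejected.StatusCode);
        Assert.NotNull(rejected.Headers.RetryAfter); // written by OnRejected
    }
}
```

IClassFixture shares one application instance, and therefore one set of counters, across all tests in the class. A test that exhausts a limit can make later tests fail unless they use a separate factory or partition.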


Frequently Asked Questions

What is the difference between [EnableRateLimiting] and [DisableRateLimiting] in ASP.NET Core?

[EnableRateLimiting("policyName")] applies a specific named rate limiting policy to an endpoint or controller. Without this attribute (and without a global limiter), the endpoint has no rate limit. [DisableRateLimiting] explicitly opts an endpoint out of all rate limiting, including any global limiter that has been configured. This is useful for internal health check endpoints or infrastructure routes that should not be throttled.
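Here is a sketch of the attributes on a controller, assuming policies named "fixed" and "writes" are registered in Program.cs (both names are hypothetical). An action-level [EnableRateLimiting] takes precedence over the controller-level one.

```csharp
using Microsoft.AspNetCore.Mvc;
using Microsoft.AspNetCore.RateLimiting;

[ApiController]
[Route("api/[controller]")]
[EnableRateLimiting("fixed")] // applies to every action unless overridden
public class CatalogController : ControllerBase
{
    [HttpGet]
    public IActionResult List() => Ok(new[] { "item-1", "item-2" });

    [HttpPost]
    [EnableRateLimiting("writes")] // replaces the controller-level policy for this action
    public IActionResult Create() => Accepted();

    [HttpGet("health")]
    [DisableRateLimiting] // skips named policies and the global limiter
    public IActionResult Health() => Ok("healthy");
}
```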

Can you apply multiple rate limiting policies to the same endpoint?

No — an endpoint can only be associated with one named rate limiting policy via [EnableRateLimiting]. If you need layered limits (e.g., a per-user limit and a per-endpoint global ceiling), the pattern is to encode both limits within a single policy using combined partition logic, or to rely on the global limiter as the outer bound while named policies enforce inner limits.
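One way to express a per-user limit under an instance-wide ceiling is to chain two partitioned limiters as the global limiter, using PartitionedRateLimiter.CreateChained from System.Threading.RateLimiting. This is a sketch. The limits, the route, and the "instance-ceiling" partition name are assumptions.

```csharp
using System.Security.Claims;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddAuthentication();
builder.Services.AddAuthorization();

builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;

    // Every chained limiter must grant a permit for the request to proceed.
    options.GlobalLimiter = PartitionedRateLimiter.CreateChained(
        // Inner limit: 100 requests per minute per user, or per IP for anonymous callers.
        PartitionedRateLimiter.Create<HttpContext, string>(context =>
            RateLimitPartition.GetFixedWindowLimiter(
                context.User.FindFirst(ClaimTypes.NameIdentifier)?.Value is { } userId
                    ? $"user:{userId}"
                    : $"ip:{context.Connection.RemoteIpAddress?.ToString() ?? "unknown"}",
                key => new FixedWindowRateLimiterOptions { PermitLimit = 100, Window = TimeSpan.FromMinutes(1) })),
        // Outer ceiling: 5,000 requests per minute for the whole instance, shared by everyone.
        PartitionedRateLimiter.Create<HttpContext, string>(context =>
            RateLimitPartition.GetFixedWindowLimiter(
                "instance-ceiling",
                key => new FixedWindowRateLimiterOptions { PermitLimit = 5000, Window = TimeSpan.FromMinutes(1) })));
});

var app = builder.Build();
app.UseAuthentication();
app.UseAuthorization();
app.UseRateLimiter();
app.MapGet("/api/orders", () => "orders");
app.Run();
```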

Does the ASP.NET Core rate limiting middleware work correctly behind a reverse proxy?

Not by default. When the API sits behind NGINX, a load balancer, or an API gateway, context.Connection.RemoteIpAddress returns the proxy's IP — not the client's. For IP-based partitioning to work correctly, you must configure ASP.NET Core to read the original client IP from the X-Forwarded-For header using app.UseForwardedHeaders() with a trusted proxy list. Without this, every client appears to share the same IP address and the rate limiter cannot distinguish between them.
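Here is a sketch of the forwarded-headers setup ahead of an IP-partitioned limiter. The proxy address 10.0.0.10 and the limits are placeholders. List your real proxies so that X-Forwarded-For is only honored when it comes from infrastructure you control.

```csharp
using System.Net;
using System.Threading.RateLimiting;
using Microsoft.AspNetCore.HttpOverrides;

var builder = WebApplication.CreateBuilder(args);

builder.Services.Configure<ForwardedHeadersOptions>(options =>
{
    options.ForwardedHeaders = ForwardedHeaders.XForwardedFor | ForwardedHeaders.XForwardedProto;
    // Only trust forwarded headers from your own proxy (placeholder address).
    options.KnownProxies.Add(IPAddress.Parse("10.0.0.10"));
});

builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;
    // After UseForwardedHeaders, RemoteIpAddress holds the client IP instead of the proxy's.
    options.GlobalLimiter = PartitionedRateLimiter.Create<HttpContext, string>(context =>
        RateLimitPartition.GetFixedWindowLimiter(
            context.Connection.RemoteIpAddress?.ToString() ?? "unknown",
            key => new FixedWindowRateLimiterOptions { PermitLimit = 100, Window = TimeSpan.FromMinutes(1) }));
});

var app = builder.Build();

app.UseForwardedHeaders(); // must run before anything that reads RemoteIpAddress
app.UseRateLimiter();

app.MapGet("/", (HttpContext context) => $"client IP: {context.Connection.RemoteIpAddress}");
app.Run();
```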

When should I use AddTokenBucketLimiter over AddFixedWindowLimiter?

Use AddTokenBucketLimiter when clients have legitimate reasons to burst briefly — for example, a dashboard that loads several widgets in parallel at startup, then settles into a low steady-state request rate. The token bucket allows controlled burst up to the bucket capacity, then enforces the token replenishment rate. Use AddFixedWindowLimiter for simpler scenarios where a flat request cap per window is sufficient and burst behavior is not a concern.

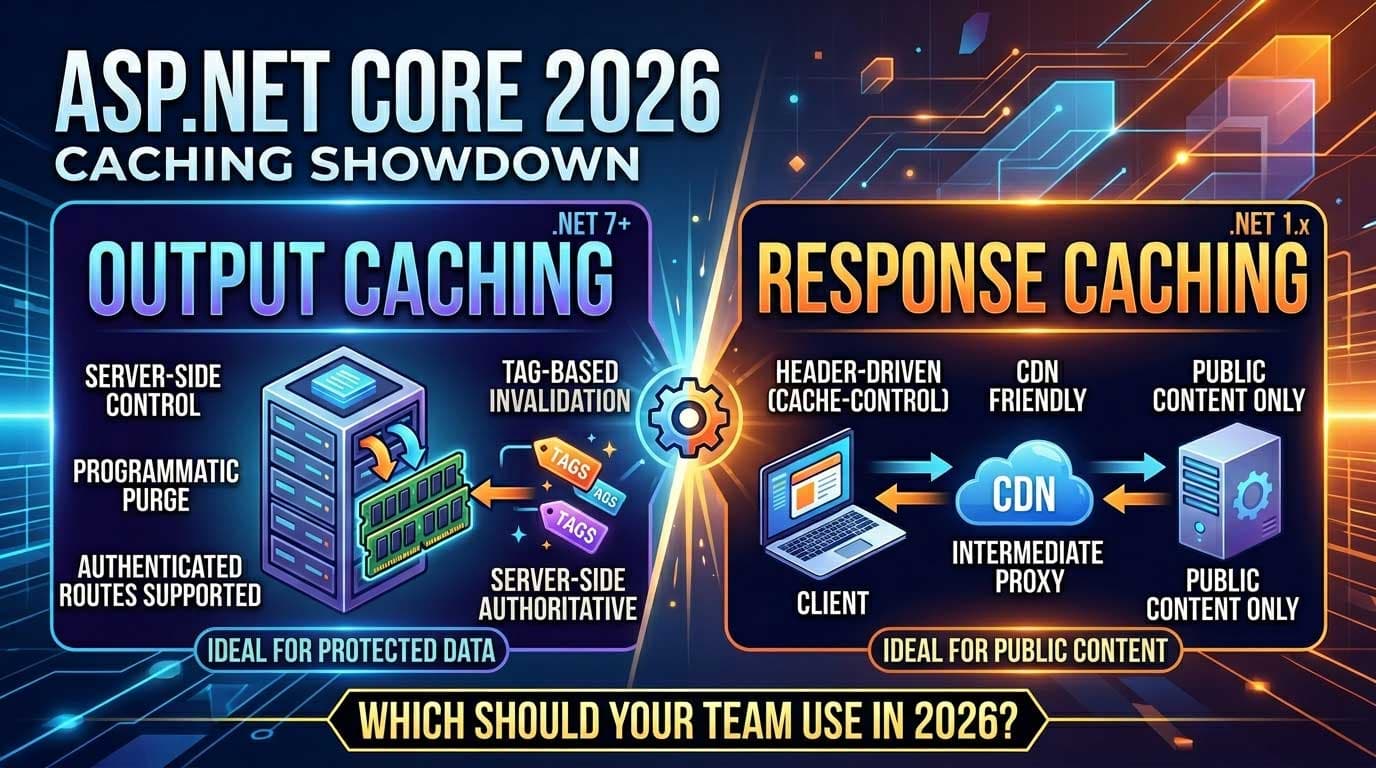
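You can observe the burst behavior directly with the TokenBucketRateLimiter type from System.Threading.RateLimiting. The example below is a console app that references the System.Threading.RateLimiting package; ASP.NET Core projects already have it. The dashboard budget (20 tokens, plus 5 more every 10 seconds) is an illustrative assumption. AutoReplenishment is turned off so that nothing refills during the run and the result is deterministic.

```csharp
using System;
using System.Threading.RateLimiting;

// Hypothetical dashboard budget: burst of up to 20 requests, then 5 tokens every 10 seconds.
var options = new TokenBucketRateLimiterOptions
{
    TokenLimit = 20,
    TokensPerPeriod = 5,
    ReplenishmentPeriod = TimeSpan.FromSeconds(10),
    QueueLimit = 0,
    AutoReplenishment = false // no background timer, so this demo is deterministic
};
using var limiter = new TokenBucketRateLimiter(options);

// Simulate 25 widget requests fired at once when the dashboard loads.
int granted = 0, rejected = 0;
for (int i = 0; i < 25; i++)
{
    using RateLimitLease lease = limiter.AttemptAcquire(1);
    if (lease.IsAcquired) granted++; else rejected++;
}

Console.WriteLine($"granted={granted}, rejected={rejected}"); // granted=20, rejected=5
```

The bucket starts full, so the first 20 calls succeed and the remaining 5 are rejected. With automatic replenishment enabled, 5 tokens would return every 10 seconds. That is the steady-state rate the dashboard settles into.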

How does rate limiting interact with ASP.NET Core's output caching?

Output caching and rate limiting both sit in the middleware pipeline, but they serve different purposes and operate independently. Output caching returns cached responses directly without hitting the controller — meaning cached responses can bypass rate limiting if output caching middleware is placed before the rate limiter in the pipeline. The correct ordering is: rate limiting middleware first (before output caching), so throttled clients are rejected even when a cached response is available. This prevents the caching layer from masking rate limit exhaustion.
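Here is a sketch of that ordering, assuming a hypothetical /catalog endpoint cached for 30 seconds and a "fixed" policy. Because UseRateLimiter() runs first, a throttled client receives a 429 even when a cached copy of the response exists.

```csharp
using Microsoft.AspNetCore.RateLimiting;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddOutputCache();
builder.Services.AddRateLimiter(options =>
{
    options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;
    options.AddFixedWindowLimiter("fixed", o =>
    {
        o.PermitLimit = 60;
        o.Window = TimeSpan.FromMinutes(1);
    });
});

var app = builder.Build();

app.UseRateLimiter();  // throttled callers are rejected here...
app.UseOutputCache();  // ...before a cached response could be served to them

app.MapGet("/catalog", () => Results.Ok(new[] { "item-1", "item-2" }))
   .CacheOutput(policy => policy.Expire(TimeSpan.FromSeconds(30)))
   .RequireRateLimiting("fixed");

app.Run();
```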

Is rate limiting enforced in integration tests that use WebApplicationFactory?

Yes. WebApplicationFactory runs the full middleware pipeline, including rate limiting. For tests that need to verify rate limiting behavior, this is exactly what you want. For tests of unrelated functionality, read the limits from configuration and override them with very high values in the test host. Checking a flag in OnRejected does not help, because that callback only runs after a request has already been rejected. Adding [DisableRateLimiting] to the endpoints under test would also remove the limits in production.
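Here is a sketch of the configuration-driven approach. It assumes xUnit, Microsoft.AspNetCore.Mvc.Testing, and a Program.cs that reads the limit inside the AddRateLimiter callback, for example o.PermitLimit = builder.Configuration.GetValue("RateLimiting:PermitLimit", 100). The configuration key and the /api/catalog route are hypothetical. Reading the value inside the callback matters: the callback runs when the options are first resolved, so the test's override should be visible by then.

```csharp
using System.Net;
using Microsoft.AspNetCore.Hosting;
using Microsoft.AspNetCore.Mvc.Testing;
using Xunit;

public class CatalogTests : IClassFixture<WebApplicationFactory<Program>>
{
    private readonly WebApplicationFactory<Program> _factory;

    // Raise the (hypothetical) configured limit far above anything these tests send.
    public CatalogTests(WebApplicationFactory<Program> factory) =>
        _factory = factory.WithWebHostBuilder(webHost =>
            webHost.UseSetting("RateLimiting:PermitLimit", "100000"));

    [Fact]
    public async Task Catalog_returns_items_without_hitting_the_rate_limit()
    {
        using HttpClient client = _factory.CreateClient();
        for (int i = 0; i < 500; i++)
        {
            using HttpResponseMessage response = await client.GetAsync("/api/catalog");
            Assert.Equal(HttpStatusCode.OK, response.StatusCode);
        }
    }
}
```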

What happens to queued requests when the server shuts down?

Requests queued by the rate limiting middleware (when QueueLimit is greater than zero) are held in memory. On graceful shutdown, ASP.NET Core will attempt to drain the request pipeline, but queued requests that have not been dequeued by the time the shutdown timeout expires will receive a 503 Service Unavailable. In production, it is important to set QueueLimit conservatively — a large queue increases memory pressure and extends shutdown drain time.