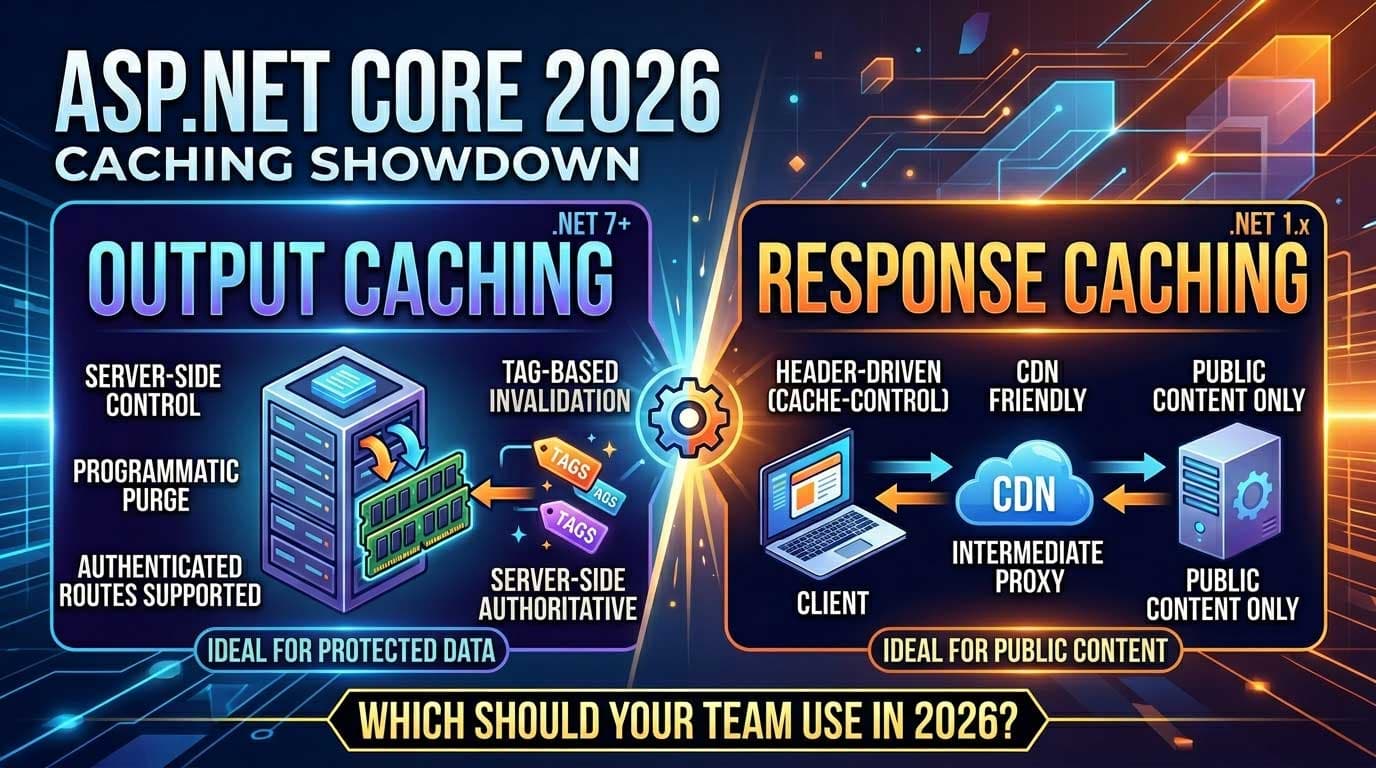

Output Caching vs Response Caching in ASP.NET Core: Which Should Your Team Use in 2026?

ASP.NET Core offers two distinct HTTP-level caching mechanisms — Output Caching and Response Caching — and teams frequently conflate them or reach for the wrong one. Both serve the same broad goal (cache HTTP responses to reduce redundant processing), but they differ significantly in where control lives, what they can actually do, and when each is the right tool. Getting this decision wrong means either over-engineering a simple cache or shipping a caching layer that silently breaks under real-world conditions.

The complete caching picture — combining Output Caching with IMemoryCache, IDistributedCache, and HybridCache into a coherent per-API strategy — is covered in Chapter 9 of the ASP.NET Core Web API: Zero to Production course, where all four mechanisms are wired together in a single working codebase with tag-based invalidation already connected.

For developers who want production-ready implementations alongside the theory, Patreon has complete, annotated source code covering both mechanisms with real invalidation patterns and edge-case handling you can drop straight into your project.

What Problem Are These Two Mechanisms Actually Solving?

Before comparing them, it helps to be precise about what each one does at the HTTP layer.

Response Caching works by instructing clients and intermediate proxies (CDNs, reverse proxies) what to cache using standard HTTP cache headers — Cache-Control, Vary, ETag, and Last-Modified. The server sets the headers; the client or proxy does the caching. The server itself may also cache responses in memory if UseResponseCaching() is in the middleware pipeline, but the core model is header-driven and relies on HTTP caching semantics.

Output Caching (introduced in .NET 7 and extended in .NET 8) stores the response on the server, in memory by default, controlled entirely by your application code. The client receives no cache headers by default. The server intercepts the next matching request before it reaches your endpoint and returns the stored response directly. The decision logic is yours, not the client's.

The fundamental difference: Response Caching is collaborative (client and server share responsibility). Output Caching is authoritative (the server owns everything).

Side-by-Side Comparison

| Dimension | Response Caching | Output Caching |
|---|---|---|
| Cache location | Client / CDN / proxy + optionally server | Server only |
| Control | HTTP headers (Cache-Control, Vary) | Application code + policies |
| Introduced | ASP.NET Core 1.x | .NET 7 |
| Invalidation | TTL expiry only | TTL + tag-based programmatic eviction |
| Vary by | Vary header (limited) | Any query param, header, route value, custom |
| Works behind CDN | ✅ Yes, CDN honours headers | ⚠️ Only if CDN passes through to origin |
| Authenticated routes | ❌ Server middleware skips requests with an Authorization header | ✅ Supported via a custom policy (the default policy skips authenticated requests) |
| Programmatic purge | ❌ Not possible | ✅ IOutputCacheStore.EvictByTagAsync() |
| Setup complexity | Low | Low–Medium |

Response Caching: How It Works and When It Fits

Response Caching emits Cache-Control headers and optionally caches on the server via UseResponseCaching(). The middleware respects the standard HTTP caching contract: if the response is marked no-store or private, sets a cookie, or the request carries an Authorization header, it will not cache.

A minimal setup looks like this:

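```csharp
// Program.cs
builder.Services.AddResponseCaching(); // registers the services UseResponseCaching() relies on

app.UseResponseCaching();

// Controller action
[HttpGet]
[ResponseCache(Duration = 60, Location = ResponseCacheLocation.Any)]
public IActionResult GetPublicData() { ... }
```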

Duration = 60 with Location = ResponseCacheLocation.Any emits Cache-Control: public, max-age=60, so browsers and intermediate CDNs can reuse this response for up to 60 seconds without contacting your origin server.
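To see exactly which directives that asks for, the sketch below builds and parses an equivalent header with CacheControlHeaderValue from System.Net.Http.Headers, a type that ships with .NET. It is an illustration only: [ResponseCache] writes its header through the MVC filter pipeline, and its exact spacing may differ (for example public,max-age=60), which caches treat identically. The CacheControlDemo class name is hypothetical.

```csharp
using System;
using System.Net.Http.Headers;

public static class CacheControlDemo
{
    // Builds the directives that Duration = 60 with ResponseCacheLocation.Any asks for:
    // "public" (any cache, including a CDN, may store it) plus a freshness lifetime.
    public static string Build(int durationSeconds) =>
        new CacheControlHeaderValue
        {
            Public = true,
            MaxAge = TimeSpan.FromSeconds(durationSeconds)
        }.ToString();

    // Reads a header value the way a client or proxy would interpret it.
    public static (bool IsPublic, TimeSpan? MaxAge) Read(string headerValue)
    {
        CacheControlHeaderValue parsed = CacheControlHeaderValue.Parse(headerValue);
        return (parsed.Public, parsed.MaxAge);
    }
}
```

CacheControlDemo.Build(60) produces "public, max-age=60", and Read("public,max-age=60") reports a public response with a 60-second max-age, showing the two spellings mean the same thing to a cache.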

Where it fits:

- Public, unauthenticated content — product listings, public API responses, marketing pages

- Scenarios where you want a CDN or reverse proxy to absorb the load

- Simple TTL-based expiry with no invalidation requirements

- Teams already using Cache-Control headers as part of their API contract

Where it breaks down:

- Authenticated endpoints: the Response Caching middleware will not store responses to requests that carry an Authorization header, and marking per-user responses public lets shared caches (CDNs, proxies) store them and potentially serve them to other users

- Any scenario requiring programmatic invalidation: you cannot evict a cached entry when data changes

- Fine-grained vary logic: the Vary header is blunt, so you cannot vary by an arbitrary combination of conditions
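The authenticated-endpoint risk comes from how HTTP defines shared caches. The sketch below is a deliberately simplified model of the RFC 9111 storage rules a CDN or proxy follows. It is not a library API and not how any particular CDN is implemented, and SharedCacheRules is a hypothetical name. It shows why Location = ResponseCacheLocation.Any, which emits public, is dangerous on per-user endpoints: public is one of the directives that lets a shared cache store a response to a request carrying Authorization. ResponseCacheLocation.Client emits private instead.

```csharp
using System.Net.Http.Headers;

public static class SharedCacheRules
{
    // Simplified reading of RFC 9111 for a shared cache (CDN or reverse proxy).
    // It only models no-store, private, and the Authorization rule in section 3.5;
    // real caches also check status codes, freshness information, and more.
    public static bool MayStoreInSharedCache(bool requestHasAuthorization, CacheControlHeaderValue response)
    {
        // no-store forbids any cache from keeping the response.
        if (response.NoStore) return false;

        // private restricts storage to the end user's own (browser) cache.
        if (response.Private) return false;

        // Responses to requests with an Authorization header are off limits to shared
        // caches unless the response opts in with public, s-maxage, or must-revalidate.
        if (requestHasAuthorization)
            return response.Public || response.SharedMaxAge.HasValue || response.MustRevalidate;

        return true;
    }
}
```

With an Authorization header present, a response parsed from "public, max-age=60" is storable by a shared cache, while "private, max-age=60" and a bare "max-age=60" are not.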

Output Caching: How It Works and When It Fits

Output Caching intercepts the request pipeline before your endpoint runs and returns a cached response if a match exists. The cache is stored entirely on the server. Clients are unaware of it.

Setup:

```csharp
// Program.cs
builder.Services.AddOutputCache(options =>
{
    // Named policy: 5-minute lifetime, one entry per page/pageSize/sortBy combination,
    // and a tag so related entries can be evicted together.
    options.AddPolicy("products", p => p
        .Expire(TimeSpan.FromMinutes(5))
        .SetVaryByQuery("page", "pageSize", "sortBy")
        .Tag("products-tag"));
});

app.UseOutputCache();

// Controller action
[HttpGet]
[OutputCache(PolicyName = "products")]
public async Task<IActionResult> GetProducts([FromQuery] ProductQueryParams q) { ... }
```

When the product data changes, you evict by tag:

```csharp
// Injected into your service or repository
await _outputCacheStore.EvictByTagAsync("products-tag", cancellationToken);
```

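⚠️ Output Caching, including IOutputCacheStore and tag-based eviction, requires .NET 7 or later. On earlier versions the Output Caching middleware does not exist, so Response Caching's TTL-based model is the only built-in HTTP-level option.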
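The eviction call usually sits right next to the write that changes the data. Below is a self-contained Minimal API sketch of the products example, assuming a .NET 8 Microsoft.NET.Sdk.Web project with implicit usings enabled; ProductRepository and Product are hypothetical stand-ins for your own data layer.

```csharp
using Microsoft.AspNetCore.OutputCaching;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddOutputCache(options =>
{
    options.AddPolicy("products", p => p
        .Expire(TimeSpan.FromMinutes(5))
        .Tag("products-tag"));
});

// Hypothetical in-memory repository standing in for a real data layer.
builder.Services.AddSingleton<ProductRepository>();

var app = builder.Build();
app.UseOutputCache();

// Cached read: served from the output cache until expiry or eviction.
app.MapGet("/products", (ProductRepository repo) => repo.GetAll())
   .CacheOutput("products");

// Write: change the data first, then evict every entry tagged "products-tag"
// so the next GET runs the handler again and caches fresh data.
app.MapPost("/products", async (Product product, ProductRepository repo,
                                IOutputCacheStore cache, CancellationToken ct) =>
{
    repo.Add(product);
    await cache.EvictByTagAsync("products-tag", ct);
    return Results.Created($"/products/{product.Id}", product);
});

app.Run();

public record Product(int Id, string Name);

public class ProductRepository
{
    private readonly List<Product> _items = new();
    private readonly object _gate = new();

    public IReadOnlyList<Product> GetAll()
    {
        lock (_gate) return _items.ToList();
    }

    public void Add(Product product)
    {
        lock (_gate) _items.Add(product);
    }
}
```

Evicting after the write, rather than before, narrows the window in which a concurrent GET could re-cache the old data.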

Where it fits:

- Authenticated API endpoints that Response Caching cannot serve (the default Output Caching policy also skips authenticated requests, so these endpoints need a custom policy)

- Read-heavy endpoints with predictable variation (paginated lists, filtered results)

- Scenarios where you need to invalidate the cache when underlying data changes (evict-on-write)

- Teams who want all cache logic in application code, not HTTP headers
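Out of the box, Output Caching's default policy only caches GET and HEAD requests that return 200, and it skips requests from authenticated users as well as responses that set cookies. The sketch below shows one way to lift that restriction with a custom IOutputCachePolicy; PerUserCachePolicy is a hypothetical name. It re-enables caching for identified users and adds their NameIdentifier claim to the cache key through CacheVaryByRules.VaryByValues, so entries are never shared across users. It assumes a .NET 8 Web SDK project; register it with options.AddPolicy("per-user", PerUserCachePolicy.Instance), and place app.UseOutputCache() after app.UseAuthentication() and app.UseAuthorization() so HttpContext.User is populated and authorization runs before a cached response is served.

```csharp
using System;
using System.Security.Claims;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.AspNetCore.Http;
using Microsoft.AspNetCore.OutputCaching;

// Hypothetical policy: caches GET/HEAD responses for authenticated users, keyed per user.
public sealed class PerUserCachePolicy : IOutputCachePolicy
{
    public static readonly PerUserCachePolicy Instance = new();
    private PerUserCachePolicy() { }

    ValueTask IOutputCachePolicy.CacheRequestAsync(OutputCacheContext context, CancellationToken cancellation)
    {
        HttpContext http = context.HttpContext;
        bool isRead = HttpMethods.IsGet(http.Request.Method) || HttpMethods.IsHead(http.Request.Method);
        string? userId = http.User.FindFirst(ClaimTypes.NameIdentifier)?.Value;

        // Only reads from identified users are cached; everything else bypasses the cache.
        bool cacheable = isRead && userId is not null;
        context.EnableOutputCaching = true;
        context.AllowCacheLookup = cacheable;
        context.AllowCacheStorage = cacheable;
        context.AllowLocking = true;
        context.ResponseExpirationTimeSpan = TimeSpan.FromMinutes(1);

        if (userId is not null)
        {
            // Part of the cache key, so one user's entry is never served to another user.
            context.CacheVaryByRules.VaryByValues["user"] = userId;
        }

        // Treat each distinct query string as a separate entry.
        context.CacheVaryByRules.QueryKeys = "*";
        return ValueTask.CompletedTask;
    }

    ValueTask IOutputCachePolicy.ServeFromCacheAsync(OutputCacheContext context, CancellationToken cancellation)
        => ValueTask.CompletedTask;

    ValueTask IOutputCachePolicy.ServeResponseAsync(OutputCacheContext context, CancellationToken cancellation)
    {
        HttpResponse response = context.HttpContext.Response;

        // Do not store error responses or responses that set cookies.
        if (response.StatusCode != StatusCodes.Status200OK || response.Headers.ContainsKey("Set-Cookie"))
        {
            context.AllowCacheStorage = false;
        }
        return ValueTask.CompletedTask;
    }
}
```

The short one-minute expiry is a deliberate choice here: per-user entries multiply quickly, so letting them age out limits memory growth.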

Where it breaks down:

- You want CDNs to cache responses at the edge — Output Cache is server-side only

- Very high-cardinality cache keys (unique per user per request) — memory grows without benefit

- Streaming responses or large payloads — Output Cache buffers the entire response in memory
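Both memory risks can be bounded through OutputCacheOptions. This Program.cs fragment assumes the usual builder variable; the values are illustrative, and the comments note the documented defaults.

```csharp
// Program.cs
builder.Services.AddOutputCache(options =>
{
    // Cap on total storage for cached responses (documented default: 100 MB).
    // Once reached, new responses are not cached until older entries are evicted.
    options.SizeLimit = 50 * 1024 * 1024;

    // Responses with larger bodies are not cached at all (documented default: 64 MB).
    options.MaximumBodySize = 1 * 1024 * 1024;

    // Expiry used when a policy does not set its own (documented default: 60 seconds).
    options.DefaultExpirationTimeSpan = TimeSpan.FromSeconds(30);
});
```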

The Decision Matrix

Use this to make the call quickly:

| Scenario | Use |
|---|---|
| Public content, CDN in front | Response Caching |
| Authenticated endpoints | Output Caching (with a custom policy) |
| Need programmatic invalidation | Output Caching |
| Want CDN to absorb load at edge | Response Caching |
| Complex vary logic (query + route + header) | Output Caching |
| Simple TTL, no invalidation needed | Either (Response Caching is simpler) |
| API behind nginx/YARP with no CDN | Output Caching |

Can You Use Both Together?

Yes — and in many production APIs you should. The typical pattern:

- Output Caching for authenticated or write-invalidatable endpoints (product lists, user-specific aggregates)

- Response Caching headers on public, static-ish endpoints (public catalogue, metadata endpoints) where CDN edge caching is desirable

They do not conflict as long as you are intentional about which applies where. Applying both to the same endpoint is possible but rarely useful — Output Cache will intercept first on the server, and the client may also cache based on headers.
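Here is a minimal sketch of that split in a Minimal API, assuming a .NET 8 Web SDK project with implicit usings. /products uses a named output-cache policy, while /metadata (a hypothetical endpoint) only sets a Cache-Control header through GetTypedHeaders() so a CDN or browser can cache it. Because [ResponseCache] is applied through the MVC filter pipeline, this sketch sets the header directly instead.

```csharp
using Microsoft.Net.Http.Headers;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddOutputCache(options =>
    options.AddPolicy("products", p => p
        .Expire(TimeSpan.FromMinutes(5))
        .Tag("products-tag")));

var app = builder.Build();
app.UseOutputCache();

// Output Caching: stored on the server and evictable by tag;
// no Cache-Control header is added for clients.
app.MapGet("/products", () => new[] { "widget", "gadget" })
   .CacheOutput("products");

// Header-only caching: browsers and CDNs may reuse this for an hour,
// and nothing is stored on this server.
app.MapGet("/metadata", (HttpContext http) =>
{
    http.Response.GetTypedHeaders().CacheControl = new CacheControlHeaderValue
    {
        Public = true,
        MaxAge = TimeSpan.FromHours(1)
    };
    return Results.Ok(new { Version = "1.0" });
});

app.Run();
```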

A Common Mistake: Confusing [ResponseCache] with Output Cache

[ResponseCache] is an attribute that emits Cache-Control headers. It does not store anything on your server unless UseResponseCaching() middleware is also in the pipeline and the response qualifies.

[OutputCache] is a completely different mechanism — it stores the response server-side regardless of what headers the client sends or supports.

Teams that apply [ResponseCache] expecting server-side caching behaviour are often surprised when nothing gets cached. The attribute alone only controls headers.
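One way to see the difference for yourself: the hypothetical controller below counts how often each action body actually runs. It assumes AddControllers(), AddOutputCache(), UseOutputCache(), and MapControllers() are already wired up and that UseResponseCaching() is not in the pipeline. Calling both endpoints repeatedly with a client that ignores caching headers, such as curl, the header-only counter should climb on every call while the output-cached counter should stay put until its 60-second entry expires.

```csharp
using System.Threading;
using Microsoft.AspNetCore.Mvc;
using Microsoft.AspNetCore.OutputCaching;

[ApiController]
[Route("api/cache-demo")]
public class CacheDemoController : ControllerBase
{
    // Static counters record how many times each action body actually executes.
    private static int _headerOnlyCalls;
    private static int _outputCachedCalls;

    // Only adds a Cache-Control header (public, max-age=60). Without UseResponseCaching(),
    // the server runs this action for every request that reaches it.
    [HttpGet("header-only")]
    [ResponseCache(Duration = 60, Location = ResponseCacheLocation.Any)]
    public IActionResult HeaderOnly() =>
        Ok(new { calls = Interlocked.Increment(ref _headerOnlyCalls) });

    // Stored server-side by the output cache middleware; repeat requests within
    // 60 seconds are answered from the cache without running this action.
    [HttpGet("output-cached")]
    [OutputCache(Duration = 60)]
    public IActionResult OutputCached() =>
        Ok(new { calls = Interlocked.Increment(ref _outputCachedCalls) });
}
```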

💻 Working Code Sample

The dotnet-response-caching repository on GitHub demonstrates ResponseCache in a working Minimal API — useful for seeing the header output and middleware wiring in isolation before layering in Output Caching.

Frequently Asked Questions

Is Output Caching a replacement for Response Caching?

For server-side caching, yes. Output Caching is the modern, server-controlled replacement for UseResponseCaching(). But it does not replace the HTTP header semantics that Response Caching provides for CDNs and browsers. They solve different parts of the caching problem.

Does Output Caching work with Minimal APIs?

Yes. Use [OutputCache(PolicyName = "...")] as an attribute on Minimal API endpoint handlers, or call .CacheOutput() in the route definition fluently.
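Both forms side by side, as a sketch assuming a .NET 8 Web SDK project with implicit usings; the /products/* routes and the "products" policy are illustrative. CacheOutput also accepts an inline policy builder when a rule is used in only one place.

```csharp
using Microsoft.AspNetCore.OutputCaching;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddOutputCache(options =>
    options.AddPolicy("products", p => p.Expire(TimeSpan.FromMinutes(5))));

var app = builder.Build();
app.UseOutputCache();

// Attribute form: the attribute decorates the handler lambda itself.
app.MapGet("/products/attribute",
    [OutputCache(PolicyName = "products")] () => "cached via attribute");

// Fluent form with a named policy.
app.MapGet("/products/named", () => "cached via named policy")
   .CacheOutput("products");

// Fluent form with an inline policy, useful for one-off rules.
app.MapGet("/products/inline", () => "cached via inline policy")
   .CacheOutput(p => p.Expire(TimeSpan.FromSeconds(30)));

app.Run();
```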

Can Output Cache store per-user responses?

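Yes, but a vary rule alone is not enough. The default policy skips requests that carry an Authorization header or come from an authenticated user, so per-user caching needs a custom IOutputCachePolicy that re-enables caching and adds the user identity to the cache key (for example through CacheVaryByRules.VaryByValues). .SetVaryByHeader("Authorization") only helps once such a policy allows the request to be cached. Watch memory, too: per-user caching only pays off for expensive-to-compute aggregates that the same user requests repeatedly.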

What happens to Output Cache entries on app restart?

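The default in-process store loses all entries on restart, and each instance in a scaled-out deployment keeps its own copy. To share entries across instances and survive restarts and deployments, back the output cache with Redis: on .NET 7 that means implementing IOutputCacheStore yourself, while .NET 8 and later ship a Redis-backed store in the Microsoft.AspNetCore.OutputCaching.StackExchangeRedis package.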
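On .NET 8 and later, switching to the Redis store is a registration call. This Program.cs fragment assumes the Microsoft.AspNetCore.OutputCaching.StackExchangeRedis package is referenced and that a connection string named "Redis" exists in configuration; both that name and the "MyApi:" key prefix are placeholders.

```csharp
// Program.cs (requires the Microsoft.AspNetCore.OutputCaching.StackExchangeRedis package)
builder.Services.AddStackExchangeRedisOutputCache(options =>
{
    // Hypothetical connection string name; point this at your Redis instance.
    options.Configuration = builder.Configuration.GetConnectionString("Redis");

    // Key prefix so several apps can share one Redis instance.
    options.InstanceName = "MyApi:";
});

// Policies are still registered through AddOutputCache as before.
builder.Services.AddOutputCache(options =>
{
    options.AddPolicy("products", p => p
        .Expire(TimeSpan.FromMinutes(5))
        .Tag("products-tag"));
});
```

With a shared store, an EvictByTagAsync call on one instance should clear the tagged entries for every instance reading from the same Redis.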

Should I disable Response Caching middleware if I use Output Caching?

If you are not relying on server-side UseResponseCaching() behaviour, remove it from the pipeline to avoid confusion. Keep [ResponseCache] attributes only if you intentionally want to emit Cache-Control headers for CDN consumption.

Which is better for rate-limited external API calls?

Output Caching. You control exactly when to evict and can cache aggressively without worrying about client behaviour overriding it.