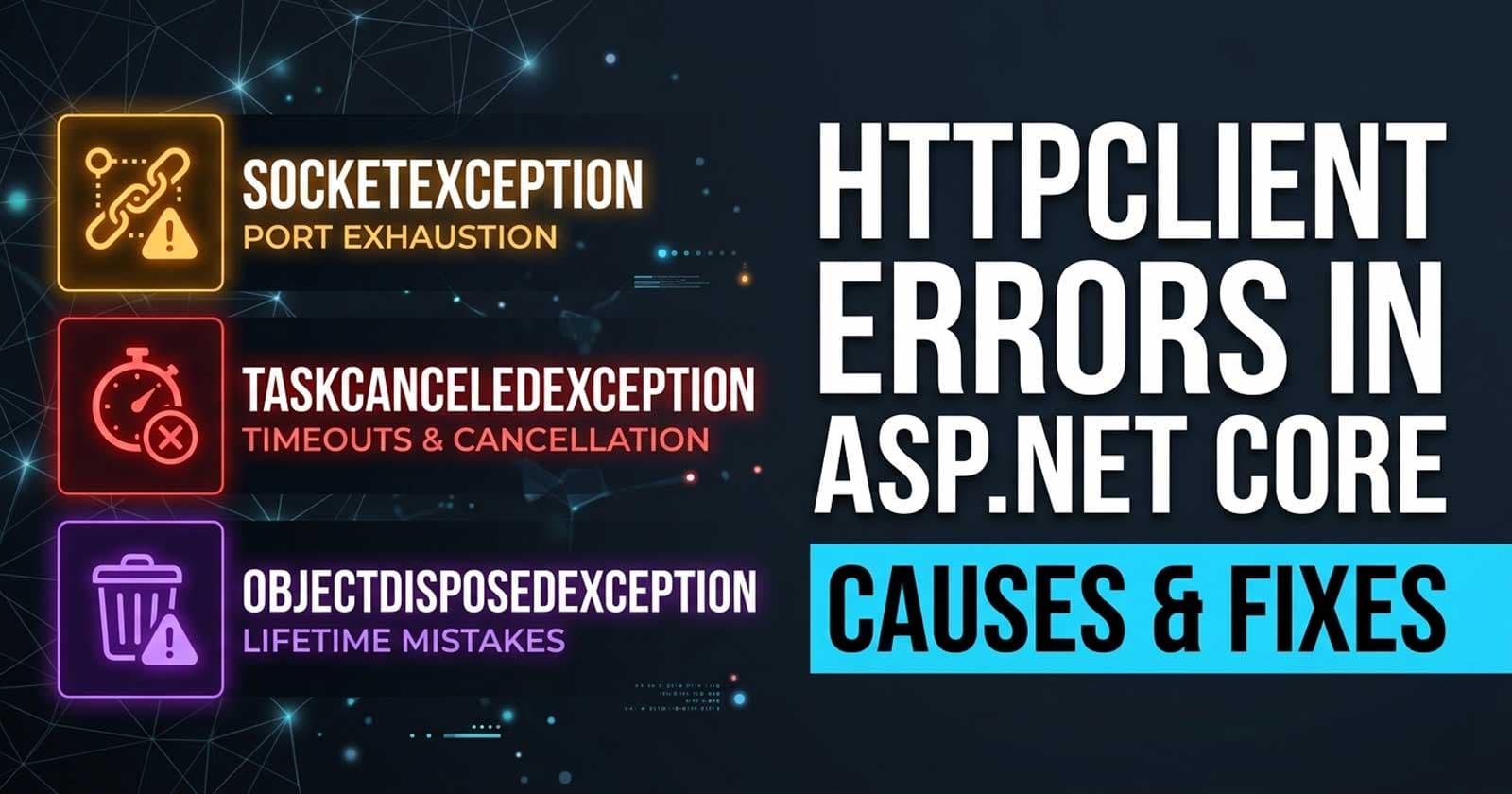

HttpClient Errors in ASP.NET Core: SocketException, TaskCanceledException, and ObjectDisposedException — Causes and Fixes

HttpClient is one of the most widely used classes in .NET, and it is also one of the most frequently misused. When things go wrong, the errors are cryptic enough that many teams spend hours chasing the wrong root cause, treating symptoms like intermittent SocketException, mysterious TaskCanceledException under load, or an ObjectDisposedException that only appears in production. If your ASP.NET Core API is throwing any of these, the underlying patterns that cause them are well understood, and the fixes are straightforward once you know what to look for.

Understanding why these errors happen in the first place means understanding how HttpClient manages connections, how the default timeout interacts with cancellation, and what the DI container does — and does not — guarantee about object lifetimes. Getting all three right is what separates a stable, production-grade integration layer from one that fails unpredictably under load.

What These Errors Actually Mean

Before diving into causes and fixes, it helps to establish a precise definition for each exception, because they are often confused with each other or incorrectly attributed.

SocketException surfaces as the inner exception of an HttpRequestException. Its message typically reads "An attempt was made to access a socket in a way forbidden by its access permissions" or "No connection could be made because the target machine actively refused it." The key signal is in the error code rather than the message: WSAENOBUFS (10055) on Windows or "Too many open files" (EMFILE) on Linux points directly to socket exhaustion, whereas an actively refused connection usually means the target is down or not listening.

TaskCanceledException is thrown when an HttpClient request is cancelled. The confusing part is that it is thrown for two distinct reasons — an explicit cancellation via a CancellationToken, and a timeout expiry. Before .NET 5, there was no reliable way to distinguish between the two programmatically. From .NET 5 onwards, you can check exception.CancellationToken.IsCancellationRequested versus inspecting the inner TimeoutException to separate user-initiated cancellation from deadline expiry.

ObjectDisposedException with the message "Cannot access a disposed object" on an HttpClient instance is almost always caused by one of two patterns: disposing a client that was resolved from the DI container, or accessing response content after the response message has already been disposed.

Understanding which type you are dealing with is the first diagnostic step — and each has a completely different fix.

SocketException: The Port Exhaustion Problem

Why Socket Exhaustion Happens

The classic socket exhaustion scenario has been documented since 2016 but continues to appear in production today. The root cause is creating a new HttpClient instance per request using a using block.

When HttpClient is disposed, the underlying HttpClientHandler closes the connection. However, the TCP socket enters a TIME_WAIT state and is held by the operating system for a fixed period: commonly cited as up to 240 seconds on Windows, and 60 seconds on Linux. On a busy API that makes many outbound calls per second, this means thousands of sockets accumulate in TIME_WAIT, and the OS eventually rejects new connections.

The error you see varies by OS. On Windows it manifests as SocketException: An attempt was made to access a socket in a way forbidden by its access permissions. On Linux containers it typically appears as SocketException: Too many open files.

A related but less obvious cause is using a static HttpClient (to avoid disposal) but not configuring PooledConnectionLifetime on the handler. A static client reuses sockets correctly, but its pooled connections stay open for as long as they remain healthy, so DNS is never re-resolved for them. If the upstream service's IP address changes (common with load balancers and Kubernetes services), the static client can keep routing to stale addresses until the connections drop or the process restarts.
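As a minimal sketch of the static-client variant, the handler below recycles pooled connections on a fixed interval so that new connections pick up DNS changes. The class name SharedHttp, the two-minute lifetime, and the ten-second timeout are illustrative assumptions, not values taken from this article.

```csharp
using System;
using System.Net.Http;

// Hypothetical holder for a single process-wide client (name is illustrative).
public static class SharedHttp
{
    private static readonly SocketsHttpHandler Handler = new SocketsHttpHandler
    {
        // Pooled connections are retired after this interval, so the next
        // connection to the host performs a fresh DNS lookup.
        PooledConnectionLifetime = TimeSpan.FromMinutes(2)
    };

    // One long-lived client: sockets are reused instead of piling up in TIME_WAIT.
    public static readonly HttpClient Client = new HttpClient(Handler)
    {
        // Assumed value; pick one that matches the downstream SLA.
        Timeout = TimeSpan.FromSeconds(10)
    };
}
```

This only applies when IHttpClientFactory is not in use; with the factory, handler rotation plays the same role.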

The Fix: IHttpClientFactory

IHttpClientFactory, introduced in .NET Core 2.1, solves both problems. It manages a pool of HttpMessageHandler instances, reusing the underlying socket connections while rotating handlers according to a configurable HandlerLifetime (default: two minutes). This keeps connections alive long enough to amortise the cost of establishment, while ensuring DNS changes are picked up regularly.

Register typed or named clients in Program.cs, inject the factory or the typed client into your services, and never instantiate HttpClient directly in application code. The related IHttpClientFactory in ASP.NET Core decision guide on Coding Droplets covers the three client registration patterns in detail — named, typed, and basic — with the trade-offs for each.
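A typed client registration might look roughly like the sketch below. CatalogClient, the base address, the route, and the five-second timeout are hypothetical placeholders; only AddHttpClient, SetHandlerLifetime, and the minimal API calls are real framework APIs.

```csharp
using System;
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.DependencyInjection;

var builder = WebApplication.CreateBuilder(args);

builder.Services
    .AddHttpClient<CatalogClient>(client =>
    {
        // Assumed endpoint and timeout, for illustration only.
        client.BaseAddress = new Uri("https://catalog.example.internal/");
        client.Timeout = TimeSpan.FromSeconds(5);
    })
    // Two minutes is already the default; shown here to make the knob visible.
    .SetHandlerLifetime(TimeSpan.FromMinutes(2));

var app = builder.Build();

// The typed client is injected per request; the factory supplies its handler.
app.MapGet("/products/{id}", (string id, CatalogClient catalog, CancellationToken ct) =>
    catalog.GetProductJsonAsync(id, ct));

app.Run();

// Hypothetical typed client: receives an HttpClient built by the factory.
public sealed class CatalogClient
{
    private readonly HttpClient _http;

    public CatalogClient(HttpClient http) => _http = http;

    public Task<string> GetProductJsonAsync(string id, CancellationToken ct) =>
        _http.GetStringAsync($"products/{Uri.EscapeDataString(id)}", ct);
}
```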

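The key operational check: if you are still seeing socket exhaustion after adopting IHttpClientFactory, verify that no code path is still creating and disposing its own HttpClient or handler instances outside the factory. Calling Dispose() on a client retrieved from the factory is unnecessary: the factory tracks and disposes the underlying handlers itself, and disposing the client only cancels its outstanding requests and prevents that instance from being used again.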

Diagnosing Socket Exhaustion in a Running Process

On Linux, ss -s shows the number of sockets in TIME_WAIT. On Windows, netstat -an | findstr TIME_WAIT | find /c /v "" gives the count. If this number climbs continuously under load and correlates with request rate, socket exhaustion is the root cause.

In .NET's diagnostic tooling, dotnet-counters monitor --process-id <pid> --counters System.Net.Http exposes http11-connections-current-total, http11-requests-queue-duration, and related metrics. A queue duration that grows under load, while the connection total stays flat, confirms the pool is saturated.

TaskCanceledException: Timeouts vs. Cancellations

The Default Timeout Is 100 Seconds

HttpClient's default timeout is 100 seconds. For most internal API calls this is far too generous — a downstream service that takes 90 seconds to respond has almost certainly failed silently. But more importantly, when this timeout fires, the exception type is TaskCanceledException, not TimeoutException. This misleads logging and alerting pipelines into treating timeouts as user-initiated cancellations.

The right approach is to set an explicit timeout appropriate for the downstream SLA, and to instrument which cancellation reason fired. From .NET 5+, inspect the inner exception: if the inner is a TimeoutException, the HttpClient timeout fired. If exception.CancellationToken.IsCancellationRequested is true with no inner TimeoutException, the caller's token was cancelled — typically because the user disconnected or the parent request timed out.
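The check described above can be sketched as a small helper for logging and alerting code. The enum and class names are hypothetical; the logic relies only on the .NET 5+ behaviour of attaching a TimeoutException as the inner exception when HttpClient.Timeout fires.

```csharp
using System;
using System.Threading;

public enum CancellationCause { Timeout, CallerCancelled, Unknown }

// Hypothetical helper (not a framework type) for classifying a cancelled HTTP call.
public static class CancellationClassifier
{
    public static CancellationCause Classify(
        OperationCanceledException ex, CancellationToken callerToken)
    {
        // Check the inner exception first: on timeout the exception's own
        // token may also report cancellation.
        if (ex.InnerException is TimeoutException)
            return CancellationCause.Timeout;

        if (callerToken.IsCancellationRequested || ex.CancellationToken.IsCancellationRequested)
            return CancellationCause.CallerCancelled;

        return CancellationCause.Unknown;
    }
}
```

A catch block around the HTTP call can pass the caught exception and the caller's token to Classify and log the result as a structured property.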

CancellationToken Threading Issues in Background Services

A common source of false TaskCanceledException in background services and hosted jobs is linking the HttpClient call to the stoppingToken from BackgroundService.ExecuteAsync. When the host shuts down gracefully, it cancels the stoppingToken. If an in-flight HTTP request is using that token, it is cancelled. This is correct behaviour — but if it surfaces as an unhandled exception in your logging, it will look like an error.

The fix is to create a separate CancellationTokenSource for individual HTTP operations, with a timeout appropriate for that call, and link it to the stopping token using CancellationTokenSource.CreateLinkedTokenSource. This gives you a deadline per call while still respecting graceful shutdown.
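A minimal sketch of that pattern, written as a standalone helper so it does not depend on a particular hosted service. The class and method names are hypothetical, and returning null on shutdown is an assumption made for brevity; a real service might prefer to rethrow or log at a lower level.

```csharp
using System;
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper: one deadline per HTTP call, still honouring host shutdown.
public static class PerCallDeadline
{
    public static async Task<string?> GetWithDeadlineAsync(
        HttpClient http, string url, TimeSpan perCallTimeout, CancellationToken stoppingToken)
    {
        // Linked source: cancels when the host stops OR when the deadline passes.
        using var cts = CancellationTokenSource.CreateLinkedTokenSource(stoppingToken);
        cts.CancelAfter(perCallTimeout);

        try
        {
            return await http.GetStringAsync(url, cts.Token);
        }
        catch (OperationCanceledException) when (stoppingToken.IsCancellationRequested)
        {
            // Graceful shutdown is expected behaviour, not an error.
            return null;
        }
        // A deadline expiry (stoppingToken not cancelled) still propagates to the
        // caller as an OperationCanceledException so it can be logged or retried.
    }
}
```

Inside BackgroundService.ExecuteAsync, the stoppingToken parameter would be passed straight through as the last argument.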

Polly and Retry Loops

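If you have added Polly retry policies to your IHttpClientFactory configuration (which is the right thing to do), be careful about how timeouts reach the policy. A default retry policy will not retry on TaskCanceledException because Polly treats it as a deliberate cancellation. In addition, HttpClient.Timeout covers the whole call, including every retry made inside the handler pipeline, and the TaskCanceledException that wraps a TimeoutException is created by HttpClient itself once the pipeline has already been cancelled. To retry slow attempts, put a per-attempt timeout inside the pipeline and configure ShouldHandle to include the exception it raises (TimeoutRejectedException in Polly), or to include TaskCanceledException with an inner TimeoutException when the policy wraps the HttpClient call from outside, so that network timeouts are retried while user-initiated cancellations are not.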

AddStandardResilienceHandler() in the Microsoft.Extensions.Http.Resilience NuGet package (built on Polly v8, and not part of the SDK itself) handles this correctly by default: it configures a rate limiter, a total request timeout, retry, a circuit breaker, and a per-attempt timeout as a pipeline with sensible defaults. Hedging is available separately through AddStandardHedgingHandler(). If you are on an older stack using raw Polly, this distinction needs to be handled manually.
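A registration using the standard handler could be sketched as follows, assuming the Microsoft.Extensions.Http.Resilience package is referenced. The client name "inventory", the base address, and all numeric values are illustrative assumptions rather than recommended settings.

```csharp
using System;
using Microsoft.Extensions.DependencyInjection;

var services = new ServiceCollection();

services
    .AddHttpClient("inventory", client =>
    {
        // Assumed endpoint, for illustration only.
        client.BaseAddress = new Uri("https://inventory.example.internal/");
    })
    .AddStandardResilienceHandler(options =>
    {
        // Deadline for each individual attempt; an expired attempt is retried.
        options.AttemptTimeout.Timeout = TimeSpan.FromSeconds(2);

        // Deadline for the whole operation, including all retries.
        options.TotalRequestTimeout.Timeout = TimeSpan.FromSeconds(10);

        options.Retry.MaxRetryAttempts = 3;
    });

using var provider = services.BuildServiceProvider();
```

Note that HttpClient.Timeout still applies on top of this pipeline, so it should not be shorter than the total request timeout configured here.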

ObjectDisposedException: Lifetime and Disposal Mistakes

Disposing a Client Obtained From DI

The most common cause is wrapping a typed HttpClient — or any service wrapping it — in a using block. When the container manages a service's lifetime, calling Dispose() on it breaks the container's assumptions. The next injection of that service, or any code path that holds a reference to the same instance, will encounter the disposed object.

The rule is simple: never call Dispose() on anything resolved from the DI container. The container owns the lifetime. For HttpClient instances obtained from IHttpClientFactory, disposal is also unnecessary, because the factory tracks and disposes the underlying handlers itself.

Reading Content After Disposal

The second common cause is reading HttpResponseMessage.Content after the response message has been disposed. This typically happens when the response is returned from a helper method that wraps the request in a using block disposing the HttpResponseMessage before the caller reads the content.

The fix is to read and materialise the response content — await response.Content.ReadAsStringAsync() or deserialise it — before the response message goes out of scope. If the helper method needs to return content to the caller, read and return the content as a string or a deserialised object, not the raw HttpResponseMessage.
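As a sketch of the safe shape for such a helper, the method below disposes the response only after the body has been deserialised, and returns the object rather than the HttpResponseMessage. OrderDto, OrderApi, and the orders/{id} route are hypothetical names used for illustration.

```csharp
using System.Net.Http;
using System.Net.Http.Json;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical payload type.
public sealed record OrderDto(int Id, string Status);

public static class OrderApi
{
    public static async Task<OrderDto?> GetOrderAsync(
        HttpClient http, int id, CancellationToken ct)
    {
        // The using declaration disposes the response when the method exits,
        // which is after the content has been fully read below.
        using var response = await http.GetAsync($"orders/{id}", ct);
        response.EnsureSuccessStatusCode();

        // Materialise the body while the response is still alive.
        return await response.Content.ReadFromJsonAsync<OrderDto>(ct);
    }
}
```

The caller never sees the response object, so there is nothing left for it to read after disposal.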

IHttpClientFactory Scope Mismatch

There is a subtler ObjectDisposedException variant documented in Microsoft's IHttpClientFactory troubleshooting guide: data stored in HttpMessageHandler context (via HttpRequestMessage.Properties or the handler's own state) can "disappear" or persist unexpectedly when the DI scope on the handler does not match the application's scope. This surfaces as an ObjectDisposedException on the handler's scoped dependency.

The fix is to avoid injecting scoped services directly into HttpMessageHandler subclasses. Instead, use IHttpContextAccessor or retrieve scoped services explicitly via IServiceScopeFactory within the handler's SendAsync override.
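One way this could look is a delegating handler that resolves the scoped dependency from the current request's service provider at send time, instead of taking it through the constructor. ITenantContext, TenantHeaderHandler, and the X-Tenant-Id header are hypothetical names chosen for the example.

```csharp
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.DependencyInjection;

// Hypothetical scoped service that must match the current request's scope.
public interface ITenantContext
{
    string TenantId { get; }
}

public sealed class TenantHeaderHandler : DelegatingHandler
{
    private readonly IHttpContextAccessor _accessor;

    // Only the accessor (a singleton) is captured, never the scoped service.
    public TenantHeaderHandler(IHttpContextAccessor accessor) => _accessor = accessor;

    protected override Task<HttpResponseMessage> SendAsync(
        HttpRequestMessage request, CancellationToken cancellationToken)
    {
        // Resolve from the request's own scope at call time; this is null
        // outside an HTTP request (for example in a background job).
        var tenant = _accessor.HttpContext?.RequestServices.GetService<ITenantContext>();

        if (tenant is not null)
        {
            request.Headers.TryAddWithoutValidation("X-Tenant-Id", tenant.TenantId);
        }

        return base.SendAsync(request, cancellationToken);
    }
}
```

Wiring it up would involve AddHttpContextAccessor(), registering the handler as transient, and attaching it to the client with AddHttpMessageHandler<TenantHeaderHandler>().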

Are You Seeing EF Core Connection Issues Too?

If your API is throwing both HttpClient errors and EF Core connection pool errors under load, they often share the same root cause: resource exhaustion under concurrent pressure. The EF Core Connection Pool Exhaustion in ASP.NET Core post covers the diagnostic and fix pattern for the database side of this — it is worth reading alongside this one if you are dealing with both.

Diagnostic Decision Flow

When you encounter one of these errors in production, a structured approach to diagnosis saves time:

Start by identifying the exception type and, critically, the inner exception. A TaskCanceledException with an inner TimeoutException is a timeout issue, not a cancellation issue. An HttpRequestException with an inner SocketException and a "Too many open files" message is socket exhaustion.

For socket exhaustion: count TIME_WAIT sockets, check whether IHttpClientFactory is being used correctly, and verify nothing in the call path is manually disposing clients.

For TaskCanceledException: check the default timeout against the downstream SLA. Check whether Polly's ShouldHandle configuration covers timeouts. Check whether background services are using the host's stopping token directly.

For ObjectDisposedException: search the call path for using blocks around DI-resolved services. Check whether response content is being read before the response is disposed.

Production Readiness Checklist

Running through the following before deploying any code that uses HttpClient significantly reduces the chance of encountering these errors in production:

- All HttpClient instances are registered via IHttpClientFactory or as typed clients, with no direct instantiation
- No code path calls Dispose() on a client or service resolved from the DI container
- Response content is read and materialised before the HttpResponseMessage goes out of scope
- Explicit timeouts are configured per-client, not left at the 100-second default
- Polly retry pipelines explicitly handle TaskCanceledException wrapping a TimeoutException
- Background services use linked CancellationTokenSource per HTTP operation, not the raw stopping token
- Socket metrics are included in observability dashboards (System.Net.Http counters)
- PooledConnectionLifetime is configured when not using IHttpClientFactory (static client scenarios only)

Frequently Asked Questions

Why does HttpClient throw TaskCanceledException instead of TimeoutException when it times out?

This is a known quirk in HttpClient's design: internally, the timeout is implemented using a CancellationTokenSource that fires when the deadline expires. When that token cancels the request, HttpClient throws TaskCanceledException because that is what cancellation produces. Microsoft did not change this to throw TimeoutException for backward compatibility reasons. From .NET 5 onwards, you can distinguish the two by inspecting exception.InnerException — a TimeoutException inner exception confirms the HttpClient timeout fired rather than a caller-provided token.

Is it safe to use a static HttpClient to avoid socket exhaustion?

A static HttpClient avoids socket exhaustion from over-disposal, but it introduces a different problem: DNS staleness. Unless PooledConnectionLifetime is configured on its handler, the static instance keeps reusing its pooled connections and never re-resolves DNS for them. For services behind load balancers or in Kubernetes, where DNS-based routing is common, this causes the client to route to stale IP addresses after a deployment or failover. IHttpClientFactory with its default two-minute HandlerLifetime solves both problems: sockets are reused efficiently and DNS is refreshed regularly.

Can I still get socket exhaustion after switching to IHttpClientFactory?

Yes, if some code paths still create and dispose their own HttpClient instances alongside the factory. Disposing a client obtained from the factory does not by itself cause exhaustion, because the factory owns the handler lifetime, but it is unnecessary and makes that instance unusable. Use dotnet-counters to monitor System.Net.Http counters, and use ss or netstat to look for a growing TIME_WAIT socket count, to confirm whether exhaustion is still occurring after the migration.

What is the right timeout value for HttpClient?

There is no universal answer, but a few principles help. The timeout should reflect the SLA of the downstream service: if the dependency is expected to respond within 2 seconds under normal conditions, a 5-second timeout is a reasonable ceiling. The 100-second default is almost never appropriate for synchronous API calls. For background jobs or batch processing, a longer timeout may be warranted, but it should still be intentional rather than defaulted. Configure timeouts per named or typed client so each downstream dependency has a timeout matching its own characteristics.

Why does ObjectDisposedException sometimes only appear in production and not locally?

Load and concurrency expose race conditions that sequential local testing misses. The scope mismatch variant of ObjectDisposedException — where a scoped service is injected into an HttpMessageHandler — typically manifests only when multiple requests arrive concurrently and the handler's scope is recycled while another request is still using it. Production load triggers this; a local single-threaded test run does not. Load testing with tools like k6 or bombardier before deployment surfaces this class of issue in a controlled environment.

Does AddStandardResilienceHandler cover all three of these error types?

AddStandardResilienceHandler in the Microsoft.Extensions.Http.Resilience NuGet package (built on Polly) handles timeout-driven failures and transient HttpRequestException variants out of the box. It does not prevent ObjectDisposedException caused by incorrect disposal patterns; those are code correctness issues that resilience policies cannot compensate for. Socket exhaustion is likewise addressed by configuring IHttpClientFactory correctly, at the infrastructure level, rather than by retries.

How do I tell which of these errors is causing a 503 in production?

Structured logging is the most direct path. Ensure your global exception handler or IExceptionHandler implementation logs the full exception chain including inner exceptions, not just the top-level exception type. A 503 triggered by SocketException: Too many open files buried inside HttpRequestException inside TaskCanceledException is invisible if you only log the outermost exception message. Use Serilog's destructuring (@exception) or OpenTelemetry's exception recording to capture the full chain as structured data.
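If the logging setup cannot be changed right away, a small helper along these lines can flatten the chain into a single loggable string. ExceptionChain and Describe are hypothetical names; this is a sketch, not a replacement for structured exception capture.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper: renders an exception and all of its inner exceptions.
public static class ExceptionChain
{
    public static string Describe(Exception ex)
    {
        var parts = new List<string>();

        // Walk from the outermost exception down to the root cause.
        for (Exception? current = ex; current is not null; current = current.InnerException)
        {
            parts.Add($"{current.GetType().Name}: {current.Message}");
        }

        return string.Join(" -> ", parts);
    }
}
```

Logging the result of Describe as its own property alongside the exception makes the innermost type searchable even when the sink truncates stack traces.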